The research on re-ranking that influenced Retrieval-Augmented Generation (RAG) started with PhD student Rodrigo Nogueira's goal of building an AI researcher. He realized that before an AI could reason, it first needed a scalable way to navigate vast document sets and retrieve the relevant information from them.

Related Insights

Frontier labs like OpenAI are now focused on building autonomous AI agents capable of conducting research and running experiments. This "auto researcher" is seen as the "final boss battle" to accelerate AI development itself.

The vast majority of enterprise information has long been trapped in formats like PDFs and other documents, leaving it largely unusable. AI techniques such as RAG and automated structure extraction are unlocking this data for the first time, making it queryable and enabling new large-scale analysis.

Google is moving beyond AI as a mere analysis tool. The concept of an 'AI co-scientist' envisions AI as an active partner that helps sift through information, generate novel hypotheses, and outline ways to test them. This reframes the human-AI collaboration to fundamentally accelerate the scientific method itself.

According to IBM's AI Platform VP, Retrieval-Augmented Generation (RAG) was the killer app for enterprises in the first year after ChatGPT's release. RAG allows companies to connect LLMs to their proprietary structured and unstructured data, unlocking immense value from existing knowledge bases and proving to be the most powerful initial methodology.

Standard Retrieval-Augmented Generation (RAG) systems often fail because they treat complex documents as pure text, missing crucial context within charts, tables, and layouts. The solution is to use vision language models for embedding and re-ranking, making visual and structural elements directly retrievable and improving accuracy.

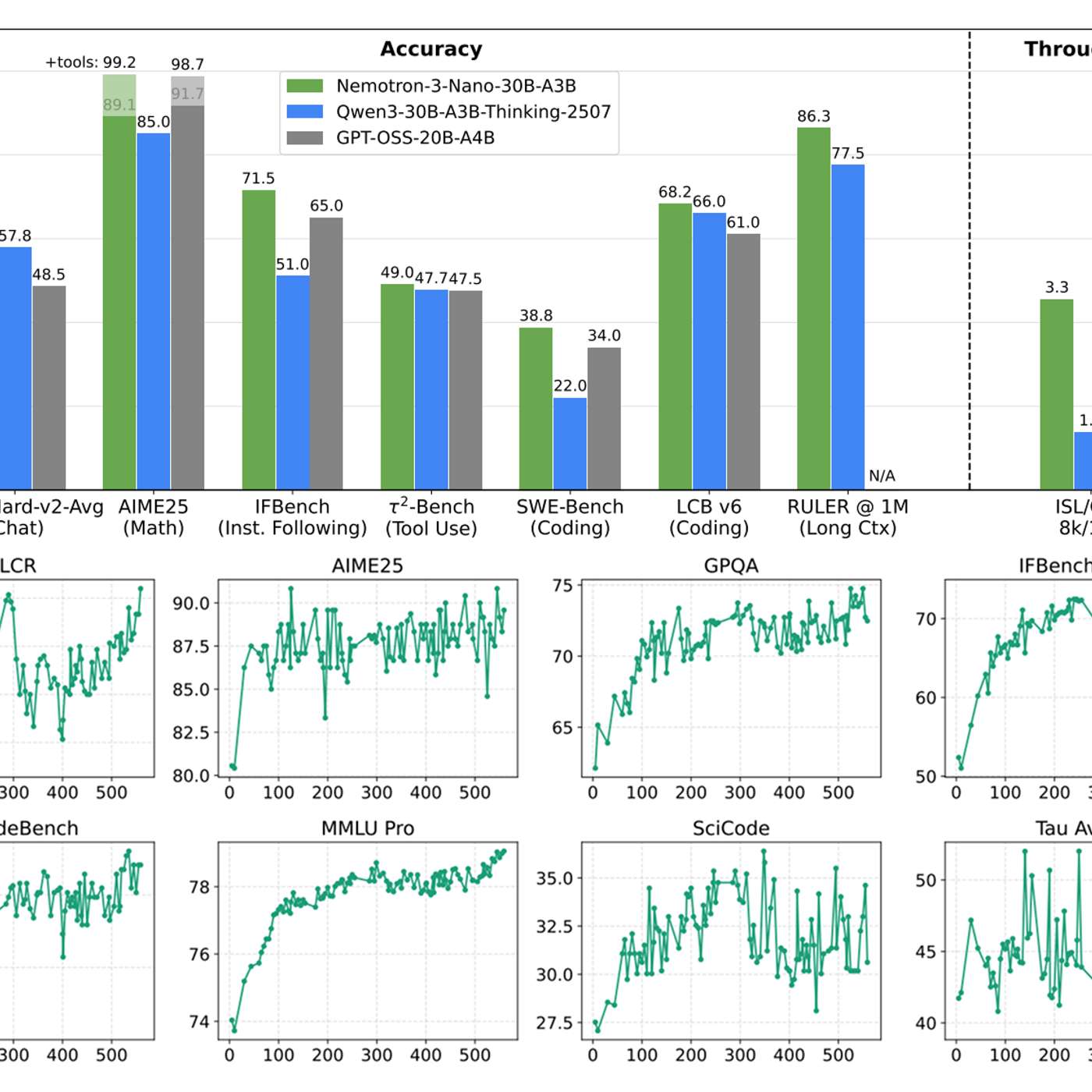

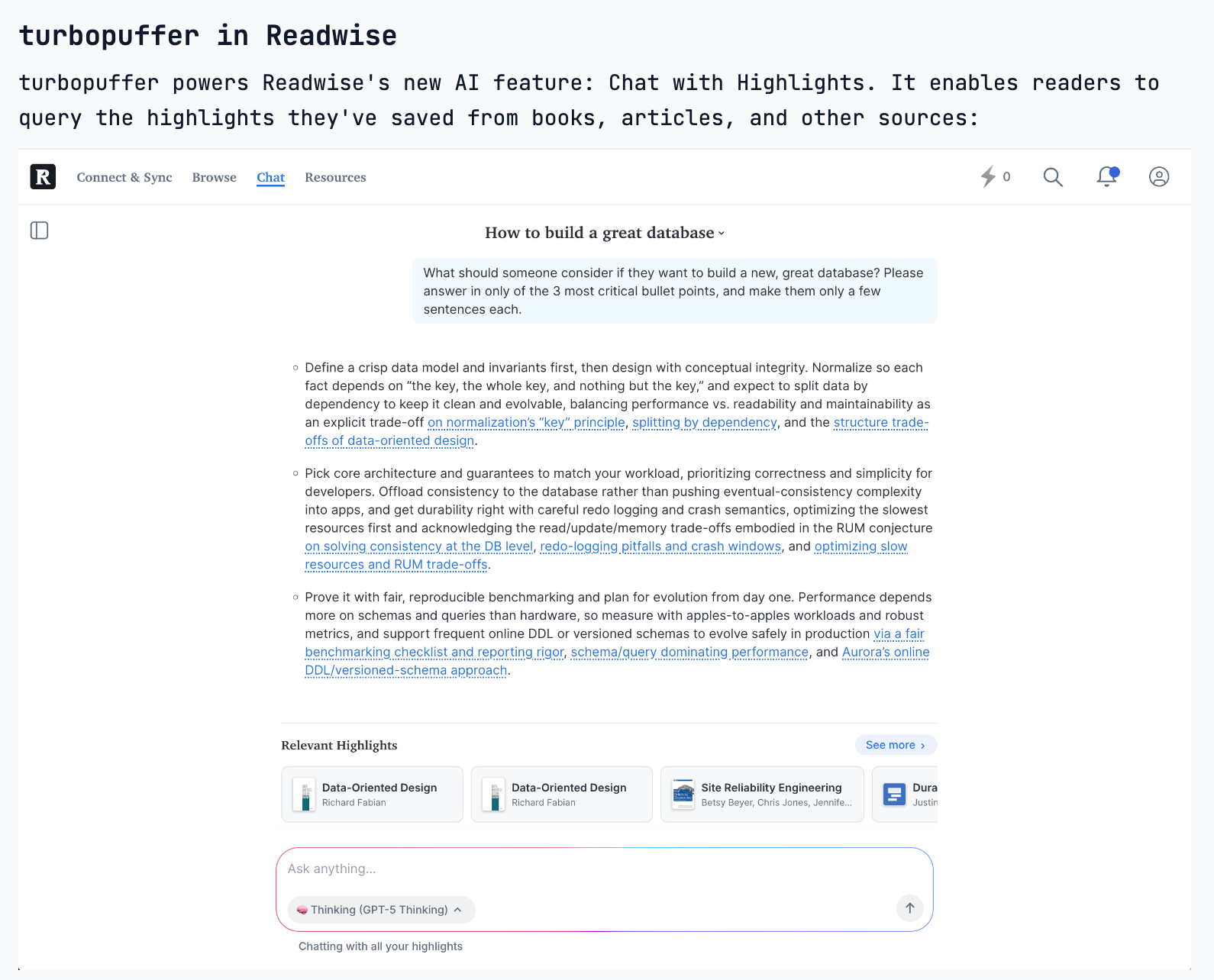

Retrieval-Augmented Generation (RAG) uses vector search to find documents relevant to a user's query. The retrieved text is then supplied to a Large Language Model (LLM) as factual context, so that its response is grounded in the provided data, which significantly reduces the risk of "hallucinations."
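
A minimal sketch of that retrieve-then-generate flow, under loud assumptions: the bag-of-words `embed` function is a toy stand-in for a real embedding model, `DOCS` is made-up sample data, and the final prompt would be sent to an LLM of your choice (no model is called here).

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Toy embedding (assumption): lowercase bag-of-words term counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]

def build_prompt(query: str, context: list[str]) -> str:
    """Place the retrieved text in the prompt so the model answers from it."""
    joined = "\n".join(f"- {c}" for c in context)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{joined}\n\nQuestion: {query}"
    )

# Hypothetical sample documents, for illustration only.
DOCS = [
    "The refund window is 30 days from delivery.",
    "Support is available on weekdays from 9 to 5.",
    "Shipping takes 3 to 5 business days.",
]

query = "How long is the refund window?"
prompt = build_prompt(query, retrieve(query, DOCS, k=1))
```

In a real system the similarity search would typically run inside a vector database over dense embeddings; the structure of the flow stays the same.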

Teams often agonize over which vector database to use for their Retrieval-Augmented Generation (RAG) system. However, the most significant performance gains come from superior data preparation, such as optimizing chunking strategies, adding contextual metadata, and rewriting documents into a Q&A format.
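
Two of the data-preparation steps mentioned above, overlapping chunks and contextual metadata, can be sketched as follows. The function names, the word-based chunk size, and the record fields are illustrative assumptions, not a prescribed schema.

```python
def chunk_words(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into word windows of `size`, sharing `overlap` words between neighbors."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks

def prepare(doc_id: str, title: str, text: str,
            size: int = 50, overlap: int = 10) -> list[dict]:
    """Attach metadata to each chunk and prefix the title as context."""
    return [
        {
            "doc_id": doc_id,
            "title": title,
            "chunk_index": i,
            # Prefixing the title gives an isolated chunk some of its lost context.
            "text": f"{title}: {chunk}",
        }
        for i, chunk in enumerate(chunk_words(text, size, overlap))
    ]

sample = " ".join(f"w{i}" for i in range(12))
records = prepare("doc-1", "Guide", sample, size=5, overlap=2)
```

Chunk size and overlap are tuning knobs; the right values depend on the documents and the embedding model, so treat the defaults here as placeholders.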

Answer Engine Optimization (AEO) is not about getting into an LLM's training data, which is slow and difficult. Instead, it focuses on Retrieval-Augmented Generation (RAG), the process in which the LLM performs a live search for current information. This makes AEO a real-time, controllable marketing channel.

Classic RAG involves a single data retrieval step. Its evolution, "agentic retrieval," allows an AI to perform a series of conditional fetches from different sources (APIs, databases). This enables the handling of complex queries where each step informs the next, mimicking a research process.

The nature of Retrieval-Augmented Generation (RAG) is evolving. Instead of a single search to populate an initial context window, AI agents are now performing numerous concurrent queries in a single turn. This allows them to explore diverse information paths simultaneously, driving new database requirements.
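
A rough sketch of that fan-out pattern using `asyncio.gather` from the Python standard library. The `search` coroutine, its simulated latency, and `CORPUS` are assumptions standing in for a real database client.

```python
import asyncio

# Hypothetical corpus, for illustration only.
CORPUS = [
    "Vector indexes trade recall for speed.",
    "Write-heavy workloads stress index rebuilds.",
    "Caching reduces repeated query cost.",
]

async def search(query: str) -> list[str]:
    """Simulated search call: substring match after a short artificial delay."""
    await asyncio.sleep(0.01)  # stands in for network and database latency
    return [doc for doc in CORPUS if query.lower() in doc.lower()]

async def fan_out(queries: list[str]) -> list[str]:
    """Run all queries concurrently, then merge the hits without duplicates."""
    results = await asyncio.gather(*(search(q) for q in queries))
    merged: list[str] = []
    seen: set[str] = set()
    for hits in results:  # gather preserves the order of the input queries
        for hit in hits:
            if hit not in seen:
                seen.add(hit)
                merged.append(hit)
    return merged

def run(queries: list[str]) -> list[str]:
    return asyncio.run(fan_out(queries))

merged_hits = run(["vector", "index"])
```

Because the queries overlap in time, total latency is close to that of the slowest single query rather than the sum of all of them, which is why this access pattern puts pressure on the database's concurrency handling.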