Mistral-Medium-3.5 lets users adjust its "reasoning effort" on each request. The same model weights can either return quick responses to simple queries or perform extended computation for complex agentic tasks, so callers can trade latency against solution quality request by request.
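A minimal sketch of how a caller might pick an effort level per request. The heuristic, the `Task` fields, the `reasoning_effort` payload key, and the model ID string are all hypothetical illustrations, not Mistral's documented API. Check the provider's reference for the actual parameter name and accepted values.

```python
from dataclasses import dataclass

# Hypothetical effort levels; the real API may accept different values.
EFFORT_LEVELS = ("low", "medium", "high")


@dataclass
class Task:
    prompt: str
    needs_tools: bool = False  # agentic work that calls tools
    expected_steps: int = 1    # rough number of agent steps


def choose_reasoning_effort(task: Task) -> str:
    """Map a task to an effort level using a simple, hypothetical heuristic."""
    if task.needs_tools or task.expected_steps > 3:
        return "high"    # long agentic work: allow extended computation
    if len(task.prompt.split()) > 200:
        return "medium"  # long prompts: moderate reasoning
    return "low"         # short, simple queries: answer quickly


def build_request(model: str, task: Task) -> dict:
    """Build a chat payload; 'reasoning_effort' is an assumed field name."""
    effort = choose_reasoning_effort(task)
    assert effort in EFFORT_LEVELS
    return {
        "model": model,
        "messages": [{"role": "user", "content": task.prompt}],
        "reasoning_effort": effort,
    }


# Same weights (same model ID), different effort per request.
MODEL_ID = "mistral-medium-3.5"  # placeholder ID, not a verified API name
quick = build_request(MODEL_ID, Task("What is the capital of France?"))
agentic = build_request(MODEL_ID, Task("Fix the failing tests", needs_tools=True, expected_steps=8))
print(quick["reasoning_effort"], agentic["reasoning_effort"])  # low high
```

Keeping the effort decision in one function makes it easy to replace the heuristic with a learned classifier later without touching request construction.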

Related Insights

Artificial Analysis found that a model given just a few core tools (context management, web search, code execution) performed better on complex tasks than the agentic systems built into major web chatbots. This suggests that a lean, focused toolset can be more effective.

The Qwen 3.6 model was fine-tuned using "chain of thought distillation" data from the more powerful Claude Opus. This technique allows smaller models to learn and replicate the structured problem-solving capabilities of larger systems, making advanced AI reasoning more accessible.

Classifying a model as "reasoning" based on a chain-of-thought step is no longer useful. With massive differences in token efficiency, a so-called "reasoning" model can be faster and cheaper than a "non-reasoning" one for a given task. The focus is shifting to a continuous spectrum of capability versus overall cost.
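The cost argument is simple arithmetic: price per token times tokens used. In this sketch, all prices, token counts, and the throughput figure are hypothetical, chosen only to show how a "reasoning" model that answers in fewer total tokens can beat a verbose "non-reasoning" model on both cost and latency.

```python
from dataclasses import dataclass


@dataclass
class RunProfile:
    name: str
    input_tokens: int
    output_tokens: int       # includes any reasoning tokens
    usd_per_m_input: float   # price per million input tokens
    usd_per_m_output: float  # price per million output tokens


def cost_usd(p: RunProfile) -> float:
    """Dollar cost of one request at per-million-token prices."""
    return (p.input_tokens * p.usd_per_m_input + p.output_tokens * p.usd_per_m_output) / 1_000_000


def latency_s(p: RunProfile, tokens_per_second: float) -> float:
    """Generation time, assuming output throughput dominates latency."""
    return p.output_tokens / tokens_per_second


# Hypothetical numbers: a concise "reasoning" model vs. a verbose "non-reasoning" one.
reasoning = RunProfile("reasoning", 1_000, 2_000, 2.0, 6.0)
verbose = RunProfile("non-reasoning", 1_000, 6_000, 1.0, 3.0)

for p in (reasoning, verbose):
    print(p.name, round(cost_usd(p), 4), latency_s(p, 100.0))
# reasoning 0.014 20.0
# non-reasoning 0.019 60.0
```

Despite charging twice the per-token price, the reasoning profile wins because it emits a third as many output tokens.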

Benchmarking reasoning models revealed no clear correlation between reasoning level and an LLM's performance. Even when more reasoning yields a slight accuracy gain (1-2%), it often comes at a significant cost increase, making it an inefficient trade-off.
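One way to quantify "inefficient trade-off" is the marginal cost per percentage point of accuracy. The benchmark figures below are hypothetical, not results from the source; they only illustrate the calculation.

```python
def cost_per_correct(total_cost: float, n_tasks: int, accuracy: float) -> float:
    """Dollars spent per correctly solved task (accuracy as a fraction, 0..1)."""
    return total_cost / (n_tasks * accuracy)


def marginal_cost_per_point(cost_lo: float, acc_lo_pct: float,
                            cost_hi: float, acc_hi_pct: float) -> float:
    """Extra dollars paid per percentage point of accuracy gained."""
    gain = acc_hi_pct - acc_lo_pct
    if gain <= 0:
        return float("inf")  # paying more for no gain
    return (cost_hi - cost_lo) / gain


# Hypothetical benchmark run over 1,000 tasks at two reasoning settings.
low = {"cost": 10.0, "acc_pct": 80.0}
high = {"cost": 25.0, "acc_pct": 81.5}  # +1.5 points for 2.5x the cost

print(cost_per_correct(low["cost"], 1_000, low["acc_pct"] / 100))
print(cost_per_correct(high["cost"], 1_000, high["acc_pct"] / 100))
print(marginal_cost_per_point(low["cost"], low["acc_pct"], high["cost"], high["acc_pct"]))  # 10.0
```

Whether $10 per point is worth paying depends on the application; the point is to make the trade-off explicit rather than assume more reasoning is always better.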

The traditional `temperature` lever for controlling model creativity has been superseded in modern reasoning models, where temperature is often fixed. The new critical parameter is the "thinking budget": the number of reasoning tokens a model can use before responding. A larger budget allows more internal review and can produce higher-quality outputs.
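A toy model of a thinking budget: reasoning proceeds token by token until either the reasoning finishes or the budget is exhausted, at which point the model must answer with what it has. Approximating tokens as whitespace-separated words is a simplifying assumption; real models count tokenizer tokens, and `think_with_budget` is a hypothetical helper, not any provider's API.

```python
from typing import Iterable, List, Tuple


def think_with_budget(reasoning_steps: Iterable[str], budget_tokens: int) -> Tuple[List[str], bool]:
    """Consume reasoning tokens until the thinking budget runs out.

    Tokens are approximated as whitespace-separated words. Returns the
    tokens used and whether reasoning was cut off by the budget.
    """
    used: List[str] = []
    for step in reasoning_steps:
        for token in step.split():
            if len(used) >= budget_tokens:
                return used, True  # budget exhausted: must answer now
            used.append(token)
    return used, False  # reasoning finished within budget


steps = ["restate the problem", "try a first approach", "check the result", "revise and verify"]
for budget in (5, 50):
    used, cut_off = think_with_budget(steps, budget)
    print(budget, len(used), cut_off)
# 5 5 True
# 50 13 False
```

With the small budget, the review steps ("check the result", "revise and verify") never run, which is the intuition behind larger budgets allowing more internal review.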

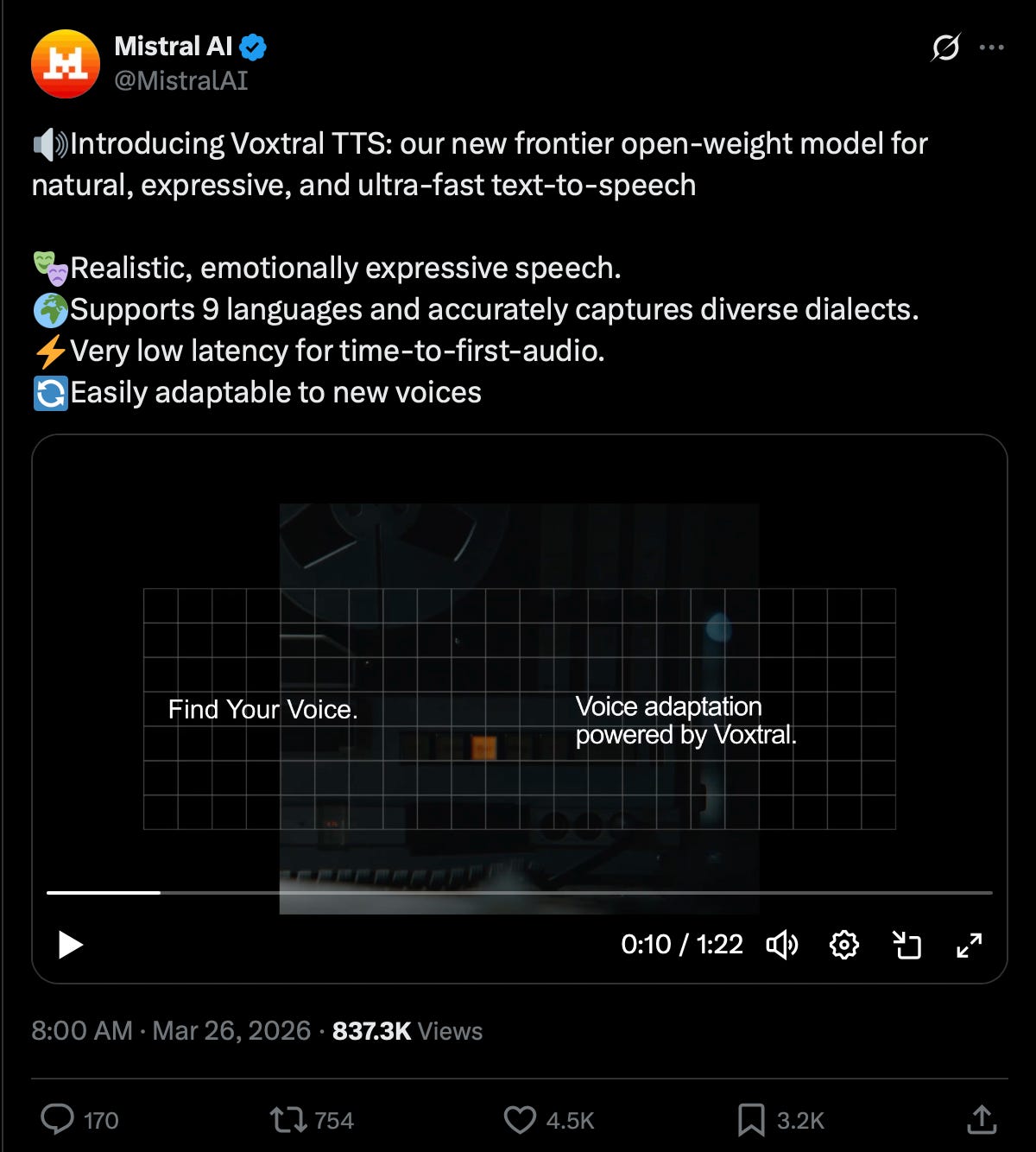

Instead of a single "omni-model," Mistral offers both large, general-purpose models and smaller, highly optimized models for specific tasks like transcription. This allows customers to choose a cost-effective solution for dedicated use cases without paying for unneeded capabilities.

The binary distinction between "reasoning" and "non-reasoning" models is becoming obsolete. The more critical metric is now "token efficiency"—a model's ability to use more tokens only when a task's difficulty requires it. This dynamic token usage is a key differentiator for cost and performance.

Unlike approaches that split capabilities across separate specialized models, or sparse Mixture-of-Experts (MoE) designs that activate only a subset of expert subnetworks for each token, Mistral-Medium-3.5 employs a dense, "merged" architecture. This single 128B-parameter model consolidates diverse capabilities in one set of weights, simplifying deployment and keeping behavior consistent across task types without switching models.
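The dense-versus-sparse distinction can be made concrete with parameter arithmetic. This sketch uses the common approximation of about 2 FLOPs per active parameter per token for a forward pass (ignoring attention over the context). Only the 128B total comes from the text; the MoE configuration is a hypothetical comparison point, not a description of any Mistral model, and experts are aggregated across layers for simplicity.

```python
def dense_active_params(total: int) -> int:
    """In a dense model, every parameter is used for every token."""
    return total


def moe_active_params(shared: int, n_experts: int, expert_size: int, top_k: int) -> int:
    """In a sparse MoE, each token uses the shared weights plus its top_k routed experts."""
    if not 1 <= top_k <= n_experts:
        raise ValueError("top_k must be between 1 and n_experts")
    return shared + top_k * expert_size


def forward_flops_per_token(active: int) -> int:
    """Approximation: ~2 FLOPs (one multiply, one add) per active parameter."""
    return 2 * active


B = 1_000_000_000
dense = dense_active_params(128 * B)
# Hypothetical MoE with the same 128B total: 16B shared + 16 experts x 7B, top-2 routing.
moe_total = 16 * B + 16 * 7 * B
moe = moe_active_params(16 * B, 16, 7 * B, 2)

print(moe_total // B, dense // B, moe // B)  # 128 128 30
print(forward_flops_per_token(dense) // B, forward_flops_per_token(moe) // B)  # 256 60
```

At equal total size, the dense model spends more compute per token; in exchange, a single model path serves every request with no routing between experts.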

A single AI agent can run multiple "sub-bots" for different tasks. To optimize performance and cost, assign a different underlying model to each: a powerful model like Claude Opus for complex tasks and a cheaper model like Claude Sonnet for routine functions.
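A minimal sketch of per-sub-bot model assignment with a cost comparison. The sub-bot names, the "opus-class"/"sonnet-class" labels, and the prices are hypothetical; real model IDs and pricing come from the provider.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class ModelTier:
    name: str                # placeholder label, not an API model ID
    usd_per_m_tokens: float  # hypothetical blended price per million tokens


OPUS = ModelTier("opus-class", 30.0)
SONNET = ModelTier("sonnet-class", 6.0)

# Hypothetical sub-bots: complex work goes to the stronger model.
SUB_BOT_MODELS = {
    "planner": OPUS,
    "code_reviewer": OPUS,
    "summarizer": SONNET,
    "formatter": SONNET,
}


def model_for(sub_bot: str) -> ModelTier:
    """Unknown sub-bots default to the cheaper model for routine work."""
    return SUB_BOT_MODELS.get(sub_bot, SONNET)


def workload_cost(tokens_by_bot: Dict[str, int], override: Optional[ModelTier] = None) -> float:
    """Total cost in dollars; `override` forces one model for every sub-bot."""
    total = 0.0
    for bot, tokens in tokens_by_bot.items():
        tier = override or model_for(bot)
        total += tier.usd_per_m_tokens * tokens / 1_000_000
    return total


usage = {"planner": 200_000, "code_reviewer": 100_000, "summarizer": 500_000, "formatter": 200_000}
print(round(workload_cost(usage), 2))                 # 13.2 (mixed assignment)
print(round(workload_cost(usage, override=OPUS), 2))  # 30.0 (everything on the strong model)
```

Defaulting unknown sub-bots to the cheaper tier is a design choice; a stricter setup might raise an error instead so every sub-bot gets an explicit assignment.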

Microsoft's Copilot platform doesn't rely on a single foundation model. It automatically routes user tasks to different models based on what works best for the job—using OpenAI for interactive chat but switching to Claude for long-running, tool-using background tasks.