Get your free personalized podcast brief

We scan new podcasts and send you the top 5 insights daily.

Quilter's RL agent gets fast feedback using a three-tiered reward system. It starts with cheap geometric rules, moves to faster quasi-static physics approximations, and only finally uses expensive full-wave simulations. This provides rapid, conservative feedback essential for efficient training.

Related Insights

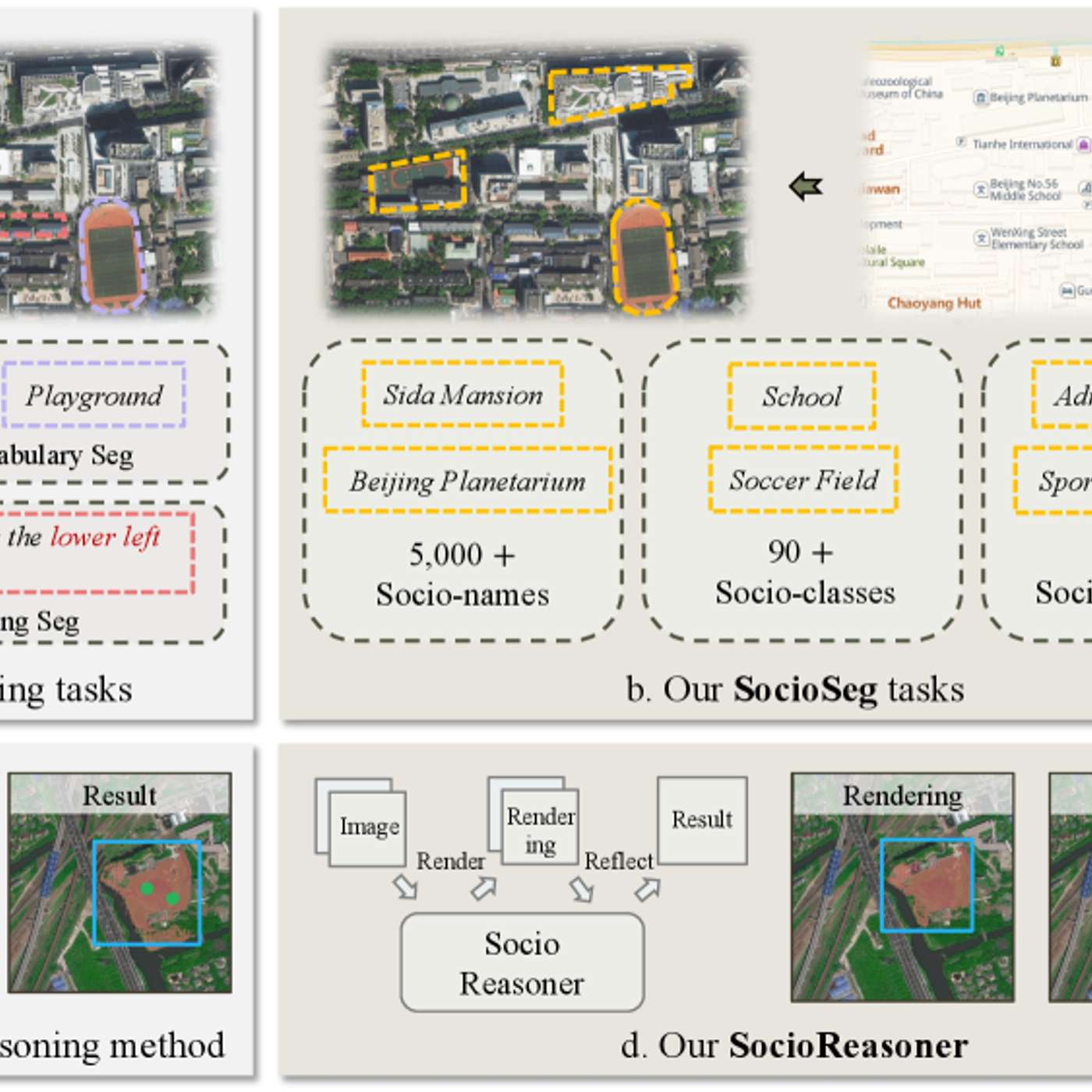

The AI system is fine-tuned using reinforcement learning (RL) instead of standard backpropagation. This allows it to learn from a simple reward signal (correct segmentation), cleverly bypassing the problem that key parts of its process are not mathematically differentiable.

AI labs like Anthropic find that mid-tier models can be trained with reinforcement learning to outperform their largest, most expensive models in just a few months, accelerating the pace of capability improvements.

Quilter avoids the intractability of training an RL agent on every minute detail of circuit board design. Instead, they structure the environment to present the agent with key, high-level decisions (e.g., "go clockwise or counter-clockwise"), drastically reducing the search space and making learning feasible.

Beyond supervised fine-tuning (SFT) and human feedback (RLHF), reinforcement learning (RL) in simulated environments is the next evolution. These "playgrounds" teach models to handle messy, multi-step, real-world tasks where current models often fail catastrophically.

Reinforcement Learning with Human Feedback (RLHF) is a popular term, but it's just one method. The core concept is reinforcing desired model behavior using various signals. These can include AI feedback (RLAIF), where another AI judges the output, or verifiable rewards, like checking if a model's answer to a math problem is correct.

The choice between simulation and real-world data depends on a task's core difficulty. For locomotion, complex reactive behavior is harder to capture than simple ground physics, favoring simulation. For manipulation, complex object physics are harder to simulate than simple grasping behaviors, favoring real-world data.

Rather than just replacing physics-based models, AI can be used to select the *correct* physics model. Heather Kulik's team uses the quantum wave function itself as an input to a neural network to predict which quantum mechanical approximation will be most accurate for a specific material, a complex task that defies simple heuristics.

Instead of relying on digital proxies like code graders, Periodic Labs uses real-world lab experiments as the ultimate reward function. Nature itself becomes the reinforcement learning environment, ensuring the AI is optimized against physical reality, not flawed simulations.

OpenPipe's 'Ruler' library leverages a key insight: GRPO only needs relative rankings, not absolute scores. By having an LLM judge stack-rank a group of agent runs, one can generate effective rewards. This approach works phenomenally well, even with weaker judge models, effectively solving the reward assignment problem.

Creating realistic training environments isn't blocked by technical complexity—you can simulate anything a computer can run. The real bottleneck is the financial and computational cost of the simulator. The key skill is strategically mocking parts of the system to make training economically viable.