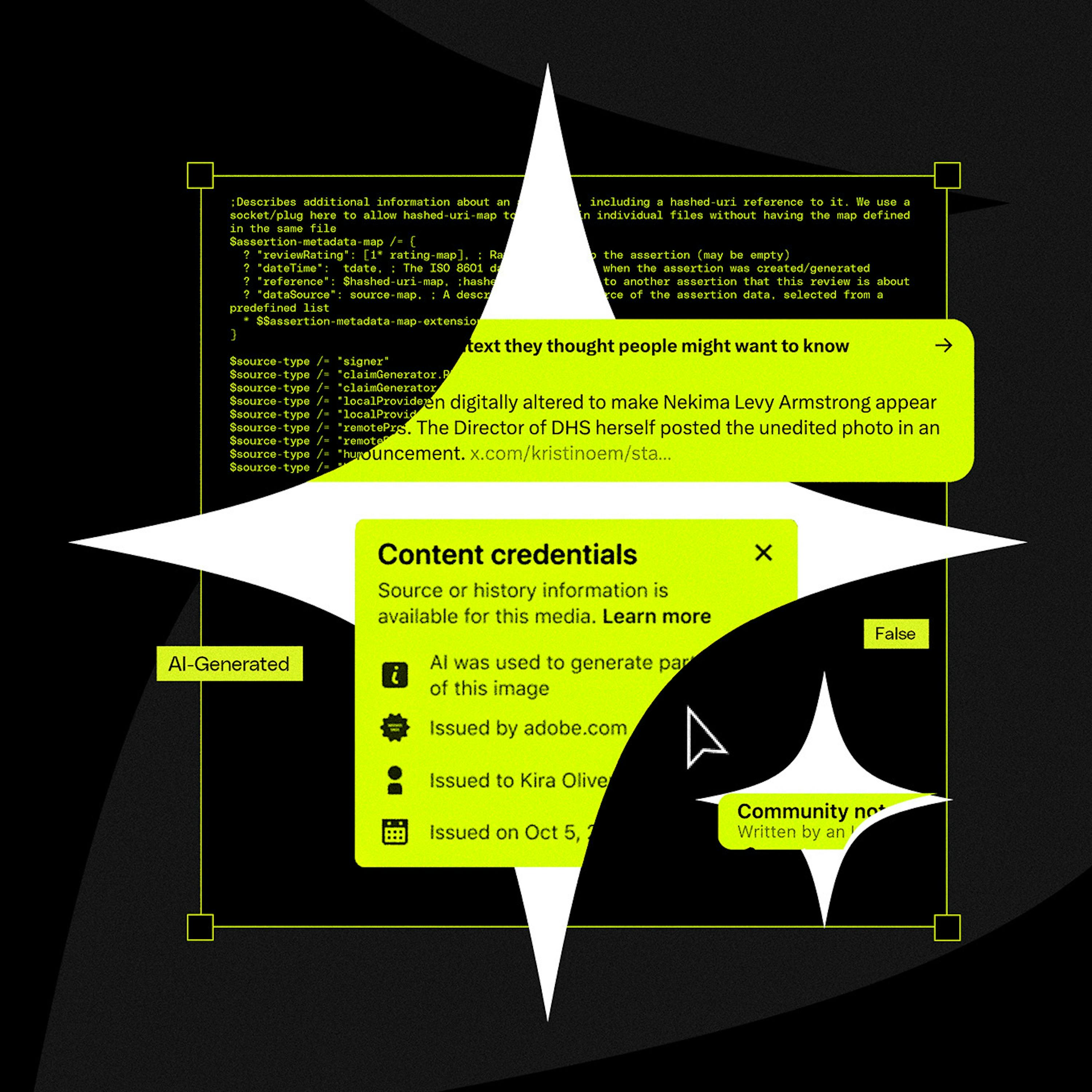

The developer avoided building a resource-intensive AI image classifier after discovering that major AI tools embed provenance data using open standards such as C2PA and XMP. A simple metadata parser was sufficient, which eliminated the need for a complex ML pipeline and delivered a zero-cost solution.
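Here is a minimal sketch of such a parser, assuming JPEG input. C2PA manifests are carried in APP11 segments as JUMBF boxes labeled `c2pa`, and XMP packets sit in APP1 segments that begin with the Adobe XMP namespace. The function names are hypothetical, and the byte-substring test for the JUMBF label is a heuristic rather than a full JUMBF parse.

```python
import struct

# APP1 payloads holding XMP start with this namespace identifier.
XMP_HEADER = b"http://ns.adobe.com/xap/1.0/\x00"


def iter_jpeg_segments(data: bytes):
    """Yield (marker, payload) for each header segment before the image data."""
    if data[:2] != b"\xff\xd8":  # SOI marker
        raise ValueError("not a JPEG file")
    pos = 2
    while pos + 4 <= len(data) and data[pos] == 0xFF:
        marker = data[pos + 1]
        if marker in (0xD9, 0xDA):  # EOI or start of scan: no more metadata
            break
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])  # includes the 2 length bytes
        yield marker, data[pos + 4:pos + 2 + length]
        pos += 2 + length


def find_provenance(data: bytes) -> dict:
    """Report which provenance formats appear, without verifying any of them."""
    found = {"c2pa": False, "xmp": False}
    for marker, payload in iter_jpeg_segments(data):
        # APP11 (0xEB) carries JUMBF boxes; a C2PA manifest store is labeled "c2pa".
        if marker == 0xEB and b"jumb" in payload and b"c2pa" in payload:
            found["c2pa"] = True
        elif marker == 0xE1 and payload.startswith(XMP_HEADER):
            found["xmp"] = True
    return found
```

The sketch inspects only the segments before the start of scan, which is where both kinds of metadata normally live in a JPEG.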

Related Insights

Faced with rising costs from proprietary labs, sophisticated enterprise clients are building internal evaluation and routing systems. These systems let them send less complex tasks to cheaper open-source models, optimizing for both cost and performance.
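The following sketch shows one way such a router could work, under stated assumptions. Each candidate model carries a per-category score from an internal eval suite, and a request goes to the cheapest model that clears a quality bar. The model names, prices, scores, and the fallback rule are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Model:
    name: str
    cost_per_1k_tokens: float  # hypothetical price
    eval_scores: Dict[str, float] = field(default_factory=dict)  # category -> internal eval score (0..1)


def route(category: str, models: List[Model], min_score: float) -> Model:
    """Pick the cheapest model whose internal eval score for `category` clears the bar."""
    def score(m: Model) -> float:
        return m.eval_scores.get(category, 0.0)

    eligible = [m for m in models if score(m) >= min_score]
    if not eligible:
        # Nothing clears the bar: fall back to the best-scoring model.
        return max(models, key=score)
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)


# Illustrative table; names and numbers are made up.
MODELS = [
    Model("open-small", 0.10, {"extraction": 0.92, "reasoning": 0.55}),
    Model("proprietary-large", 3.00, {"extraction": 0.95, "reasoning": 0.90}),
]

print(route("extraction", MODELS, 0.90).name)  # open-small
print(route("reasoning", MODELS, 0.85).name)   # proprietary-large
```

Routing on measured eval scores, rather than on a fixed model per product, lets the cheap model absorb every category where it is demonstrably good enough.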

AI models are commoditized, but the ecosystem of tools, services, and compliance standards around them is increasingly complex. One example: nine Azure services were needed to reach only 39% NIST compliance. Companies that offer a consolidated, simplified path to value will hold a significant competitive advantage.

Politician Alex Boris argues that expecting humans to spot increasingly sophisticated deepfakes is a losing battle. In his view, the real solution is a universal metadata standard (such as C2PA) that cryptographically proves whether content is real or AI-generated. Unverified content would then be inherently suspect, much like an insecure HTTP website today.

The official C2PA library offered full cryptographic verification of an AI image's origin. For a simple transparency badge, however, checking whether a metadata field existed was sufficient. This avoided shipping a 1.5 MB library and doing processing that the product's specific use case did not need.
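The presence check might look like the sketch below. It assumes the badge keys off either an IPTC `DigitalSourceType` value in the embedded XMP (the IPTC vocabulary defines `trainedAlgorithmicMedia` and `compositeWithTrainedAlgorithmicMedia` for AI-generated content) or a C2PA JUMBF label anywhere in the file. The function name and badge strings are hypothetical. Nothing here verifies a signature, so a forged or copied field would still earn a badge. That is the trade-off accepted for skipping the full library.

```python
from typing import Optional

# IPTC DigitalSourceType URIs that mark media as AI-generated.
AI_SOURCE_TYPES = (
    b"http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia",
    b"http://cv.iptc.org/newscodes/digitalsourcetype/compositeWithTrainedAlgorithmicMedia",
)


def transparency_badge(data: bytes) -> Optional[str]:
    """Choose a badge from the mere presence of metadata; no cryptographic check."""
    if any(uri in data for uri in AI_SOURCE_TYPES):
        return "AI-generated"
    if b"jumb" in data and b"c2pa" in data:
        # A C2PA manifest is present but unverified, and it may not say "AI".
        return "Content Credentials present"
    return None  # no badge
```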

Instead of detecting AI fakes, a new approach focuses on proving authenticity at the source. Organizations like C2PA work with hardware makers to embed cryptographic signatures into photos and videos, creating a verifiable chain of "content provenance" that proves an asset was captured by a real device.
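The sketch below illustrates a verifiable provenance chain using only the standard library. Each record hashes the content and links to the previous record's signature. It swaps in HMAC with a shared key as a stand-in for the asymmetric, certificate-backed signatures that C2PA actually uses. With HMAC the verifier must hold the same secret, which a real device-signing scheme avoids. The key, record fields, and function names are hypothetical.

```python
import hashlib
import hmac
import json
from typing import Dict, List, Optional

# Hypothetical device secret. Real C2PA signs with a private key tied to an
# X.509 certificate; HMAC is a symmetric stand-in to keep this stdlib-only.
DEVICE_KEY = b"hypothetical-device-secret"


def _sign(record: Dict) -> str:
    payload = json.dumps(record, sort_keys=True).encode()
    return hmac.new(DEVICE_KEY, payload, hashlib.sha256).hexdigest()


def _entry(action: str, content: bytes, prev_sig: Optional[str]) -> Dict:
    record = {"action": action, "content_hash": hashlib.sha256(content).hexdigest(), "prev": prev_sig}
    return dict(record, sig=_sign(record))


def capture(pixels: bytes) -> List[Dict]:
    """Start a provenance chain at the moment of capture."""
    return [_entry("captured", pixels, None)]


def append_edit(chain: List[Dict], new_pixels: bytes, action: str) -> List[Dict]:
    """Record an edit, linking it to the previous entry's signature."""
    return chain + [_entry(action, new_pixels, chain[-1]["sig"])]


def verify(chain: List[Dict], pixels: bytes) -> bool:
    """Check that the chain starts at capture, every signature and link holds, and the file matches."""
    if not chain or chain[0].get("action") != "captured":
        return False
    prev = None
    for entry in chain:
        record = {k: v for k, v in entry.items() if k != "sig"}
        if record["prev"] != prev or not hmac.compare_digest(entry["sig"], _sign(record)):
            return False
        prev = entry["sig"]
    return chain[-1]["content_hash"] == hashlib.sha256(pixels).hexdigest()
```

Changing any recorded action, reordering entries, or altering the final file breaks verification.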

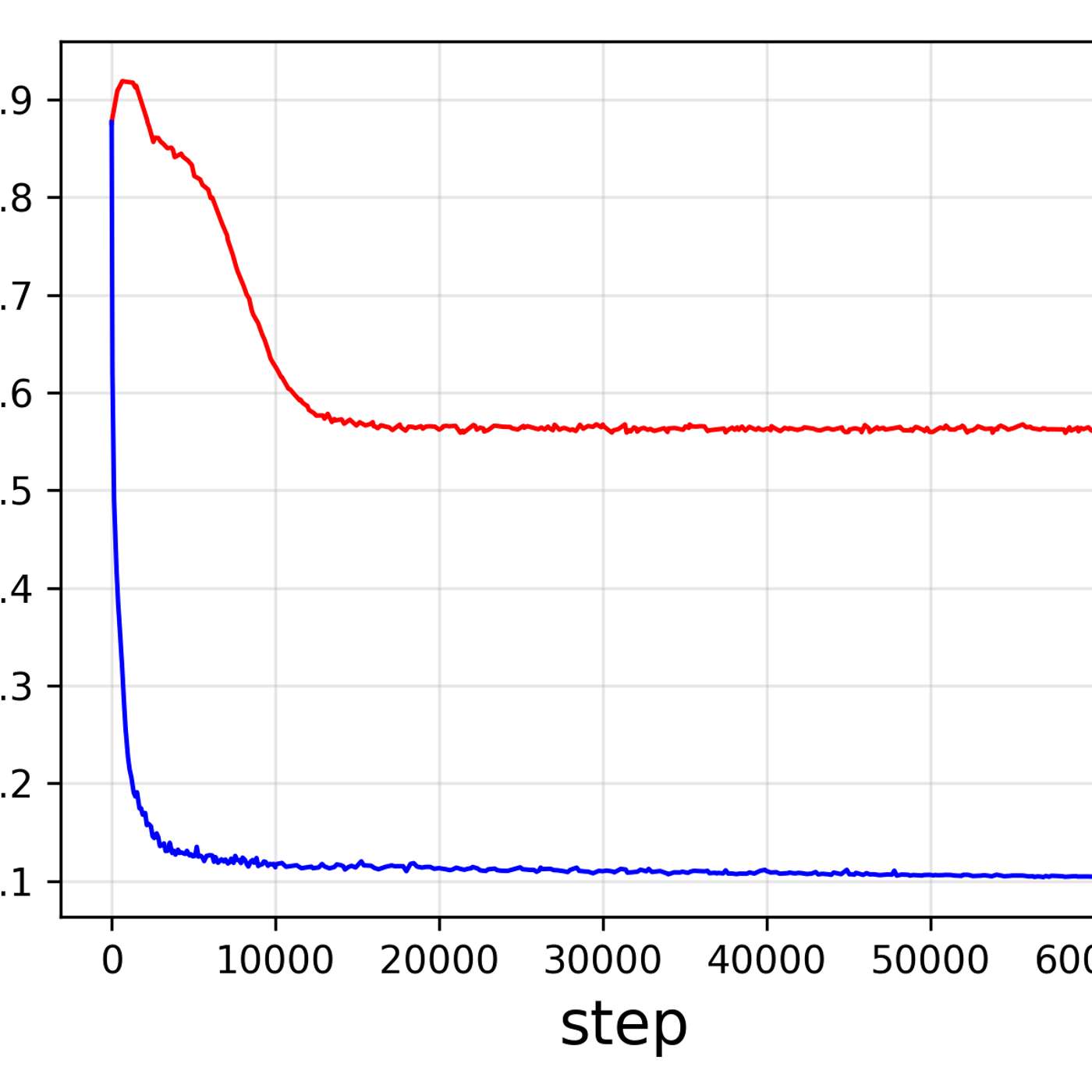

Resist the urge to apply LLMs to every problem. A better approach is a "first principles" decision tree: evaluate whether the task can be solved more simply with data visualization or traditional machine learning before defaulting to a complex, probabilistic, and often overkill GenAI solution.
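The decision tree can be written down as a short function. The three questions and their order are one reading of the advice above. The parameter names and the final rules-based fallback are assumptions added for illustration.

```python
def choose_approach(needs_only_aggregation: bool, has_labeled_data: bool, output_kind: str) -> str:
    """Walk from the simplest tool to the most complex, stopping at the first fit."""
    # 1. If a chart, pivot, or group-by answers the question, stop there.
    if needs_only_aggregation:
        return "data visualization"
    # 2. A label or number predicted from labeled examples: classic ML is cheaper and easier to evaluate.
    if has_labeled_data and output_kind in ("label", "number"):
        return "traditional ML"
    # 3. Only open-ended generated text justifies a probabilistic GenAI model.
    if output_kind == "text":
        return "GenAI"
    # 4. Hypothetical fallback: hand-written rules or a manual process.
    return "rules or manual process"


print(choose_approach(True, False, "text"))   # data visualization
print(choose_approach(False, True, "label"))  # traditional ML
print(choose_approach(False, False, "text"))  # GenAI
```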

Instead of storing AI-generated descriptions in a separate database, Tim McLear's "Flip Flop" app embeds metadata directly into each image file's EXIF data. This makes each file a self-contained record where rich context travels with the image, accessible by any system or person, regardless of access to the original database.
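The sketch below shows the embedding step using only the standard library. It assumes a JPEG file and assumes the description goes into the standard EXIF `ImageDescription` tag (0x010E). The source does not say which field Flip Flop actually writes. The helper names are hypothetical. The sketch inserts a fresh big-endian EXIF segment right after the JPEG start marker rather than merging with any EXIF block already present.

```python
import struct
from typing import Optional

IMAGE_DESCRIPTION = 0x010E  # standard EXIF/TIFF tag
ASCII = 2                   # TIFF field type for NUL-terminated ASCII


def build_exif_segment(text: str) -> bytes:
    """Build a minimal big-endian EXIF APP1 segment holding one ImageDescription tag."""
    value = text.encode("ascii", errors="replace") + b"\x00"
    if len(value) <= 4:
        # Values of 4 bytes or fewer are stored inline in the entry's offset field.
        entry = struct.pack(">HHI", IMAGE_DESCRIPTION, ASCII, len(value)) + value.ljust(4, b"\x00")
        extra = b""
    else:
        # Otherwise the value follows the IFD: 8 (header) + 2 (count) + 12 (entry) + 4 (next IFD) = 26.
        entry = struct.pack(">HHII", IMAGE_DESCRIPTION, ASCII, len(value), 26)
        extra = value
    tiff = b"MM\x00\x2a" + struct.pack(">IH", 8, 1) + entry + struct.pack(">I", 0) + extra
    payload = b"Exif\x00\x00" + tiff
    if len(payload) + 2 > 0xFFFF:
        raise ValueError("description too long for one APP1 segment")
    return b"\xff\xe1" + struct.pack(">H", len(payload) + 2) + payload


def embed_description(jpeg: bytes, text: str) -> bytes:
    """Insert the EXIF segment right after the SOI marker (existing EXIF is left untouched)."""
    if jpeg[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG file")
    return jpeg[:2] + build_exif_segment(text) + jpeg[2:]


def read_description(jpeg: bytes) -> Optional[str]:
    """Return ImageDescription from the first big-endian EXIF segment, if any."""
    pos = 2
    while pos + 4 <= len(jpeg) and jpeg[pos] == 0xFF:
        marker = jpeg[pos + 1]
        if marker in (0xD9, 0xDA):  # end of image / start of scan
            break
        (length,) = struct.unpack(">H", jpeg[pos + 2:pos + 4])
        payload = jpeg[pos + 4:pos + 2 + length]
        tiff = payload[6:]
        if marker == 0xE1 and payload[:6] == b"Exif\x00\x00" and tiff[:4] == b"MM\x00\x2a":
            (ifd,) = struct.unpack(">I", tiff[4:8])
            (count,) = struct.unpack(">H", tiff[ifd:ifd + 2])
            for i in range(count):
                e = ifd + 2 + 12 * i
                tag, _, n = struct.unpack(">HHI", tiff[e:e + 8])
                if tag == IMAGE_DESCRIPTION:
                    start = e + 8 if n <= 4 else struct.unpack(">I", tiff[e + 8:e + 12])[0]
                    return tiff[start:start + n].rstrip(b"\x00").decode("ascii")
        pos += 2 + length
    return None


# Tiny stand-in JPEG (SOI + EOI only), just to show the round trip.
photo = embed_description(b"\xff\xd8\xff\xd9", "Archival photo of a 1960s newsroom")
print(read_description(photo))  # Archival photo of a 1960s newsroom
```

The reader only understands the big-endian layout this writer produces. A production tool would use a full EXIF library and preserve any existing tags.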

C2PA was designed to track a file's provenance (creation, edits), not specifically to detect AI. This fundamental mismatch in purpose is why it's an ineffective solution for the current deepfake crisis, as it wasn't built to be a simple binary validator of reality.
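This sketch shows why provenance data yields a history rather than a yes/no answer. The manifest shape here is a simplified, hypothetical dictionary loosely modeled on C2PA's actions assertion (`c2pa.created`, `c2pa.cropped`, and an IPTC `digitalSourceType` URI). The function name and output strings are illustrative. A missing manifest maps to "unknown", not "fake".

```python
from typing import Dict, List, Optional

TRAINED_ALGORITHMIC = "http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"


def summarize_provenance(manifest: Optional[Dict]) -> str:
    """Summarize a simplified provenance record as a history, not a real/fake verdict."""
    if manifest is None:
        # Absence proves nothing: metadata may have been stripped or never written.
        return "no provenance: unknown"
    actions: List[Dict] = manifest.get("actions", [])
    created = next((a for a in actions if a.get("action") == "c2pa.created"), None)
    if created is None:
        return "creation not recorded: unknown origin"
    source = created.get("digitalSourceType", "unspecified").rsplit("/", 1)[-1]
    later = [a.get("action", "?") for a in actions if a is not created]
    return f"created ({source}); later actions: {', '.join(later) or 'none'}"


print(summarize_provenance(None))  # no provenance: unknown
print(summarize_provenance({"actions": [
    {"action": "c2pa.created", "digitalSourceType": TRAINED_ALGORITHMIC},
    {"action": "c2pa.cropped"},
]}))  # created (trainedAlgorithmicMedia); later actions: c2pa.cropped
```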

Instead of waiting for formal bodies, Google DeepMind is developing and open-sourcing its own technical standards for AI agents. This strategy aims to solve immediate interoperability problems and establish a market-wide de facto standard through rapid, widespread adoption, bypassing slower, formal channels.

Adopting a single, unified architecture for both vision and generation tasks simplifies the engineering lifecycle. This approach reduces the cost and complexity of maintaining, updating, and deploying multiple specialized models, accelerating development.