Get your free personalized podcast brief

We scan new podcasts and send you the top 5 insights daily.

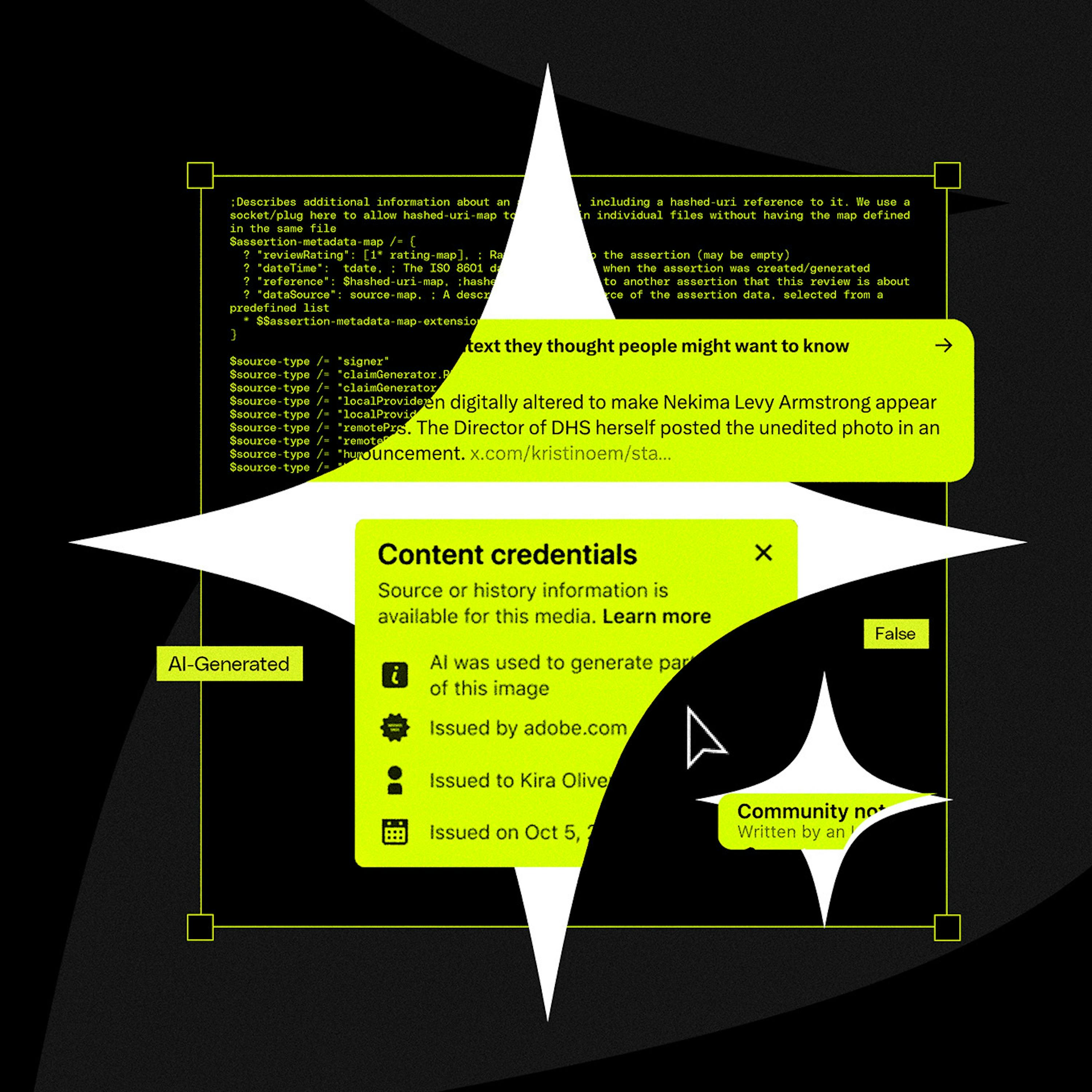

Instead of detecting AI fakes, a new approach focuses on proving authenticity at the source. Organizations like C2PA work with hardware makers to embed cryptographic signatures into photos and videos, creating a verifiable chain of "content provenance" that proves an asset was captured by a real device.
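To make the idea of signing at the source concrete, here is a minimal sketch of a device binding a hash of a capture to its identity and a verifier checking that binding later. It is an illustration under stated assumptions, not the C2PA format: the names `DEVICE_KEY`, `sign_capture`, and `verify_capture` are hypothetical, and an HMAC with a shared secret stands in for the certificate-backed asymmetric signature a real provenance system would use, so the example runs with the standard library alone.

```python
import hashlib
import hmac
import json

# Hypothetical per-device secret. Real provenance systems use an asymmetric
# key pair backed by a certificate; HMAC is a stdlib-only stand-in here.
DEVICE_KEY = b"demo-device-key"


def sign_capture(image_bytes: bytes, device_id: str, key: bytes = DEVICE_KEY) -> dict:
    """Build a claim binding the image hash to a device, then sign the claim."""
    claim = {
        "device_id": device_id,
        "sha256": hashlib.sha256(image_bytes).hexdigest(),
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_capture(image_bytes: bytes, manifest: dict, key: bytes = DEVICE_KEY) -> bool:
    """Check the signature over the claim, then check the image still matches it."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # claim was altered or signed with a different key
    return manifest["claim"]["sha256"] == hashlib.sha256(image_bytes).hexdigest()


photo = b"raw sensor bytes"
manifest = sign_capture(photo, "camera-001")
print(verify_capture(photo, manifest))               # True
print(verify_capture(photo + b"edited", manifest))   # False
```

The second check fails because any change to the bytes changes the hash, so the signed claim no longer describes the file being inspected.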

Related Insights

As AI makes it easy to fake video and audio, blockchain's immutable and decentralized ledger offers a solution. Creators can 'mint' their original content, creating a verifiable record of authenticity that no one, including governments and corporations, can alter.

Beyond data privacy, a key ethical responsibility for marketers using AI is ensuring content integrity. This means using platforms that provide a verifiable trail for every asset, check for originality, and offer AI-assisted verification of factual accuracy. Doing so protects the brand and builds customer trust.

AI is extremely effective at cheaply producing outputs that are difficult to verify, creating an information crisis. Blockchain technology serves as a complementary solution: a globally recognized, unchangeable 'golden record' can provide the verification layer needed to prove authenticity in a world of AI-generated content.

A critical failure point for C2PA is that social media platforms themselves can inadvertently strip the crucial metadata during their standard image and video processing pipelines. This technical flaw breaks the chain of provenance before the content is even displayed to users.
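The failure mode described here can be sketched with a toy model. Everything in this example is hypothetical: `make_asset`, `platform_reencode`, and `provenance_status` are illustrative names, the asset is a plain dictionary rather than a real image file, and the "provenance" is only a hash stored in metadata, not an actual C2PA manifest.

```python
import hashlib


def make_asset(pixels: bytes) -> dict:
    """Toy asset: pixel data plus metadata carrying a provenance hash."""
    return {
        "pixels": pixels,
        "metadata": {"provenance_sha256": hashlib.sha256(pixels).hexdigest()},
    }


def platform_reencode(asset: dict) -> dict:
    """Hypothetical upload pipeline that keeps the pixels but drops all metadata."""
    return {"pixels": asset["pixels"], "metadata": {}}


def provenance_status(asset: dict) -> str:
    """Report whether provenance is verified, mismatched, or simply absent."""
    recorded = asset["metadata"].get("provenance_sha256")
    if recorded is None:
        return "missing"
    actual = hashlib.sha256(asset["pixels"]).hexdigest()
    return "verified" if recorded == actual else "mismatch"


original = make_asset(b"raw sensor bytes")
uploaded = platform_reencode(original)
print(provenance_status(original))  # verified
print(provenance_status(uploaded))  # missing
```

The uploaded copy is not flagged as tampered; it just carries no evidence either way, which leaves a viewer unable to tell an authentic photo from an unverified one. A real pipeline would typically also recompress the pixels, which this sketch leaves unchanged for simplicity.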

The rise of AI, which can generate endless fake content, creates a powerful demand for crypto's core function: providing verifiable truth. Crypto wallets, digital signatures, and proof-of-human systems become critical infrastructure to prove authenticity in an AI-saturated world. AI effectively subsidizes the need for crypto.

Politician Alex Boris argues that expecting humans to spot increasingly sophisticated deepfakes is a losing battle. The real solution is a universal metadata standard (like C2PA) that cryptographically proves whether content is real or AI-generated, making unverified content inherently suspect, much like an insecure HTTP website today.

While camera brands like Sony and Nikon support C2PA on new models, the standard's adoption is crippled by the inability to update firmware on millions of existing professional cameras. This means the vast majority of photos taken will lack provenance data for years, undermining the entire system.

The rise of convincing AI-generated deepfakes will soon make video and audio evidence unreliable. The solution will be the blockchain, a decentralized, unalterable ledger. Content will be "minted" on-chain to provide a verifiable, timestamped record of authenticity that no single entity can control or manipulate.

Amidst the rise of AI-generated fakes, proving video authenticity is becoming critical. By building closed systems that can maintain a 'digital fingerprint' and chain of custody for video, companies like Ring are positioned to become indispensable arbiters of truth for the legal system, not just camera providers.

C2PA was designed to track a file's provenance (creation, edits), not specifically to detect AI. This fundamental mismatch in purpose is why it's an ineffective solution for the current deepfake crisis, as it wasn't built to be a simple binary validator of reality.