The official C2PA library offers full cryptographic verification of an image's origin. For a simple transparency badge, however, checking that a provenance metadata field exists was sufficient. This avoided shipping a large 1.5MB library and running processing that the product use case did not need.
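As a rough illustration of a presence check of this kind, the sketch below scans raw image bytes for marker strings instead of verifying any signature. The marker list and the function name `has_provenance_field` are assumptions made for this example, not the developer's actual code.

```python
# Hypothetical marker list: byte strings whose presence suggests that
# provenance metadata was embedded. Which markers a real product checks
# is an assumption here.
PROVENANCE_MARKERS = (
    b"c2pa",        # label associated with C2PA manifest data
    b"<x:xmpmeta",  # opening element of an XMP packet
)


def has_provenance_field(data: bytes) -> bool:
    """Return True if any marker appears in the raw bytes.

    This only detects presence. It does not parse the manifest or
    validate any cryptographic signature.
    """
    return any(marker in data for marker in PROVENANCE_MARKERS)
```

A check like this can be fooled by metadata that was forged or copied, which is the trade-off accepted in exchange for skipping full verification.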

Related Insights

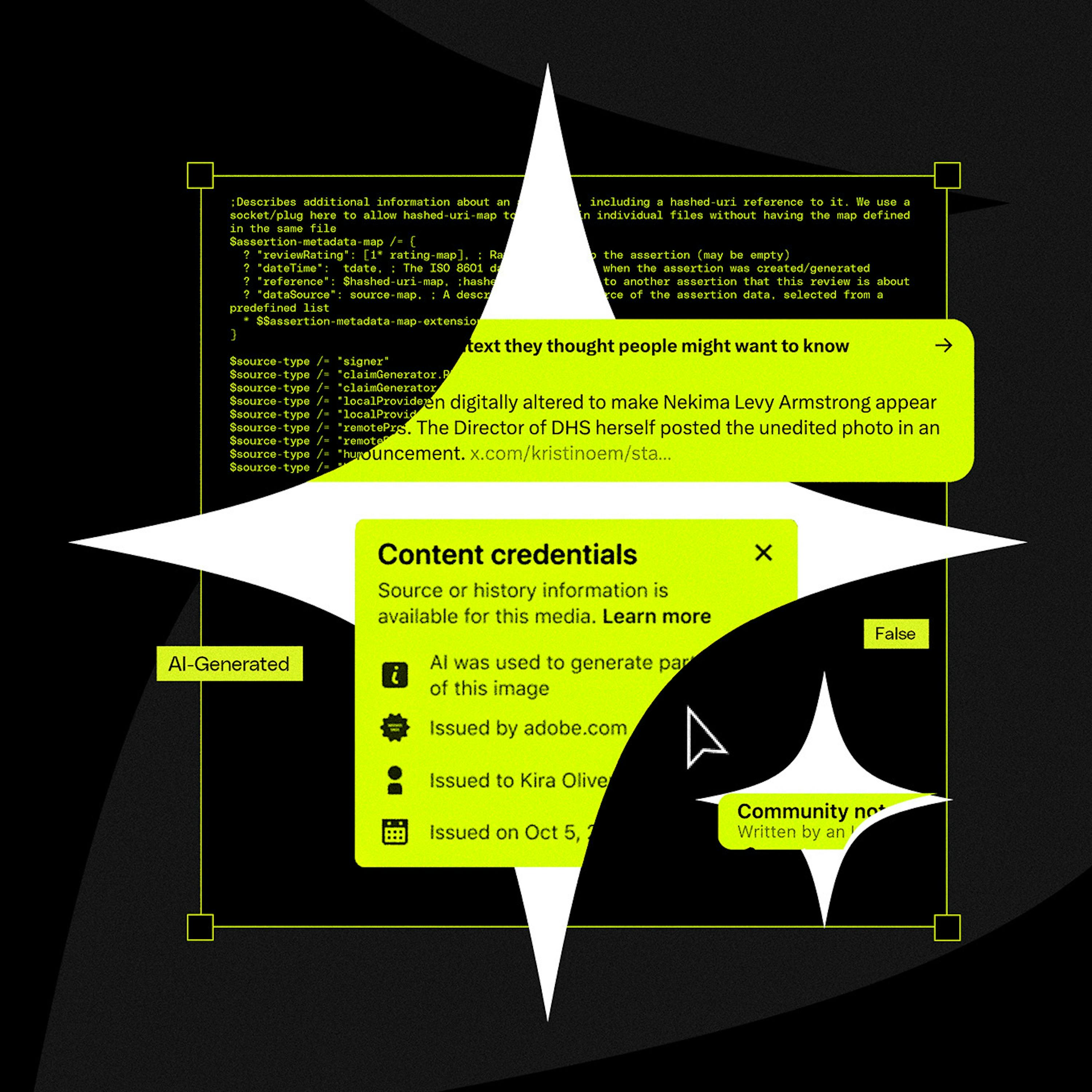

Major tech companies publicly champion their support for the C2PA standard to appear proactive about the deepfake problem. However, this support is often superficial, serving as a "meritless badge" or PR move while they avoid the hard work of robust implementation and ecosystem-wide collaboration.

The developer did not need to build a resource-intensive AI image classifier, because major AI tools already embed provenance data using open standards like C2PA and XMP. A simple metadata parser was sufficient, eliminating the need for a complex ML pipeline and delivering a zero-cost solution.
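A minimal sketch of what such a metadata parser might look like follows. It assumes the generating tool writes an XMP packet containing the IPTC digital source type value `trainedAlgorithmicMedia`; whether a given tool does so is an assumption, and the names `extract_xmp` and `describe_provenance` are hypothetical.

```python
import re
from typing import Dict, Optional

# An XMP packet is XML wrapped in an <x:xmpmeta> element.
XMP_PACKET = re.compile(rb"<x:xmpmeta.*?</x:xmpmeta>", re.DOTALL)

# IPTC digital source type term for media created by a trained model.
# Assumption: the generating tool records this value in its XMP.
AI_SOURCE_TYPE = b"trainedAlgorithmicMedia"


def extract_xmp(data: bytes) -> Optional[bytes]:
    """Return the first XMP packet found in the bytes, or None."""
    match = XMP_PACKET.search(data)
    return match.group(0) if match else None


def describe_provenance(data: bytes) -> Dict[str, bool]:
    """Summarize which provenance hints are present (no verification)."""
    xmp = extract_xmp(data)
    return {
        "has_c2pa_label": b"c2pa" in data,
        "has_xmp": xmp is not None,
        "xmp_marks_ai": xmp is not None and AI_SOURCE_TYPE in xmp,
    }
```

No model inference is involved: the result depends only on bytes already present in the file.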

Beyond data privacy, a key ethical responsibility for marketers using AI is ensuring content integrity. This means using platforms that provide a verifiable trail for every asset, check for originality, and offer AI-assisted verification for factual accuracy. This protects the brand, ensures content is original, and builds customer trust.

A critical failure point for C2PA is that social media platforms themselves can inadvertently strip the crucial metadata during their standard image and video processing pipelines. This technical flaw breaks the chain of provenance before the content is even displayed to users.

Politician Alex Boris argues that expecting humans to spot increasingly sophisticated deepfakes is a losing battle. The real solution is a universal metadata standard (like C2PA) that cryptographically proves whether content is real or AI-generated, making unverified content inherently suspect, much like an insecure HTTP website today.

Instead of detecting AI fakes, a new approach focuses on proving authenticity at the source. Organizations like C2PA work with hardware makers to embed cryptographic signatures into photos and videos, creating a verifiable chain of "content provenance" that proves an asset was captured by a real device.

Because XMP metadata typically sits near the beginning of an image file, the solution reads only the first 64KB. This avoids processing the entire multi-megabyte file, making the check nearly instantaneous with minimal resource usage, even for very large images.
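The partial read can be sketched as follows. The 64KB limit comes from the text above; the function names and the `<x:xmpmeta` marker check are assumptions for illustration.

```python
from typing import BinaryIO

HEAD_BYTES = 64 * 1024  # only the first 64KB is ever read


def read_head(stream: BinaryIO, limit: int = HEAD_BYTES) -> bytes:
    """Read at most `limit` bytes from the start of a binary stream."""
    return stream.read(limit)


def xmp_in_head(stream: BinaryIO) -> bool:
    """Check for an XMP packet marker within the first 64KB only."""
    return b"<x:xmpmeta" in read_head(stream)


def xmp_in_file_head(path: str) -> bool:
    """Open a file and run the same bounded check on it."""
    with open(path, "rb") as f:
        return xmp_in_head(f)
```

The cost of the check is bounded by the 64KB limit rather than by the file size. The trade-off is that metadata placed later in the file goes undetected.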

The AI detection logic is only loaded when a user interacts with the image uploader, keeping the initial app bundle small and fast. Furthermore, if the detection process fails, it does so silently without impacting the user experience, which is a robust pattern for non-essential enhancements.

The goal for trustworthy AI isn't simply open-source code, but verifiability. This means having mathematical proof, like attestations from secure enclaves, that the code running on a server exactly matches the public, auditable code, ensuring no hidden manipulation.

C2PA was designed to track a file's provenance (creation, edits), not specifically to detect AI. This fundamental mismatch in purpose is why it's an ineffective solution for the current deepfake crisis, as it wasn't built to be a simple binary validator of reality.