
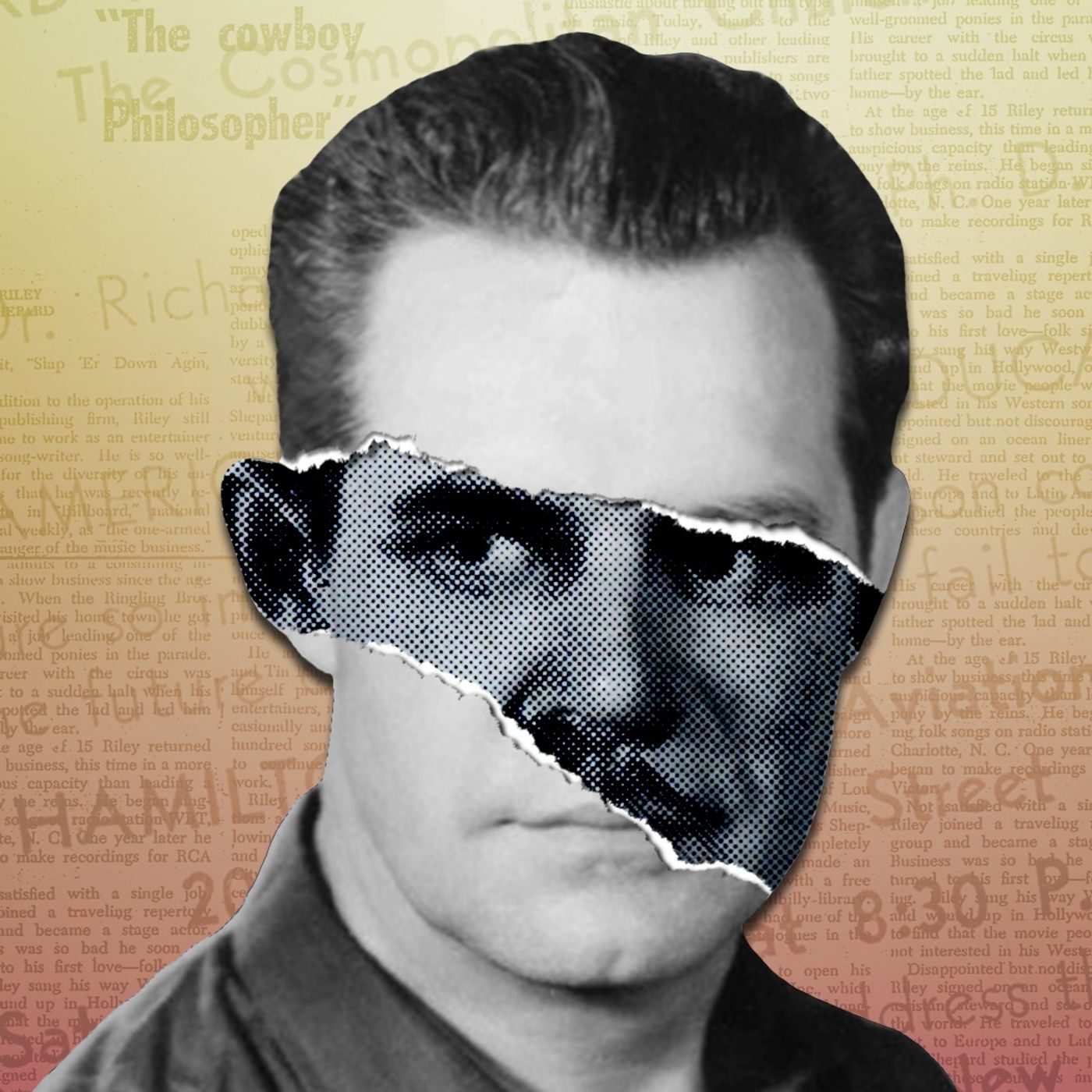

Riley Shepherd's folk music encyclopedia, created without computers, required him to manually cross-reference 43,000 songs. A folklorist noted the entire complex system lived "largely inside his own head," a feat of mental organization that highlights the cognitive demands of ambitious analog-era scholarship.

Related Insights

The "generative" label on AI is misleading. Its true power for daily knowledge work lies not in creating artifacts, but in its superhuman ability to read, comprehend, and synthesize vast amounts of information—a far more frequent and fundamental task than writing.

Knowledge workers such as authors can spend up to 50% of their time on tedious "chores" like organizing sources or creating timelines. AI automates this drudgery, freeing up mental bandwidth for higher-value creative tasks like narrative construction and prose.

Engaging with AI is a high-intensity mental workout, shifting the nature of work to 'cognitive synthesis.' Users, or 'neural athletes,' must constantly adjudicate between what the model says, what they know, and organizational needs, creating a new and profound cognitive strain.

A human child learns a language from five years of input, while an LLM requires the equivalent of 5,000 years. Professor Griffiths quantifies this gap as 4,995 years' worth of information, which represents the "priors" or inductive biases (innate structures and assumptions) that give humans a massive head start in learning.

Reading is not an innate human ability. The process of learning to read physically rewires the brain, forging new connections between regions not originally designed to work together. This reconfigured brain becomes capable of generating and comprehending far more sophisticated ideas than one shaped only by oral culture.

Using AI to generate instant research reports bypasses the deep learning that occurs during the slow, manual process of discovery. This 'learning atrophy' poses a significant risk for developing genuine expertise, as the struggle itself is a critical part of comprehension.

Technology doesn't change the brain's fundamental mechanism for memory. Instead, it acts as an external tool that allows us to strategically choose what to remember, freeing up limited attentional resources. We've simply offloaded rote memorization (like phone numbers) to focus our mental bandwidth elsewhere.

Riley Shepherd's encyclopedia was seen as a con by his daughter, who experienced the financial fallout, but as genius by a folklorist. This shows that a creator's internal motivation is often detached from a project's external perception and success, which are judged by its collateral impact.

Former OpenAI researcher Andrej Karpathy suggests using LLMs not just for chat, but to actively build and maintain personal knowledge wikis. Fed raw documents, an LLM can compile them into a structured, interlinked knowledge base, effectively acting as a 'programmer' for your information.
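To make the "compile raw documents into an interlinked wiki" idea concrete, here is a minimal sketch under loudly stated assumptions. It is not Karpathy's actual workflow. The raw documents are assumed to arrive as a dict keyed by page title. `summarize` is a hypothetical placeholder for the LLM call that simply keeps the first sentence. Pages are linked whenever one page's text mentions another page's title. `WikiPage`, `compile_wiki`, and `render` are illustrative names, not an existing tool's API.

```python
import re
from dataclasses import dataclass, field


@dataclass
class WikiPage:
    title: str
    summary: str
    links: set[str] = field(default_factory=set)      # pages this page mentions
    backlinks: set[str] = field(default_factory=set)  # pages that mention this one


def summarize(text: str) -> str:
    """Hypothetical stand-in for an LLM call: keep only the first sentence."""
    match = re.match(r"(.+?[.!?])(\s|$)", text.strip())
    return match.group(1) if match else text.strip()


def compile_wiki(raw_docs: dict[str, str]) -> dict[str, WikiPage]:
    """Turn {title: raw text} into summarized pages with cross-links (assumed linking rule)."""
    pages = {title: WikiPage(title, summarize(text)) for title, text in raw_docs.items()}
    for title, text in raw_docs.items():
        for other in raw_docs:
            # Link when another page's title appears as a whole word in this text.
            if other != title and re.search(rf"\b{re.escape(other)}\b", text, re.IGNORECASE):
                pages[title].links.add(other)
                pages[other].backlinks.add(title)
    return pages


def render(page: WikiPage) -> str:
    """Render one page as Markdown with [[wiki-style]] links."""
    lines = [f"# {page.title}", "", page.summary, ""]
    if page.links:
        lines.append("See also: " + ", ".join(f"[[{t}]]" for t in sorted(page.links)))
    if page.backlinks:
        lines.append("Linked from: " + ", ".join(f"[[{t}]]" for t in sorted(page.backlinks)))
    return "\n".join(lines)


if __name__ == "__main__":
    docs = {
        "Priors": "Priors are inductive biases. Children rely on them to learn Language quickly.",
        "Language": "Language learning needs far less input for children than for an LLM.",
        "LLM": "An LLM learns statistical patterns from text. It lacks human Priors.",
    }
    wiki = compile_wiki(docs)
    for page in wiki.values():
        print(render(page))
        print()
```

Keeping links and backlinks as separate sets mirrors how wiki tools let a reader navigate in both directions; in a real setup, the placeholder `summarize` would be replaced by whatever model call you use, and the linking rule could be delegated to the model as well.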

Relying on AI for writing tasks has a measurable neurological cost. EEG scans show brain connectivity is nearly halved compared to writing manually. This "cognitive debt" means you get faster output but fail to build the long-term neural pathways for true understanding and memory.