According to Comma AI's CTO, the next frontier in robotics is not simply bigger models but three fundamental challenges: 1) using ML for low-level controls, 2) making reinforcement learning (RL) practical in noisy environments, and 3) enabling continual, on-device learning that adapts to changing conditions.

Related Insights

In robotics, purely imitating human actions is insufficient. A model trained this way doesn't learn how to recover from inevitable errors. Comma AI solves this by training its models in a simulator where they are forced to learn recovery paths from off-course situations, a critical step for real-world deployment.
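A toy sketch of why on-course demonstrations alone carry no recovery signal. This is not Comma AI's pipeline: the 1D lane-offset world, the proportional `expert_action`, and every name and constant below are hypothetical, chosen only to illustrate the idea of starting the simulator off course and learning the path back.

```python
import random

EXPERT_GAIN = 0.5  # hypothetical: the expert steers back in proportion to lateral offset


def expert_action(offset):
    """Toy expert: steer against the lateral offset from the lane centre."""
    return -EXPERT_GAIN * offset


def collect_on_course(n):
    """Pure imitation data: the expert never leaves the lane centre (offset 0)."""
    return [(0.0, expert_action(0.0)) for _ in range(n)]


def collect_with_perturbations(n, max_offset=2.0, seed=0):
    """Simulator data: start each sample off course and record the corrective action."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        offset = rng.uniform(-max_offset, max_offset)
        data.append((offset, expert_action(offset)))
    return data


def fit_gain(data):
    """Least-squares fit of action = gain * offset.

    Returns None when the data contains no off-course states, because the
    gain cannot be identified from samples that all sit at offset 0.
    """
    sxx = sum(x * x for x, _ in data)
    if sxx == 0.0:
        return None
    sxy = sum(x * y for x, y in data)
    return sxy / sxx


def rollout(gain, start_offset=1.0, steps=20):
    """Apply the learned policy from an off-course start; return the final offset."""
    offset = start_offset
    for _ in range(steps):
        offset += gain * offset
    return offset


if __name__ == "__main__":
    print("gain from on-course data: ", fit_gain(collect_on_course(100)))
    learned = fit_gain(collect_with_perturbations(100))
    print("gain from perturbed data: ", learned)
    print("final offset after rollout:", rollout(learned))
```

In this toy setting the on-course dataset leaves the steering gain undetermined, while the perturbed dataset recovers it and the rollout returns to the lane centre.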

Pre-training on internet text data is hitting a wall. The next major advancements will come from reinforcement learning (RL), where models learn by interacting with simulated environments (like games or fake e-commerce sites). This post-training phase is in its infancy but will soon consume the majority of compute.

Many AI projects fail to reach production because of reliability issues. The vision for continual learning is to deploy agents that are 'good enough,' then use RL to correct behavior based on real-world errors, much like training a human. This solves the final-mile reliability problem and could unlock a vast market.

Beyond supervised fine-tuning (SFT) and human feedback (RLHF), reinforcement learning (RL) in simulated environments is the next evolution. These "playgrounds" teach models to handle messy, multi-step, real-world tasks where current models often fail catastrophically.

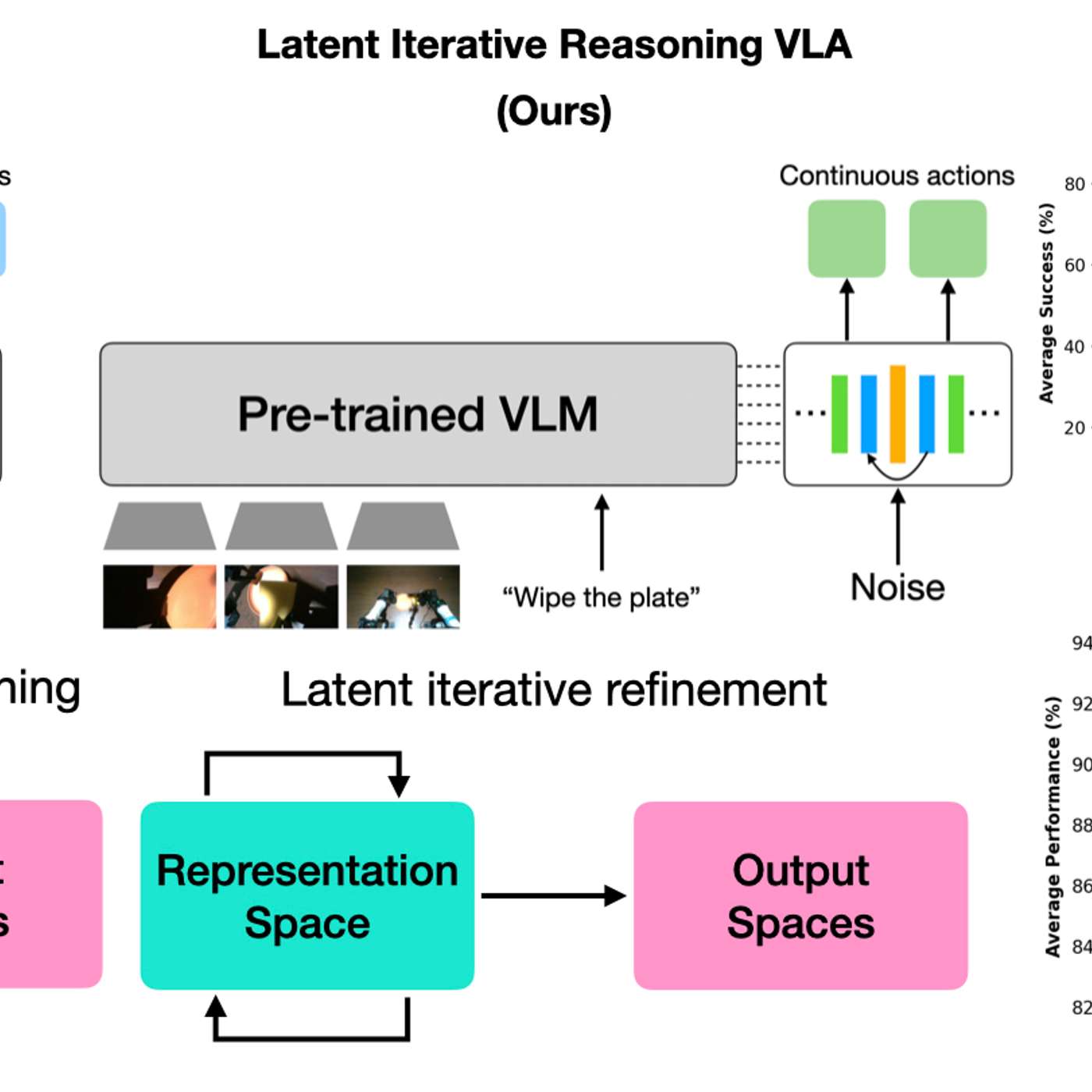

A new model architecture allows robots to vary their internal 'thinking' iterations at test time. This lets practitioners trade response speed for decision accuracy on a case-by-case basis, boosting performance on complex tasks without needing to retrain the model.
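The speed-for-accuracy trade can be illustrated with a toy example. The source does not describe the architecture, so this is only a sketch of the general idea: one fixed refinement step applied a caller-chosen number of times. The step here is Newton's method for a square root, swapped in as a stand-in for a learned update; `refine_step` and `think` are hypothetical names.

```python
import math


def refine_step(estimate, target):
    """One fixed refinement step (Newton's update for sqrt(target)).

    Stand-in for a learned update that is reused at every iteration.
    """
    return 0.5 * (estimate + target / estimate)


def think(target, iterations):
    """Run the same step `iterations` times; the count is chosen at call time."""
    estimate = target  # crude initial guess
    for _ in range(iterations):
        estimate = refine_step(estimate, target)
    return estimate


def error(target, iterations):
    """Absolute error of the estimate after the given number of iterations."""
    return abs(think(target, iterations) - math.sqrt(target))


if __name__ == "__main__":
    # Same "model", different compute budgets per query.
    for n in (0, 2, 8):
        print(n, "iterations -> error", error(2.0, n))
```

Nothing is retrained between calls: the caller picks a small iteration count when latency matters and a larger one when accuracy matters.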

Ken Goldberg quantifies the challenge: the text data used to train LLMs would take a human 100,000 years to read. Equivalent data for robot manipulation (vision-to-control signals) doesn't exist online and must be generated from scratch, explaining the slower progress in physical AI.

Robots have become capable enough at low-level physical tasks that the primary bottleneck has shifted to "mid-level reasoning": interpreting a scene and choosing the correct next action. Improvement can therefore come from high-level, language-based coaching rather than only from more physical demonstration data, which is a major breakthrough.

As reinforcement learning (RL) techniques mature, the core challenge shifts from the algorithm to the problem definition. The competitive moat for AI companies will be their ability to create high-fidelity environments and benchmarks that accurately represent complex, real-world tasks, effectively teaching the AI what matters.

Self-driving cars, a 20-year journey so far, are relatively simple robots: metal boxes on 2D surfaces designed *not* to touch things. General-purpose robots operate in complex 3D environments with the primary goal of *touching* and manipulating objects. This highlights the immense, often underestimated, physical and algorithmic challenges facing robotics.

Unlike older robots requiring precise maps and trajectory calculations, new robots use internet-scale common sense and learn motion by mimicking humans or simulations. This combination has “wiped the slate clean” for what is possible in the field.
