The rationale for Russia's automated nuclear retaliation system isn't about gaining a strategic edge. It's an internal hedge against the perceived unreliability of its own military, born from the fear that human commanders might not follow a launch order, especially after a decapitation strike.

Related Insights

While modernizing nuclear command and control systems seems logical, their current antiquated state offers a paradoxical security benefit. Sam Harris suggests this technological obsolescence makes them less vulnerable to modern hacking techniques, creating an unintentional layer of safety against cyber-initiated launches.

The joint statement on keeping humans in control of nuclear weapons is a significant diplomatic achievement demonstrating shared intent. However, it's not a binding agreement, and the real challenge is verifying this commitment, which is difficult given the secrecy surrounding military AI integration.

While fears focus on tactical "killer robots," the more plausible danger is automation bias at the strategic level. Senior leaders, lacking deep technical understanding, might overly trust AI-generated war plans, leading to catastrophic miscalculations about a war's ease or outcome.

While the US military opposes bans on autonomous 'killer robots' for conventional warfare, it maintains a firm 'human-in-the-loop' policy for nuclear launch decisions. This reveals a strategic calculation: the normative value of preventing autonomous nuclear use outweighs any marginal benefit of automation, a line not drawn for conventional systems.

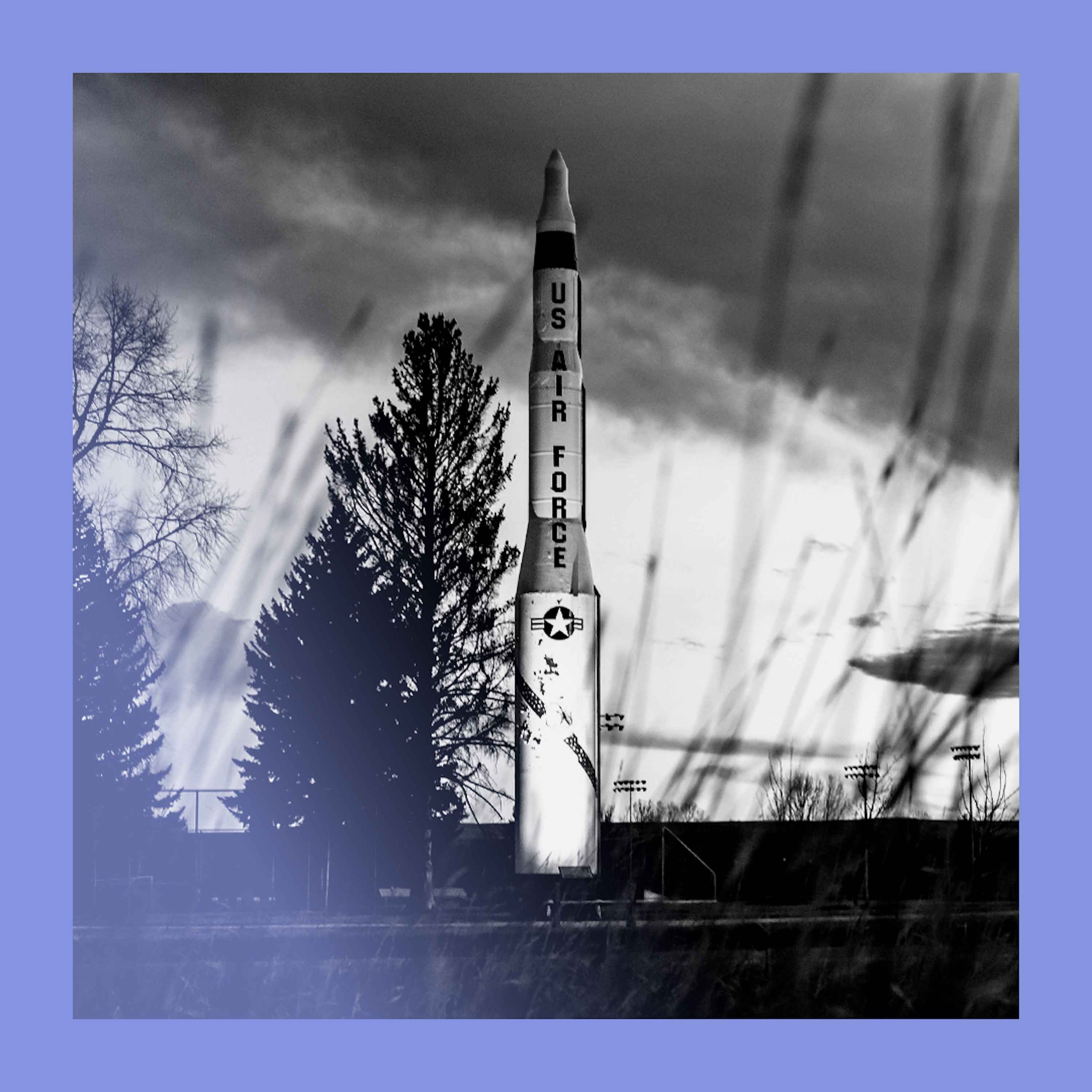

The popular scenario of an AI taking control of nuclear arsenals is less plausible than imagined. Nuclear Command, Control, and Communications (NC3) systems are highly classified and intentionally analog, precisely to prevent the kind of digital takeover an AI would require.

Even if an attacker destroys an adversary's entire command and control structure, retaliation is not prevented. Fail-safe arrangements such as Russia's 'Perimeter' system and the UK's 'letters of last resort' are designed to ensure a second strike can still occur: Perimeter by triggering a nuclear response automatically, the letters by giving submarine commanders pre-written instructions to follow once the government is gone.

The debate around AI in warfare often misses that significant autonomy already exists. Systems like the Phalanx Gatling gun and "fire-and-forget" missiles, which operate without human supervision after launch, have been standard for decades, representing a baseline of existing automation.

Public fear focuses on AI hypothetically creating new nuclear weapons. The more immediate danger is militaries trusting highly inaccurate AI systems for critical command and control decisions over existing nuclear arsenals, where even a small error rate could be catastrophic.

When the White House first proposed a policy against using AI for nuclear launch decisions in 2021, DOD officials found it strange. This highlights the incredible speed at which AI's strategic risks have moved from fringe concerns to central policy debates in just a few years.

Unlike China, which has historically relied on "minimal deterrence" (surviving a first strike in order to retaliate), the US and Russia operate on "damage limitation": using nuclear weapons to destroy the enemy's arsenal. This logic inherently drives a numbers game, fueling an arms race as each side seeks to counter the other's growing stockpile.