Even if an attacker successfully destroys an adversary's entire command and control structure, retaliation is not prevented. Failsafe arrangements such as Russia's semi-automated 'Perimeter' system or the UK's 'letters of last resort' (sealed instructions from the prime minister carried aboard ballistic-missile submarines) are designed so that a retaliatory order can still be carried out, keeping a second strike possible.

Related Insights

While modernizing nuclear command and control systems seems logical, their current antiquated state offers a paradoxical security benefit. Sam Harris suggests this technological obsolescence makes them less vulnerable to modern hacking techniques, creating an unintentional layer of safety against cyber-initiated launches.

A state cannot test its systems for eliminating an adversary's entire nuclear arsenal without the test itself being mistaken for the start of a real war. This inability to rehearse creates fundamental, irreducible uncertainty about the plan's effectiveness for any potential attacker.

The catastrophic consequence of even a single nuclear submarine escaping a first strike creates an incredibly high burden of proof. An attacker must be virtually 100% confident in eliminating all retaliatory forces simultaneously, a level of certainty that is practically unattainable.

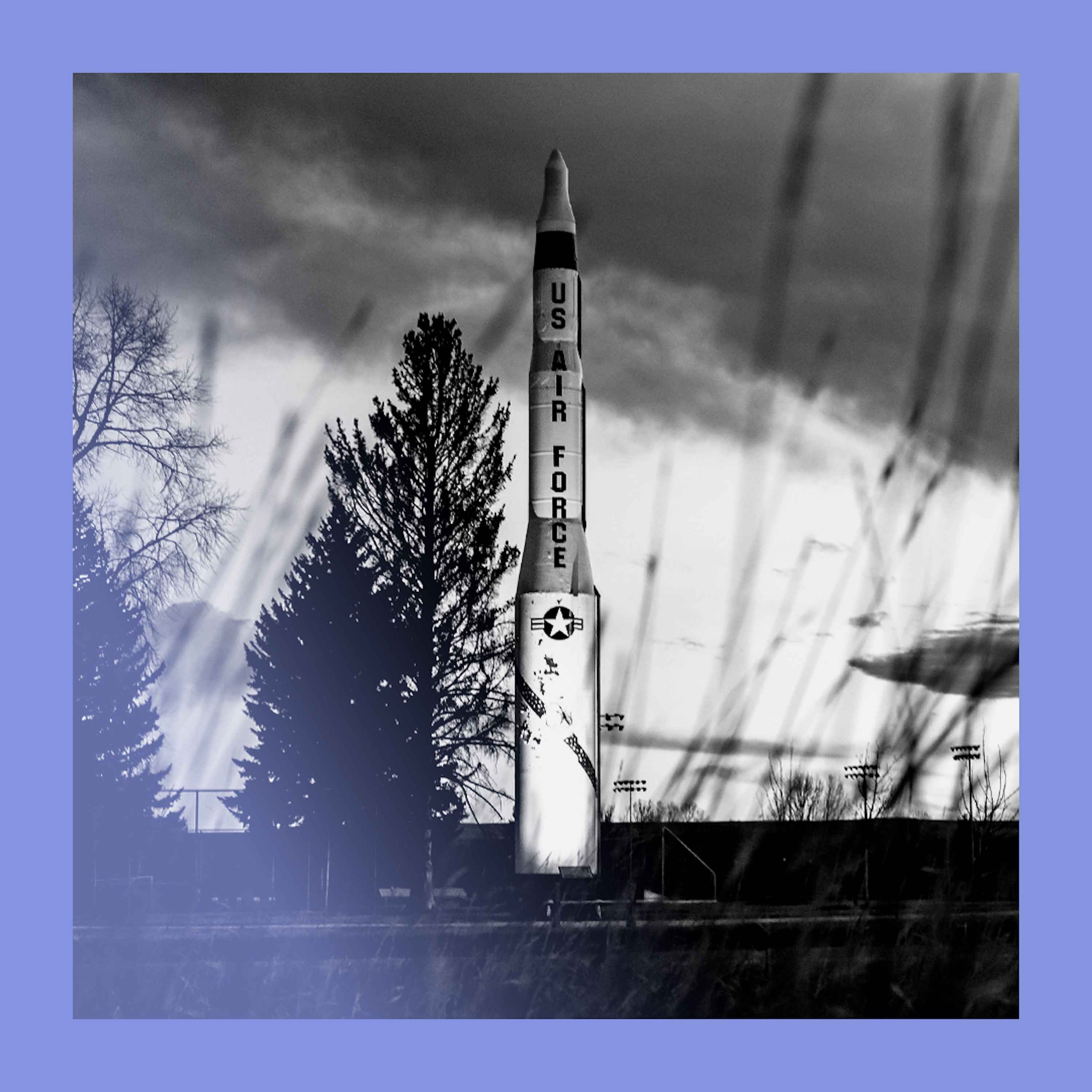

The popular scenario of an AI taking control of nuclear arsenals is less plausible than commonly imagined. Nuclear Command, Control, and Communication (NC3) systems are highly classified and intentionally analog, precisely to prevent the kind of digital takeover an AI would require.

The doctrine of mutually assured destruction (MAD) relies on the threat of retaliation. However, once an enemy's nuclear missiles are in the air, that threat has failed. Sam Harris argues that launching a counter-strike at that point serves no strategic purpose and is a morally insane act of mass murder.

Iran has anticipated leadership decapitation strikes for decades, building a resilient and distributed command and control infrastructure. This allows its forces, particularly the IRGC, to continue operating and launching attacks even without direct contact with headquarters.

Public fear focuses on AI hypothetically creating new nuclear weapons. The more immediate danger is militaries trusting highly inaccurate AI systems for critical command and control decisions over existing nuclear arsenals, where even a small error rate could be catastrophic.

Unlike 20th-century bombing campaigns, modern precision-strike capabilities allow for targeting a country's entire leadership from a distance. Pursued without a plan for subsequent governance, this strategy is rare and largely untested in military history.

Unlike China's historical "minimal deterrence" (surviving a first strike to retaliate), the US and Russia operate on "damage limitation" (using nuclear weapons to destroy the enemy's arsenal). This logic inherently drives a numbers game, fueling an arms race as each side seeks to counter the other's growing stockpile.

To maintain a second-strike capability, a country doesn't need equally advanced AI. Low-tech countermeasures like decoys, covering roads with netting, or simply moving missile launchers more frequently can create enough uncertainty to thwart a sophisticated, AI-driven first strike.