Success on constraint-satisfaction puzzles like Sudoku signals a shift from current AI that summarizes existing information to a new class capable of 'generative strategy.' These models can analyze constraints and creatively propose novel solutions, tackling real-world planning problems in medicine, law, and operations rather than just describing what's already known.

Related Insights

The AI industry is hitting data limits for training massive, general-purpose models. The next wave of progress will likely come from creating highly specialized models for specific domains, similar to DeepMind's AlphaFold, which can achieve superhuman performance on narrow tasks.

AI agents have become proficient at following a pre-defined strategy to execute tasks. The next major frontier, and a significant bottleneck, is the ability to explore open-ended environments and generate novel strategies independently. This is the core capability that benchmarks like ARC AGI v3 are designed to test.

The next major AI breakthrough will come from applying generative models to complex systems beyond human language, such as biology. By treating biological processes as a unique "language," AI could discover novel therapeutics or research paths, leading to a "Move 37" moment in science.

Hassabis argues AGI isn't just about solving existing problems. True AGI must demonstrate the capacity for breakthrough creativity, like Einstein developing a new theory of physics or Picasso creating a new art genre. This sets a much higher bar than current systems.

Top LLMs like Claude 3 and DeepSeek score 0% on complex Sudoku puzzles, a task humans can solve. This isn't a minor flaw but a categorical failure: it exposes the transformer architecture's inability to handle constraint-satisfaction problems, which require backtracking and parallel reasoning that sequential, token-by-token processing does not provide.
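To make "backtracking" concrete, here is a minimal sketch of a classical depth-first Sudoku solver. It illustrates the general technique only and is not taken from the podcast or from any model discussed here; the names `is_valid`, `find_empty`, and `solve` are hypothetical, and the grid representation (a 9x9 list of lists with 0 for an empty cell) is an assumption made for the example.

```python
from typing import List, Optional, Tuple

Grid = List[List[int]]  # assumed layout: 9x9 grid, 0 marks an empty cell


def is_valid(grid: Grid, row: int, col: int, digit: int) -> bool:
    """Check the three Sudoku constraints for placing digit at (row, col)."""
    if digit in grid[row]:
        return False
    if any(grid[r][col] == digit for r in range(9)):
        return False
    box_row, box_col = 3 * (row // 3), 3 * (col // 3)
    for r in range(box_row, box_row + 3):
        for c in range(box_col, box_col + 3):
            if grid[r][c] == digit:
                return False
    return True


def find_empty(grid: Grid) -> Optional[Tuple[int, int]]:
    """Return the first empty cell in row-major order, or None if the grid is full."""
    for r in range(9):
        for c in range(9):
            if grid[r][c] == 0:
                return r, c
    return None


def solve(grid: Grid) -> bool:
    """Fill the grid in place; return True if a solution was found."""
    cell = find_empty(grid)
    if cell is None:
        return True  # no empty cells left, so every constraint is satisfied
    row, col = cell
    for digit in range(1, 10):
        if is_valid(grid, row, col, digit):
            grid[row][col] = digit   # tentative choice
            if solve(grid):
                return True
            grid[row][col] = 0       # backtrack: undo the choice and try the next digit
    return False                     # dead end, so the caller must revise an earlier choice
```

The key step is the undo on failure: a wrong guess is erased and an earlier decision is revisited. That is the behavior the insight above says token-by-token generation lacks, since text that has already been emitted is not retracted in the same way.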

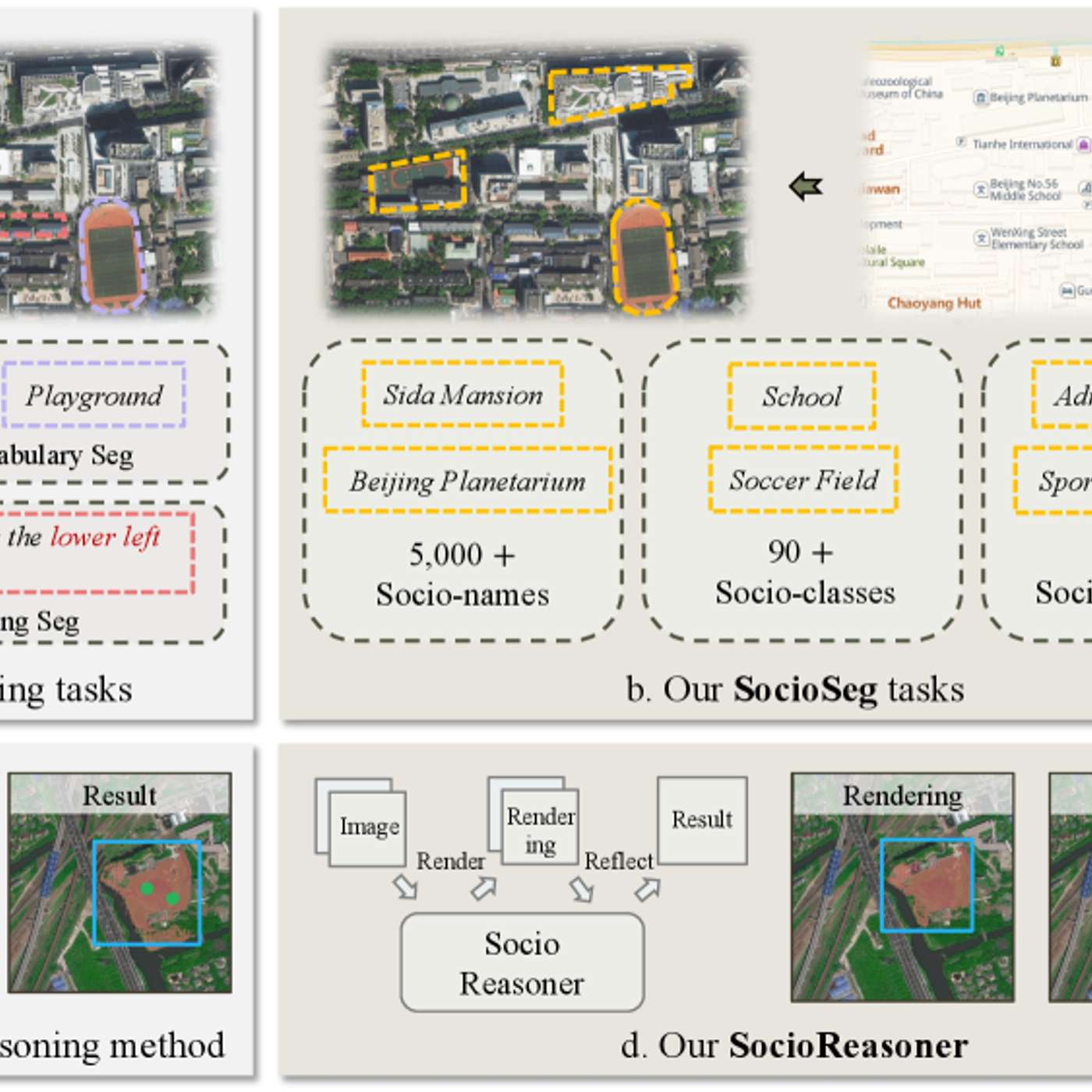

The featured AI model succeeds by reframing urban analysis as a reasoning problem. It uses a two-stage process—generating broad hypotheses then refining with detailed evidence—which mimics human cognition and outperforms traditional single-pass pattern recognition systems.

The key difference between modern AI and older tech like Google Search is its ability to reason about hypotheticals. It doesn't just retrieve existing information; it synthesizes knowledge to "think for itself" and generate entirely new content.

Google DeepMind CEO Demis Hassabis argues that today's large models are insufficient for AGI. He believes progress requires reintroducing algorithmic techniques from systems like AlphaGo, specifically planning and search, to enable more robust reasoning and problem-solving capabilities beyond simple pattern matching.

While GenAI continues the "learn by example" paradigm of machine learning, its ability to create novel content like images and language is a fundamental step-change. It moves beyond simply predicting patterns to generating entirely new outputs, representing a significant evolution in computing.

We have formal languages like Lean for deductive proofs, which AI can be trained on. The next frontier is developing a language to capture mathematical *strategy*—how to assess a conjecture's plausibility or choose a promising path. This would help automate the intuitive, creative part of mathematical discovery.