Get your free personalized podcast brief

We scan new podcasts and send you the top 5 insights daily.

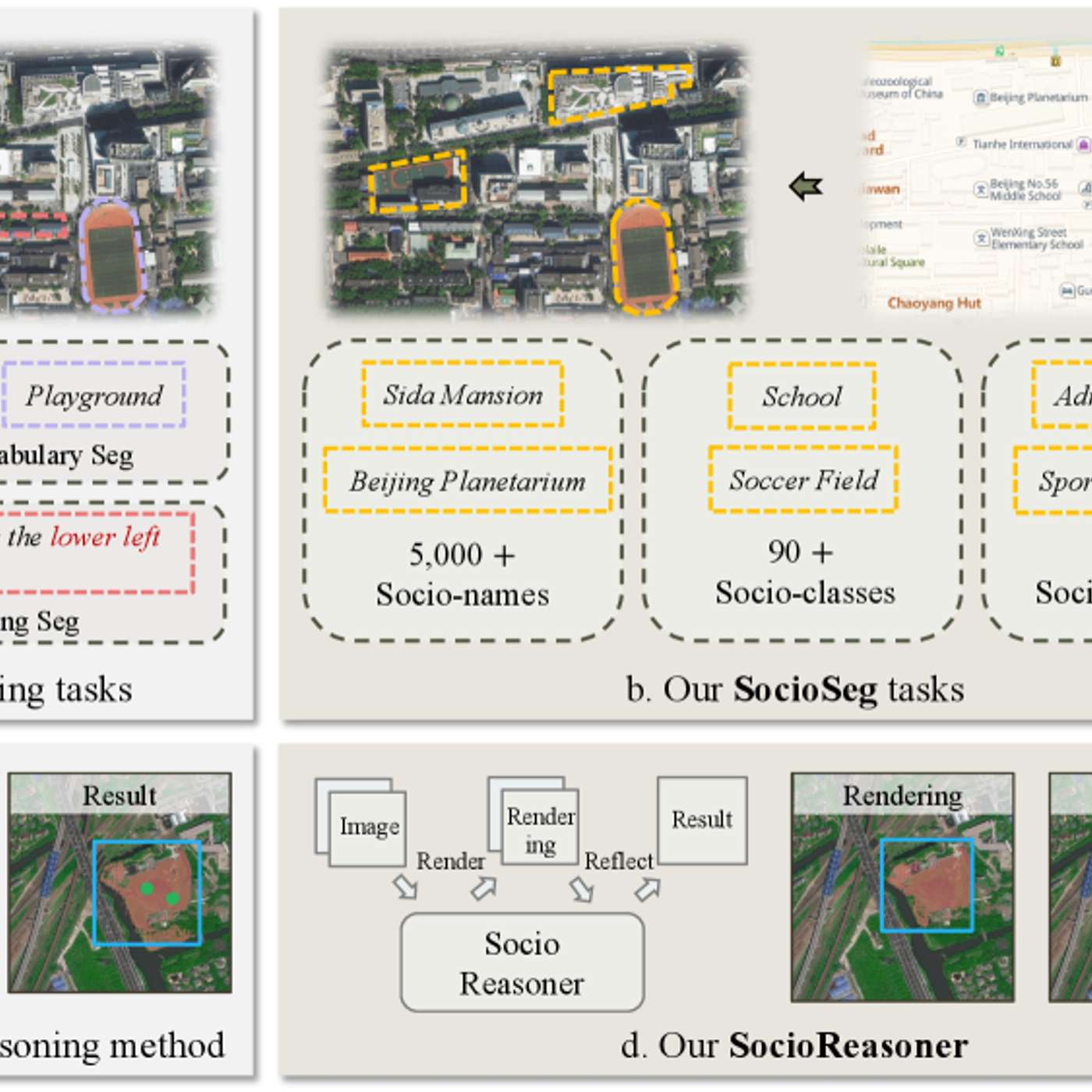

The featured AI model succeeds by reframing urban analysis as a reasoning problem. It uses a two-stage process (generating broad hypotheses, then refining them with detailed evidence) that mimics human cognition and outperforms traditional single-pass pattern recognition systems.
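The two-stage idea can be illustrated with a toy sketch. Everything here is hypothetical and is not the featured model's implementation: the labels, the scores, the `generate_hypotheses` and `refine` helpers, and the equal 0.5/0.5 weighting are all assumptions chosen only to show how a coarse shortlist can be re-ranked by detailed evidence.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    label: str
    score: float


def generate_hypotheses(coarse_scores: dict[str, float], top_k: int = 2) -> list[Hypothesis]:
    """Stage 1 (assumed): keep the top_k labels by coarse score as broad hypotheses."""
    ranked = sorted(coarse_scores.items(), key=lambda kv: kv[1], reverse=True)
    return [Hypothesis(label, score) for label, score in ranked[:top_k]]


def refine(hypotheses: list[Hypothesis], evidence: dict[str, list[float]]) -> Hypothesis:
    """Stage 2 (assumed): re-score each hypothesis with detailed evidence, return the best."""
    rescored = []
    for h in hypotheses:
        support = evidence.get(h.label, [])
        detail = sum(support) / len(support) if support else 0.0
        # Equal weighting of coarse and detailed scores is an arbitrary illustrative choice.
        rescored.append(Hypothesis(h.label, 0.5 * h.score + 0.5 * detail))
    return max(rescored, key=lambda h: h.score)


# Made-up coarse scores for one urban region, and made-up detailed evidence.
coarse = {"residential": 0.60, "commercial": 0.55, "industrial": 0.20, "park": 0.10}
evidence = {"residential": [0.4, 0.5], "commercial": [0.9, 0.8]}

best = refine(generate_hypotheses(coarse, top_k=2), evidence)
print(best.label)
```

In this toy data the coarse pass slightly favors "residential", but the detailed evidence moves the final answer to "commercial", which is the kind of correction a single-pass classifier would not make.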

Related Insights

Unlike simple chatbots, AI agents tackle complex requests by first creating a detailed, transparent plan. The agent can even adapt this plan mid-process based on initial findings, demonstrating a more autonomous approach to problem-solving.

Reinforcement learning incentivizes AIs to find the right answer, not just mimic human text. This leads to them developing their own internal "dialect" for reasoning—a chain of thought that is effective but increasingly incomprehensible and alien to human observers.

Anthropic strategically focuses on "vision in" (AI understanding visual information) over "vision out" (image generation). This mimics a real developer who needs to interpret a user interface to fix it, but can delegate image creation to other tools or people. The core bet is that the primary bottleneck is reasoning, not media generation.

Generating truly novel and valid scientific hypotheses requires a specialized, multi-stage AI process. This involves using a reasoning model for idea generation, a literature-grounded model for validation, and a third system for checking originality against existing research. This layered approach overcomes the limitations of a single, general-purpose LLM.
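As a rough sketch of that layering, the three stages can be chained as filters. The functions below are hypothetical stand-ins, not real models or any specific system: `generate_ideas` plays the reasoning model, `is_supported` the literature-grounded check, and `is_original` the novelty check, with simple set lookups in place of actual retrieval.

```python
def generate_ideas(topic: str) -> list[str]:
    """Stage 1 stand-in: a reasoning model would propose candidate hypotheses."""
    return [f"{topic} depends on factor {name}" for name in ("A", "B", "C")]


def is_supported(idea: str, literature_factors: set[str]) -> bool:
    """Stage 2 stand-in: accept an idea only if its factor appears in the literature."""
    return idea.split()[-1] in literature_factors


def is_original(idea: str, known_claims: set[str]) -> bool:
    """Stage 3 stand-in: reject ideas that already exist as published claims."""
    return idea not in known_claims


def pipeline(topic: str, literature_factors: set[str], known_claims: set[str]) -> list[str]:
    ideas = generate_ideas(topic)
    validated = [i for i in ideas if is_supported(i, literature_factors)]
    return [i for i in validated if is_original(i, known_claims)]


result = pipeline(
    "Yield",
    literature_factors={"A", "B"},
    known_claims={"Yield depends on factor A"},
)
print(result)
```

Here factor C is dropped for lacking literature support and factor A is dropped as already known, so only the factor B hypothesis survives all three stages.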

Solving key AI weaknesses like continual learning or robust reasoning isn't just a matter of bigger models or more data. Shane Legg argues it requires fundamental algorithmic and architectural changes, such as building new processes for integrating information over time, akin to an episodic memory.

The driving AI's ability to handle novel situations isn't just an emergent property of scale. Wayve actively trains "world models," which are internal generative simulators. This enables the AI to reason about what might happen next, leading to sophisticated behaviors like nudging into intersections or slowing in fog.

To make genuine scientific breakthroughs, an AI needs to learn the abstract reasoning strategies and mental models of expert scientists. This involves teaching it higher-level concepts, such as thinking in terms of symmetries, a core principle in physics that current models lack.

Go beyond using AI for simple efficiency gains. Engage with advanced reasoning models as if they were expert business consultants. Ask them deep, strategic questions to fundamentally innovate and reimagine your business, not just incrementally optimize current operations.

The most significant recent AI advance is models' ability to use chain-of-thought reasoning, not just retrieve data. However, most business users are unaware of this 'deep research' capability and continue using AI as a simple search tool, missing its transformative potential for complex problem-solving.

Asking an AI to 'predict' or 'evaluate' for a large sample size (e.g., 100,000 users) fundamentally changes its function. The AI automatically switches from generating generic creative options to providing a statistical simulation. This forces it to go deeper in its research and thinking, yielding more accurate and effective outputs.