According to Anna Patterson, vector databases struggle with scale, as distinguishing between billions of items requires increasingly long vectors. Their "soft match" functionality also creates relevancy challenges, forcing enterprises to become search experts to tune results, unlike more traditional keyword-based systems.

Related Insights

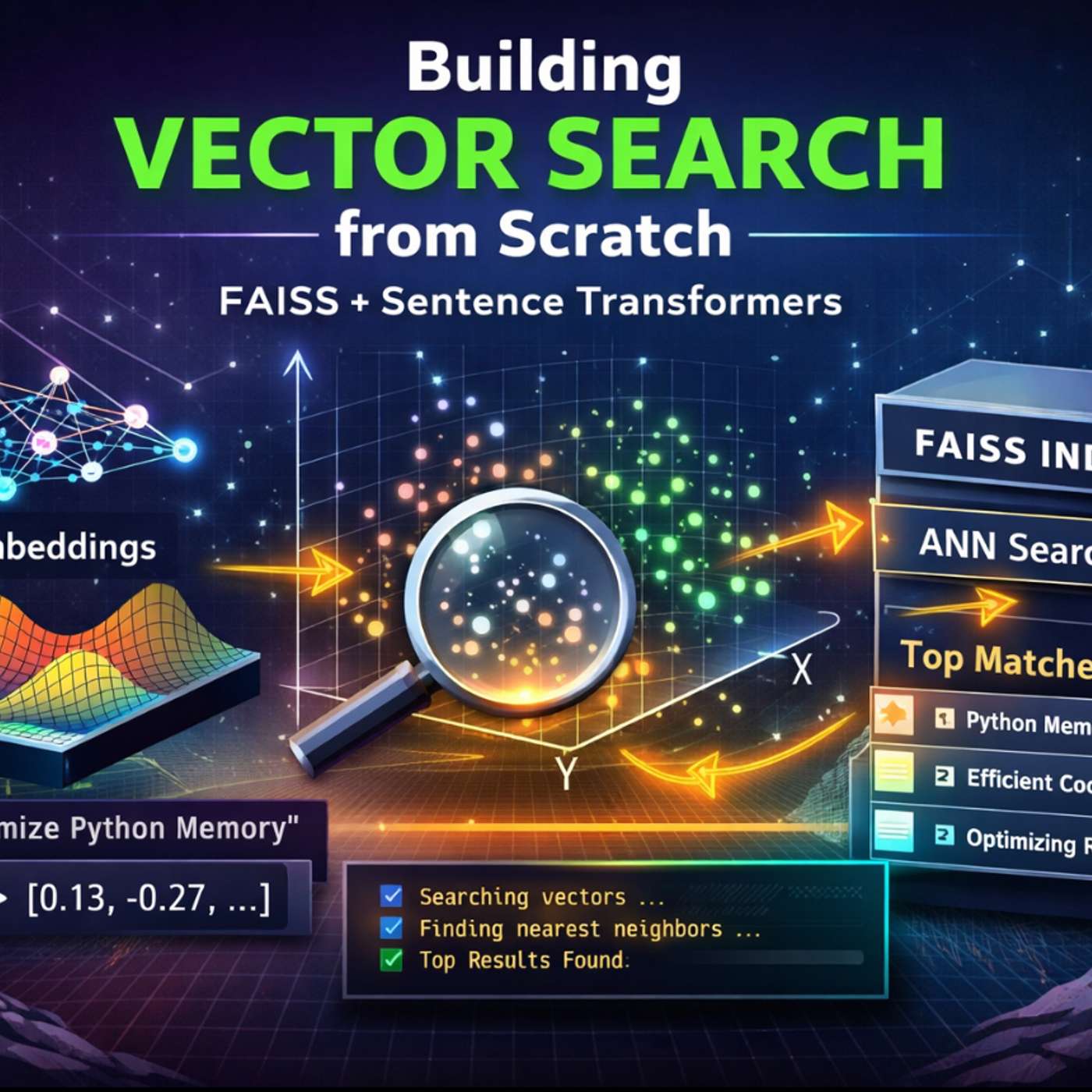

Systems like FAISS are optimized for vector similarity search and do not store the original data. Engineers must build and maintain a separate system to map the returned vector IDs back to the actual documents or metadata, a crucial step for production applications.
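
The ID-to-document mapping described above can be sketched as follows. This is a minimal illustration, not FAISS itself: `TinyVectorIndex` is a hypothetical pure-Python stand-in for a vector-only index, and `doc_store`, `add_document`, and `search_documents` are assumed names for the separate lookup layer an engineer would maintain.

```python
import math

class TinyVectorIndex:
    """Hypothetical stand-in for a vector-only index: it keeps vectors and
    hands back integer IDs, but knows nothing about the source documents."""

    def __init__(self):
        self._vectors = []

    def add(self, vector):
        # The ID is simply the insertion position.
        self._vectors.append(vector)
        return len(self._vectors) - 1

    def search(self, query, k):
        # Brute-force Euclidean search; returns only IDs, closest first.
        scored = sorted((math.dist(query, v), i) for i, v in enumerate(self._vectors))
        return [i for _, i in scored[:k]]

index = TinyVectorIndex()
doc_store = {}  # separate system: vector ID -> original text and metadata

def add_document(text, vector, metadata):
    """Add the vector to the index and record the document under the same ID."""
    vec_id = index.add(vector)
    doc_store[vec_id] = {"text": text, "metadata": metadata}
    return vec_id

def search_documents(query_vector, k=2):
    """Search the index, then map the returned IDs back to documents."""
    return [doc_store[i] for i in index.search(query_vector, k)]

add_document("Refund policy", [0.9, 0.1], {"source": "policies.md"})
add_document("Onboarding guide", [0.1, 0.9], {"source": "hr.md"})
add_document("Returns FAQ", [0.8, 0.2], {"source": "faq.md"})

results = search_documents([1.0, 0.0], k=2)
```

In production the dictionary would typically be a database or key-value store, and the two systems have to be kept in sync on every insert and delete.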

Ceramic AI founder Anna Patterson explains that the company's pivot from training to search was driven by a key insight: providing models with live data via low-cost search is far more efficient and timely than the expensive, slow process of continuous retraining.

For enterprise AI, standard RAG struggles with granular permissions and relationship-based questions. Atlassian's "teamwork graph" maps entities like teams, tasks, and documents. This lets the system answer complex queries like "What did my team do last week?", a task where simple vector search would fail by just returning top documents.

For millions of vectors, exact search (like a FAISS flat index) can be too slow. Production systems use Approximate Nearest Neighbor (ANN) algorithms, which trade a small amount of accuracy for orders-of-magnitude faster search, making large-scale applications feasible.
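
To make the exact-versus-approximate trade-off concrete, here is a small sketch of one ANN family, an inverted-file (IVF) style partition. It is an illustration under stated assumptions, not how any particular library implements it: the centroids are a fixed 4x4 grid rather than learned by clustering, the data is random 2-D points, and every function name is hypothetical.

```python
import math
import random

def exact_search(vectors, query, k):
    """Brute force: compare the query against every vector."""
    order = sorted(range(len(vectors)), key=lambda i: math.dist(query, vectors[i]))
    return order[:k]

def build_buckets(vectors, centroids):
    """Assign each vector ID to the bucket of its nearest centroid."""
    buckets = {c: [] for c in range(len(centroids))}
    for i, v in enumerate(vectors):
        nearest = min(range(len(centroids)), key=lambda c: math.dist(v, centroids[c]))
        buckets[nearest].append(i)
    return buckets

def approx_search(vectors, centroids, buckets, query, k, nprobe=1):
    """Scan only the nprobe buckets whose centroids are closest to the query.
    Returns the top-k IDs found and the number of vectors actually compared."""
    by_distance = sorted(range(len(centroids)), key=lambda c: math.dist(query, centroids[c]))
    candidates = [i for c in by_distance[:nprobe] for i in buckets[c]]
    candidates.sort(key=lambda i: math.dist(query, vectors[i]))
    return candidates[:k], len(candidates)

rng = random.Random(0)  # fixed seed so the toy data is reproducible
vectors = [[rng.random(), rng.random()] for _ in range(1000)]
# Assumption: a fixed grid of centroids; real systems usually learn them (e.g. k-means).
grid = (0.125, 0.375, 0.625, 0.875)
centroids = [[x, y] for x in grid for y in grid]
buckets = build_buckets(vectors, centroids)

query = [0.5, 0.5]
exact_ids = exact_search(vectors, query, k=5)
approx_ids, scanned = approx_search(vectors, centroids, buckets, query, k=5, nprobe=4)

# Recall: fraction of the true nearest neighbors the approximate search recovered.
recall = len(set(exact_ids) & set(approx_ids)) / len(exact_ids)
```

Lowering `nprobe` scans fewer vectors but raises the chance of missing a true neighbor that sits in an unprobed bucket, which is the accuracy-for-speed trade the paragraph describes.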

Managed vector databases are convenient, but building a search engine from scratch using a library like FAISS provides a deeper understanding of index types, latency tuning, and memory trade-offs, which is crucial for optimizing AI systems.

AI's hunger for context is making search a critical but expensive component. As illustrated by turbopuffer's origin, a single recommendation feature using vector embeddings can cost tens of thousands of dollars per month, forcing companies to find cheaper solutions to make AI features economically viable at scale.

Unlike humans, who type 2-3 words, LLMs generate long, sentence-like queries (e.g., eight words or more) to gather comprehensive context. This shift from human to AI searchers requires search engines to be optimized for detailed, descriptive inputs.

Teams often agonize over which vector database to use for their Retrieval-Augmented Generation (RAG) system. However, the most significant performance gains come from superior data preparation, such as optimizing chunking strategies, adding contextual metadata, and rewriting documents into a Q&A format.
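
As one example of the data preparation mentioned above, the sketch below splits a document into overlapping word windows and prefixes each chunk with its document title as contextual metadata. The function name, the word-based windowing, and the default sizes are all assumptions chosen for illustration; suitable chunk sizes depend on the corpus and the embedding model.

```python
def chunk_with_context(text, title, chunk_words=40, overlap_words=10):
    """Split text into overlapping word windows, each tagged with its title.

    Overlap keeps sentences that straddle a boundary retrievable from both
    neighboring chunks; the title prefix gives each chunk standalone context.
    """
    if overlap_words >= chunk_words:
        raise ValueError("overlap_words must be smaller than chunk_words")

    words = text.split()
    step = chunk_words - overlap_words
    chunks = []
    for start in range(0, len(words), step):
        window = words[start:start + chunk_words]
        chunks.append({
            "title": title,
            "start_word": start,
            "text": f"{title}: " + " ".join(window),
        })
        # Stop once this window has reached the end of the document.
        if start + chunk_words >= len(words):
            break
    return chunks

sample = " ".join(f"w{i}" for i in range(100))
chunks = chunk_with_context(sample, title="Refund policy")
```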

Vector search excels at semantic meaning but fails on precise keywords like product SKUs. Effective enterprise search requires a hybrid system combining the strengths of lexical search (e.g., BM25) for keywords and vector search for concepts to serve all user needs accurately.
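
One common way to combine the two result lists is reciprocal rank fusion (RRF), sketched below. RRF is an assumption here, since the source does not say which fusion method to use, and the document IDs and both input rankings are made up for illustration. In a real system they would come from a lexical engine (e.g., BM25) and a vector index.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores 1 / (k + rank) per list it appears in; k=60 is a
    conventional default that damps the influence of any single top rank.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; break ties by ID for a deterministic order.
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical results for a query that contains a product SKU.
lexical_ranking = ["sku-4471-datasheet", "sku-4471-manual", "pricing-faq"]
vector_ranking = ["cordless-drill-overview", "sku-4471-manual", "sku-4471-datasheet"]

fused = reciprocal_rank_fusion([lexical_ranking, vector_ranking])
```

Because RRF uses only ranks, it avoids having to normalize lexical scores and vector distances onto a common scale.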

While AI proofs-of-concept are easy, SAP's CTO states the real engineering hurdle is scaling reliably. The complexity lies in managing thousands of APIs, handling massive document volumes, and applying granular, user-specific context (like regional policies) consistently and accurately.