Unlike humans, who typically type two or three words, LLMs generate long, sentence-like queries (e.g., eight words or more) to gather comprehensive context. As the searcher shifts from human to AI, search engines must be optimized for these detailed, descriptive inputs.

Related Insights

SEMrush data shows that search queries containing eight or more words are seven times more likely to trigger a Google AI Overview. To gain visibility in these AI-driven results, marketers must shift from short keywords to long, human-toned questions, a strategy called "scenario marketing."

Google's VP of Search notes that AI enables users to state their complex needs in natural language, rather than translating them into keywords. Users now "tell you the real problem," providing Google with richer intent data to deliver more helpful and specific results.

SEO is evolving beyond search engines to include Large Language Models (LLMs) like ChatGPT. Brands must now practice "Generative Engine Optimization" (GEO), ensuring their site is properly coded and marked up so AI can accurately crawl, understand, and recommend their products in generative responses.

As search behavior evolves from simple keywords to complex, conversational queries, the goal is no longer just ranking on a results page. The new metric for success is the "AI citation rate": how often Large Language Models (LLMs) surface a brand's content as the trusted, direct answer. This fundamentally changes the nature of SEO.

Users now ask AI models highly specific, long-form questions, not short search terms. HubSpot's CEO advises creating more detailed content with better citations and case studies to provide authoritative answers for these complex queries and remain visible.

While Google SEO relies heavily on placing keywords in specific technical elements like title tags, AI search engines care less about keywords. They prioritize content that directly and comprehensively answers a user's question. The strategy shifts from keyword density to providing the best possible solution.

The future of search isn't just about Google; it's about being found in AI tools like ChatGPT. This shift to Generative Engine Optimization (GEO) requires creating helpful, Q&A-formatted content that AI models can easily parse and present as answers, ensuring your visibility in the new search landscape.
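One common way to make Q&A content machine-readable is schema.org `FAQPage` markup embedded as JSON-LD. The insight above does not name a specific format, so treat this as a minimal sketch under that assumption; the helper names `faq_jsonld` and `to_script_tag` and the sample question are hypothetical.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def to_script_tag(data):
    """Serialize the object as a JSON-LD <script> tag for a page's HTML."""
    # Escape "<" so answer text cannot close the script element early.
    body = json.dumps(data, indent=2).replace("<", "\\u003c")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


if __name__ == "__main__":
    # Hypothetical sample content, written as a long, natural-language question.
    pairs = [
        (
            "How do I choose running shoes for flat feet on pavement?",
            "Look for stability shoes with firm arch support and cushioning.",
        )
    ]
    print(to_script_tag(faq_jsonld(pairs)))
```

Whether a given AI tool actually reads this markup is not something the source confirms; the sketch only shows how Q&A pairs map onto a structured, parseable form.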

Unlike chatbots that rely solely on their training data, Google's AI acts as a live researcher. For a single user query, the model executes a "query fanout": it runs multiple targeted background searches to gather, synthesize, and cite fresh information from across the web in real time.

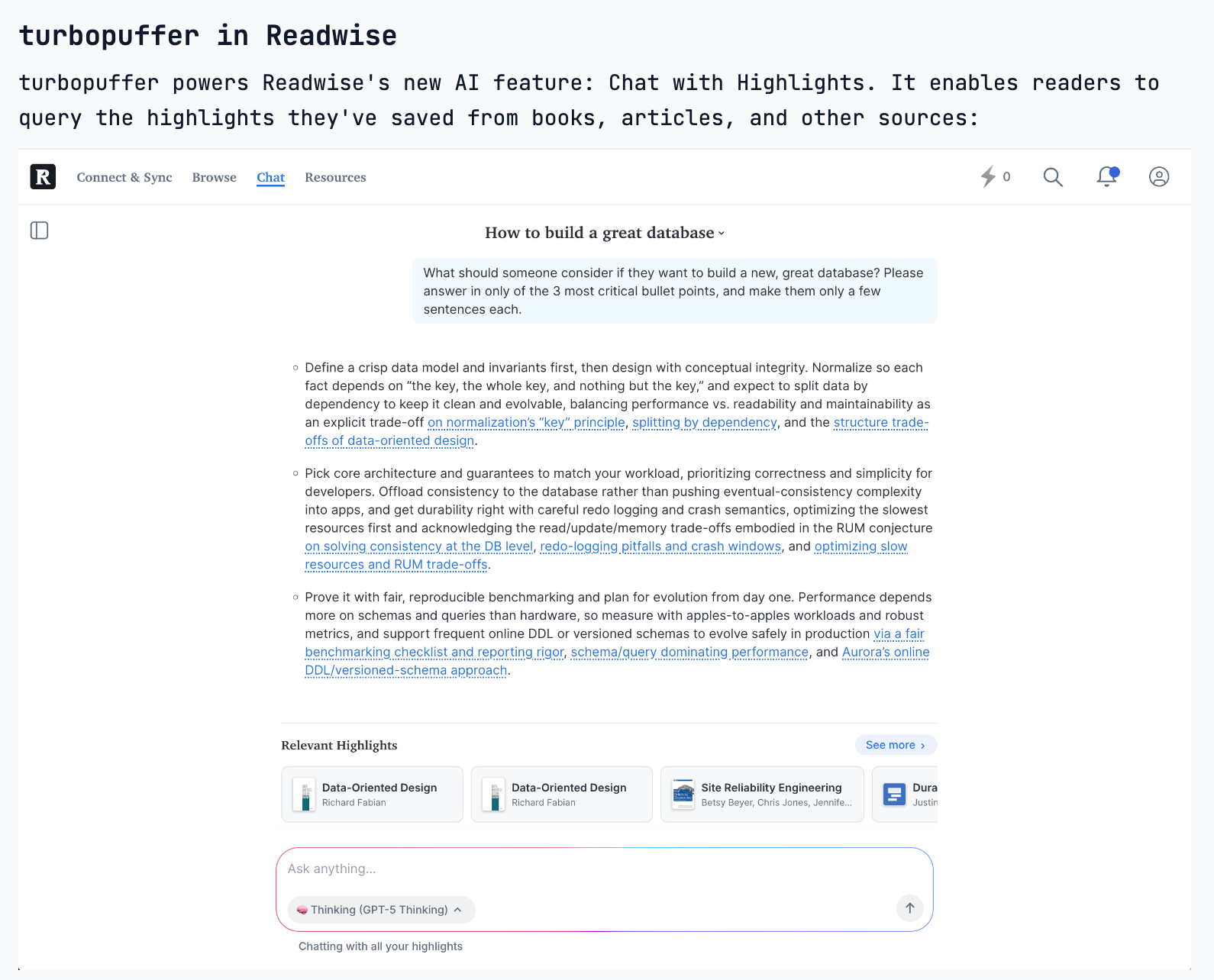

The nature of Retrieval-Augmented Generation (RAG) is evolving. Instead of a single search to populate an initial context window, AI agents are now performing numerous concurrent queries in a single turn. This allows them to explore diverse information paths simultaneously, driving new database requirements.
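The pattern described above, several retrieval queries issued concurrently within one turn, can be sketched with Python's `asyncio`. This is an illustration under stated assumptions, not any vendor's implementation: `search` is a hypothetical stand-in for a real search or database call, and `fan_out` is a hypothetical placeholder for the step where an agent would ask the model to propose sub-queries.

```python
import asyncio


async def search(query: str) -> list[str]:
    """Hypothetical stand-in for a real search or vector-store call."""
    await asyncio.sleep(0.01)  # simulate network or database latency
    return [f"result for: {query}"]


def fan_out(question: str) -> list[str]:
    """Hypothetical query expansion; a real agent would ask the LLM for these."""
    return [
        question,
        f"{question} latest data",
        f"{question} expert analysis",
    ]


async def retrieve(question: str) -> list[str]:
    """Run all sub-queries concurrently and merge results without duplicates."""
    sub_queries = fan_out(question)
    # gather() starts every search at once and returns results in input order.
    batches = await asyncio.gather(*(search(q) for q in sub_queries))
    seen: set[str] = set()
    merged: list[str] = []
    for batch in batches:
        for hit in batch:
            if hit not in seen:
                seen.add(hit)
                merged.append(hit)
    return merged


if __name__ == "__main__":
    question = "how do long queries affect AI search results"
    for hit in asyncio.run(retrieve(question)):
        print(hit)
```

Because the searches overlap in time, total latency is roughly that of the slowest query rather than the sum of all of them, which is why this access pattern puts concurrent load on the backing database.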

Initially, users spoke to chatbots in clipped keywords. As they've become familiar with capable LLMs, they've learned that providing rich, natural language context yields better results. This user adaptation is critical for maximizing AI effectiveness.