A new academic framework, ArbiterK, challenges the standard model of an LLM acting as the central controller. It inverts the paradigm by embedding the LLM within a deterministic execution system, demoting it to a suggestion engine. This ensures the system, not the probabilistic LLM, retains final control and enforces rules.
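The inversion described above can be illustrated with a minimal sketch. This is not ArbiterK's actual design or API; the names `Suggestion`, `RULES`, `execute`, and `fake_llm` are hypothetical and exist only for illustration. The model proposes an action, and a deterministic executor decides whether it runs.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Suggestion:
    """What the LLM is allowed to produce: a proposal, never an execution."""
    action: str
    args: dict


# Hypothetical rule table: action name -> deterministic predicate over its arguments.
# Any action that is not listed here is rejected by default.
RULES: dict[str, Callable[[dict], bool]] = {
    "read_file": lambda a: str(a.get("path", "")).startswith("/sandbox/"),
    "send_email": lambda a: str(a.get("to", "")).endswith("@example.com"),
}


def execute(suggestion: Suggestion, handlers: dict[str, Callable[[dict], str]]) -> str:
    """Deterministic gate: the system, not the model, has the final say."""
    rule = RULES.get(suggestion.action)
    if rule is None or not rule(suggestion.args):
        return f"rejected: {suggestion.action}"
    return handlers[suggestion.action](suggestion.args)


def fake_llm(prompt: str) -> Suggestion:
    """Stand-in for a real model call (assumption: the model returns structured output)."""
    return Suggestion("delete_file", {"path": "/etc/passwd"})


if __name__ == "__main__":
    handlers = {
        "read_file": lambda a: "read " + a["path"],
        "send_email": lambda a: "sent to " + a["to"],
    }
    print(execute(fake_llm("clean up the disk"), handlers))
```

Because the rule table is ordinary code, a persuasive prompt cannot add a new action or widen a path prefix; only a code change can.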

Related Insights

Generative AI is predictive and imperfect, and it cannot reliably correct its own output. A 'guardian agent', a separate AI system, is required to monitor, score, and rewrite content produced by other AIs so that brand, style, and compliance standards are enforced, creating a necessary system of checks and balances.

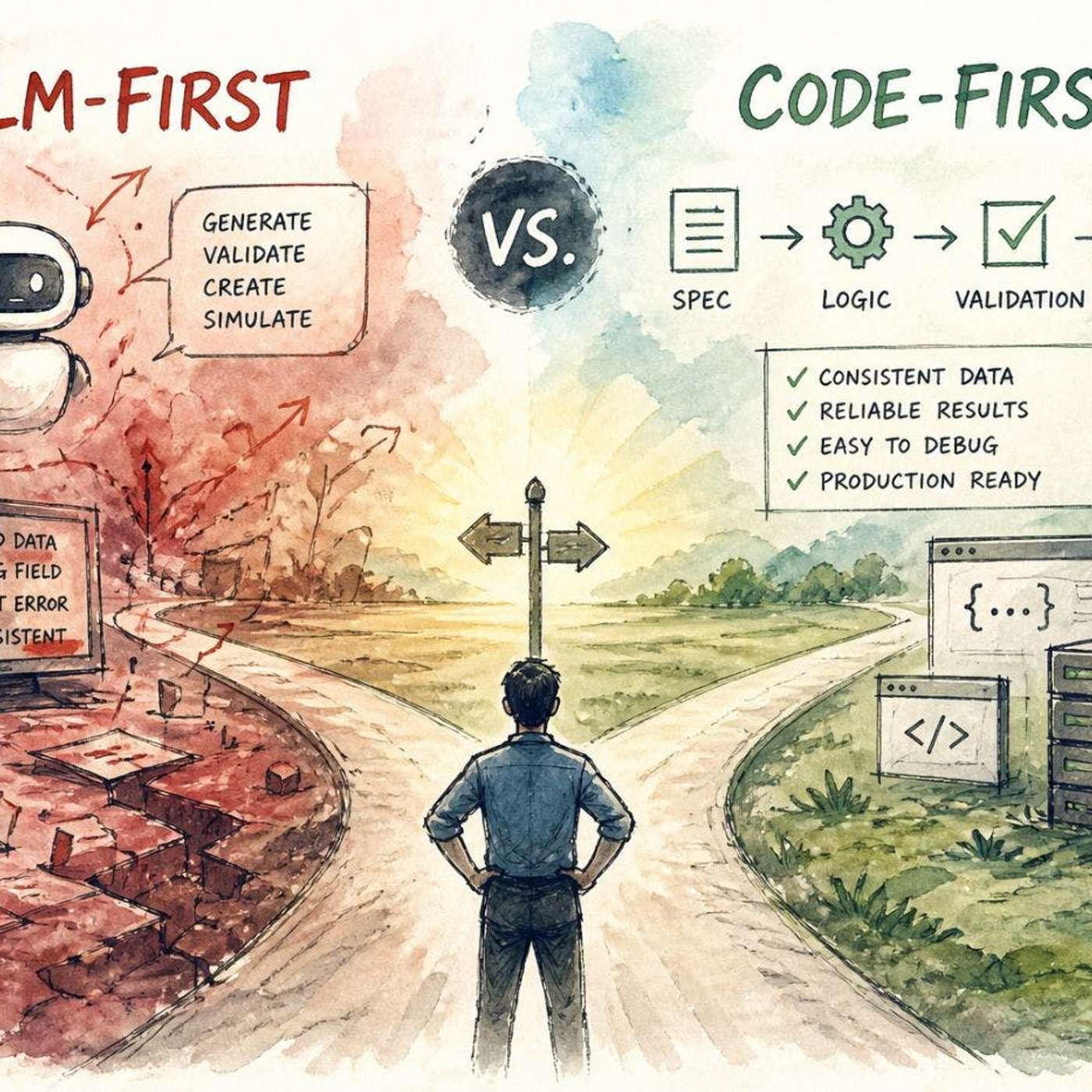

Don't give LLMs full control. Use deterministic code for core logic, validation, and enforcing rules. Delegate only tasks requiring flexibility or understanding of unstructured input to the LLM, treating it as a specialized component, not the entire system.
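A minimal sketch of this split, assuming a hypothetical refund workflow: the LLM is used only to turn a free-text message into structured fields, and plain code validates those fields and applies the policy. The policy limit, field names, and function names are all illustrative assumptions.

```python
from typing import Callable

MAX_AUTO_REFUND = 100.00  # hypothetical policy limit, chosen for illustration


def extract_refund_request(message: str, llm: Callable[[str], dict]) -> dict:
    """Flexible step: delegate unstructured-text understanding to the LLM."""
    return llm(message)


def decide_refund(fields: dict, orders: dict[str, float]) -> str:
    """Deterministic step: validate the extracted fields and enforce the rules."""
    order_id = fields.get("order_id")
    amount = fields.get("amount")
    if not isinstance(order_id, str) or order_id not in orders:
        return "reject: unknown order"
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(amount, bool) or not isinstance(amount, (int, float)) or amount <= 0:
        return "reject: invalid amount"
    if amount > orders[order_id]:
        return "reject: exceeds order total"
    if amount > MAX_AUTO_REFUND:
        return "escalate: manual review"
    return "approve"


if __name__ == "__main__":
    # Stub model that hallucinates an amount larger than the order total.
    stub_llm = lambda msg: {"order_id": "A1", "amount": 500.0}
    orders = {"A1": 40.0}
    fields = extract_refund_request("I want my money back for order A1", stub_llm)
    print(decide_refund(fields, orders))
```

Whatever the model extracts, the decision is made by `decide_refund`, so a wrong or manipulated extraction can at worst be rejected or escalated.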

Relying on prompt engineering for safety is insufficient and easily bypassed. The expert consensus is to build safeguards directly into the system's architecture. Architectural controls are immutable during runtime, whereas prompt-level controls can be manipulated or overridden by clever user inputs.

Purely agentic systems can be unpredictable. A hybrid approach, like OpenAI's Deep Research forcing a clarifying question, inserts a deterministic workflow step (a "speed bump") before unleashing the agent. This mitigates risk, reduces errors, and ensures alignment before costly computation.
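The "speed bump" pattern can be sketched as a fixed workflow step that always runs before the agent does. This is an illustration of the general idea only, not a description of how OpenAI implements Deep Research; `run_with_speed_bump`, `ask_user`, and `agent` are hypothetical names.

```python
from typing import Callable


def run_with_speed_bump(
    task: str,
    ask_user: Callable[[str], str],
    agent: Callable[[str], str],
) -> str:
    """Force one clarifying question before any agentic (and costly) work starts."""
    # Deterministic step: this question is asked on every run, regardless of the task.
    question = f"Before I start on '{task}': what scope or constraints should I apply?"
    answer = ask_user(question).strip()
    if not answer:
        # No clarification means the agent is never invoked.
        return "aborted: no clarification provided"
    return agent(f"{task}\nClarification: {answer}")


if __name__ == "__main__":
    result = run_with_speed_bump(
        "Summarize battery research",
        ask_user=lambda q: "Only solid-state, last 5 years",
        agent=lambda prompt: f"[agent ran with]\n{prompt}",
    )
    print(result)
```

The gate is cheap to run and easy to audit, which is the point: the expensive, unpredictable step only starts once the deterministic step has passed.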

Traditional systems can be controlled with simple, deterministic rules. Because modern AI agents are inherently unpredictable, effective governance requires using another layer of AI. A specialized AI must monitor, interpret, and block the actions of other agents in real time.

The conversation around agentic AI has matured beyond abstract policies. The consensus among consultancies, tech firms, and academics is that effective governance requires embedding controls, like access management and validation, directly into the system's architecture as a core design principle.

For critical enterprise functions like financial modeling, even 99.9% accuracy from a probabilistic LLM is unacceptable, because a 0.1% failure rate is still too high. Platforms like Salesforce's Agentforce 360 address this by layering deterministic logic and guardrails on top of the AI to enforce compliance and prevent costly errors.
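A short worked calculation shows why a 0.1% failure rate adds up at volume. It assumes, purely for illustration, that each call fails independently with the same probability; real errors may be correlated, so treat the result as a rough guide. The function name is hypothetical.

```python
def prob_at_least_one_error(per_call_accuracy: float, calls: int) -> float:
    """P(at least one failure) = 1 - accuracy ** calls, assuming independent calls."""
    return 1.0 - per_call_accuracy ** calls


if __name__ == "__main__":
    for n in (1, 100, 1000):
        print(n, round(prob_at_least_one_error(0.999, n), 3))
```

Under that independence assumption, the chance of at least one error is about 10% across 100 calls and about 63% across 1,000 calls, which is why a deterministic check on every output matters more than a small gain in model accuracy.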

Instead of relying solely on human oversight, AI governance will evolve into a system where higher-level "governor" agents audit and regulate other AIs. These specialized agents will manage the core programming, permissions, and ethical guidelines of their subordinates.

The most effective AI architecture for complex tasks involves a division of labor. An LLM handles high-level strategic reasoning and goal setting, providing its intent in natural language. Specialized, efficient algorithms then translate that strategic intent into concrete, tactical actions.

As AI models execute tasks via function calling, their internal state is insufficient for reliable, repeatable business outcomes. They must integrate with external systems, such as business process management systems (BPMS), to become predictable "runtimes" that deliver consistent results despite prompt failures or hallucinations.