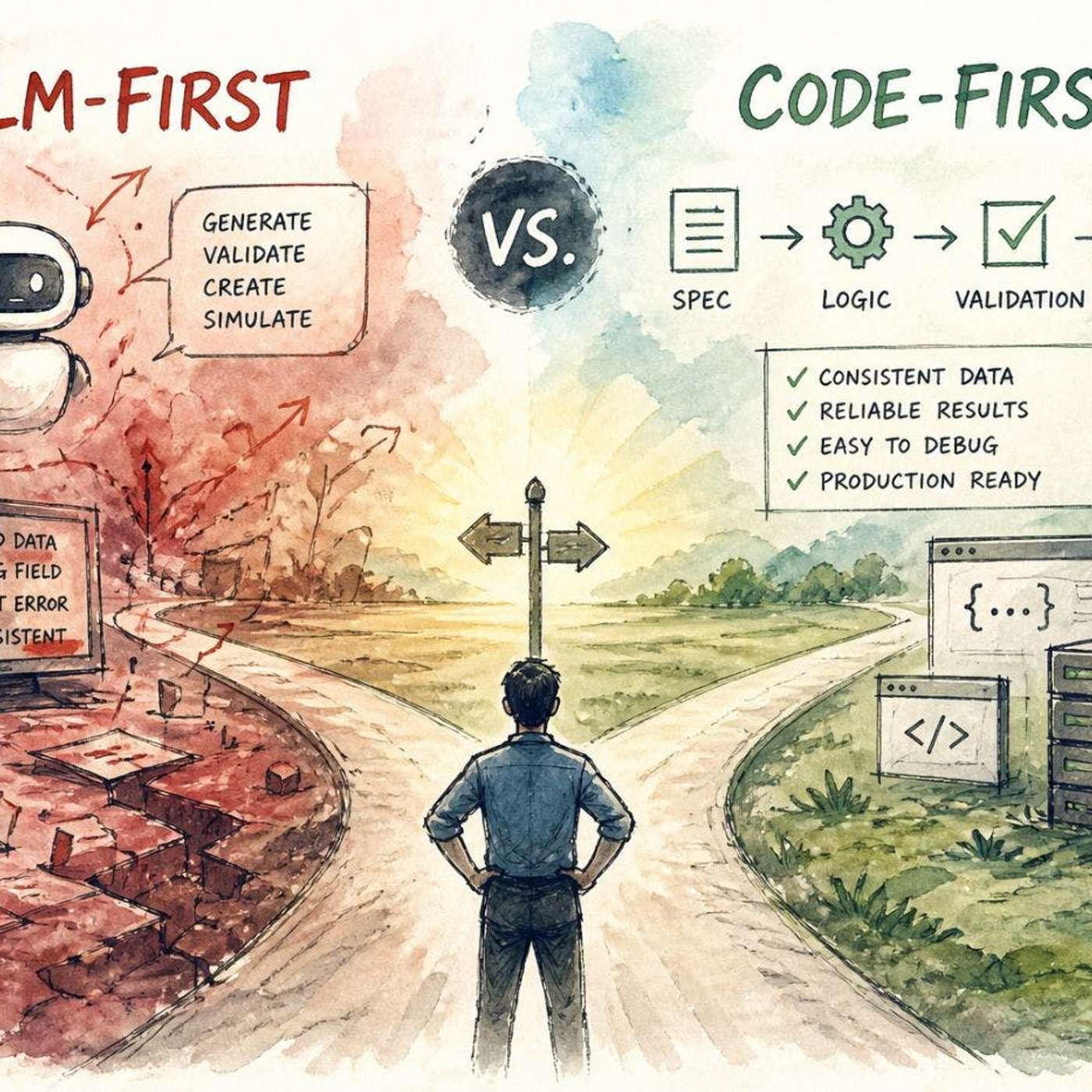

Don't give LLMs full control. Use deterministic code for core logic, validation, and rule enforcement. Delegate to the LLM only the tasks that require flexibility or an understanding of unstructured input, and treat it as a specialized component rather than the entire system.
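A minimal sketch of that split, assuming a hypothetical refund-request flow. The `extract_with_llm` function is a stub standing in for a real model call, and `MAX_REFUND`, `RefundRequest`, and `parse_refund` are illustrative names. The model only turns free text into JSON. Plain code validates the result and enforces the business rule.

```python
import json
from dataclasses import dataclass
from typing import Callable

MAX_REFUND = 500.0  # hypothetical business rule: lives in code, not in the prompt


@dataclass
class RefundRequest:
    order_id: str
    amount: float


def extract_with_llm(message: str) -> str:
    """Hypothetical stand-in for an LLM call that turns free text into JSON."""
    return '{"order_id": "A-1001", "amount": 120.0}'


def parse_refund(message: str, extract: Callable[[str], str] = extract_with_llm) -> RefundRequest:
    # The LLM only handles the unstructured input; malformed JSON raises ValueError.
    raw = json.loads(extract(message))
    if not isinstance(raw, dict):
        raise ValueError("expected a JSON object")
    order_id = raw.get("order_id")
    amount = raw.get("amount")
    # Deterministic validation: the output must match the expected schema.
    if not isinstance(order_id, str) or not order_id:
        raise ValueError("missing order_id")
    if isinstance(amount, bool) or not isinstance(amount, (int, float)) or amount <= 0:
        raise ValueError("invalid amount")
    # Deterministic rule enforcement: the model cannot talk its way past this limit.
    if amount > MAX_REFUND:
        raise ValueError(f"refund exceeds limit of {MAX_REFUND}")
    return RefundRequest(order_id, float(amount))


print(parse_refund("Hi, I'd like my $120 back for order A-1001"))
```

Passing the extractor in as a parameter also makes the deterministic part testable without calling a model.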

Related Insights

An LLM shouldn't do math internally any more than a human would. The most intelligent AI systems will be those that know when to call specialized, reliable tools—like a Python interpreter or a search API—instead of attempting to internalize every capability from first principles.
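A small illustration of tool routing, under stated assumptions. `fake_llm` is a hypothetical stub that returns a tool request instead of an answer. The `calculator` tool evaluates basic arithmetic deterministically by walking Python's `ast` tree, so it never calls `eval`. The tool registry and message shape are invented for the example and do not correspond to any particular vendor's tool-calling API.

```python
import ast
import operator

# Deterministic "calculator tool": supports +, -, *, / and unary minus on numbers only.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.USub: operator.neg,
}


def calculator(expr: str) -> float:
    def ev(node):
        if isinstance(node, ast.Expression):
            return ev(node.body)
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.left), ev(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.operand))
        raise ValueError("unsupported expression")

    return ev(ast.parse(expr, mode="eval"))


TOOLS = {"calculator": calculator}


def fake_llm(question: str) -> dict:
    """Hypothetical model output: rather than answering, it requests a tool call."""
    return {"tool": "calculator", "input": "1234 * 5678"}


def answer(question: str):
    decision = fake_llm(question)
    tool = TOOLS[decision["tool"]]  # an unknown tool name raises KeyError
    return tool(decision["input"])


print(answer("What is 1234 times 5678?"))  # 7006652
```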

An 'LLM-first' approach, where the model handles core logic, creates impressive demos but lacks production reliability. A 'code-first' approach, using code for structure and LLMs for specific tasks, is less flashy but proves robust and debuggable in real-world applications.

High productivity isn't about using AI for everything. It's a disciplined workflow: breaking a task into sub-problems, using an LLM for high-leverage parts like scaffolding and tests, and reserving human focus for the core implementation. This avoids the sunk cost of forcing AI on unsuitable tasks.

To ensure reliability in healthcare, ZocDoc doesn't give LLMs free rein. It wraps them in a hybrid system where traditional, deterministic code orchestrates the AI's tasks, sets firm boundaries, and knows when to hand off to a human, preventing the 'praying for the best' approach common with direct LLM use.
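The pattern of code orchestrating the model, setting boundaries, and handing off to a human can be sketched as a router. This is not ZocDoc's actual design. It is a hypothetical scheduling assistant in which the intent list, the 0.8 confidence floor, the urgent-keyword check, and the `classify_with_llm` stub are all illustrative assumptions. A real system would need far more robust urgency detection than keyword matching.

```python
from dataclasses import dataclass

ALLOWED_INTENTS = {"book_appointment", "cancel_appointment", "find_provider"}
CONFIDENCE_FLOOR = 0.8  # hypothetical threshold
URGENT_KEYWORDS = ("chest pain", "can't breathe", "suicidal")  # illustrative only


@dataclass
class Classification:
    intent: str
    confidence: float


def classify_with_llm(text: str) -> Classification:
    """Hypothetical stub for an LLM intent classifier."""
    if "cancel" in text.lower():
        return Classification("cancel_appointment", 0.93)
    return Classification("other", 0.4)


def route(text: str) -> str:
    # Hard boundary checked in code before the model is consulted at all.
    if any(k in text.lower() for k in URGENT_KEYWORDS):
        return "handoff:human_urgent"
    result = classify_with_llm(text)
    # Code, not the model, decides whether the output is trustworthy enough to act on.
    if result.intent not in ALLOWED_INTENTS or result.confidence < CONFIDENCE_FLOOR:
        return "handoff:human"
    return f"workflow:{result.intent}"


print(route("Please cancel my Tuesday visit"))
```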

For critical enterprise functions like financial modeling, 99.9% accuracy from a probabilistic LLM is unacceptable. Platforms like Salesforce's Agentforce 360 address this by layering deterministic logic and guardrails on top of the AI, ensuring compliance and preventing costly errors where even a 0.1% failure rate is too high.

Separate the AI's roles. Use an AI assistant to write reliable, deterministic code that structures the data (e.g., pulling Slack messages via API). Then apply a live AI model only to the subjective task, such as categorizing message urgency. This hybrid approach yields a more robust and controllable system.
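A minimal sketch of that hybrid, assuming the raw messages have already been fetched into plain dictionaries. No Slack API calls are shown, and the record fields are assumptions. `rate_urgency_with_llm` is a hypothetical stub for the single subjective step. Everything else is deterministic, including constraining the model's answer to a fixed label set.

```python
from dataclasses import dataclass


@dataclass
class Message:
    channel: str
    user: str
    text: str


URGENCY_LABELS = {"high", "medium", "low"}


def structure_messages(raw: list[dict]) -> list[Message]:
    """Deterministic step: normalize raw records into typed objects."""
    out = []
    for r in raw:
        text = (r.get("text") or "").strip()
        if not text:  # skip empty or whitespace-only messages
            continue
        out.append(Message(r.get("channel", "unknown"), r.get("user", "unknown"), text))
    return out


def rate_urgency_with_llm(text: str) -> str:
    """Hypothetical stub for the one subjective step an LLM would handle."""
    return "high" if "down" in text.lower() else "low"


def triage(raw: list[dict]) -> dict[str, list[Message]]:
    buckets = {label: [] for label in URGENCY_LABELS}
    for msg in structure_messages(raw):
        label = rate_urgency_with_llm(msg.text).strip().lower()
        if label not in URGENCY_LABELS:  # code constrains the model's output space
            label = "medium"
        buckets[label].append(msg)
    return buckets
```

Because the fetching and normalizing code is ordinary Python, it can be tested once and trusted, while the stubbed classifier can be swapped for a real model call later.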

Building reliable AI agents for finance, where accuracy is critical, requires moving beyond pure LLMs. Xero uses a hybrid system combining LLM-driven workflows with programmatic code and deep domain knowledge to ensure control and reliability that LLMs inherently lack.

Relying solely on natural language prompts like 'always do this' is unreliable for enterprise AI. LLMs struggle with deterministic logic. Salesforce developed 'AgentForce Script,' a dedicated language to enforce rules and ensure consistent, repeatable performance for critical business workflows, blending it with LLM reasoning.
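To make the contrast concrete, here is a generic Python sketch of enforcing a rule in code rather than in the prompt. It does not show AgentForce Script or its syntax. The 10% discount cap, the footer text, the fallback reply, and the `draft_reply_with_llm` stub are hypothetical. Each rule is a plain predicate, so the same draft always produces the same verdict.

```python
import re
from typing import Callable

MAX_DISCOUNT_PCT = 10  # hypothetical policy
FOOTER = "Terms and conditions apply."
FALLBACK = "Thanks for reaching out. A specialist will confirm the pricing options available to you."


def draft_reply_with_llm(request: str) -> str:
    """Hypothetical LLM draft. A prompt saying 'never exceed 10%' does not guarantee compliance."""
    return "Great news! We can offer you a 25% discount on your renewal."


def discount_within_cap(text: str) -> bool:
    # Every percentage mentioned in the draft must be at or below the cap.
    return all(int(p) <= MAX_DISCOUNT_PCT for p in re.findall(r"(\d+)\s*%", text))


RULES: list[Callable[[str], bool]] = [discount_within_cap]


def respond(request: str, draft: Callable[[str], str] = draft_reply_with_llm) -> str:
    text = draft(request)
    if not all(rule(text) for rule in RULES):
        text = FALLBACK  # deterministic fallback instead of hoping the model behaves
    return f"{text} {FOOTER}"  # the footer is appended by code, every time


print(respond("Can I get a discount on my renewal?"))
```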

To deploy LLMs in high-stakes environments like finance, combine them with deterministic checks. For example, use a traditional algorithm to calculate cash flow and surface the LLM's answer only if it falls within an acceptable range of that result. This keeps hallucinated figures from reaching users and improves reliability.
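A minimal sketch of the range check, assuming "cash flow" means total inflows minus total outflows and "acceptable range" means within 1% of the computed value. Both definitions are simplifying assumptions. The LLM's figure is passed in as `llm_value`, and the model call itself is not shown.

```python
from typing import Optional


def net_cash_flow(inflows: list[float], outflows: list[float]) -> float:
    """Deterministic reference calculation: total inflows minus total outflows."""
    return sum(inflows) - sum(outflows)


def checked_answer(
    llm_value: float,
    inflows: list[float],
    outflows: list[float],
    rel_tol: float = 0.01,
) -> Optional[float]:
    """Surface the LLM's figure only if it is within rel_tol of the computed value."""
    expected = net_cash_flow(inflows, outflows)
    # Floor the band so a near-zero expected value doesn't shrink it to nothing.
    tolerance = rel_tol * max(abs(expected), 1.0)
    if abs(llm_value - expected) <= tolerance:
        return llm_value
    return None  # withhold the answer; the caller can show `expected` or escalate


inflows = [50_000.0, 12_000.0]
outflows = [15_000.0, 5_000.0]
print(checked_answer(41_900.0, inflows, outflows))  # 41900.0
print(checked_answer(30_000.0, inflows, outflows))  # None
```

Returning `None` rather than silently substituting the computed value keeps the decision about what to show the user in the calling code.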

Instead of treating a complex AI system like an LLM as a single black box, build it in a componentized way by separating functions like retrieval, analysis, and output. This allows for isolated testing of each part, limiting the surface area for bias and simplifying debugging.
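One way to sketch the componentized layout, under stated assumptions. `retrieve` uses naive keyword overlap as a placeholder for a real retriever. `analyze` is a hypothetical stub marking where an LLM call would go. `format_output` is deterministic and attaches source IDs. Each function has a narrow interface, so each can be tested on its own.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Doc:
    id: str
    text: str


def retrieve(query: str, corpus: list[Doc], k: int = 2) -> list[Doc]:
    """Retrieval component: keyword-overlap scoring (placeholder for a real retriever)."""
    terms = set(query.lower().split())
    scored = [(len(terms & set(d.text.lower().split())), d) for d in corpus]
    scored = [(s, d) for s, d in scored if s > 0]
    scored.sort(key=lambda sd: sd[0], reverse=True)  # stable: ties keep corpus order
    return [d for _, d in scored[:k]]


def analyze(query: str, docs: list[Doc]) -> str:
    """Analysis component: where an LLM call would go (hypothetical stub)."""
    return f"{len(docs)} relevant document(s) found for '{query}'"


def format_output(summary: str, docs: list[Doc]) -> dict:
    """Output component: deterministic formatting with source attribution."""
    return {"summary": summary, "sources": [d.id for d in docs]}


def pipeline(
    query: str,
    corpus: list[Doc],
    analyzer: Callable[[str, list[Doc]], str] = analyze,
) -> dict:
    docs = retrieve(query, corpus)
    return format_output(analyzer(query, docs), docs)
```

Because the analyzer is injected, the retrieval and formatting stages can be checked against fixed inputs even while the model-backed stage changes.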