To avoid frantic, high-pressure migrations when an embedding model is deprecated, teams should treat the embedding model as a versioned dependency that needs planned updates, like any other software library. This mindset turns an upgrade from an emergency scramble into routine maintenance that is predictable and manageable.

The most common and robust method for migrating embedding models is to build a completely new vector index in parallel using the new model. The old index keeps serving live traffic while the new one is built and validated via shadow scoring. Traffic is then cut over with an alias swap, so the switch itself requires no downtime.
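The validate-then-swap flow can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production implementation: `AliasRegistry` is a hypothetical in-memory stand-in for a search engine's alias table, `search_old` and `search_new` are hypothetical callables that return ranked document IDs, and shadow scoring is approximated here as mean recall@k against a small set of labeled judgments.

```python
from threading import Lock


class AliasRegistry:
    """Hypothetical in-memory stand-in for a search engine's alias table."""

    def __init__(self):
        self._targets = {}
        self._lock = Lock()

    def resolve(self, alias):
        with self._lock:
            return self._targets[alias]

    def swap(self, alias, new_index):
        # Repoint the alias in a single step so readers always see a valid target.
        with self._lock:
            old = self._targets.get(alias)
            self._targets[alias] = new_index
            return old


def recall_at_k(retrieved, relevant, k):
    """Fraction of the relevant documents found in the top k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & set(relevant)) / len(relevant)


def shadow_score(queries, search_old, search_new, judgments, k=10):
    """Mean recall@k of the old and new index over the same queries."""
    old_total = new_total = 0.0
    for q in queries:
        old_total += recall_at_k(search_old(q), judgments[q], k)
        new_total += recall_at_k(search_new(q), judgments[q], k)
    return old_total / len(queries), new_total / len(queries)


def promote_if_better(registry, alias, new_index, old_recall, new_recall):
    """Swap the alias only when the new index is at least as good (assumed gate)."""
    if new_recall >= old_recall:
        registry.swap(alias, new_index)
        return True
    return False
```

Because clients only ever query the alias, the cutover is a single pointer change, and rolling back is the same operation in reverse.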

A typical ranking A/B test compares two re-rankers over the same set of candidate results. Changing the embedding model, however, alters the retrieval step itself, so the two versions can return largely different sets of documents for the same query. This complicates analysis, because any performance difference reflects both how well each model ranks and which documents it retrieved in the first place.
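One way to see how far the two arms diverge is to measure the overlap of their top-k results per query before comparing outcome metrics. The sketch below uses Jaccard overlap; the function name and the choice of metric are illustrative assumptions, not something prescribed by the approach described here.

```python
def jaccard_at_k(results_a, results_b, k=10):
    """Jaccard overlap between the top-k document IDs of two result lists."""
    a, b = set(results_a[:k]), set(results_b[:k])
    if not a and not b:
        return 1.0  # two empty result sets are treated as identical
    return len(a & b) / len(a | b)


# Hypothetical document IDs returned by each model for the same query.
old_model_hits = ["d1", "d2", "d3"]
new_model_hits = ["d3", "d4", "d5"]
overlap = jaccard_at_k(old_model_hits, new_model_hits, k=3)
```

A low overlap signals that metric differences between the arms are driven largely by which documents were retrieved, not only by how they were ordered.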

For systems where a full parallel index is too expensive, a gradual migration is possible. Each document stores two vector fields (one for the old model, one for the new), and each query is run against both fields. The two result lists are then merged using Reciprocal Rank Fusion (RRF), which relies only on rank positions and therefore works even though the two models' similarity scores are not directly comparable.
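The fusion step is small enough to sketch directly. RRF gives each document a score of $\sum_i 1/(k + \text{rank}_i)$ across the lists it appears in. The version below is a minimal sketch that assumes each ranked list holds unique document IDs and uses `k = 60`, a commonly used default; the tie-break on document ID is an added assumption to keep the output deterministic.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked lists of document IDs using Reciprocal Rank Fusion.

    Only rank positions are used, so the raw similarity scores of the
    underlying models never need to be on a comparable scale.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken by document ID.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical top results from the old-model and new-model vector fields.
old_field_hits = ["a", "b", "c"]
new_field_hits = ["b", "d", "a"]
fused = reciprocal_rank_fusion([old_field_hits, new_field_hits])
```

Documents that rank well in both fields rise to the top, while documents found by only one model still appear further down the merged list.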

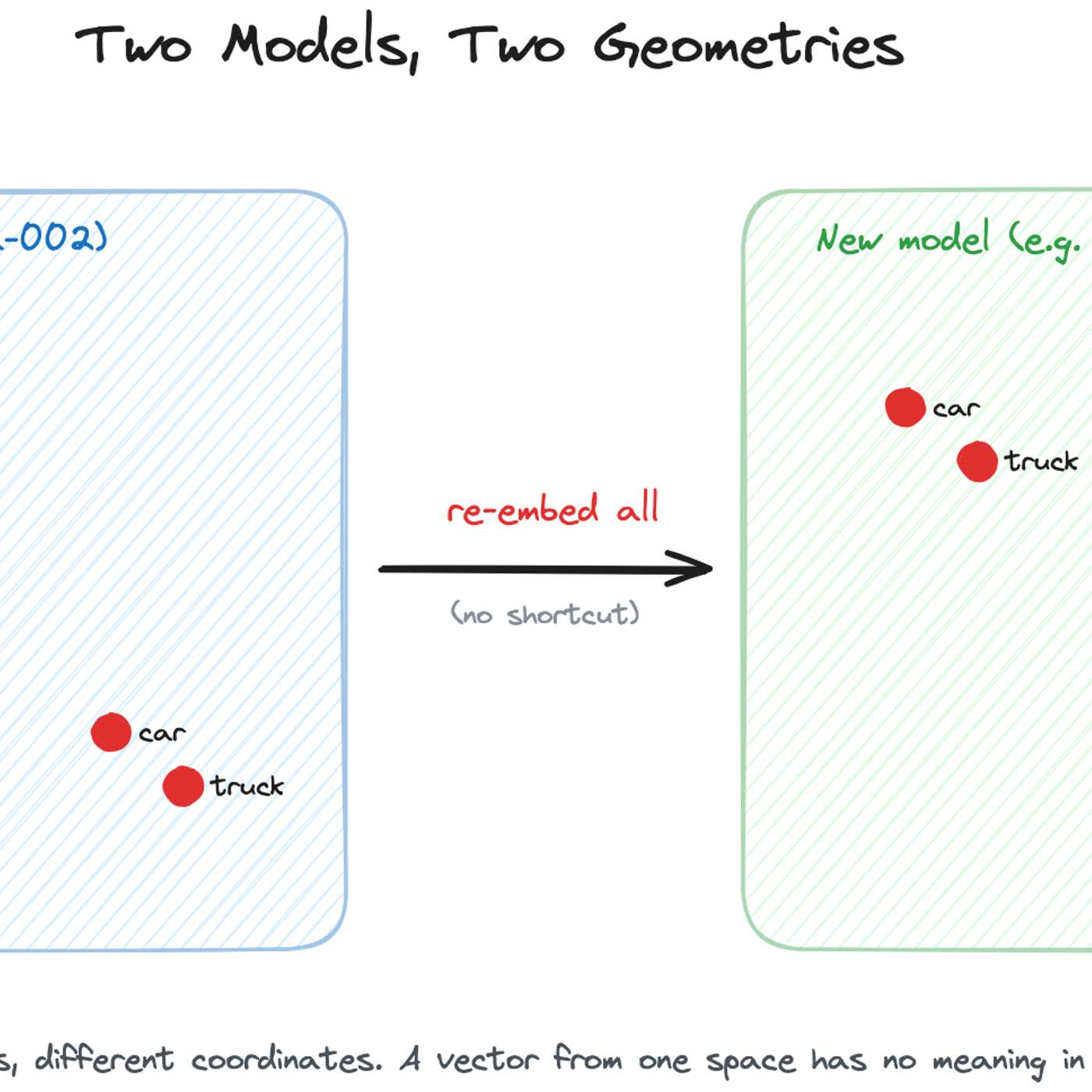

While academic research explores techniques like 'embedding space alignment' to avoid costly re-embeddings, no major company has publicly confirmed using them in production. Industry accounts from Uber, Pinterest, and Google all describe full, parallel re-embedding as the current, practical standard, highlighting a significant gap between research and real-world adoption.