To avoid frantic, high-pressure migrations when an embedding model is deprecated, teams should treat the embedding model as a versioned dependency that requires planned updates, like any other software library. This mindset turns the upgrade from an emergency scramble into routine, scheduled maintenance, making it predictable and manageable.
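One way to picture the "model as a dependency" idea is to pin the embedding model in code, much like a library version, and check its retirement date on a schedule. The sketch below is only an illustration: `EmbeddingModelPin`, `needs_migration_plan`, the model name `example-embed`, the sunset date, and the 90-day lead time are all hypothetical and not taken from any provider or from the episode.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class EmbeddingModelPin:
    """A pinned embedding model, recorded like a library version (hypothetical schema)."""
    name: str
    version: str
    dimensions: int
    sunset: Optional[date] = None  # provider-announced retirement date, if known


def days_until_sunset(pin: EmbeddingModelPin, today: date) -> Optional[int]:
    """Return the days left before retirement, or None if no date is announced."""
    if pin.sunset is None:
        return None
    return (pin.sunset - today).days


def needs_migration_plan(pin: EmbeddingModelPin, today: date, lead_days: int = 90) -> bool:
    """Flag the pin once the sunset date falls inside the planning window."""
    remaining = days_until_sunset(pin, today)
    return remaining is not None and remaining <= lead_days


# Hypothetical pin; the name, version, and date are made up for illustration.
PINNED = EmbeddingModelPin("example-embed", "v2", 768, sunset=date(2026, 6, 30))
```

Running `needs_migration_plan(PINNED, date.today())` in a scheduled job or CI check would surface the upgrade well before the deadline, which is the same role a dependency-update bot plays for ordinary libraries.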

Related Insights

Instead of committing to a single AI tool, manage them like a team. Maintain a spreadsheet of the best-performing models for specific tasks (coding, images, etc.) and update it monthly. This approach, where 'AI takes the job of the previous AI,' ensures you're always using the best tool on the market.

Instead of chasing the latest hyped AI model, focus on building modular, system-based workflows. This allows you to easily plug in new, better models as they are released, instantly upgrading your capabilities without having to start over.

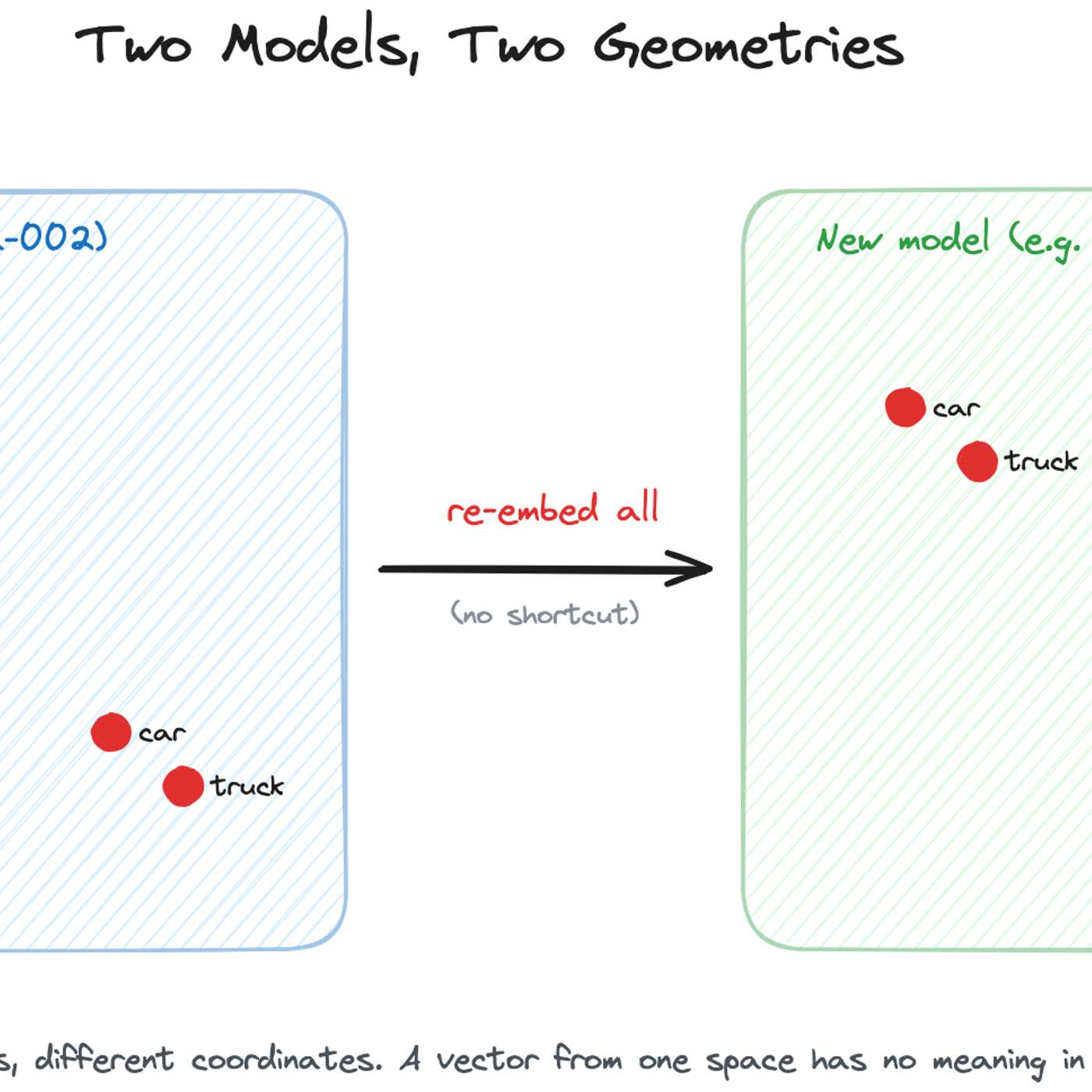

While academic research explores techniques like 'embedding space alignment' to avoid costly re-embeddings, no major company has publicly confirmed using them in production. Industry accounts from Uber, Pinterest, and Google all describe full, parallel re-embedding as the current, practical standard, highlighting a significant gap between research and real-world adoption.

AI companies like OpenAI have shifted to monthly, incremental model updates. This frequent but less impactful release cadence means developers no longer feel strong loyalty to any specific model and simply switch to the newest version available, treating major AI models like commodities.

Enterprises will shift from relying on a single large language model to using orchestration platforms. These platforms will allow them to 'hot swap' various models—including smaller, specialized ones—for different tasks within a single system, optimizing for performance, cost, and use case without being locked into one provider.
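A minimal sketch of what "hot swapping" could look like inside an application, assuming each model is wrapped as a plain function from prompt to text. `ModelRouter`, the task names, and the two stand-in summarizers are hypothetical; a real orchestration platform would add authentication, retries, and cost tracking on top of this.

```python
from typing import Callable, Dict

# Any callable that maps a prompt to a response can act as a "model" here.
ModelFn = Callable[[str], str]


class ModelRouter:
    """Maps task names to models so a model can be replaced without touching callers."""

    def __init__(self) -> None:
        self._routes: Dict[str, ModelFn] = {}

    def register(self, task: str, model: ModelFn) -> None:
        # Registering an existing task again replaces its model (the "hot swap").
        self._routes[task] = model

    def run(self, task: str, prompt: str) -> str:
        if task not in self._routes:
            raise KeyError(f"no model registered for task {task!r}")
        return self._routes[task](prompt)


# Stand-ins for real provider calls; both are placeholders for illustration.
def small_summarizer(prompt: str) -> str:
    return f"[small] {prompt[:20]}"


def large_summarizer(prompt: str) -> str:
    return f"[large] {prompt[:20]}"
```

Callers only ever use `router.run("summarize", text)`, so swapping `small_summarizer` for `large_summarizer` is a single `register` call rather than a change to every call site.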

With new foundation models launching constantly, end-users don't care about the specific model name. A durable AI application should be model-agnostic, using an intelligent agent to select the best model for a given task. This focuses the product on the user's desired outcome, not the underlying tech.

The underlying infrastructure for AI agents ('harnesses') becomes obsolete roughly every six months due to rapid advances in AI models. At Notion, this means completely rewriting the harness multiple times a year, demanding a culture comfortable with constantly rebuilding core systems and discarding previous assumptions.

The most common and robust method for migrating embedding models is to build a completely new vector index in parallel using the new model. While the old index serves live traffic, the new one is built, validated via shadow scoring, and then traffic is cut over with an alias swap, ensuring zero downtime.
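The parallel-index flow described above can be sketched with an in-memory toy. Everything here is an assumption made for illustration: `VectorIndex`, `AliasTable`, `migrate`, the toy embedders, and the 0.6 threshold are hypothetical, and "shadow scoring" is approximated as top-k overlap between old and new results, which is only one possible validation metric.

```python
import math
from typing import Callable, Dict, List, Sequence

Vector = List[float]
Embedder = Callable[[str], Vector]


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


class VectorIndex:
    """Brute-force index tied to one embedding model."""

    def __init__(self, embed: Embedder) -> None:
        self.embed = embed
        self.vectors: Dict[str, Vector] = {}

    def add(self, doc_id: str, text: str) -> None:
        self.vectors[doc_id] = self.embed(text)

    def search(self, query: str, k: int = 2) -> List[str]:
        q = self.embed(query)
        ranked = sorted(self.vectors, key=lambda d: cosine(q, self.vectors[d]), reverse=True)
        return ranked[:k]


def shadow_overlap(old: VectorIndex, new: VectorIndex, queries: Sequence[str], k: int = 2) -> float:
    """Mean fraction of top-k ids that both indexes return for the same queries."""
    scores = [len(set(old.search(q, k)) & set(new.search(q, k))) / k for q in queries]
    return sum(scores) / len(scores)


class AliasTable:
    """Readers resolve an alias, so a cutover is one pointer update."""

    def __init__(self) -> None:
        self._aliases: Dict[str, VectorIndex] = {}

    def point(self, alias: str, index: VectorIndex) -> None:
        self._aliases[alias] = index

    def resolve(self, alias: str) -> VectorIndex:
        return self._aliases[alias]


def migrate(aliases: AliasTable, alias: str, docs: Dict[str, str], new_embed: Embedder,
            queries: Sequence[str], min_overlap: float = 0.6) -> bool:
    """Build the new index beside the old one, validate it, then swap the alias."""
    old = aliases.resolve(alias)          # keeps serving live traffic during the build
    new = VectorIndex(new_embed)
    for doc_id, text in docs.items():     # full re-embedding with the new model
        new.add(doc_id, text)
    if shadow_overlap(old, new, queries) < min_overlap:
        return False                      # validation failed: alias is left untouched
    aliases.point(alias, new)             # cutover
    return True


# Toy embedders standing in for an old and a new model (different dimensions).
def old_embed(text: str) -> Vector:
    return [float(text.count("a")), float(text.count("b")), 1.0]


def new_embed(text: str) -> Vector:
    return [2.0 * text.count("a"), 2.0 * text.count("b"), 2.0, 0.0]
```

Because queries always go through `aliases.resolve(...)`, a failed validation leaves the old index in place, and rolling back after a cutover is just pointing the alias at the old index again.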

To fully leverage rapidly improving AI models, companies cannot just plug in new APIs. Notion's co-founder reveals they completely rebuild their AI system architecture every six months, designing it around the specific capabilities of the latest models to avoid being stuck with suboptimal implementations.

Despite constant new model releases, enterprises don't frequently switch LLMs. Prompts and workflows become highly optimized for a specific model's behavior, creating significant switching costs. Performance gains of a new model must be substantial to justify this re-engineering effort.