Get your free personalized podcast brief

We scan new podcasts and send you the top 5 insights daily.

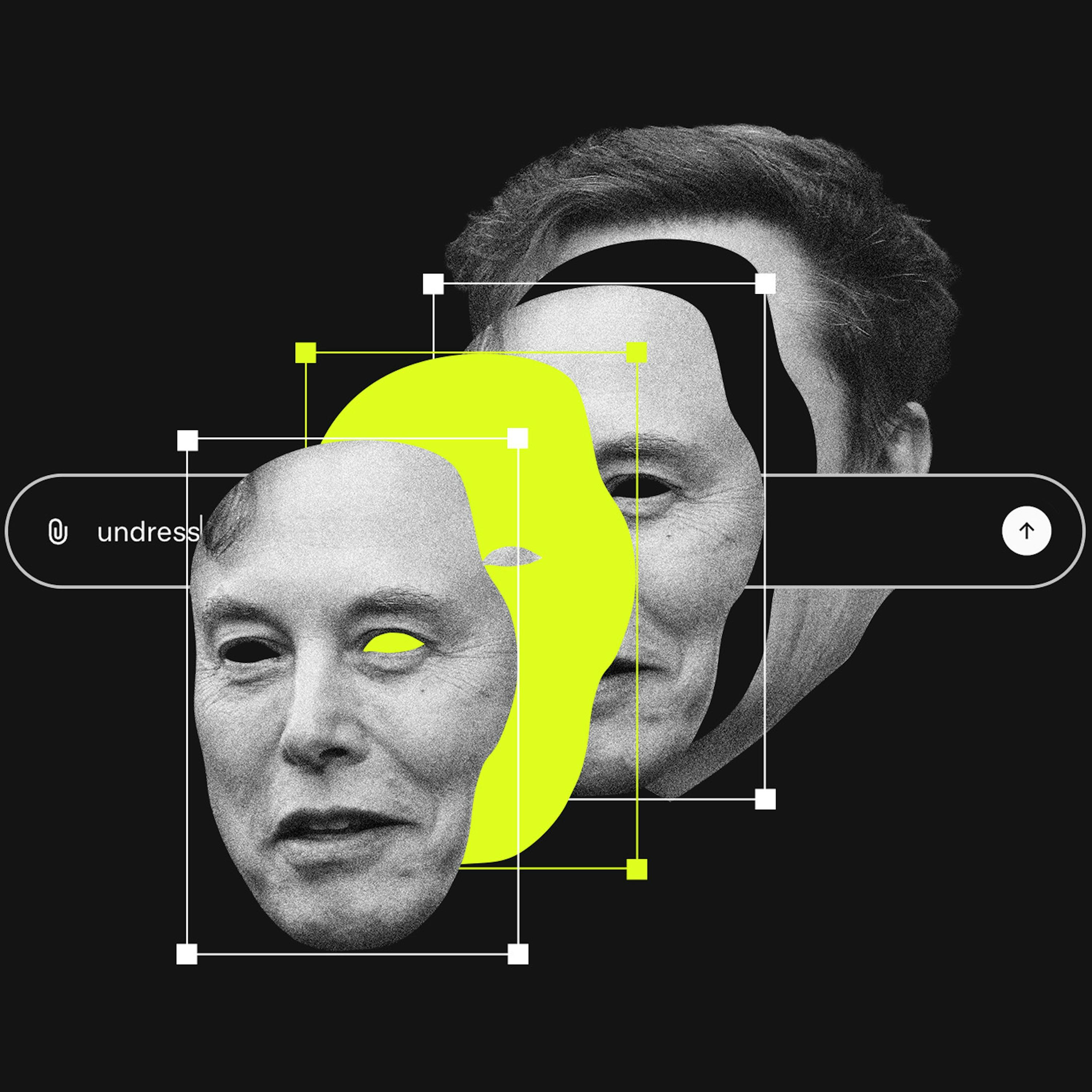

The Trust & Safety field, once a powerful internal voice for user rights and ethical principles, has been systematically weakened. To appease political pressure, tech companies have pushed out vocal advocates, reducing the role to a mere compliance function and leaving platform governance to the whims of company leaders.

Related Insights

Platform decay isn't inevitable; it occurred because four historical checks and balances were removed. These were: robust antitrust enforcement preventing monopolies, regulation imposing penalties for bad behavior, a powerful tech workforce that could refuse unethical tasks, and technical interoperability that gave users control via third-party tools.

Like banks in the financial sector, tech companies are increasingly pressured to act as a de facto arm of the government, particularly on issues like censorship. This has led to a power struggle, with some tech leaders now publicly pre-committing to resist future government requests.

As major platforms abdicate trust and safety responsibilities, demand grows for user-centric solutions. This fuels interest in decentralized networks and "middleware" that empower communities to set their own content standards, a move away from centralized, top-down platform moderation.

Tech leaders, while extraordinary technologists and entrepreneurs, are not relationship experts, philosophers, or ethicists. Society shouldn't expect them to arrive at the correct ethical judgments on complex issues, highlighting the need for democratic, regulatory input.

Departures of senior safety staff from top AI labs highlight a growing internal tension. Employees cite concerns that the pressure to commercialize products and launch features like ads is eroding the original focus on safety and responsible development.

Platforms designed for frictionless speed prevent users from taking a "trust pause": a moment to critically assess whether a person, product, or piece of information is worthy of trust. By removing this reflective step in the name of efficiency, technology accelerates poor decision-making and makes users more vulnerable to misinformation.

The existence of internal teams like Anthropic's "Societal Impacts Team" serves a dual purpose. Beyond their stated mission, they function as a strategic tool for AI companies to demonstrate self-regulation, thereby creating a political argument that stringent government oversight is unnecessary.

Previously, scarce and mission-driven tech workers could refuse to build features that harmed users. Mass layoffs created a labor surplus, removing workers' leverage and allowing companies to push through user-hostile changes without internal resistance.

By employing or bankrolling a majority of AI researchers, large tech firms dictate the research agenda. They also censor or fire researchers, such as Dr. Timnit Gebru at Google, whose work exposes the harms and limitations of their commercial models.

The intense state interest in regulating tech like crypto and AI is a response to the tech sector's rise to a power level that challenges the state. The public narrative is safety, but the underlying motivation is maintaining control over money, speech, and ultimately, the population.