Contrary to conventional UX wisdom, introducing friction in a security product can be beneficial. A confirmation step, for instance, isn't bad UX but 'governance made visible.' This friction builds user confidence and trust by demonstrating that the security system is actively working.

Related Insights

The obsession with removing friction is often wrong. When users have low intent or understanding, the goal isn't to speed them up but to build their comprehension of your product's value. If software asks you to make a decision you don't understand, it makes you feel stupid, which is the ultimate failure.

To build trust, users need Awareness (know when AI is active), Agency (have control over it), and Assurance (confidence in its outputs). This framework, from a former Google DeepMind PM, provides a clear model for designing trustworthy AI experiences by mimicking human trust signals.

To trust an agentic AI, users need to see its work, just as a manager would with a new intern. Design patterns like "stream of thought" (showing the AI reasoning) or "planning mode" (presenting an action plan before executing) make the AI's logic legible and give users a chance to intervene, building crucial trust.
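A "planning mode" of the kind described above can be sketched in a few lines. This is a minimal illustration, not any particular product's implementation: the names `Step`, `Plan`, and `run_with_plan`, and the `approve` callback standing in for the user's review, are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    """One planned action: a human-readable description plus the work itself."""
    description: str
    action: Callable[[], str]


@dataclass
class Plan:
    steps: List[Step]

    def render(self) -> str:
        # Present the plan as a numbered list the user can read before anything runs.
        return "\n".join(f"{i}. {s.description}" for i, s in enumerate(self.steps, 1))


def run_with_plan(plan: Plan, approve: Callable[[str], bool]) -> List[str]:
    """Show the full plan first; execute the steps only if the user approves."""
    if not approve(plan.render()):
        return []  # The user intervened, so nothing is executed.
    return [step.action() for step in plan.steps]


if __name__ == "__main__":
    plan = Plan([
        Step("Draft a reply to the customer", lambda: "drafted"),
        Step("Send the reply", lambda: "sent"),
    ])
    # Hypothetical approval callback: print the plan and approve it.
    results = run_with_plan(plan, lambda text: print(text) is None)
    print(results)
```

The design choice is that nothing executes until the whole plan has been shown, which is the point at which the user can intervene.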

Contrary to the 'minimize steps to value' mantra, adding friction like user questionnaires to onboarding often boosts conversion. By asking users about their goals, you can personalize their experience, make them feel the product is for them, and guide them to the right features, improving funnel completion.

Meticulously crafted design details, even small ones, signal to users that you value their time and experience. This fosters trust, increases perceived value, and builds a stronger affinity for the product, because it works slightly better than, or pleasantly differently from, what users expected.

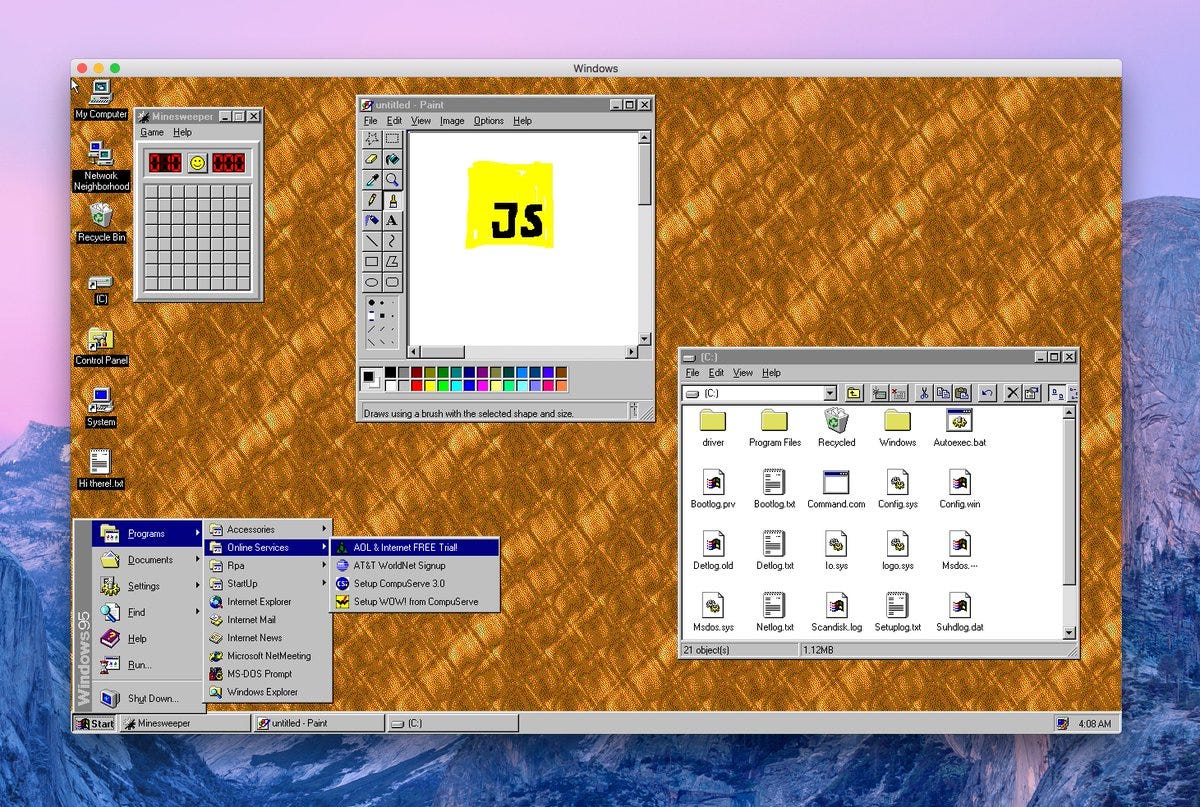

Low-code platforms have a massive opportunity to solve a decades-old security challenge by embedding "secure by default" guardrails. The key is transforming security from a technical hurdle into a configurable UI problem, making it digestible and manageable for the non-technical users who now build applications.

When fintech bank N26 made its login process incredibly fast, users felt it was unsafe. To build trust, the product team had to artificially slow the login down and add visual cues, like a lock animation, demonstrating that sometimes perceived security is more valuable than raw speed.

Drawing from service dog training, building trust requires designing for the edge scenario, not the average use case. A system's value is proven by its ability to handle what goes wrong, not just what goes right. This is where user confidence is truly forged.

AI agents present a UX problem: either grant risky, sweeping permissions or suffer "approval fatigue" by confirming every action. Sandboxing creates a middle ground. The agent can operate autonomously within a secure environment, making it powerful without being dangerous to the host system.
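The middle ground described above can be illustrated as a permission policy: actions inside a designated sandbox directory proceed without a prompt, and anything outside it falls back to asking the user. This is only a sketch of the policy under stated assumptions. The helper names `is_inside` and `authorize_write` and the `ask_user` callback are hypothetical, and a real sandbox would also need OS-level isolation (a container or VM, for example), which this code does not provide.

```python
from pathlib import Path
from typing import Callable


def is_inside(sandbox: Path, target: Path) -> bool:
    """Return True if target resolves to a location within the sandbox directory."""
    sandbox = sandbox.resolve()
    target = target.resolve()  # Collapses ".." segments so traversal cannot escape.
    return target == sandbox or sandbox in target.parents


def authorize_write(sandbox: Path, target: Path,
                    ask_user: Callable[[Path], bool]) -> bool:
    """Auto-approve writes inside the sandbox; ask the user about everything else."""
    if is_inside(sandbox, target):
        return True  # No prompt, so no approval fatigue for routine work.
    return ask_user(target)  # Risky action: require an explicit decision.


if __name__ == "__main__":
    sandbox = Path("/tmp/agent_sandbox")  # Hypothetical sandbox location.
    always_deny = lambda path: False      # Stand-in for a real confirmation dialog.
    print(authorize_write(sandbox, sandbox / "notes" / "a.txt", always_deny))
    print(authorize_write(sandbox, sandbox / ".." / "other.txt", always_deny))
```

Resolving both paths before comparing them means a target such as `sandbox/../other.txt` is treated as outside the sandbox and still triggers a confirmation.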

Platforms designed for frictionless speed prevent users from taking a "trust pause"—a moment to critically assess if a person, product, or piece of information is worthy of trust. By removing this reflective step in the name of efficiency, technology accelerates poor decision-making and makes users more vulnerable to misinformation.