Even for well-resourced languages like French and German, voice interaction models perform noticeably worse than they do for English. Users instinctively speak more slowly and articulate more carefully, which reveals a significant gap in creating natural, conversational experiences for a global user base.

Related Insights

The primary reason voice assistants feel robotic is that they cannot process incoming audio while they are speaking. They get confused by simple interjections like "yeah" or by attempts to interrupt. OpenAI's new "BIDI" model aims to solve this by listening and updating its response in real time, for a more natural conversation.

A one-size-fits-all AI voice fails. For a Japanese healthcare client, ElevenLabs' agent used quick, short responses for younger callers but a calmer, slower style for older callers. This personalization of delivery, not just content, based on demographic context was critical for success.

Voice-to-voice AI models promise more natural, low-latency conversations by processing audio directly. However, they are currently impractical for many high-stakes enterprise applications due to a hallucination rate that can be eight times higher than text-based systems.

While Genspark's calling agent can successfully complete a task and provide a transcript, its noticeable audio delays and awkward handling of interruptions highlight a key weakness. Current voice AI struggles with the subtle, real-time cadence of human conversation, which remains a barrier to broader adoption.

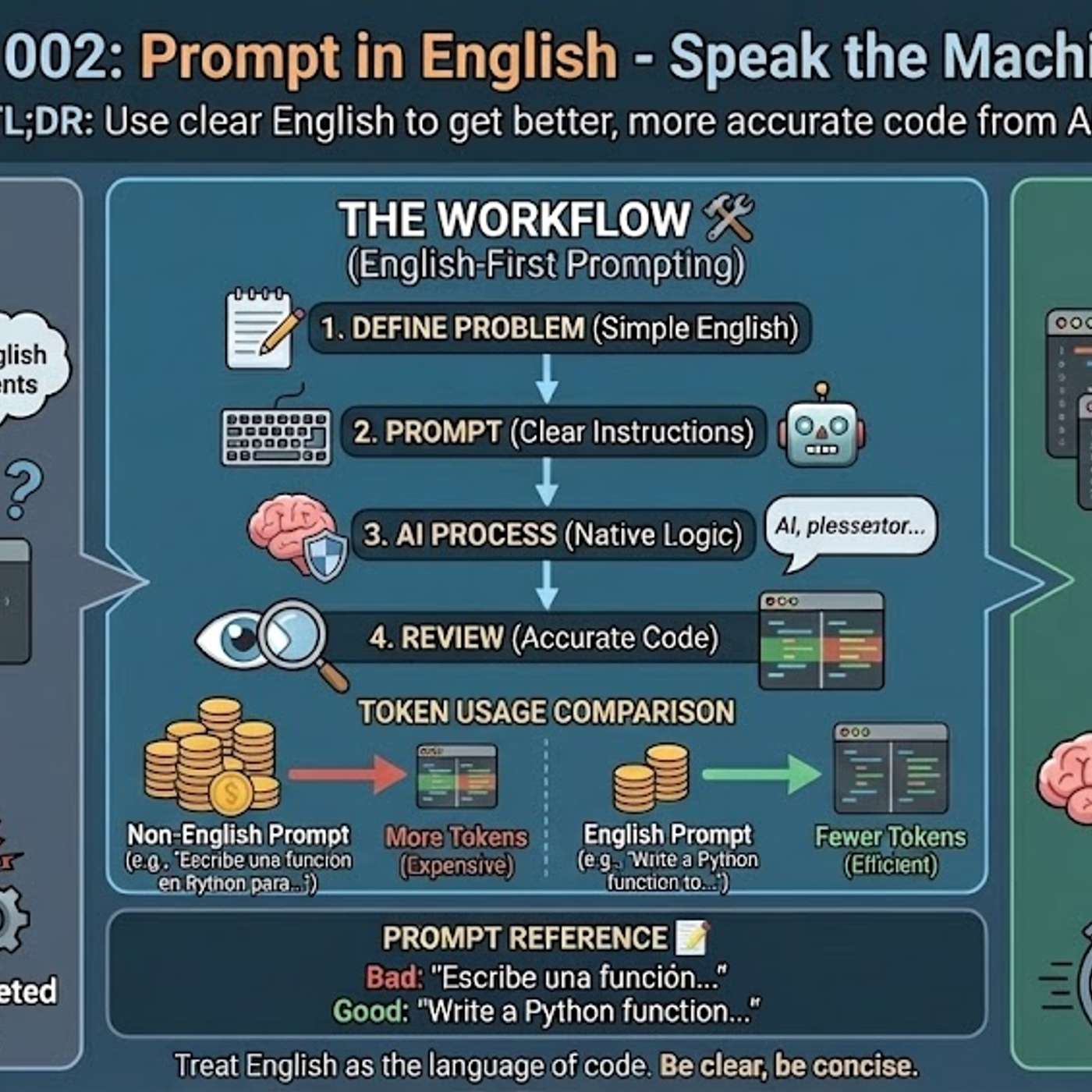

Using languages other than English for technical prompts is inefficient because it forces the AI to perform an intermediate translation. This translation step consumes valuable tokens from the context window, leaving less capacity for detailed instructions and increasing the risk of misinterpretation, which results in weaker solutions.

While most focus on human-to-computer interactions, Crisp.ai's founder argues that significant unsolved challenges and opportunities exist in using AI to improve human-to-human communication. This includes real-time enhancements like making a speaker's audio sound studio-quality with a single click, which directly boosts conversation productivity.

While text-based AI models struggle with non-English languages, the problem is far worse for audio models. The lack of diverse, high-quality audio training data (across ages, genders, and topics) in various languages is a critical bottleneck for companies aiming for global adoption of audio-first AI.

A non-obvious failure mode for voice AI is misinterpreting accented English. A user speaking English with a strong Russian accent might find their speech transcribed directly into Russian Cyrillic. This highlights a complex and frustrating challenge in building robust, inclusive voice models for a global user base.

The magic of ChatGPT's voice mode in a car is that it feels like another person in the conversation. Conversely, Meta's AI glasses failed when translating a menu because they acted like a screen reader, ignoring the human context of how people actually read menus. Context is everything for voice.

Poland's AI lead observes that frontier models like Anthropic's Claude are degrading in their Polish language and cultural abilities. As developers focus on lucrative use cases like coding, they trade off performance in less common languages, creating a major reliability risk for businesses in non-Anglophone regions that depend on these APIs.

![Jesse Zhang - Building Decagon - [Invest Like the Best, EP.443] thumbnail](https://megaphone.imgix.net/podcasts/2cc4da2a-a266-11f0-ac41-9f5060c6f4ef/image/7df6dc347b875d4b90ae98f397bf1cb1.jpg?ixlib=rails-4.3.1&max-w=3000&max-h=3000&fit=crop&auto=format,compress)