The primary reason voice assistants feel robotic is that they cannot process incoming audio while they are speaking. As a result, they get confused by simple interjections like "yeah" or by attempts to interrupt. OpenAI's new "BIDI" model aims to solve this by listening while it speaks and updating its response in real time, for a more natural conversation.

Related Insights

Unlike old 'if-then' chatbots, modern conversational AI can handle unexpected user queries and tangents. Because it is built to be conversational, it can 'riff' and 'vibe' with the user and keep a natural flow when a conversation goes off-script, which makes the interaction feel more human and authentic.

Voice-to-voice AI models promise more natural, low-latency conversations by processing audio directly. However, they are currently impractical for many high-stakes enterprise applications due to a hallucination rate that can be eight times higher than text-based systems.

While Genspark's calling agent can successfully complete a task and provide a transcript, its noticeable audio delays and awkward handling of interruptions highlight a key weakness. Current voice AI struggles with the subtle, real-time cadence of human conversation, which remains a barrier to broader adoption.

OpenAI's update to make its model "less cringe" shows the fight for consumer AI has shifted. As model performance reaches a "good enough" threshold for many users, the personality, tone, and overall user experience—the "vibes"—are becoming the critical differentiators for adoption and loyalty.

While most focus on human-to-computer interactions, Crisp.ai's founder argues that significant unsolved challenges and opportunities exist in using AI to improve human-to-human communication. This includes real-time enhancements like making a speaker's audio sound studio-quality with a single click, which directly boosts conversation productivity.

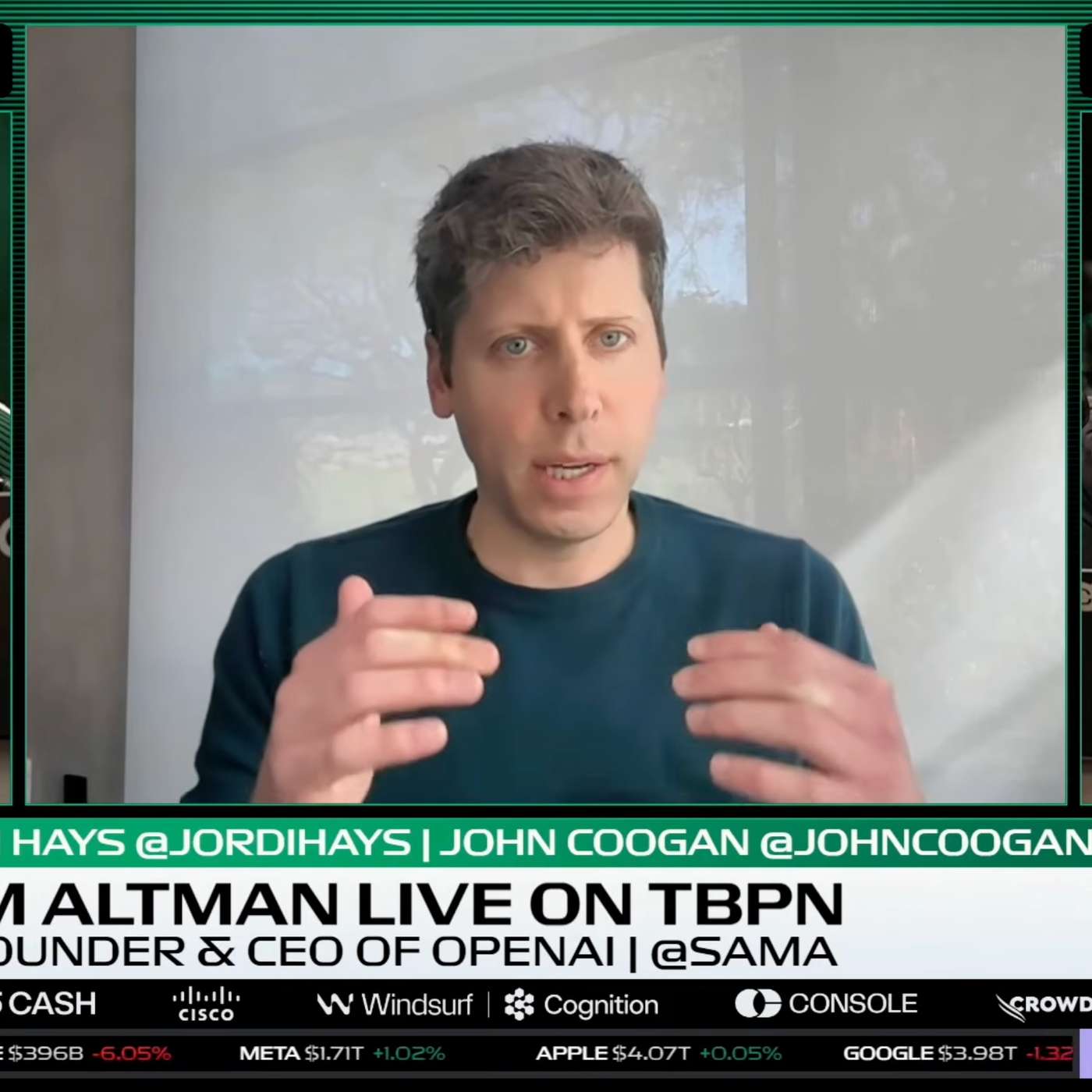

Sam Altman highlights that allowing users to correct an AI model while it's working on a long task is a crucial new capability. This is analogous to correcting a coworker in real-time, preventing wasted effort and enabling more sophisticated outcomes than 'one-shot' generation.

The magic of ChatGPT's voice mode in a car is that it feels like another person in the conversation. Conversely, Meta's AI glasses failed when translating a menu because they acted like a screen reader, ignoring the human context of how people actually read menus. Context is everything for voice.

Advanced models are moving beyond simple prompt-response cycles. New interfaces, such as the one in OpenAI's shopping model, allow users to interrupt the model's reasoning process (its "chain of thought") to provide real-time corrections, a powerful new way for humans to collaborate with AI agents.

A common objection to voice AI is its robotic nature. However, current tools can clone voices, replicate human intonation and cadence, and even use slang. The speaker claims that 97% of people outside the AI industry cannot tell the difference, making it a viable front-line tool for customer interaction.

Sam Altman highlights a key feature in new coding models: the ability for a user to interrupt and steer the AI while it's in the middle of a multi-hour task. This shifts the workflow from one-shot prompting to dynamic management, making the AI feel more like a true coworker you can course-correct in real time.
