Instead of simply swapping a model behind a stable URL, Pega's platform enables a formal release process. Using Prediction Studio's champion/challenger slots and percentage-based rollouts, teams can safely deploy, monitor, and manage new model versions. This MLOps capability turns model updates into a governed, transparent activity.
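As a rough illustration of what a percentage-based rollout does, the sketch below routes a fixed share of customers to a challenger model using a stable hash. It is not Pega's implementation or API: the function name `assign_slot`, the `salt` parameter, and the customer ID format are assumptions made for this example only.

```python
import hashlib


def assign_slot(customer_id: str, challenger_pct: float, salt: str = "rollout-1") -> str:
    """Return "challenger" for roughly challenger_pct percent of customers.

    Hypothetical helper: hashing the customer ID keeps each customer on the
    same model for the whole rollout, so outcomes can be compared per slot.
    """
    if not 0 <= challenger_pct <= 100:
        raise ValueError("challenger_pct must be between 0 and 100")
    digest = hashlib.sha256(f"{salt}:{customer_id}".encode("utf-8")).digest()
    # Map the hash to one of 10,000 buckets (0.01% granularity).
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    return "challenger" if bucket < challenger_pct * 100 else "champion"


if __name__ == "__main__":
    # Send about 20% of traffic to the challenger.
    for cid in ("cust-1", "cust-2", "cust-3"):
        print(cid, assign_slot(cid, challenger_pct=20))
```

Raising `challenger_pct` in steps widens exposure gradually, and setting it to 0 acts as a rollback. Changing the salt reshuffles which customers see the challenger.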

Related Insights

The metadata file in Pega's Prediction Studio does more than describe a model. It defines the runtime contract, linking model inputs to Pega properties, dictating performance metrics (AUC, F-score), and ensuring correct response tracking. This file is critical for runtime correctness and monitoring, not just for setup.

Unlike mature tech products with annual releases, the AI model landscape is in a constant state of flux. Companies are incentivized to launch new versions immediately to claim the top spot on performance benchmarks, leading to a frenetic and unpredictable release schedule rather than a stable cadence.

To get scientists to adopt AI tools, simply open-sourcing a model is not enough. A real product must provide a full-stack solution, including managed infrastructure to run expensive models, optimized workflows, and a UI. This abstracts away the complexity of MLOps, allowing scientists to focus on research.

Fal treats every new model launch on its platform as a full-fledged marketing event. Rather than just a technical update, each release becomes an opportunity to co-market with research labs, create social buzz, and provide sales with a fresh reason to engage prospects. This strategy turns the rapid pace of AI innovation into a predictable and repeatable growth engine.

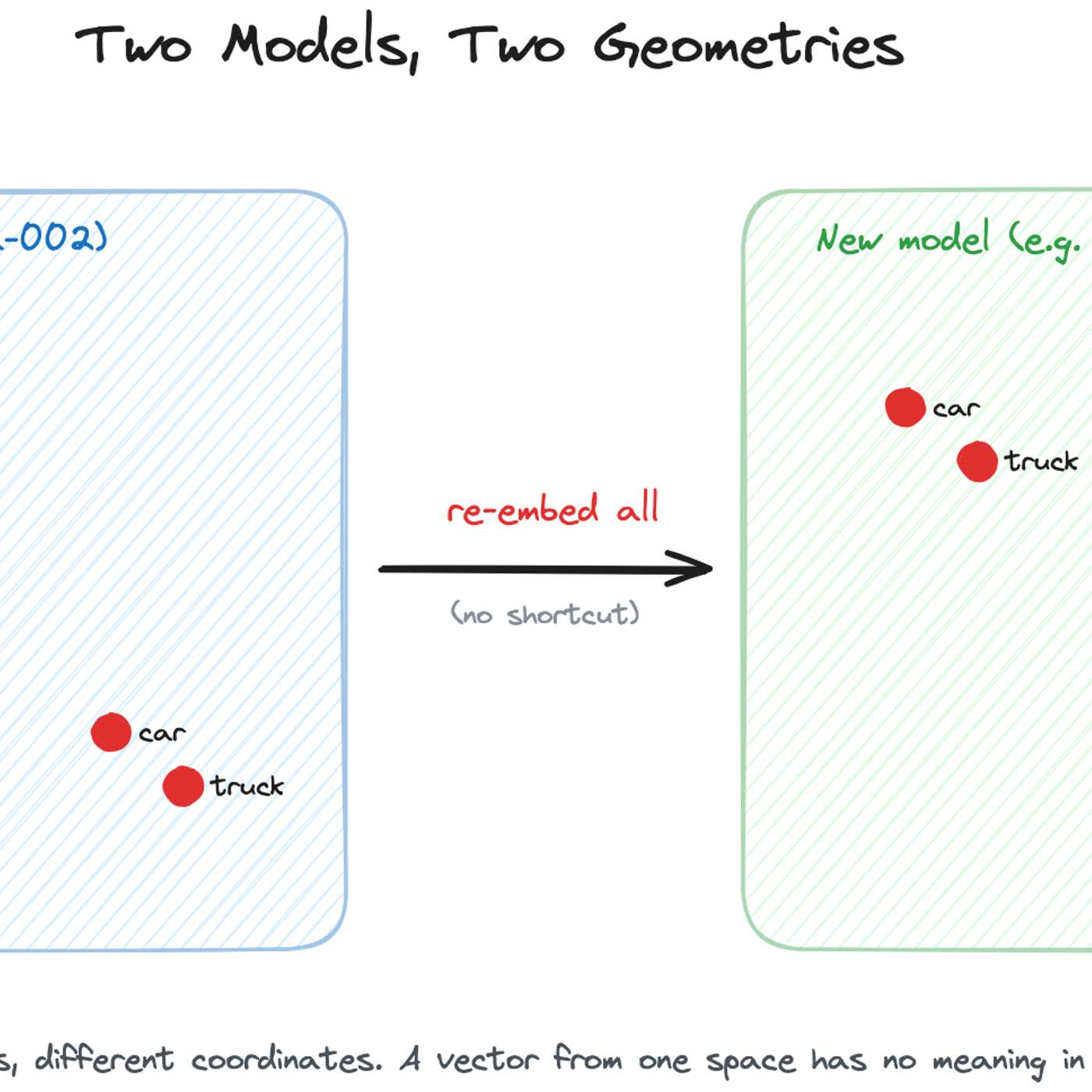

To avoid frantic, high-pressure migrations when an embedding model is deprecated, teams should treat model selection as a dependency that requires planned updates, like any other software library. This mindset shifts the process from an emergency scramble to routine, planned maintenance, making upgrades predictable and manageable.

Major AI labs will abandon monolithic, highly anticipated model releases for a continuous stream of smaller, iterative updates. This de-risks launches and manages public expectations, a lesson learned from the negative sentiment around GPT-5's single, high-stakes release.

AI companies like OpenAI have shifted to monthly, incremental model updates. This frequent but less impactful release cadence means developers no longer feel strong loyalty to any specific model and simply switch to the newest version available, treating major AI models like commodities.

MLOps pipelines manage model deployment, but scaling AI requires a broader "AI Operating System." This system serves as a central governance and integration layer, ensuring every AI solution across the business inherits auditable data lineage, compliance, and standardized policies.

The most common and robust method for migrating embedding models is to build a completely new vector index in parallel using the new model. While the old index serves live traffic, the new one is built and validated via shadow scoring. Traffic is then cut over with an alias swap, so the migration requires no downtime.
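The build, shadow-score, and alias-swap sequence can be sketched with a toy in-memory index. Everything here is an assumption for illustration: the `VectorIndex` class, the two stand-in embedding functions, the overlap metric, and the 0.9 threshold are hypothetical and do not correspond to any particular vector database.

```python
from typing import Callable, Dict, List, Sequence

Vector = List[float]
VOCAB = ["refund", "invoice", "login", "password"]


def embed_v1(text: str) -> Vector:
    """Stand-in for the old embedding model: raw word counts."""
    words = text.lower().split()
    return [float(words.count(w)) for w in VOCAB]


def embed_v2(text: str) -> Vector:
    """Stand-in for the new embedding model: length-normalized counts."""
    v = embed_v1(text)
    norm = sum(x * x for x in v) ** 0.5 or 1.0
    return [x / norm for x in v]


class VectorIndex:
    """Toy index that ranks documents by dot product."""

    def __init__(self, embed: Callable[[str], Vector]) -> None:
        self.embed = embed
        self.items: Dict[str, Vector] = {}

    def add(self, doc_id: str, text: str) -> None:
        self.items[doc_id] = self.embed(text)

    def search(self, query: str, k: int = 1) -> List[str]:
        q = self.embed(query)
        score = lambda d: sum(a * b for a, b in zip(q, self.items[d]))
        return sorted(self.items, key=score, reverse=True)[:k]


def shadow_overlap(old: VectorIndex, new: VectorIndex,
                   queries: Sequence[str], k: int = 1) -> float:
    """Replay queries against both indexes and return the mean overlap@k."""
    scores = []
    for query in queries:
        a, b = set(old.search(query, k)), set(new.search(query, k))
        scores.append(len(a & b) / max(len(a), 1))
    return sum(scores) / len(scores)


docs = {"d1": "refund invoice", "d2": "login password", "d3": "invoice invoice"}
old_index, new_index = VectorIndex(embed_v1), VectorIndex(embed_v2)
for doc_id, text in docs.items():
    old_index.add(doc_id, text)  # already serving live traffic
    new_index.add(doc_id, text)  # built in parallel with the new model

aliases = {"search": old_index}  # callers only ever resolve the alias
overlap = shadow_overlap(old_index, new_index, ["refund", "password"])
if overlap >= 0.9:  # hypothetical acceptance threshold
    aliases["search"] = new_index  # the cutover is a single reference swap
```

Because callers resolve `aliases["search"]` rather than a concrete index, the swap is one assignment, and rolling back means pointing the alias at the old index again. Overlap with the old results is only one possible validation signal; a new model may legitimately rank documents differently.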

Since true AI explainability is still elusive, a practical strategy for managing risk is benchmarking. By running a new AI model alongside the current one and comparing their outputs on a defined set of tests, companies can identify and address issues like bias or unexpected behavior before a full rollout.
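A side-by-side benchmark of this kind might look like the minimal sketch below. The two decision rules, the test cases, and the report fields are all invented for illustration; a real benchmark would use the organization's own models and a much larger test set.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Case:
    features: Dict[str, int]
    expected: str
    group: str  # segment used to check for uneven behavior


Model = Callable[[Dict[str, int]], str]


def benchmark(current: Model, candidate: Model, cases: List[Case]) -> dict:
    """Run both models on the same cases and summarize the differences."""
    cur_ok = [current(c.features) == c.expected for c in cases]
    cand_ok = [candidate(c.features) == c.expected for c in cases]
    by_group: Dict[str, List[bool]] = {}
    for case, ok in zip(cases, cand_ok):
        by_group.setdefault(case.group, []).append(ok)
    return {
        "current_accuracy": sum(cur_ok) / len(cases),
        "candidate_accuracy": sum(cand_ok) / len(cases),
        # Cases the current model gets right but the candidate gets wrong.
        "regressions": [i for i, (a, b) in enumerate(zip(cur_ok, cand_ok)) if a and not b],
        "candidate_accuracy_by_group": {g: sum(v) / len(v) for g, v in by_group.items()},
    }


# Hypothetical models: the candidate adds an age rule that hurts one group.
current_model: Model = lambda f: "approve" if f["income"] >= 50 else "decline"
candidate_model: Model = lambda f: (
    "approve" if f["income"] >= 50 and f["age"] >= 25 else "decline"
)

cases = [
    Case({"income": 60, "age": 40}, "approve", "25+"),
    Case({"income": 60, "age": 22}, "approve", "under25"),
    Case({"income": 30, "age": 30}, "decline", "25+"),
    Case({"income": 30, "age": 20}, "decline", "under25"),
]

report = benchmark(current_model, candidate_model, cases)
print(report)
```

In this toy data the candidate regresses on one case and is less accurate for the `under25` group, which is the kind of signal that would block a full rollout until the cause is understood.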