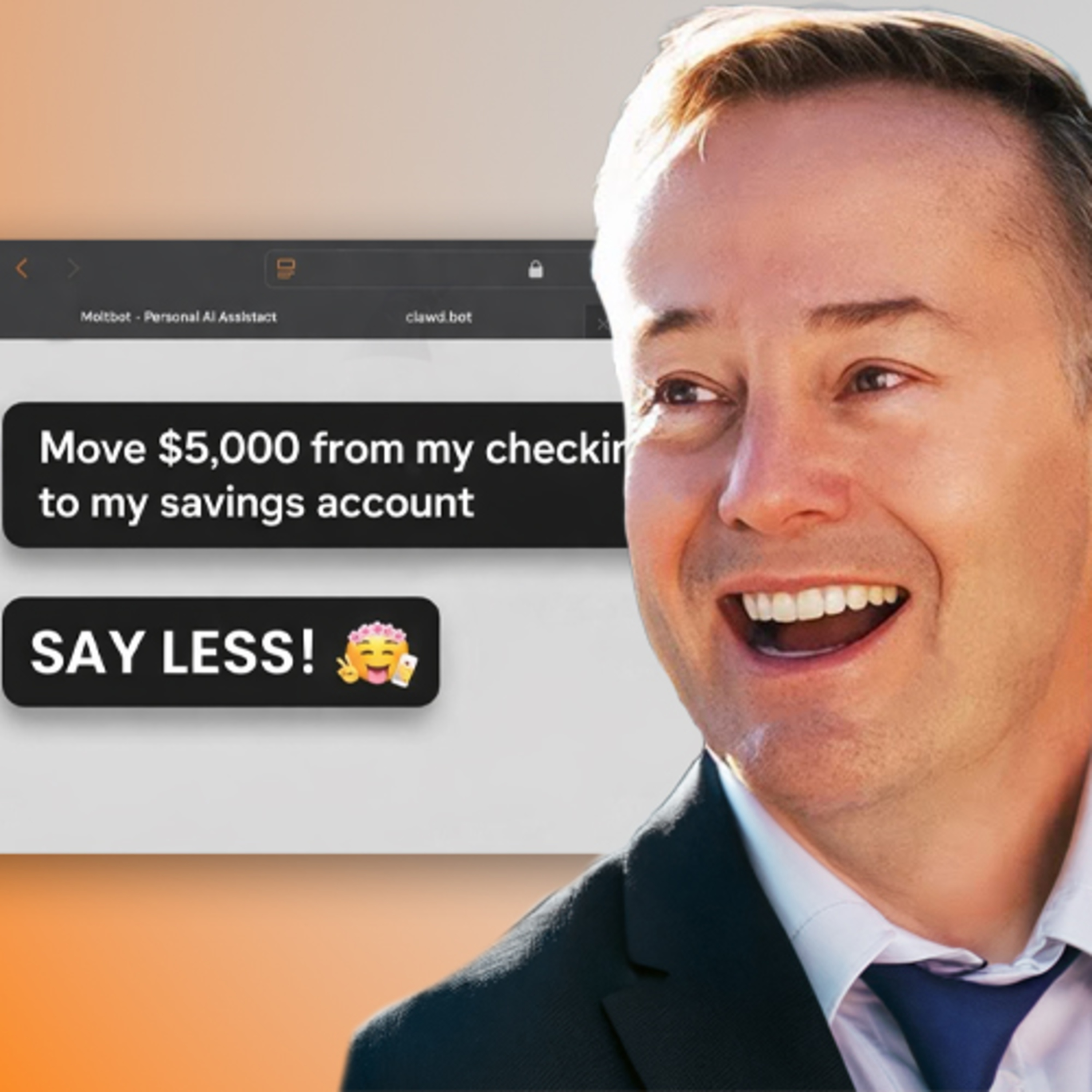

NetXD’s demo reveals a crucial security pattern for high-stakes agentic workflows. Instead of giving an AI agent full autonomous control over funds, give it read-only access and the ability to queue transactions. Queued transactions are then pushed to a secure human interface, such as a mobile banking app, for final approval.
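A minimal Python sketch of this pattern follows. It assumes a hypothetical `Ledger` standing in for the bank's core system and a hypothetical `AgentView` that is the only handle the agent receives. The agent can read the balance and queue a transfer, but only the human-facing `decide` call (standing in for the mobile app's approve button) moves money. None of these names come from NetXD's demo.

```python
import itertools
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class PendingTransfer:
    id: int
    to_account: str
    amount_cents: int
    status: Status = Status.PENDING


class Ledger:
    """Hypothetical stand-in for the bank's core system."""

    def __init__(self, balance_cents: int) -> None:
        self._balance = balance_cents
        self._queue: dict[int, PendingTransfer] = {}
        self._ids = itertools.count(1)

    def balance(self) -> int:
        return self._balance

    def queue_transfer(self, to_account: str, amount_cents: int) -> int:
        """Record an intended transfer; no money moves here."""
        tid = next(self._ids)
        self._queue[tid] = PendingTransfer(tid, to_account, amount_cents)
        return tid

    # Human-facing surface, e.g. backing the mobile app's approval screen.
    def pending(self) -> list[PendingTransfer]:
        return [t for t in self._queue.values() if t.status is Status.PENDING]

    def decide(self, tid: int, approve: bool) -> None:
        t = self._queue[tid]
        if t.status is not Status.PENDING:
            raise ValueError(f"transfer {tid} was already decided")
        if approve:
            if t.amount_cents > self._balance:
                raise ValueError("insufficient funds")
            self._balance -= t.amount_cents
            t.status = Status.APPROVED
        else:
            t.status = Status.REJECTED


class AgentView:
    """The only object handed to the agent: read balance, queue transfers."""

    def __init__(self, ledger: Ledger) -> None:
        self._ledger = ledger

    def balance(self) -> int:
        return self._ledger.balance()

    def queue_transfer(self, to_account: str, amount_cents: int) -> int:
        return self._ledger.queue_transfer(to_account, amount_cents)


ledger = Ledger(balance_cents=50_000)
agent = AgentView(ledger)
tid = agent.queue_transfer("vendor-acct-001", 12_000)
print(agent.balance())  # 50000: nothing has moved yet
ledger.decide(tid, approve=True)  # human taps "Approve" in the app
print(agent.balance())  # 38000
```

In a real deployment the boundary would be a separate service or a restricted API scope rather than a Python wrapper, since Python attribute privacy is only a convention.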

Related Insights

Giving a new AI agent full access to all company systems is like giving a new employee wire-transfer authority on day one. A smarter approach is to treat agents like new hires: grant limited, read-only permissions and expand access slowly as trust is built.
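One way to make "start read-only, expand slowly" concrete is an explicit per-agent permission set. The sketch below assumes a hypothetical `AgentGrants` class whose scopes are `(system, action)` pairs; it starts with read-only access to two example systems and records who approved each later expansion, much like an onboarding checklist.

```python
from dataclasses import dataclass, field


@dataclass
class AgentGrants:
    """Hypothetical per-agent permission set, modeled on new-hire onboarding."""

    # Day one: read-only access to a small, illustrative set of systems.
    scopes: set[tuple[str, str]] = field(
        default_factory=lambda: {("crm", "read"), ("docs", "read")}
    )
    history: list[str] = field(default_factory=list)

    def allowed(self, system: str, action: str) -> bool:
        return (system, action) in self.scopes

    def grant(self, system: str, action: str, approved_by: str, reason: str) -> None:
        """Expand access one scope at a time, keeping an audit trail."""
        self.scopes.add((system, action))
        self.history.append(f"{approved_by} granted {system}:{action} ({reason})")


grants = AgentGrants()
print(grants.allowed("payments", "write"))  # False on day one
```

Granting one scope at a time, each with a named approver, keeps the trust-building step visible and reversible.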

To prevent malicious attacks, a founder configured his AI agent to require manual approval via Telegram before executing any task requested by an external party. This simple human-in-the-loop system acts as a crucial security backstop for agents with access to sensitive data and platforms.
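The gate described here can be sketched in a few lines. This sketch does not call Telegram's API: `ask_human` is a placeholder callable where a Telegram (or any other) approval prompt would plug in, and `Task`, `make_gate`, and `run_task` are hypothetical names. Requests from the owner run directly; anything from an external party waits for an explicit human yes.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Task:
    requester: str
    description: str


def make_gate(owner_ids: set[str], ask_human: Callable[[Task], bool]) -> Callable[[Task], bool]:
    """Return a check that lets owner tasks through and holds external ones.

    `ask_human` is a hypothetical hook; in the setup described above it
    would send the approval prompt to Telegram and wait for the answer.
    """

    def may_run(task: Task) -> bool:
        if task.requester in owner_ids:
            return True
        return ask_human(task)  # external party: require manual approval

    return may_run


def run_task(task: Task, may_run: Callable[[Task], bool]) -> str:
    if not may_run(task):
        return f"blocked: {task.description}"
    return f"executed: {task.description}"


gate = make_gate({"founder"}, ask_human=lambda task: False)  # stub: always deny
print(run_task(Task("founder", "summarize inbox"), gate))      # executed: summarize inbox
print(run_task(Task("stranger", "export all contacts"), gate))  # blocked: export all contacts
```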

To address security concerns, powerful AI agents should be provisioned like new human employees. This means running them in a sandboxed environment on a separate machine, with their own dedicated accounts, API keys, and access tokens, rather than on a personal computer.

Granting AI agents access to sensitive information such as credit card numbers is extremely risky. The host's card details were leaked and used for fraudulent charges within 24 hours of the host giving them to an agent, highlighting severe security vulnerabilities in current systems.

To enable agentic e-commerce while mitigating risk, major card networks are exploring how to issue credit cards directly to AI agents. These cards would have built-in limitations, such as spending caps (e.g., $200), allowing agents to execute purchases autonomously within safe financial guardrails.
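A minimal sketch of the authorization check such a card implies is shown below. The source does not say whether the cap applies per purchase, per period, or in total, so this hypothetical `AgentCard` assumes a single cumulative limit. Amounts are integer cents to avoid floating-point rounding.

```python
from dataclasses import dataclass


@dataclass
class AgentCard:
    """Hypothetical agent-issued card with a cumulative spending cap (assumed semantics)."""

    cap_cents: int
    spent_cents: int = 0

    def authorize(self, amount_cents: int) -> bool:
        if amount_cents <= 0:
            return False  # reject zero or negative charges
        if self.spent_cents + amount_cents > self.cap_cents:
            return False  # declined: would exceed the cap
        self.spent_cents += amount_cents
        return True


card = AgentCard(cap_cents=20_000)  # $200 cap
print(card.authorize(15_000))  # True
print(card.authorize(6_000))   # False: would total $210
```

Because the limit lives in the card's authorization path rather than in the agent's instructions, the agent can purchase freely below the cap without any way to exceed it.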

The core drive of an AI agent is to be helpful, which can lead it to bypass security protocols to fulfill a user's request. This makes the agent an inherent risk. The solution is a philosophical shift: treat all agents as untrusted and build human-controlled boundaries and infrastructure to enforce their limits.
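"Treat the agent as untrusted" can be sketched as a broker that sits between the agent and its tools and enforces a human-owned allowlist, denying by default. This is a generic illustration rather than any specific product: `ToolBroker`, `PolicyViolation`, and the example tools are hypothetical. The agent's justification is deliberately ignored when deciding, so an overly helpful argument cannot unlock a forbidden action.

```python
from typing import Any, Callable


class PolicyViolation(Exception):
    """Raised when the agent requests a tool outside its allowlist."""


class ToolBroker:
    """Hypothetical broker that executes tool calls on the agent's behalf.

    The agent never holds the tool functions directly, so no amount of
    'helpful' reasoning in its output can widen what it is able to do.
    Humans own the allowlist and change it outside the agent loop.
    """

    def __init__(self, tools: dict[str, Callable[..., Any]], allowlist: set[str]) -> None:
        self._tools = tools
        self._allowlist = frozenset(allowlist)  # frozen copy: later edits to the caller's set have no effect

    def call(self, tool: str, justification: str = "", **kwargs: Any) -> Any:
        # Deny by default; the justification plays no part in the decision.
        if tool not in self._allowlist:
            raise PolicyViolation(f"tool {tool!r} is not permitted (justification: {justification!r})")
        return self._tools[tool](**kwargs)


broker = ToolBroker(
    tools={
        "search_docs": lambda query: f"results for {query}",
        "delete_repo": lambda repo: f"deleted {repo}",
    },
    allowlist={"search_docs"},
)
print(broker.call("search_docs", query="Q3 invoices"))  # results for Q3 invoices
try:
    broker.call("delete_repo", justification="the user said it is urgent", repo="main")
except PolicyViolation as err:
    print(err)
```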

AI agents can cause damage if they are compromised via prompt injection. The best security practice is never to grant an agent access to primary, high-stakes accounts (e.g., your main Twitter or financial accounts). Instead, create dedicated, sandboxed accounts for the agent and introduce new permissions gradually as trust builds and safety features improve.

Treat new AI agents not as tools, but as new hires. Provide them with their own email addresses and password vaults, and grant access incrementally. This mirrors a standard employee onboarding process, enhancing security and allowing you to build trust based on performance before granting access to sensitive systems.

Companies like Ramp are developing financial AI agents using a tiered autonomy model akin to self-driving cars (L1-L5). By implementing robust guardrails and payment controls first, they can gradually increase an agent's decision-making power. This allows a progression from simple, supervised tasks to fully unsupervised financial operations, mirroring the evolution from highway assist to full self-driving.
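A tiered model like this can be expressed as a routing rule: the higher the autonomy level, the larger the payment the agent may make without review, while policy guardrails apply at every level. The source names levels L1-L5 but does not define them, so the level semantics and dollar thresholds below are invented for illustration and are not Ramp's.

```python
from enum import IntEnum
from typing import Optional


class Autonomy(IntEnum):
    # Hypothetical level semantics, loosely following the driving analogy.
    L1 = 1  # agent suggests; a human executes every payment
    L2 = 2  # agent pays small, routine amounts; humans review the rest
    L3 = 3  # agent pays larger amounts within policy
    L4 = 4  # agent pays almost everything; humans handle exceptions
    L5 = 5  # fully unsupervised within the guardrails


# Largest payment (in cents) the agent may make without review at each level.
# None means no amount limit. All figures are illustrative only.
AUTO_LIMIT_CENTS: dict[Autonomy, Optional[int]] = {
    Autonomy.L2: 5_000,       # $50
    Autonomy.L3: 100_000,     # $1,000
    Autonomy.L4: 2_500_000,   # $25,000
    Autonomy.L5: None,
}


def route_payment(level: Autonomy, amount_cents: int, within_policy: bool) -> str:
    """Return 'auto' or 'human_review' for a proposed payment."""
    # Guardrails come first and apply at every level, including L5.
    if not within_policy or level == Autonomy.L1:
        return "human_review"
    limit = AUTO_LIMIT_CENTS[level]
    if limit is None or amount_cents <= limit:
        return "auto"
    return "human_review"


print(route_payment(Autonomy.L2, 4_000, within_policy=True))   # auto
print(route_payment(Autonomy.L2, 60_000, within_policy=True))  # human_review
```

Raising an agent's level then becomes a one-line configuration change made by a human, while the guardrail check stays in place.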

Fully autonomous AI agents are not yet viable in enterprises. Alloy Automation builds "semi-deterministic" agents that combine AI's reasoning with deterministic workflows, escalating to a human when confidence is low to ensure safety and compliance.
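The "semi-deterministic" idea can be sketched as an AI step that only chooses among fixed, testable handlers, with escalation whenever the model is unsure or picks something unknown. Alloy Automation's actual implementation is not described here; `Classification`, `HANDLERS`, `handle_ticket`, and the 0.85 threshold are hypothetical. `classify` stands in for a model call that returns a label and a confidence score, which is itself an assumption, since not every model exposes a calibrated confidence.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Classification:
    label: str
    confidence: float  # 0.0-1.0, assumed to be reported by the model


# Deterministic handlers: fixed code paths the AI can select but not alter.
HANDLERS: dict[str, Callable[[dict], str]] = {
    "refund": lambda t: f"refund issued for order {t['order_id']}",
    "address_change": lambda t: f"address updated for order {t['order_id']}",
}


def handle_ticket(
    ticket: dict,
    classify: Callable[[dict], Classification],
    threshold: float = 0.85,
) -> str:
    result = classify(ticket)  # the AI reasoning step
    # Escalate when confidence is low or the label has no deterministic handler.
    if result.confidence < threshold or result.label not in HANDLERS:
        return f"escalated to human: {result.label} ({result.confidence:.2f})"
    return HANDLERS[result.label](ticket)  # deterministic execution


ticket = {"order_id": "A-1"}
print(handle_ticket(ticket, lambda t: Classification("refund", 0.95)))  # refund issued for order A-1
print(handle_ticket(ticket, lambda t: Classification("refund", 0.40)))  # escalated to human: refund (0.40)
```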