In systems like Kubernetes, most components, such as API servers and schedulers, can be scaled out by adding more instances. The real bottleneck blocking an order-of-magnitude increase in scale is the consistent storage layer (e.g., etcd), so major scaling efforts eventually focus on optimizing or replacing that one critical component.

Related Insights

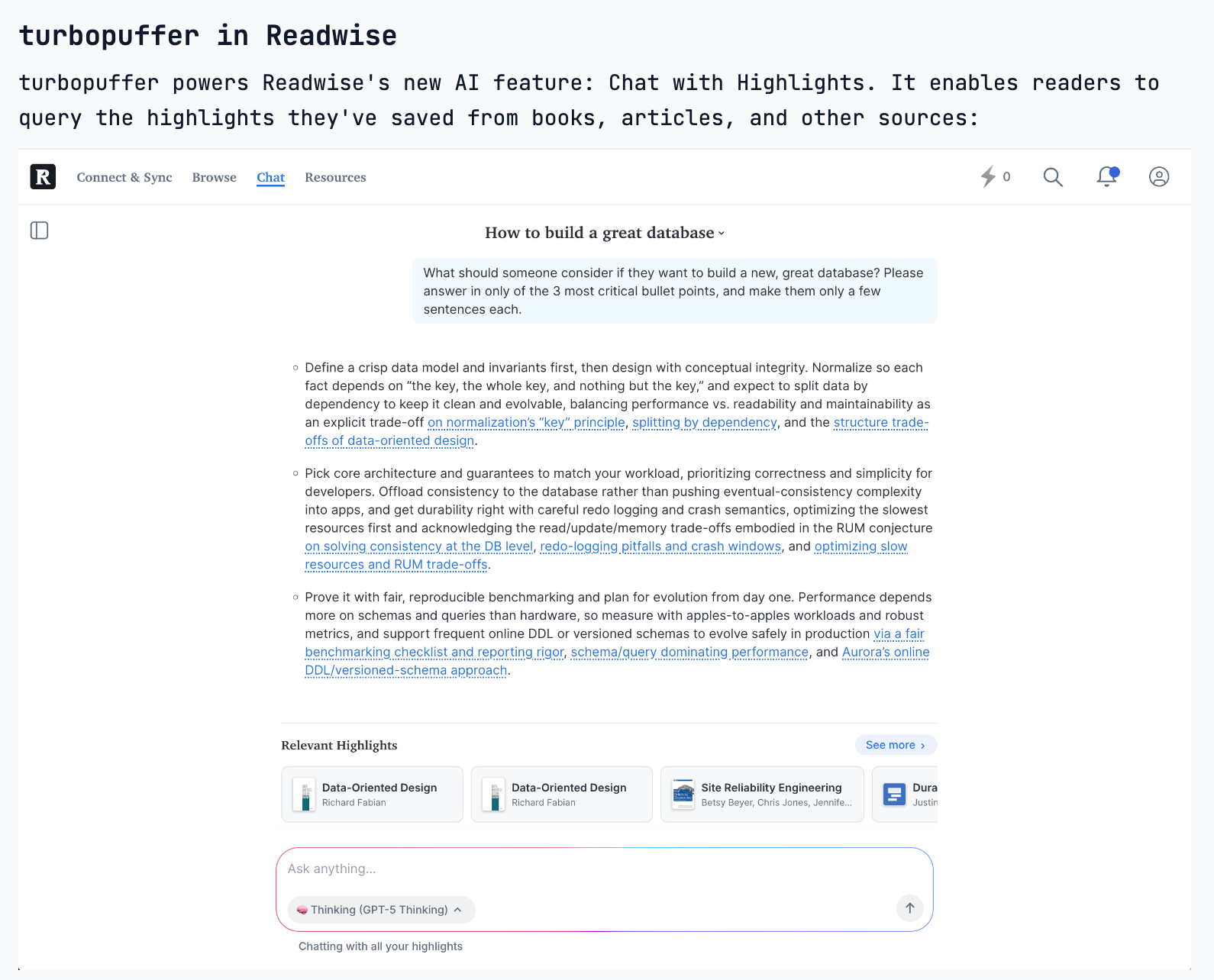

Turbopuffer's design avoids a complex consensus layer (like ZooKeeper) by relying on two recent cloud primitive upgrades: S3's strong consistency (post-2020) and a compare-and-swap feature for metadata updates. The result is a simpler, more robust, stateless system.
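To make the compare-and-swap idea concrete, here is a minimal sketch of a read-modify-write loop against a store that supports conditional writes. It is not Turbopuffer's code and not the S3 API: `InMemoryObjectStore`, `put_if_version`, and `append_segment` are hypothetical names, and the store is an in-memory stand-in used only to show the pattern.

```python
import threading


class VersionMismatch(Exception):
    """Raised when a conditional write finds a newer version than expected."""


class InMemoryObjectStore:
    """Hypothetical stand-in for an object store with conditional writes."""

    def __init__(self):
        self._lock = threading.Lock()
        self._objects = {}  # key -> (version, value)

    def get(self, key):
        # Returns (version, value); a missing key reads as version 0.
        with self._lock:
            return self._objects.get(key, (0, None))

    def put_if_version(self, key, expected_version, value):
        # The write succeeds only if nobody else has written since our read.
        with self._lock:
            current_version, _ = self._objects.get(key, (0, None))
            if current_version != expected_version:
                raise VersionMismatch(key)
            new_version = current_version + 1
            self._objects[key] = (new_version, value)
            return new_version


def append_segment(store, key, segment, max_retries=10):
    """Append to a metadata list, retrying whenever another writer wins the race."""
    for _ in range(max_retries):
        version, manifest = store.get(key)
        updated = list(manifest or []) + [segment]
        try:
            return store.put_if_version(key, version, updated)
        except VersionMismatch:
            continue  # re-read the latest state and try again
    raise RuntimeError("too much contention on " + key)
```

Because every writer either commits on top of the version it read or retries, no separate coordination service is needed to keep the metadata consistent in this sketch.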

The MCP transport protocol requires holding state on the server. While fine for a single server, it becomes a problem at scale. When requests are distributed across multiple pods, a shared state layer (like Redis or Memcache) becomes necessary to ensure different servers can access the same session data.
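The shared-state pattern described above can be sketched roughly as follows. This is an illustration only, not MCP SDK code: `SharedSessionStore` is a dict-backed stand-in for something like Redis, and `Pod`, `open_session`, and `handle` are hypothetical names.

```python
import uuid


class SharedSessionStore:
    """Stand-in for an external store such as Redis; backed by a dict here."""

    def __init__(self):
        self._data = {}

    def save(self, session_id, state):
        self._data[session_id] = dict(state)

    def load(self, session_id):
        state = self._data.get(session_id)
        return dict(state) if state is not None else None


class Pod:
    """One server instance; it keeps no session state of its own."""

    def __init__(self, name, store):
        self.name = name
        self.store = store

    def open_session(self):
        session_id = uuid.uuid4().hex
        self.store.save(session_id, {"calls": 0})
        return session_id

    def handle(self, session_id):
        # Any pod can serve the request because state lives in the shared store.
        state = self.store.load(session_id)
        if state is None:
            raise KeyError("unknown session " + session_id)
        state["calls"] += 1
        self.store.save(session_id, state)
        return state["calls"]
```

If each pod were given its own private store instead, a request routed to a different pod than the one that opened the session would fail with `KeyError`, which is the failure mode the shared layer avoids.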

Kubernetes's architecture of independent, asynchronous control loops makes it highly resilient: each loop keeps driving toward the desired state despite failures. The deliberate trade-off is that this design makes debugging extremely difficult, as the root cause of an issue is often spread across multiple processes without a clear, unified log.
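A control loop of this kind can be sketched in a few lines. The example below is a simplified illustration, not Kubernetes code: `reconcile`, `apply_actions`, and `run_until_converged` are hypothetical helpers, and state is reduced to a replica count per application name.

```python
def reconcile(desired, actual):
    """Compare desired and actual replica counts and return corrective actions."""
    actions = []
    for name, want in sorted(desired.items()):
        have = actual.get(name, 0)
        if have < want:
            actions.append(("start", name, want - have))
        elif have > want:
            actions.append(("stop", name, have - want))
    # Anything running that is no longer desired gets stopped.
    for name in sorted(set(actual) - set(desired)):
        if actual[name] > 0:
            actions.append(("stop", name, actual[name]))
    return actions


def apply_actions(actual, actions):
    """Mutate the observed state as if the actions had been carried out."""
    for verb, name, count in actions:
        delta = count if verb == "start" else -count
        actual[name] = actual.get(name, 0) + delta
    return actual


def run_until_converged(desired, actual, max_iterations=10):
    """Repeat observe, diff, act until there is nothing left to correct."""
    for _ in range(max_iterations):
        actions = reconcile(desired, actual)
        if not actions:
            return actual
        apply_actions(actual, actions)
    raise RuntimeError("did not converge")
```

The loop never needs to know why the actual state drifted. It only compares and corrects, which is the source of the resilience, and also why a single failure leaves no one place that records its cause.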

The evolution from physical servers to virtualization and containers adds layers of abstraction. These layers don't make the lower levels obsolete; they create a richer stack with more places to innovate and add value. Whether it's developer tools at the top or kernel optimization at the bottom, each layer presents a distinct business opportunity.

To manage its enormous monorepo, Meta developed 'Eden,' a virtual file system. Instead of downloading all files, it only fetches them when an operation requires them. This decouples the performance of common developer actions, like switching branches, from the ever-increasing size of the repository, enabling scalability.
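The fetch-on-demand idea can be illustrated with a small sketch. It is not Eden's implementation: `LazyCheckout` is a hypothetical class, and a plain dict stands in for the remote repository.

```python
class LazyCheckout:
    """Working copy that downloads file contents only when they are read."""

    def __init__(self, remote_files):
        self._remote = remote_files  # path -> content, stand-in for the server
        self._local = {}             # contents fetched so far
        self.fetch_count = 0

    def list_paths(self):
        # Listing uses metadata only; no file content is fetched.
        return sorted(self._remote)

    def read(self, path):
        # Fetch on first access, then serve from the local cache.
        if path not in self._local:
            self._local[path] = self._remote[path]
            self.fetch_count += 1
        return self._local[path]
```

In this sketch the cost of an operation depends on how many files it reads, not on how many files the repository contains.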

The focus in AI has evolved from rapid software capability gains to the physical constraints of its adoption. The demand for compute power is expected to significantly outstrip supply, making infrastructure—not algorithms—the defining bottleneck for future growth.

Simply "scaling up" (adding more GPUs to one model instance) hits a performance ceiling due to hardware and algorithmic limits. True large-scale inference requires "scaling out" (duplicating instances), creating a new systems problem of managing and optimizing across a distributed fleet.

The common belief is that AI decisions are driven by compute hardware. However, NetApp's Keith Norbie argues the critical success factor is the underlying data platform. Since most enterprise data already resides on platforms like NetApp, preparing this data structure for training and deployment is more crucial than the choice of server.

To operate thousands of GPUs across multiple clouds and data centers, Fal found Kubernetes insufficient. They had to build their own proprietary stack, including a custom orchestration layer, distributed file system, and container runtimes to achieve the necessary performance and scale.

Dell's CTO identifies a new architectural component: the "knowledge layer" (vector DBs, knowledge graphs). Unlike traditional data architectures, this layer should be placed near the dynamic AI compute (e.g., on an edge device) rather than the static primary data, as it's perpetually hot and used in real-time.