Get your free personalized podcast brief

We scan new podcasts and send you the top 5 insights daily.

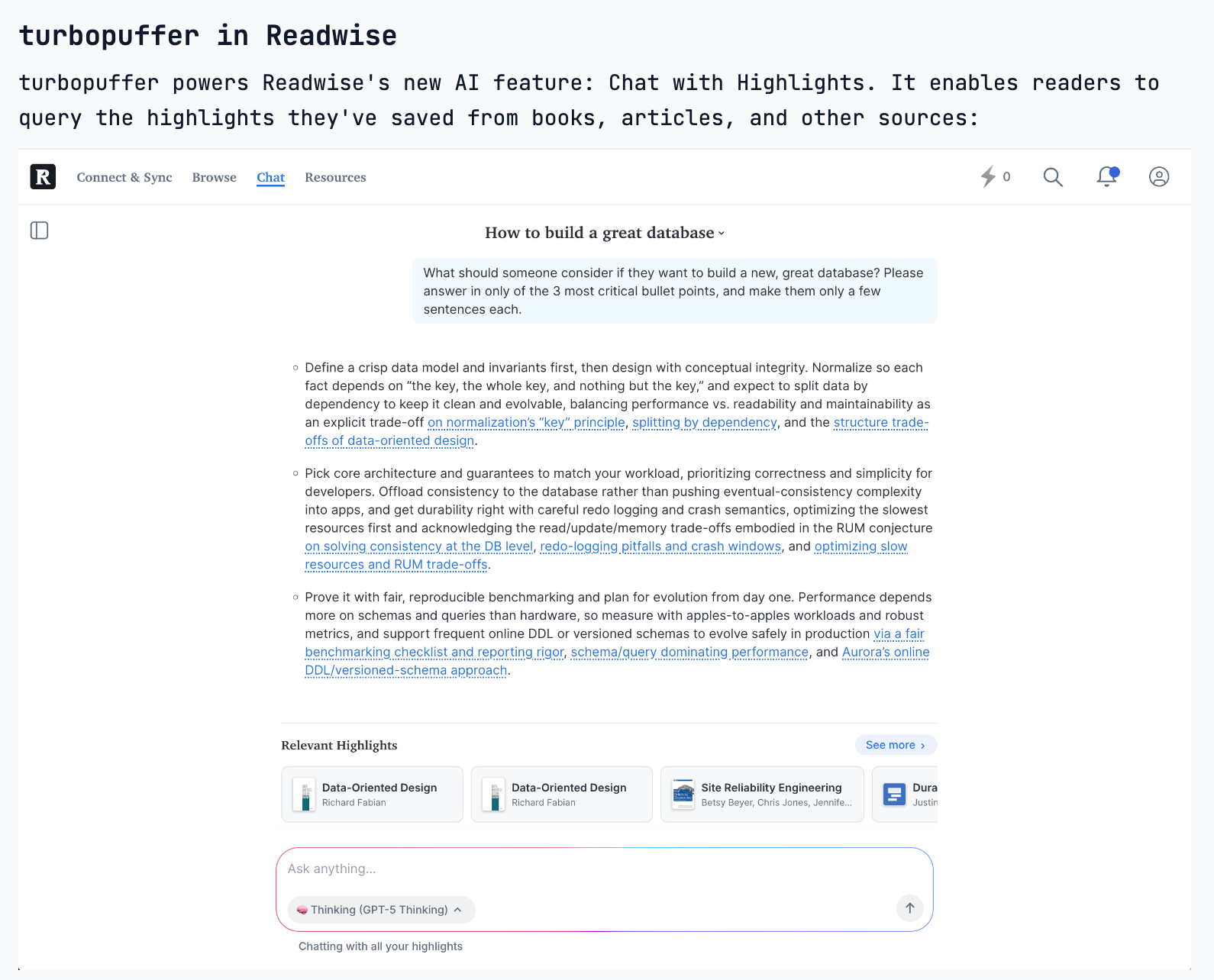

Turbopuffer's design avoids a separate consensus layer (such as ZooKeeper) by relying on two relatively recent cloud storage primitives: S3's strong consistency (available since late 2020) and a compare-and-swap feature for metadata updates. The result is a simpler, more robust, stateless system.
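To make the compare-and-swap idea concrete, here is a minimal sketch of a read-modify-write loop against an object store that supports conditional writes. It is not Turbopuffer's code: `InMemoryObjectStore`, `put_if_match`, `append_segment`, and the `ns/meta` key are hypothetical names, and the in-memory class only stands in for a real object store.

```python
import threading
import uuid


class PreconditionFailed(Exception):
    """Raised when the caller's expected version no longer matches."""


class InMemoryObjectStore:
    """Hypothetical stand-in for an object store with conditional writes."""

    def __init__(self):
        self._lock = threading.Lock()
        self._objects = {}  # key -> (version_tag, value)

    def get(self, key):
        """Return (version_tag, value), or (None, None) if the key is absent."""
        with self._lock:
            return self._objects.get(key, (None, None))

    def put_if_match(self, key, value, expected_tag):
        """Write only if the stored version tag still equals expected_tag."""
        with self._lock:
            current_tag, _ = self._objects.get(key, (None, None))
            if current_tag != expected_tag:
                raise PreconditionFailed(key)
            new_tag = uuid.uuid4().hex
            self._objects[key] = (new_tag, value)
            return new_tag


def append_segment(store, key, segment, max_retries=10):
    """Add a segment name to a metadata list with a read-modify-CAS loop."""
    for _ in range(max_retries):
        tag, segments = store.get(key)
        updated = list(segments or []) + [segment]
        try:
            store.put_if_match(key, updated, tag)
            return updated
        except PreconditionFailed:
            continue  # another writer won the race; re-read and retry
    raise RuntimeError("too much contention on " + key)
```

Because every writer must present the version it last read, two writers cannot silently overwrite each other, and no separate coordinator process has to hold state between requests.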

Related Insights

Turbopuffer achieved its large cost savings by building on slow S3 storage. This increased write latency by 1000x, which would be unacceptable for transactional systems but is an acceptable trade-off for search and AI workloads that prioritize fast reads over fast writes.

The MCP transport protocol requires holding state on the server. That is fine for a single server, but it becomes a problem at scale. When requests are distributed across multiple pods, a shared state layer (such as Redis or Memcached) becomes necessary so that different servers can access the same session data.
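The shared state layer can be sketched as follows. This is an illustration only, not MCP's actual API: `SharedSessionStore` is a hypothetical in-memory stand-in for an external store such as Redis, and `Pod` is a hypothetical request handler.

```python
class SharedSessionStore:
    """Hypothetical stand-in for an external store shared by all pods."""

    def __init__(self):
        self._data = {}

    def load(self, session_id):
        # Return a copy so callers cannot mutate stored state by accident.
        return dict(self._data.get(session_id, {}))

    def save(self, session_id, state):
        self._data[session_id] = dict(state)


class Pod:
    """One server replica; it keeps no session state of its own."""

    def __init__(self, name, store):
        self.name = name
        self.store = store

    def handle(self, session_id, message):
        state = self.store.load(session_id)
        history = list(state.get("history", []))
        history.append((self.name, message))
        state["history"] = history
        self.store.save(session_id, state)
        return len(history)  # number of messages seen in this session


store = SharedSessionStore()
pod_a = Pod("pod-a", store)
pod_b = Pod("pod-b", store)
pod_a.handle("session-1", "first request")
pod_b.handle("session-1", "second request")  # a different pod, same session
```

Since each pod reads and writes session data through the store, a load balancer can route consecutive requests for one session to different pods without losing context.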

The popular concept of multiple specialized agents collaborating in a "gossip protocol" is a misunderstanding of what currently works. A more practical and successful pattern for multi-agent systems is a hierarchical structure where a single supervisor agent breaks down a task and orchestrates multiple sub-agents to complete it.
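The hierarchical pattern can be sketched in a few lines. Everything here is hypothetical: the sub-agents are plain functions rather than model calls, and the supervisor's plan is hard-coded where a real system would presumably ask a model to decompose the task.

```python
from typing import Callable, Dict, List, Tuple

SubAgent = Callable[[str], str]


def research_agent(task: str) -> str:
    """Hypothetical sub-agent; a real one would call a model or a tool."""
    return f"notes on {task}"


def writer_agent(task: str) -> str:
    """Hypothetical sub-agent that turns a task into a draft."""
    return f"draft for {task}"


class Supervisor:
    """Single coordinator: it plans, dispatches, and collects results."""

    def __init__(self, workers: Dict[str, SubAgent]):
        self.workers = workers

    def plan(self, goal: str) -> List[Tuple[str, str]]:
        # Fixed two-step plan, for illustration only.
        return [("research", goal), ("write", goal)]

    def run(self, goal: str) -> List[str]:
        results = []
        for worker_name, subtask in self.plan(goal):
            results.append(self.workers[worker_name](subtask))
        return results


supervisor = Supervisor({"research": research_agent, "write": writer_agent})
outputs = supervisor.run("vector search")
```

Sub-agents never talk to each other in this sketch; all communication flows through the supervisor, which is what distinguishes it from a peer-to-peer arrangement.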

To serve Notion on AWS while Turbopuffer's own core infrastructure ran on GCP, Turbopuffer bought dark fiber to reduce cross-cloud latency. The company preferred this extreme measure to compromising its core architectural principle of avoiding a stateful consensus layer, which shows deep product conviction.

Arista's core innovation was its Extensible Operating System (EOS), built on a single binary image and a state-driven model. This allowed any failing software process to restart independently without crashing the entire system, offering a level of resilience that competitors' complex, multi-image systems could not match.

A key source of defensibility for Replit is its proprietary, transactional file system, which supports immutable, ledger-based actions. This enables cheap 'forking' of the entire system, so Replit can sample an LLM's output hundreds of times and pick the best result. That is a hard-to-replicate technical advantage.
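Why an immutable ledger makes forking cheap can be shown with a toy model. This is not Replit's implementation, whose details are proprietary; `Commit` and `Workspace` are hypothetical names for an append-only log where a fork is just a second pointer to the same history.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Commit:
    """One immutable ledger entry: a write to a single path."""
    parent: Optional["Commit"]
    path: str
    content: str


class Workspace:
    """A view of the file system: just a pointer to the latest commit."""

    def __init__(self, head: Optional[Commit] = None):
        self.head = head

    def write(self, path: str, content: str) -> None:
        # Never mutate old entries; append a new one on top.
        self.head = Commit(self.head, path, content)

    def fork(self) -> "Workspace":
        # Constant time: the fork shares all existing history.
        return Workspace(self.head)

    def read(self, path: str) -> Optional[str]:
        node = self.head
        while node is not None:
            if node.path == path:
                return node.content  # most recent write wins
            node = node.parent
        return None


base = Workspace()
base.write("main.py", "v1")
candidates = [base.fork() for _ in range(3)]
candidates[0].write("main.py", "candidate A")
```

Each fork can receive a different sampled change without copying any files, and the base workspace is unaffected by whatever the candidates write.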

Newer AI cloud providers gain a performance advantage by building their infrastructure entirely on NVIDIA's integrated ecosystem, including specialized networking. Incumbent clouds often must patch their legacy, CPU-centric systems, creating inefficiencies that 'neo-clouds' without technical debt can avoid.

Contrary to the belief that object storage (like S3) is the future, the traditional file system is poised for a comeback as the universal interface for data. Its ubiquity and familiarity make it the ideal layer for next-gen innovation, especially if it can be re-architected for the cloud era.

The AI space moves too quickly for slow, consensus-driven standards bodies like the IETF. MCP opted for a traditional open-source model with a small core maintainer group that makes final decisions. This hybrid of consensus and dictatorship enables the rapid iteration necessary to keep pace with AI advancements.

Complex orchestration middleware isn't necessary for multi-agent workflows. A simple file system can act as a reliable handoff mechanism. One agent writes its output to a file, and the next agent reads it. This approach is simple, avoids API issues, and is highly robust.
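A file-based handoff of this kind might look like the sketch below. The agent functions, the `step1.json` file name, and the JSON payload shape are all assumptions made for illustration; the write goes to a temporary file first and is then renamed, so a reader never sees a half-written file.

```python
import json
import os
import tempfile


def write_handoff(path, payload):
    """Write JSON to a temp file, then rename it into place atomically."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        json.dump(payload, handle)
    os.replace(tmp_path, path)


def read_handoff(path):
    """Load the JSON payload left by the previous agent."""
    with open(path, "r", encoding="utf-8") as handle:
        return json.load(handle)


def agent_one(workdir):
    """Hypothetical first agent: produces a summary and hands it off."""
    out_path = os.path.join(workdir, "step1.json")
    write_handoff(out_path, {"summary": "three key points", "status": "done"})
    return out_path


def agent_two(handoff_path):
    """Hypothetical second agent: picks up where the first left off."""
    data = read_handoff(handoff_path)
    return "expanding: " + data["summary"]


with tempfile.TemporaryDirectory() as workdir:
    result = agent_two(agent_one(workdir))
```

The handoff file also doubles as a record of what each step produced, which can help when debugging a failed run.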