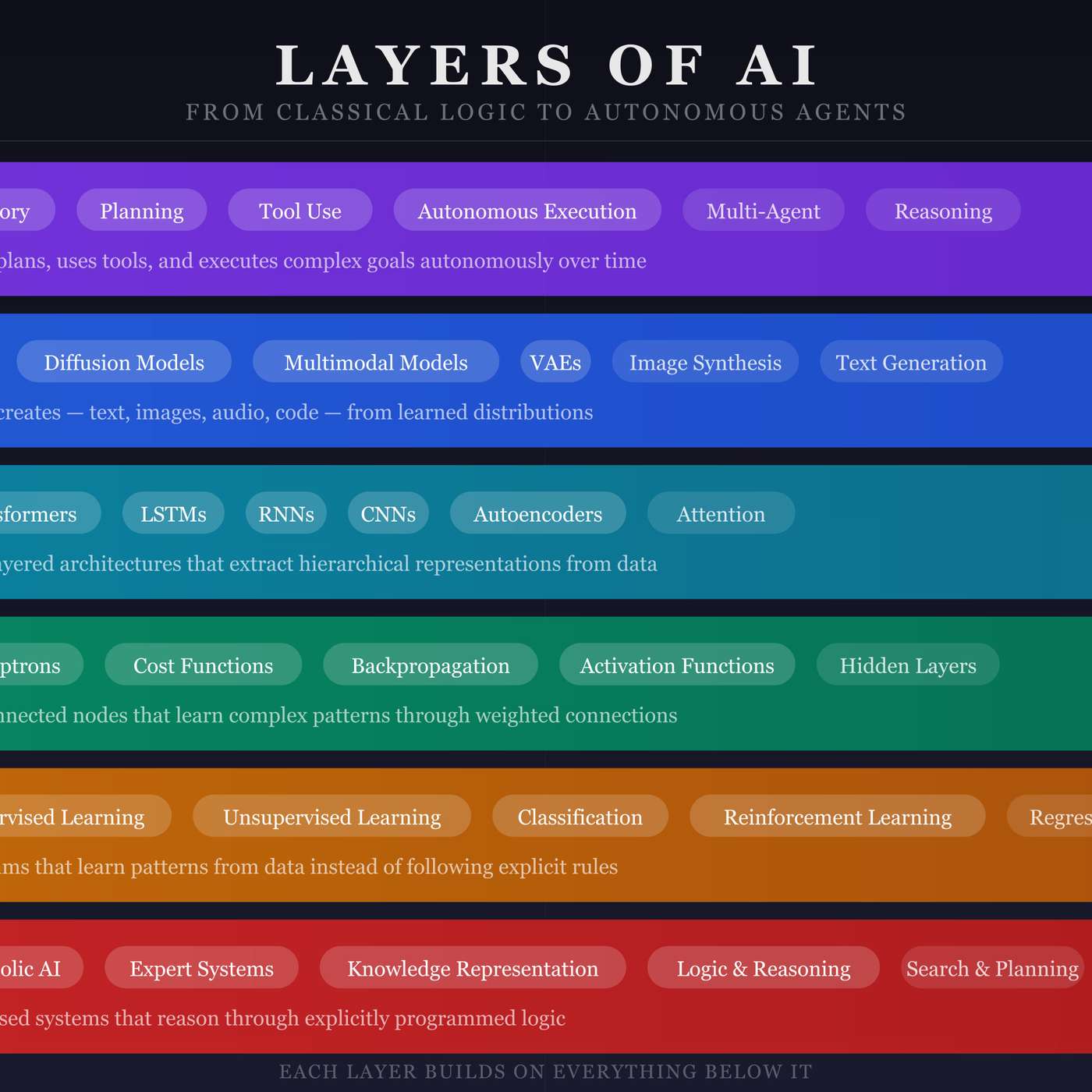

Not every business problem requires an LLM. Using a simple classifier (Layer 2) for email sorting or a deep learning model (Layer 4) for recommendations is more efficient than defaulting to the latest generative AI (Layer 5/6). This layered thinking saves costs, reduces complexity, and builds better products.

Related Insights

Many AI developers get distracted by the 'LLM hype,' constantly chasing the best-performing model. The real focus should be on solving a specific customer problem. The LLM is a component, not the product, and deterministic code or simpler tools are often better for certain tasks.

Instead of relying on a single large language model to solve every problem, organizations can achieve higher ROI and faster, more accurate results by deploying smaller, specialized AI tools. Focusing these tools on targeted use cases and curated data sets avoids introducing unnecessary complexity and error.

Don't use your most powerful and expensive AI model for every task. A crucial skill is model triage: using cheaper models for simple, routine tasks like monitoring and scheduling, while saving premium models for complex reasoning, judgment, and creative work.
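As a rough illustration of model triage, the routing rule described above might be sketched as a small lookup. The tier names (`small-model`, `premium-model`) and the fallback behavior for unrecognized tasks are assumptions made for this example, not something the insight specifies; the task categories are the ones it names.

```python
# Hypothetical tier names; substitute whatever models you actually use.
CHEAP_MODEL = "small-model"
PREMIUM_MODEL = "premium-model"

# Task categories named in the insight above.
ROUTINE_TASKS = {"monitoring", "scheduling"}
COMPLEX_TASKS = {"reasoning", "judgment", "creative"}


def pick_model(task_type: str) -> str:
    """Return the model tier to use for a given task category."""
    if task_type in ROUTINE_TASKS:
        return CHEAP_MODEL
    if task_type in COMPLEX_TASKS:
        return PREMIUM_MODEL
    # Assumption: send unrecognized tasks to the premium tier so that
    # quality is not silently degraded. A cost-first policy could
    # default to the cheap tier instead.
    return PREMIUM_MODEL


if __name__ == "__main__":
    for task in ("scheduling", "judgment", "unknown"):
        print(task, "->", pick_model(task))
```

The point of keeping the rule this explicit is that the cost trade-off becomes a reviewable piece of configuration rather than a habit of always calling the largest model.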

High productivity isn't about using AI for everything. It's a disciplined workflow: breaking a task into sub-problems, using an LLM for high-leverage parts like scaffolding and tests, and reserving human focus for the core implementation. This avoids the sunk cost of forcing AI on unsuitable tasks.

For most enterprise tasks, massive frontier models are overkill: a "bazooka to kill a fly." Smaller, domain-specific models are often more accurate for targeted use cases, significantly cheaper to run, and more secure. They aim to be the "best-in-class employee" for a specific task, not a generalist.

A 'GenAI solves everything' mindset is flawed. High-latency models are unsuitable for real-time operational needs, like optimizing a warehouse worker's scanning path, which requires millisecond responses. The key is to apply the right tool, whether an optimizer, machine learning, or GenAI, to the specific business problem.

Instead of relying solely on massive, expensive, general-purpose LLMs, the trend is toward creating smaller, focused models trained on specific business data. These "niche" models are more cost-effective to run, less likely to hallucinate, and far more effective at performing specific, defined tasks for the enterprise.

Resist the urge to apply LLMs to every problem. A better approach is using a 'first principles' decision tree. Evaluate if the task can be solved more simply with data visualization or traditional machine learning before defaulting to a complex, probabilistic, and often overkill GenAI solution.
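One way to picture such a decision tree is as an ordered series of checks that only falls through to GenAI when the simpler options do not apply. The two questions used here (`answerable_by_chart`, `has_labeled_data`) are hypothetical criteria chosen for illustration; the insight does not say which questions the tree should ask.

```python
from dataclasses import dataclass


@dataclass
class Task:
    # Hypothetical criteria, not taken from the source.
    answerable_by_chart: bool  # would a chart or dashboard answer the question?
    has_labeled_data: bool     # is there labeled data for a conventional model?


def choose_approach(task: Task) -> str:
    """Try the simplest option first and fall through to GenAI last."""
    if task.answerable_by_chart:
        return "data visualization"
    if task.has_labeled_data:
        return "traditional machine learning"
    return "generative AI"


if __name__ == "__main__":
    print(choose_approach(Task(answerable_by_chart=True, has_labeled_data=True)))
    print(choose_approach(Task(answerable_by_chart=False, has_labeled_data=True)))
    print(choose_approach(Task(answerable_by_chart=False, has_labeled_data=False)))
```

The ordering encodes the "simplest first" principle: each branch is checked before the more complex, probabilistic option below it.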

State-of-the-art models like Claude Opus are often overkill and unnecessarily expensive for simple, routine tasks like summarizing emails. Using cheaper, less powerful models for these straightforward automations provides significant cost savings without sacrificing performance where it's not needed.

Just as you use different social media apps for different purposes, you should use various specialized AI tools for specific tasks. Relying on a single tool like ChatGPT for everything results in watered-down solutions. A better approach is to build a toolkit, matching the right AI to the right problem.