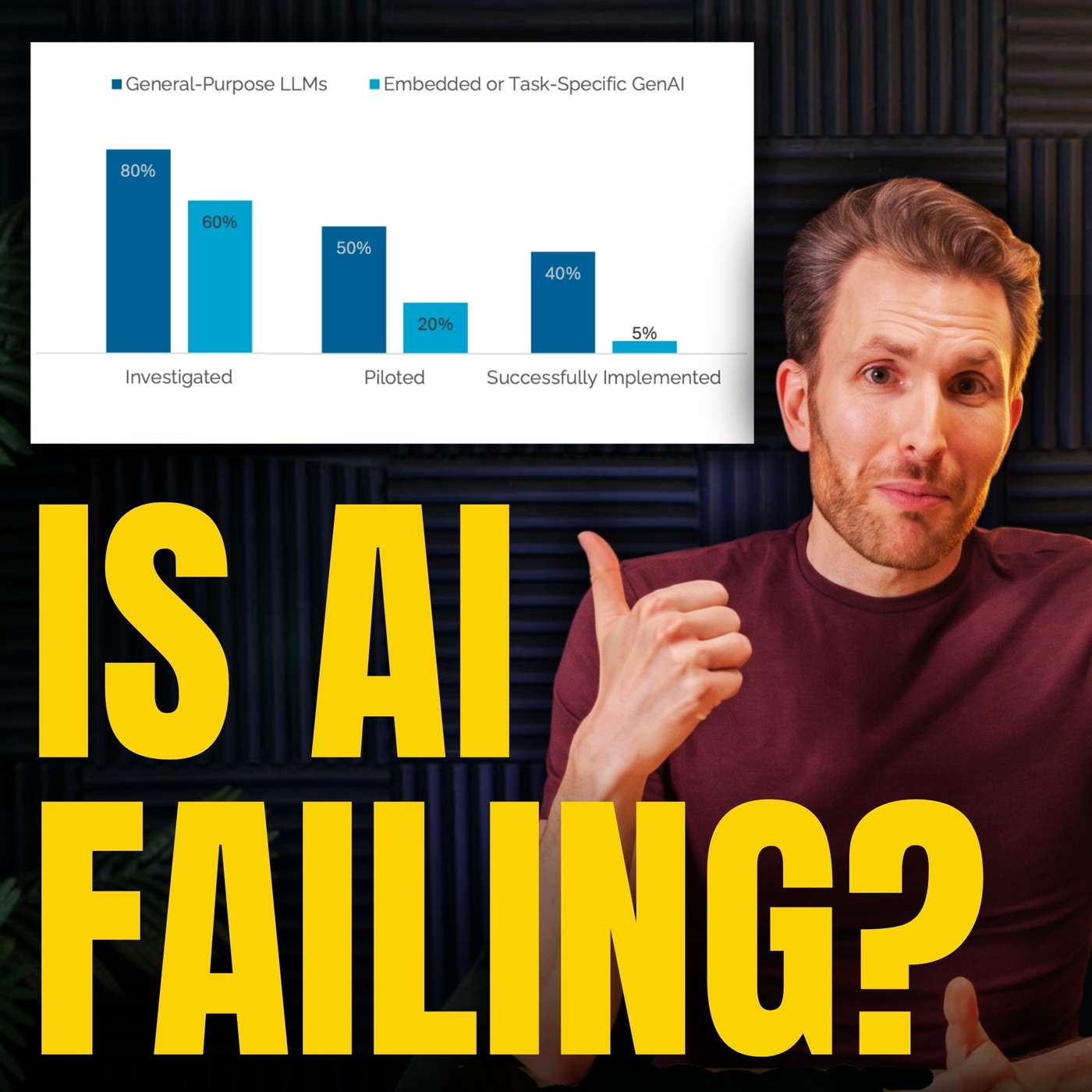

An "MIT study" on AI failures concluded the solution was "agentic AI frameworks," precisely the technology the authors were building and selling. This demonstrates how research, especially when not peer-reviewed, can function as sophisticated content marketing with an undisclosed conflict of interest, using institutional credibility to generate commercial leads.

Related Insights

Anthropic repeatedly launches new models alongside studies on those models' catastrophic potential. This "Chicken Little" routine, whether sincere or tactical, generates hype and media attention, creating a sense of urgency that drives awareness and adoption of its products.

Medium's CEO revealed the company providing data for a critical Wired article about "AI slop" was simultaneously trying to sell its AI detection services to Medium. This highlights a potential conflict of interest where a data source may benefit directly from negative press about a target company.

There is emerging evidence of a "pay-to-play" dynamic in AI search. Platforms like ChatGPT seem to disproportionately cite content from sources with which they have commercial deals, such as the Financial Times and Reddit. This suggests paid partnerships can heavily influence visibility in AI-generated results.

A new marketing tactic involves creating high-quality, AI-generated content on platforms like Reddit to promote a product. The goal is to have this seemingly authentic user content indexed and then surfaced by LLMs like ChatGPT in their summaries, creating an insidious and hard-to-detect marketing channel.

Marketing leaders shouldn't wait for FTC regulation to establish ethical AI guidelines. The real risk of using undisclosed AI, like virtual influencers, isn't immediate legal trouble but the long-term erosion of consumer trust. Once customers feel misled, that brand damage is incredibly difficult to repair.

While commercial conflicts of interest are heavily scrutinized, the pressure on academics to produce positive results to secure their next large institutional grant is often overlooked. This intense pressure to publish favorably creates a significant, less-acknowledged form of research bias.

A growing marketing strategy for new AI companies is to pay influencers for positive promotion without requiring them to disclose it as an advertisement. This creates an artificial sense of organic buzz and can be considered a form of lobbying to win mindshare on social platforms, blurring the line between authentic recommendation and paid placement.

OpenAI's transformation from a non-profit to a for-profit entity is framed as a fundamental deception. This "bait and switch" enabled it to amass data and talent under the benevolent banner of research, a move that would have been fiercely resisted by creators and competitors had its commercial ambitions been transparent.

By employing or bankrolling a majority of AI researchers, large tech firms dictate the research agenda. They also censor or fire researchers, like Dr. Timnit Gebru at Google, whose work exposes the harms and limitations of their commercial models.

A flawed study went viral because it carried the "MIT" brand, prompting media to report on it without scrutiny. The actual report was gated behind a request form, preventing journalists from fact-checking its questionable claims. This combination allowed a misleading narrative to shape market sentiment and public opinion before it could be debunked.