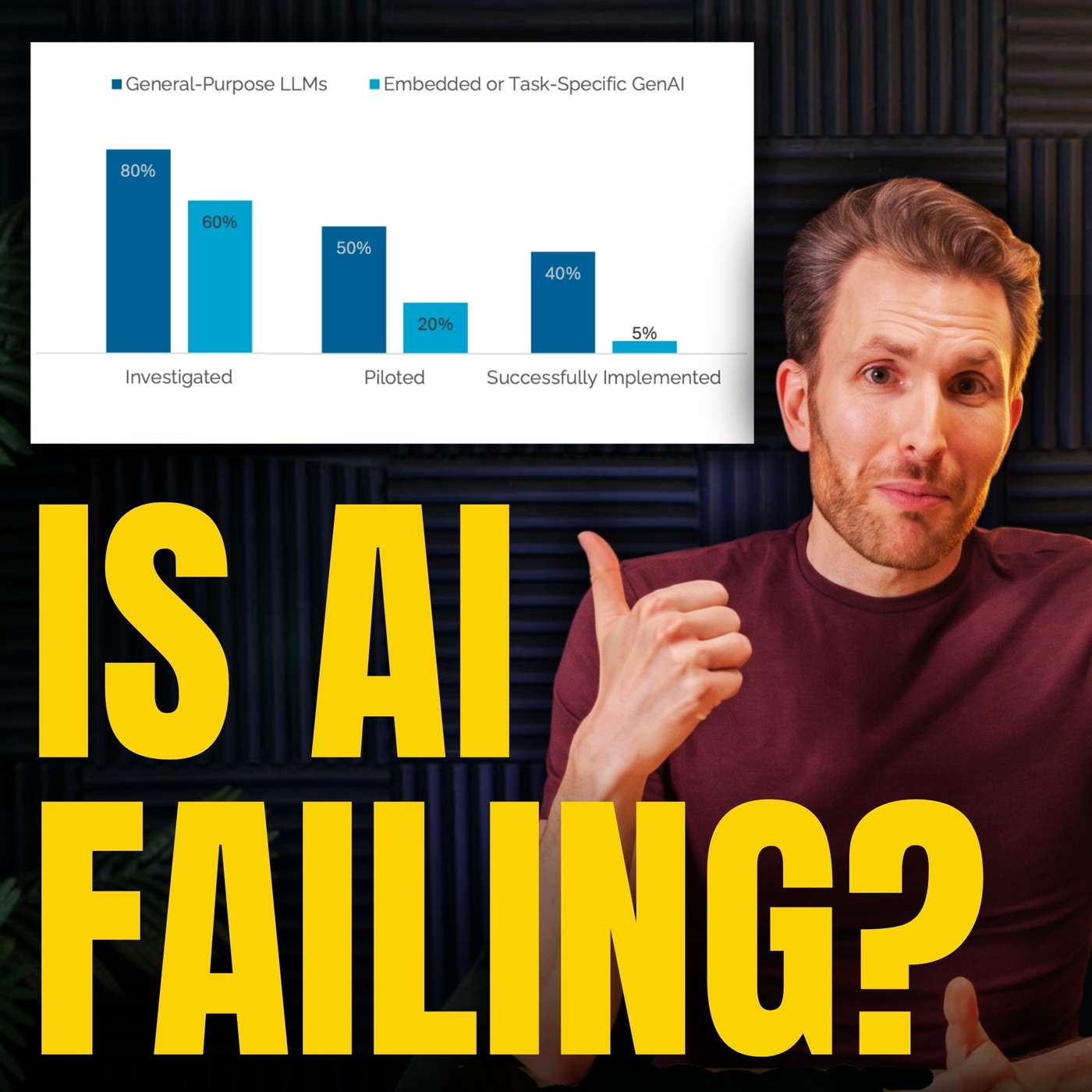

The viral "95% AI failure rate" statistic was not about projects failing, but about companies that never even started a pilot. Equating non-participation with failure created a misleadingly negative perception of AI adoption. It is a common pitfall in tech reporting, and it distorts how the public and investors read the market.

An "MIT study" on AI failures concluded the solution was "agentic AI frameworks," precisely the technology the authors were building and selling. This demonstrates how research, especially when not peer-reviewed, can function as sophisticated content marketing with an undisclosed conflict of interest, using institutional credibility to generate commercial leads.

An AI pilot study defined success as "marked and sustained" profit impact within six months. This impossibly high bar automatically classified projects that broke even, were on track for future profit, or provided non-financial benefits as "failures," thus obscuring the real, incremental value of new technology deployments.

A flawed study went viral because it carried the "MIT" brand, prompting media to report on it without scrutiny. The actual report was gated behind a request form, preventing journalists from fact-checking its questionable claims. This combination of brand authority and restricted access allowed a misleading narrative to shape market sentiment and public opinion before it could be debunked.