A flawed study went viral because it carried the "MIT" brand, prompting media to report on it without scrutiny. The actual report was gated behind a request form, preventing journalists from fact-checking its questionable claims. This combination allowed a misleading narrative to shape market sentiment and public opinion before it could be debunked.

Related Insights

Research from Duncan Watts shows the bigger societal issue isn't fabricated facts (misinformation), but rather taking true data points and drawing misleading conclusions (misinterpretation). This happens 41 times more often and is a more insidious problem for decision-makers.

When media reports on prediction market odds, that coverage itself becomes an event that influences the odds. This creates a feedback loop where the market isn't predicting an external reality but is reacting to its own coverage, effectively monetizing a self-generated rumor mill.

The arrest of Nima Momeni, a tech professional known to Bob Lee, completely contradicted the dominant narrative of a random street crime. However, the initial, incorrect story shaped global perceptions of San Francisco, highlighting that facts struggle to undo the damage of viral misinformation.

When media outlets collectively push a single narrative, it becomes consensus reality. If the story is later proven false, they retract it in unison. This "school of fish" behavior provides safety in numbers, making it impossible to hold any single journalist or outlet accountable for being wrong.

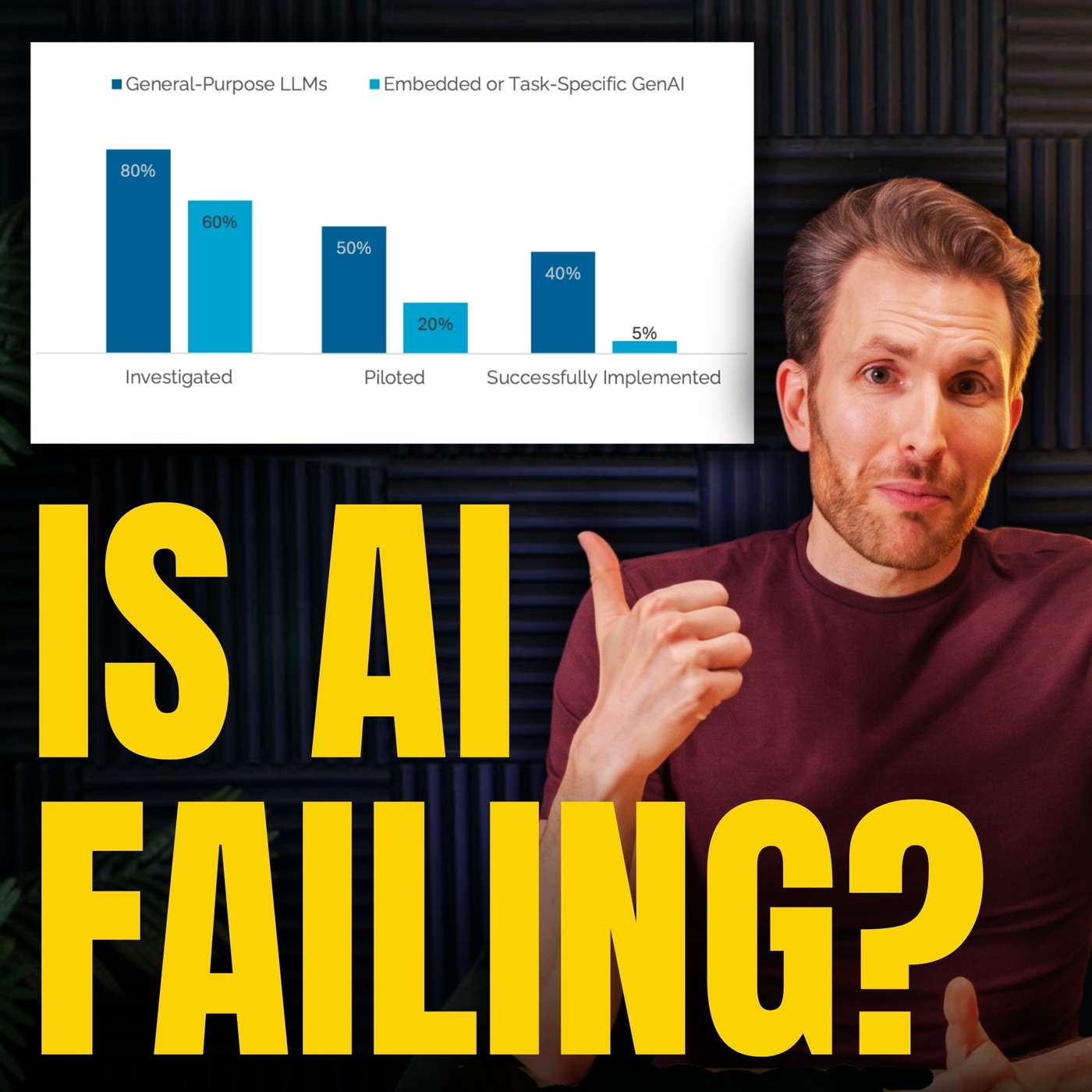

An "MIT study" on AI failures concluded the solution was "agentic AI frameworks," precisely the technology the authors were building and selling. This demonstrates how research, especially when not peer-reviewed, can function as sophisticated content marketing with an undisclosed conflict of interest, using institutional credibility to generate commercial leads.

A viral Substack post detailing a fictional AI-induced economic crisis caused a real market drop. This shows how markets, sensitized to AI risk, can be moved by compelling narratives that masquerade as analysis, even without data, especially when amplified by motivated actors like short-sellers.

The public appetite for surprising, "Freakonomics-style" insights creates a powerful incentive for researchers to generate headline-grabbing findings. This pressure can lead to data manipulation and shoddy science, contributing to the replication crisis in social sciences as researchers chase fame and book deals.

Releasing 3 million documents simultaneously, combined with fabricated files circulating on social media, creates an environment where discerning fact from fiction is nearly impossible. This information overload serves as a modern form of obfuscation, hiding truth in plain sight.

During a crisis, a simple, emotionally resonant narrative (e.g., "colluding with hedge funds") will always be more memorable and spread faster than a complex, technical explanation (e.g., "clearinghouse collateral requirements"). This highlights the profound asymmetry in crisis communications and narrative warfare.

The viral "95% AI failure rate" statistic wasn't about projects failing, but about companies not even starting a pilot. Equating non-participation with failure created a misleadingly negative perception of AI adoption, a common framing pitfall in tech reporting that affects both the public and investors.