To manage the complexity and risk of AI agents, companies should adopt a centralized model. Rather than allowing individuals to build agents freely, a dedicated internal team should build, govern, and distribute a suite of approved agents to departments, ensuring consistency and control.

Related Insights

An ungoverned AI is like a chaotic, unpredictable forest. To achieve consistent business value, AI must be 'farmed': governance, organization, and boundaries are applied to cultivate predictable results. This regulated approach is key to harnessing AI for reliable revenue generation.

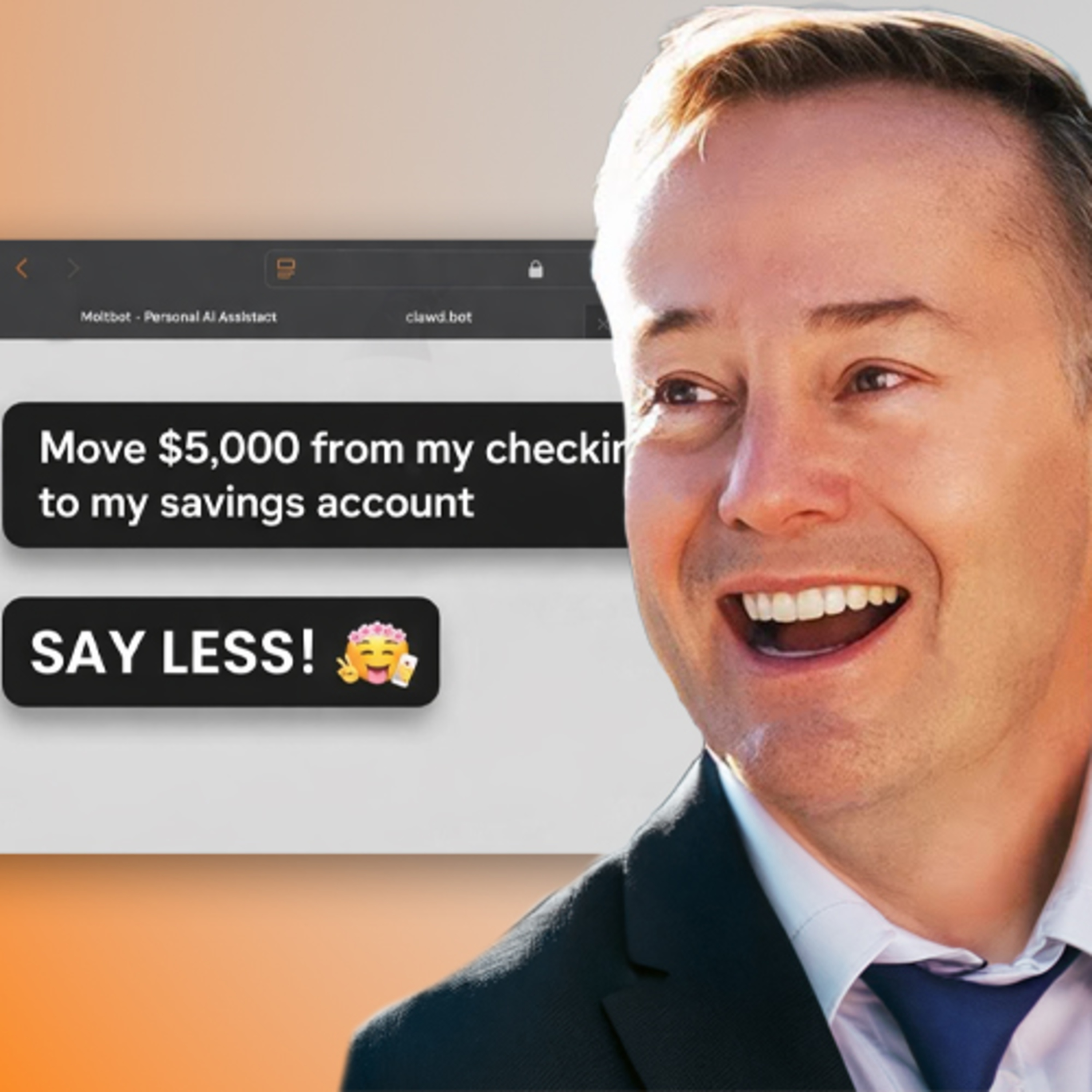

The defining characteristic of an enterprise AI agent isn't its intelligence, but its specific, auditable permissions to perform tasks. This reframes the challenge from managing AI 'thinking' to governing AI 'actions' through trackable access controls, similar to how traditional APIs are managed and monitored.
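The idea of governing actions rather than "thinking" can be illustrated with a minimal sketch. Everything here is hypothetical and for illustration only: the `AuditedAgent` class, the action names, and the invoice identifier are assumptions, not part of any real product or of the source. The sketch checks each attempted action against an explicit allowlist and records every attempt, allowed or denied, in an audit log.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditedAgent:
    """Hypothetical agent wrapper: every action attempt is checked and logged."""
    name: str
    allowed_actions: frozenset
    audit_log: list = field(default_factory=list)

    def perform(self, action: str, target: str) -> bool:
        # The permission check is a plain allowlist lookup.
        allowed = action in self.allowed_actions
        # Denied attempts are logged too, so the trail shows what was tried.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": self.name,
            "action": action,
            "target": target,
            "allowed": allowed,
        })
        return allowed


# Hypothetical agent that may read invoices and draft emails, nothing else.
agent = AuditedAgent("invoice-bot", frozenset({"read_invoice", "draft_email"}))
read_ok = agent.perform("read_invoice", "INV-001")
pay_ok = agent.perform("issue_payment", "INV-001")
```

In this sketch the audit log plays the role that request logs play for a traditional API: it answers "what did this agent try to do, and was it permitted?" without needing to inspect the model's reasoning.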

To ensure governance and avoid redundancy, Experian centralizes AI development. This approach treats AI as a core platform capability, allowing for the reuse of models and consistent application of standards across its global operations.

As AI evolves from single-task tools to autonomous agents, the human role transforms. Instead of simply using AI, professionals will need to manage and oversee multiple AI agents, ensuring their actions are safe, ethical, and aligned with business goals. In that role, people act as a critical control layer.

Managing numerous AI agents is like managing a team of people, and it can create a single point of failure. This necessitates a new dedicated role, a "Chief Agent Officer," with a blend of technical and marketing skills to oversee operations, prevent system failure, and ensure continuity.

Instead of relying solely on human oversight, AI governance will evolve into a system where higher-level "governor" agents audit and regulate other AIs. These specialized agents will manage the core programming, permissions, and ethical guidelines of their subordinates.

Air Inc.'s tooling shows that scaling recursive self-improvement requires more than a feedback loop. A crucial component is a governance system that isolates the "blast radius" of agents interacting with external, potentially malicious, data. This involves limiting their tools and permissions to prevent a single compromised agent from damaging the system.
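One way to picture "blast radius" isolation is a per-agent tool allowlist. The sketch below is an assumption-laden illustration, not a description of any specific company's tooling: `ToolSandbox`, `read_web_page`, and `delete_records` are hypothetical names. An agent that reads untrusted external data is given only a read tool, so even if it is compromised it has no way to call a destructive tool.

```python
from typing import Callable, Dict


# Hypothetical tools; real ones would perform actual I/O.
def read_web_page(url: str) -> str:
    return f"<contents of {url}>"


def delete_records(table: str) -> str:
    return f"deleted {table}"


class ToolSandbox:
    """Hypothetical sandbox: an agent can call only the tools it was granted."""

    def __init__(self, tools: Dict[str, Callable[[str], str]]):
        # Copy the mapping so later changes by the caller cannot widen access.
        self._tools = dict(tools)

    def call(self, tool_name: str, arg: str) -> str:
        if tool_name not in self._tools:
            raise PermissionError(f"tool not granted: {tool_name}")
        return self._tools[tool_name](arg)


# The agent exposed to external (possibly malicious) data gets a read-only tool.
untrusted_reader = ToolSandbox({"read_web_page": read_web_page})
# A separate internal agent, never shown external data, holds the risky tool.
internal_admin = ToolSandbox({"delete_records": delete_records})

page = untrusted_reader.call("read_web_page", "https://example.com")
```

The design choice is that permissions follow exposure: the more untrusted input an agent sees, the fewer tools it holds.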

For enterprises, scaling AI content without built-in governance is reckless. Rather than manual policing, guardrails like brand rules, compliance checks, and audit trails must be integrated from the start. The principle is "AI drafts, people approve," ensuring speed without sacrificing safety.
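The "AI drafts, people approve" principle can be sketched as a small publishing gate. The names below (`Draft`, `run_guardrails`, `human_approve`, `publish`, and the banned phrase) are hypothetical and chosen only for illustration. The sketch runs an automated rule check, requires an explicit human approval step, and appends each step to an audit trail.

```python
from dataclasses import dataclass, field

# Hypothetical brand/compliance rule: phrases that must never be published.
BANNED_PHRASES = ("guaranteed returns",)


@dataclass
class Draft:
    text: str
    status: str = "pending"  # pending -> approved, or pending -> blocked
    audit_trail: list = field(default_factory=list)


def run_guardrails(draft: Draft) -> bool:
    """Automated check that runs before any human sees the draft."""
    violations = [p for p in BANNED_PHRASES if p in draft.text.lower()]
    draft.audit_trail.append(("guardrails", tuple(violations)))
    if violations:
        draft.status = "blocked"
    return not violations


def human_approve(draft: Draft, reviewer: str) -> None:
    """Only a pending draft (one that was not blocked) can be approved."""
    if draft.status != "pending":
        raise ValueError(f"cannot approve a draft with status {draft.status!r}")
    draft.status = "approved"
    draft.audit_trail.append(("approved_by", reviewer))


def publish(draft: Draft) -> str:
    """Publishing is refused unless a person approved the draft."""
    if draft.status != "approved":
        raise PermissionError("draft has not been approved by a person")
    return draft.text


good = Draft("Our new savings account is now available.")
run_guardrails(good)
human_approve(good, "editor@example.com")
published = publish(good)

bad = Draft("Invest today for GUARANTEED RETURNS!")
bad_passed = run_guardrails(bad)
```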

Create a clear chain of command for AI agents. Allow a primary "builder" agent to spawn sub-agents for specific tasks, but hold it directly responsible for their output. The "reviewer" or quality agent, however, should be a singleton with no subordinates, acting as a final, singular gatekeeper like a principal engineer.
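The chain of command described above might look like the following minimal sketch. All class and method names (`BuilderAgent`, `SubAgent`, `ReviewerAgent`, `spawn`, `collect_output`, `review`) are hypothetical, and the review rule is a placeholder. The builder can spawn sub-agents and is named as responsible for their combined output, while the reviewer is a singleton with no ability to spawn subordinates.

```python
class SubAgent:
    """Hypothetical worker created by a builder for one specific task."""

    def __init__(self, task: str, owner: "BuilderAgent"):
        self.task = task
        self.owner = owner

    def run(self) -> str:
        return f"done: {self.task}"


class BuilderAgent:
    """Hypothetical builder: may spawn sub-agents, but answers for their output."""

    def __init__(self, name: str):
        self.name = name
        self.sub_agents = []

    def spawn(self, task: str) -> SubAgent:
        sub = SubAgent(task, owner=self)
        self.sub_agents.append(sub)
        return sub

    def collect_output(self) -> dict:
        # The builder's name travels with the results: it is accountable.
        return {
            "responsible": self.name,
            "results": [sub.run() for sub in self.sub_agents],
        }


class ReviewerAgent:
    """Hypothetical singleton gatekeeper with no spawn method by design."""

    _instance = None

    def __new__(cls):
        # Always hand back the same instance, so there is one final reviewer.
        if cls._instance is None:
            cls._instance = super().__new__(cls)
        return cls._instance

    def review(self, submission: dict) -> bool:
        # Placeholder rule: every sub-task must report completion.
        return all(r.startswith("done:") for r in submission["results"])


builder = BuilderAgent("builder-1")
builder.spawn("write unit tests")
builder.spawn("update docs")
submission = builder.collect_output()
approved = ReviewerAgent().review(submission)
```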

Instead of creating one monolithic "Ultron" agent, build a team of specialized agents (e.g., Chief of Staff, Content). This parallels existing business mental models, making the system easier for humans to understand, manage, and scale.