Get your free personalized podcast brief

We scan new podcasts and send you the top 5 insights daily.

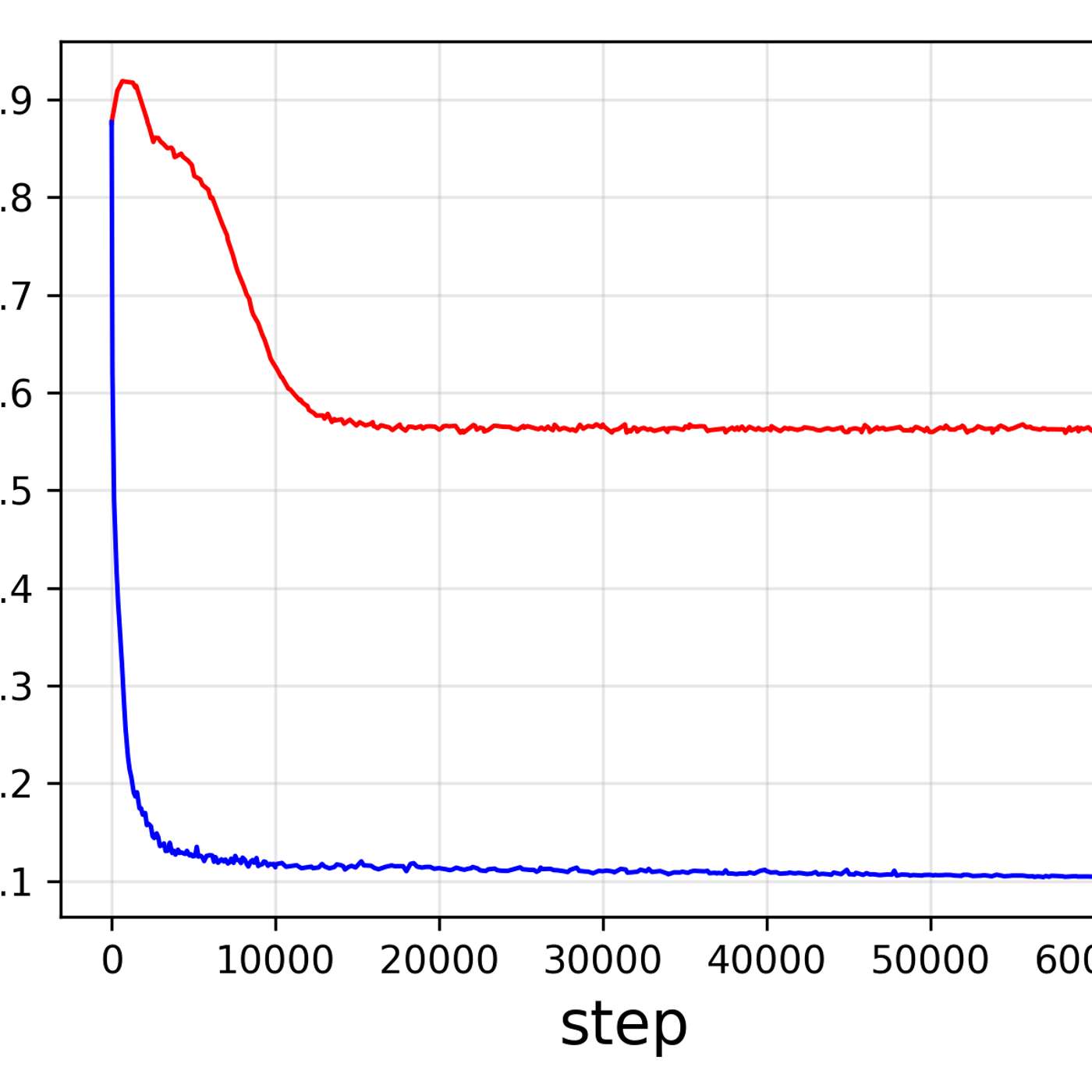

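The unlock for self-supervised vision models like Meta's DINO series is a student-teacher training technique. A "teacher" model supplies target outputs that a "student" model is trained to match on tasks like predicting masked image patches; in the original DINO recipe the teacher shares the student's architecture and is a slowly updated moving average of the student's weights rather than a separately trained model. This process, scaled across billions of images, builds a rich latent understanding without needing explicit labels.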
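A minimal sketch of the two core pieces of this kind of student-teacher training may help: the loss that pulls the student's output toward the teacher's, and the moving-average update that keeps the teacher a smoothed copy of the student. This is an illustration in plain Python, not Meta's implementation; the function names, the temperatures, and the momentum value are assumptions chosen for the example.

```python
import math

def softmax(logits, temperature):
    """Temperature-scaled softmax over a list of floats."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits,
                      student_temp=0.1, teacher_temp=0.04):
    """Cross-entropy of the student's distribution against the teacher's.

    A lower teacher temperature sharpens the teacher's targets.
    The temperatures here are illustrative values (assumption).
    """
    p_teacher = softmax(teacher_logits, teacher_temp)
    p_student = softmax(student_logits, student_temp)
    # Small epsilon guards against log(0) when a probability underflows.
    return -sum(t * math.log(s + 1e-12) for t, s in zip(p_teacher, p_student))

def ema_update(teacher_weights, student_weights, momentum=0.996):
    """Move each teacher weight a small step toward the student weight."""
    return [momentum * t + (1.0 - momentum) * s
            for t, s in zip(teacher_weights, student_weights)]

# The student is penalized more when it disagrees with the teacher.
agree = distillation_loss([2.0, 0.5, -1.0], [2.0, 0.5, -1.0])
disagree = distillation_loss([-1.0, 0.5, 2.0], [2.0, 0.5, -1.0])
print(agree < disagree)
```

In a real training loop only the student receives gradients from the loss; the teacher changes solely through the moving-average update, which is what keeps its targets stable.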

Related Insights

The most effective path to production for vision tasks is not using large API models directly. Instead, companies use a state-of-the-art model (like Meta's SAM) to auto-label a high-quality, task-specific dataset. This dataset then trains a smaller, faster, owned model for efficient edge deployment.

Score addresses the high cost of AI vision by using a decentralized network of miners to "distill" massive, general-purpose models (e.g., 3.4GB) into hyper-specialized, tiny models (e.g., 50MB). This allows complex vision tasks to run on local CPUs, unlocking use cases previously blocked by prohibitive GPU costs.

AI's evolution can be seen in two eras. The first, the "ImageNet era," required massive human effort for supervised labeling within a fixed ontology. The modern era unlocked exponential growth by developing algorithms that learn from the implicit structure of vast, unlabeled internet data, removing the human bottleneck.

Once models reach human-level performance via supervised learning, they hit a ceiling, since human-written labels can only teach what the labelers already know. The next step to achieve superhuman capabilities is moving to a Reinforcement Learning from Human Feedback (RLHF) paradigm, where humans provide preference rankings ("this is better") rather than creating ground-truth labels from scratch.
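To make the "this is better" signal concrete, here is a hedged sketch of one common way preference rankings are turned into a training objective: a pairwise (Bradley-Terry style) loss on the scores a reward model assigns to the preferred and the rejected output. The source does not say which objective is meant, so the function names and the scalar rewards below are assumptions for illustration.

```python
import math

def preference_probability(reward_preferred, reward_rejected):
    """Modelled probability that the preferred output beats the rejected one."""
    return 1.0 / (1.0 + math.exp(-(reward_preferred - reward_rejected)))

def preference_loss(reward_preferred, reward_rejected):
    """Negative log-likelihood of the human's ranking under the model."""
    return -math.log(preference_probability(reward_preferred, reward_rejected))

# The loss is small when the reward model already agrees with the human
# ranking, and large when it scores the rejected output higher.
print(preference_loss(2.0, 0.0) < preference_loss(0.0, 2.0))
```

Note that the human never supplies a "correct answer" here, only an ordering between two candidates, which is why this kind of feedback can keep being useful even when the candidates are better than what the labeler could write.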

While acknowledging the power of scale, Moonlake argues that incorporating symbolic structure allows models to learn with orders of magnitude less data. This mirrors human cognition, which uses abstracted semantic descriptions rather than processing every pixel.

Static data scraped from the web is becoming less central to AI training. The new frontier is "dynamic data," where models learn through trial-and-error in synthetic environments (like solving math problems), effectively creating their own training material via reinforcement learning.

To bridge the learning efficiency gap between humans and AI, researchers use meta-learning. This technique learns optimal initial weights for a neural network, giving it a "soft bias" that starts it closer to a good solution. This mimics the inherent inductive biases that allow humans to learn efficiently from limited data.
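As a rough illustration of learning a good initial weight, the sketch below uses a Reptile-style update, a simple first-order meta-learning method; the source does not say which method the researchers use, so this is a swapped-in example. The toy task family (fitting `y = slope * x` with slopes between 2 and 4), the function names, and all hyperparameters are assumptions.

```python
import random

def inner_sgd(w, slope, rng, steps=5, lr=0.1):
    """Adapt weight w to one task (y = slope * x) with a few SGD steps."""
    for _ in range(steps):
        x = rng.uniform(-1.0, 1.0)
        grad = 2.0 * (w * x - slope * x) * x  # derivative of squared error
        w -= lr * grad
    return w

def reptile(meta_steps=2000, meta_lr=0.1, seed=0):
    """Learn an initial weight that adapts quickly across the task family."""
    rng = random.Random(seed)
    w_init = 0.0
    for _ in range(meta_steps):
        slope = rng.uniform(2.0, 4.0)              # sample a task
        w_adapted = inner_sgd(w_init, slope, rng)  # adapt to that task
        w_init += meta_lr * (w_adapted - w_init)   # nudge init toward it
    return w_init

w0 = reptile()
print(abs(w0 - 3.0) < 0.5)
```

The learned starting weight ends up near the middle of the task family, so a new task drawn from it needs only a few gradient steps. That starting point plays the role of the "soft bias" described above: it favors plausible solutions without ruling out others.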

A significant real-world challenge is that users have different mental models for the same visual concept (e.g., does "hand" include the arm?). Fine-tuning is therefore not just for learning new objects, but for aligning the model's understanding with a specific user's or domain's unique definition.

The ability of a single encoder to excel at both understanding and generating images indicates these two tasks are not as distinct as they seem. It suggests they rely on a shared, fundamental structure of visual information that can be captured in one unified representation.

Andrej Karpathy's open-source tool enables small AI models to autonomously experiment and improve their own training processes. These discoveries, made on a single home computer, can translate to large-scale models, shifting research from human-led efforts to automated, evolutionary computation.