Ford and the Department of Agriculture each claimed that 75% of tractors ran on a particular fuel, but they named different fuels. Neither was interested in resolving the discrepancy; each preferred to assert its own expertise. This shows how institutions can prioritize being seen as an authority over being correct, perpetuating misinformation and institutional failure.

Related Insights

We over-rely on the reputations of institutions like Ford or government departments. Their past successes create a 'brand halo' that leads us to accept their data uncritically, even when it's contradictory. Our systems lack a reliable mechanism to challenge flawed institutional pronouncements after they are made official.

Lying is an inherent function of all powerful institutions throughout history, not an exception. Meetings in government often focus on 'what' to tell the public, not 'how' to tell the truth. Examples like asbestos in baby powder and the dangers of opioids show a pattern of denial that can last for decades before the truth is admitted.

Invoking 'studies say' or 'science backed' has become a rhetorical trick to claim intellectual authority and shut down conversation. It's wiser to adopt a default position of skepticism, as these phrases often precede weak or misrepresented claims, especially in soft sciences.

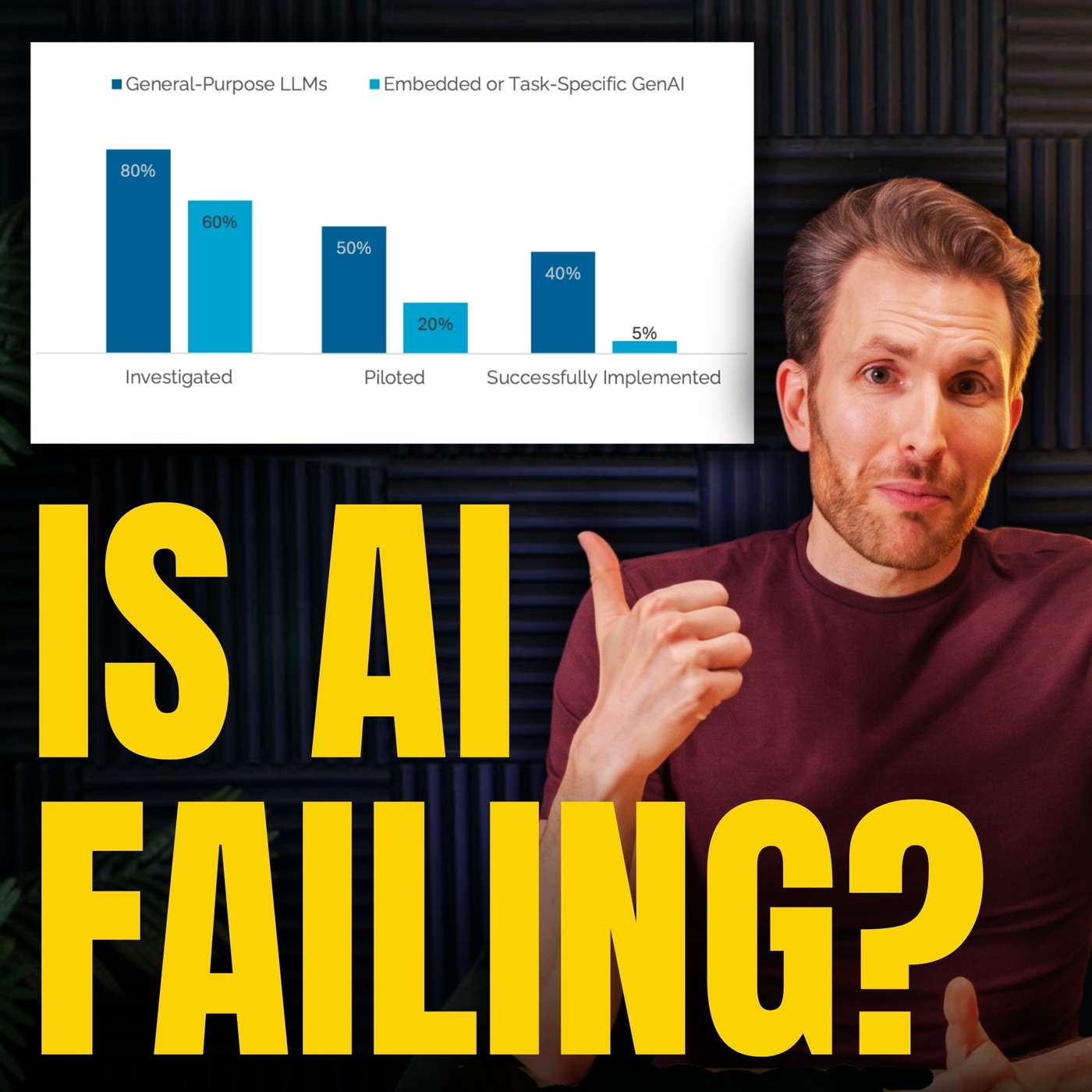

The reason smart AI experts continue to disagree on outcomes, despite new evidence, is that they operate from fundamentally different paradigms. One camp sees "always another bottleneck," while the other sees a pattern of overcoming past limitations. New data is simply used to reinforce these pre-existing worldviews.

Simply stating that conventional wisdom is wrong is a weak "gotcha" tactic. A more robust approach involves investigating the ecosystem that created the belief, specifically the experts who established it, and identifying their incentives or biases, which often reveals why flawed wisdom persists.

When presented with contradictory data on tractor fuel types, senior economists on a national rationing project laughed it off. This reveals a systemic indifference to data integrity, where institutional momentum and expert self-assurance override the need for accurate inputs, undermining the entire project's validity.

A flawed NTSB bus safety study contained too many errors to be a clean conspiracy, yet every error biased the result in the same direction, which rules out random incompetence. The true cause is often a systemic or tribal momentum toward a desired conclusion, a phenomenon more complex than simple fraud or ineptitude.

Contrary to popular belief, publication in a top academic journal doesn't guarantee a study is correct. The social sciences lack the precise experimental validation of hard sciences, allowing incorrect theories to have "long legs and survive" due to a lack of rigorous, focused scrutiny from peers.

Unlike financial traders who can quickly reverse a bad position, institutions like government agencies and media outlets find retractions too costly to their status and careers. They often 'stand by' flawed work rather than admit error, creating a system that lacks the self-correcting mechanisms necessary for finding truth.

A flawed study went viral because it carried the "MIT" brand, prompting media to report on it without scrutiny. The actual report was gated behind a request form, preventing journalists from fact-checking its questionable claims. This combination allowed a misleading narrative to shape market sentiment and public opinion before it could be debunked.