We over-rely on the reputations of institutions like Ford or government departments. Their past successes create a 'brand halo' that leads us to accept their data uncritically, even when it's contradictory. Our systems lack a reliable mechanism to challenge flawed institutional pronouncements after they are made official.

Related Insights

Research from Duncan Watts shows the bigger societal issue isn't fabricated facts (misinformation) but misleading conclusions drawn from true data points (misinterpretation). Misinterpretation happens 41 times more often and is a more insidious problem for decision-makers.

Corporate financials require maker-checker systems, audit trails, and severe penalties for fraud. Scientific research data often lacks these controls, with no audit trails or meaningful penalties for errors. This disparity suggests we should apply at least as much skepticism to academic papers as to financial reports.

Ford and the Department of Agriculture each claimed that 75% of tractors ran on a particular fuel, but they named different fuels. Neither was interested in resolving the discrepancy; each preferred to assert its own expertise. This shows how institutions can prioritize being seen as an authority over being correct, perpetuating misinformation and institutional failure.

When presented with contradictory data on tractor fuel types, senior economists on a national rationing project laughed it off. This reveals a systemic indifference to data integrity, where institutional momentum and expert self-assurance override the need for accurate inputs, undermining the entire project's validity.

A flawed NTSB bus safety study contained too many errors to be a clean conspiracy, yet all errors biased the result in one direction, ruling out random incompetence. The true cause is often a systemic or tribal momentum towards a desired conclusion, a phenomenon more complex than simple fraud or ineptitude.

Contrary to popular belief, publication in a top academic journal doesn't guarantee a study is correct. The social sciences lack the precise experimental validation of hard sciences, allowing incorrect theories to have "long legs and survive" due to a lack of rigorous, focused scrutiny from peers.

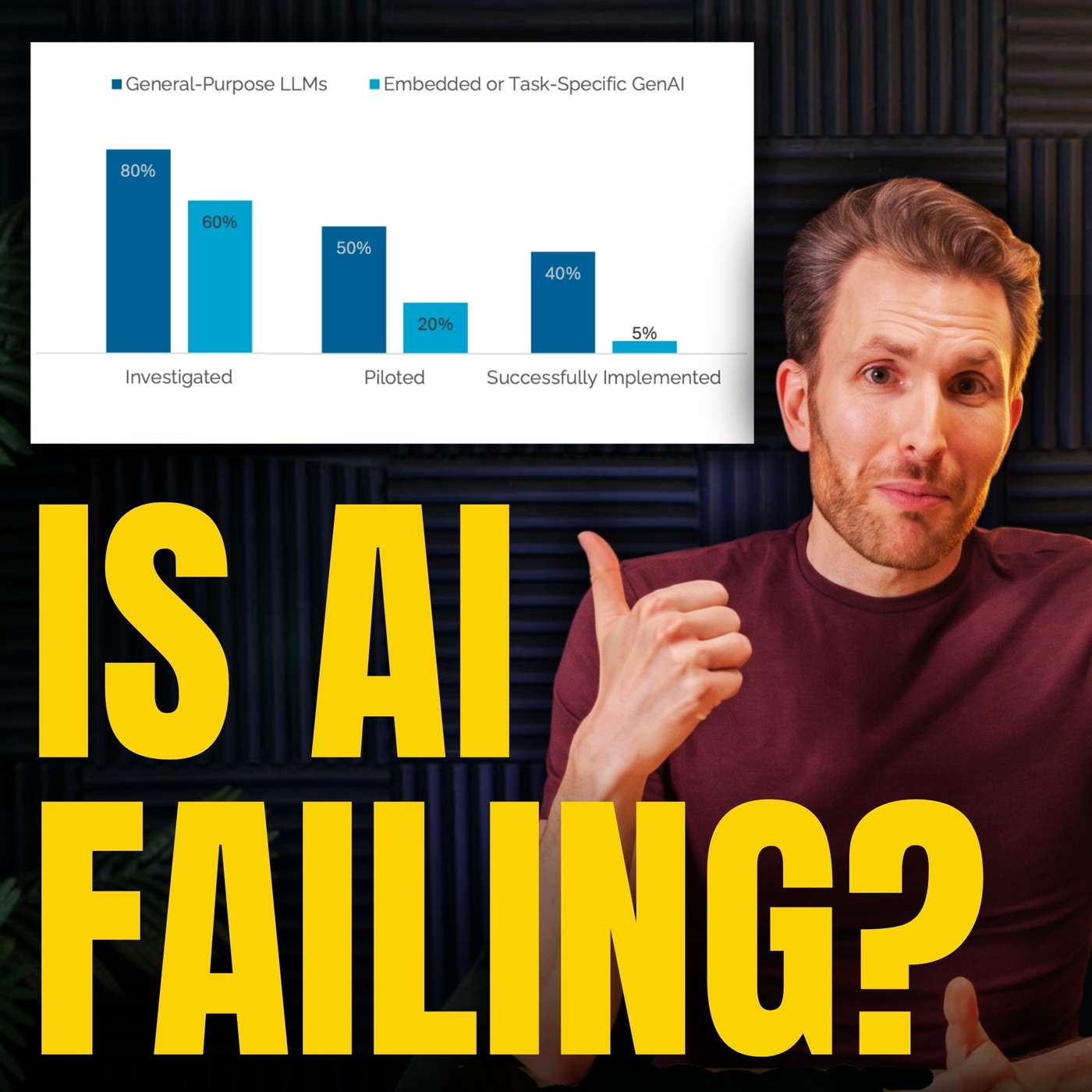

Creating a reliable AI agent for a well-known brand is paradoxically harder than for an unknown one. The LLM's vast pre-existing knowledge of the famous brand creates a 'temptation' to answer from memory instead of sticking to provided documentation, making factual grounding a significant challenge.

Unlike financial traders who can quickly reverse a bad position, institutions like government agencies and media outlets find retractions too costly to their status and careers. They often 'stand by' flawed work rather than admit error, creating a system that lacks the self-correcting mechanisms necessary for finding truth.

While commercial conflicts of interest are heavily scrutinized, the pressure on academics to produce positive results to secure their next large institutional grant is often overlooked. This intense pressure to publish favorably creates a significant, less-acknowledged form of research bias.

A flawed study went viral because it carried the "MIT" brand, prompting media to report on it without scrutiny. The actual report was gated behind a request form, preventing journalists from fact-checking its questionable claims. This combination allowed a misleading narrative to shape market sentiment and public opinion before it could be debunked.