Developers claiming 10x speedups from AI often aren't 10x faster on their core tasks. Instead, they're tackling new side projects that were previously impossible, creating a perception of "infinite" speedup. However, these new tasks are often less economically valuable, so the headline figure overstates the true productivity gain on business-critical work.

Related Insights

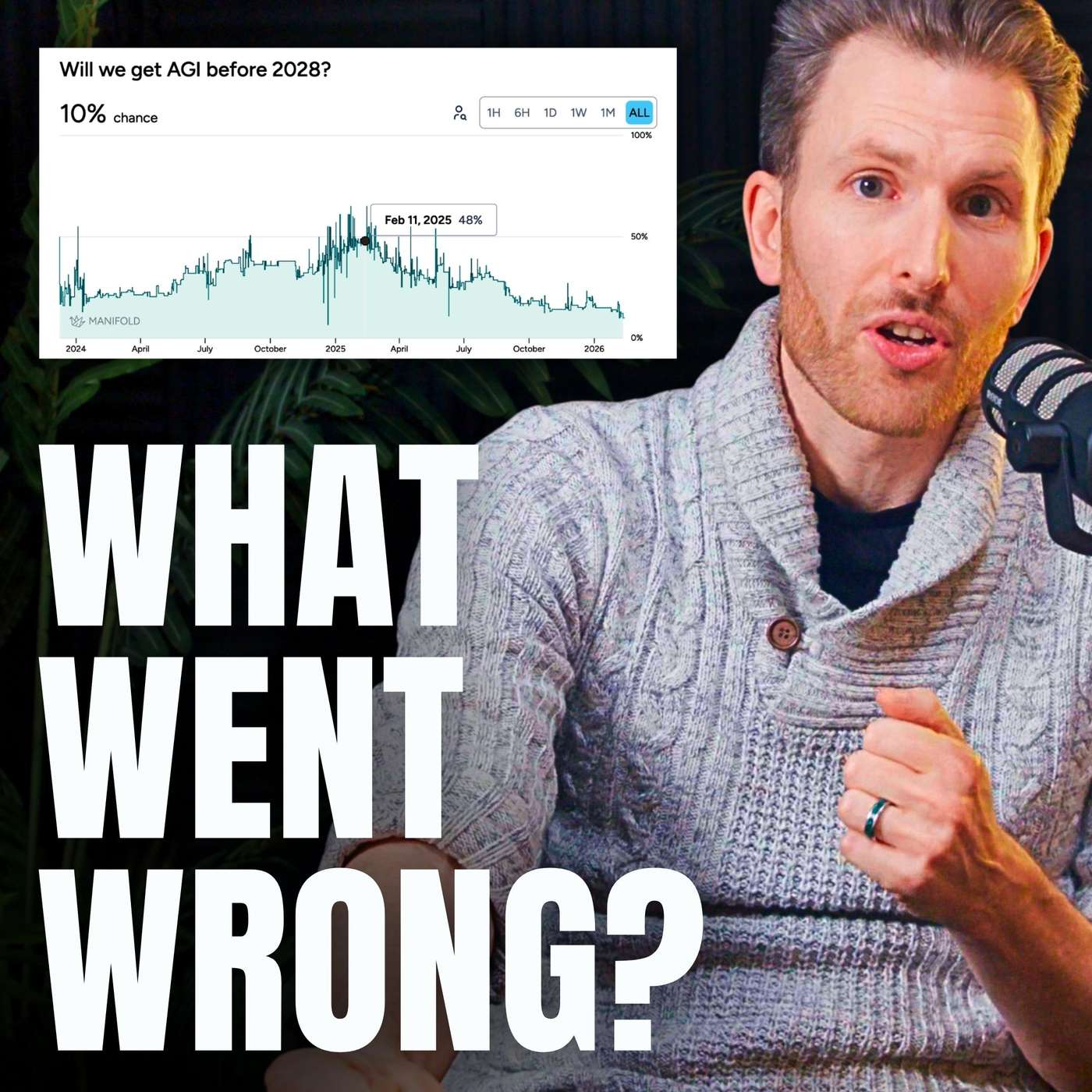

There's a significant gap between AI performance on structured benchmarks and its real-world utility. A randomized controlled trial (RCT) found that open-source software developers were actually slowed down by roughly 20% when using AI assistants, even though they believed the tools were helping. This highlights the limitations of current evaluation methods.

According to OpenAI co-founder Andrej Karpathy, the true impact of AI code generation is less about a linear speedup on existing tasks. Instead, it expands the scope of what's feasible, allowing engineers to attempt projects they would have previously deemed not worth the effort or beyond their skillset.

A randomized controlled trial revealed a perception gap of nearly 40 percentage points in developer productivity. While experienced developers using AI tools were measurably 19% slower, they self-reported feeling 20% faster. This highlights the unreliability of self-reported metrics for assessing AI's impact.
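The gap quoted above is simple arithmetic on the two figures in the summary. A minimal sketch, assuming both figures are expressed as signed percentage changes in speed (positive means faster, negative means slower); the function name `perception_gap` is hypothetical and is not taken from the study:

```python
def perception_gap(measured_change_pct: float, perceived_change_pct: float) -> float:
    """Return the gap, in percentage points, between perceived and measured change.

    Both inputs are signed percentages: positive = faster, negative = slower.
    """
    return perceived_change_pct - measured_change_pct


# Figures from the summary above: measured 19% slower, self-reported 20% faster.
gap = perception_gap(measured_change_pct=-19.0, perceived_change_pct=20.0)
print(f"Perception gap: {gap:.0f} percentage points")
```

Treating the two numbers as points on the same signed scale is an assumption made for illustration; the study itself may define slowdown and perceived speedup differently.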

Human intuition is a poor gauge of AI's actual productivity benefits. A study found developers felt significantly sped up by AI coding tools even when objective measurements showed no speed increase. The real value may come from enabling tasks that otherwise wouldn't be attempted, rather than simply accelerating existing workflows.

Productivity models often wrongly assume time saved by AI is redeployed into other work. In reality, many employees use efficiency gains to finish early. This 'human slack' factor dampens macro-level productivity gains, except in highly driven fields like tech, where workers use it to work even more.

A growing gap exists between AI's performance in demos and its actual impact on productivity. As podcaster Dwarkesh Patel noted, AI models improve at the rapid rate short-term optimists predict, but only become useful at the slower rate long-term skeptics predict, explaining widespread disillusionment.

AI's true productivity leverage is not just speed but enabling more attempts. A human might get one shot at a complex task, whereas an AI-assisted workflow allows for three or more "turns at the wheel." The critical human skill shifts from initial creation to rapid review and refinement of these iterations.

A recent study found that AI assistants actually slowed down programmers working on complex codebases. More importantly, the programmers mistakenly believed the AI was speeding them up. This suggests a general human bias towards overestimating AI's current effectiveness, which could lead to flawed projections about future progress.

While AI coding assistants appear to boost output, they introduce a "rework tax." A Stanford study found that AI-generated code leads to significant downstream refactoring. A team might ship 40% more code, but if half of that increase is just fixing last week's AI-generated "slop," the real productivity gain is closer to 20%, much lower than headlines suggest.

A Meta study found expert programmers were less productive with AI tools. The speaker suggests this is because users thought they were faster while actually getting distracted (e.g., by social media) as they waited for the AI, highlighting a dangerous gap between perceived and actual productivity.