The core legal question for social media and AI is shifting from content moderation (Section 230) to whether a platform's design is a "product" subject to liability (like tobacco) or protected "expression" (like speech). The answer will set a precedent for future AI cases.

Related Insights

Recent legal victories against tech giants like Meta and Google bypass Section 230 protections. Instead of focusing on harmful content, plaintiffs successfully argue that features like infinite scroll and personalized algorithms are deliberately designed to be addictive, presenting a product liability issue.

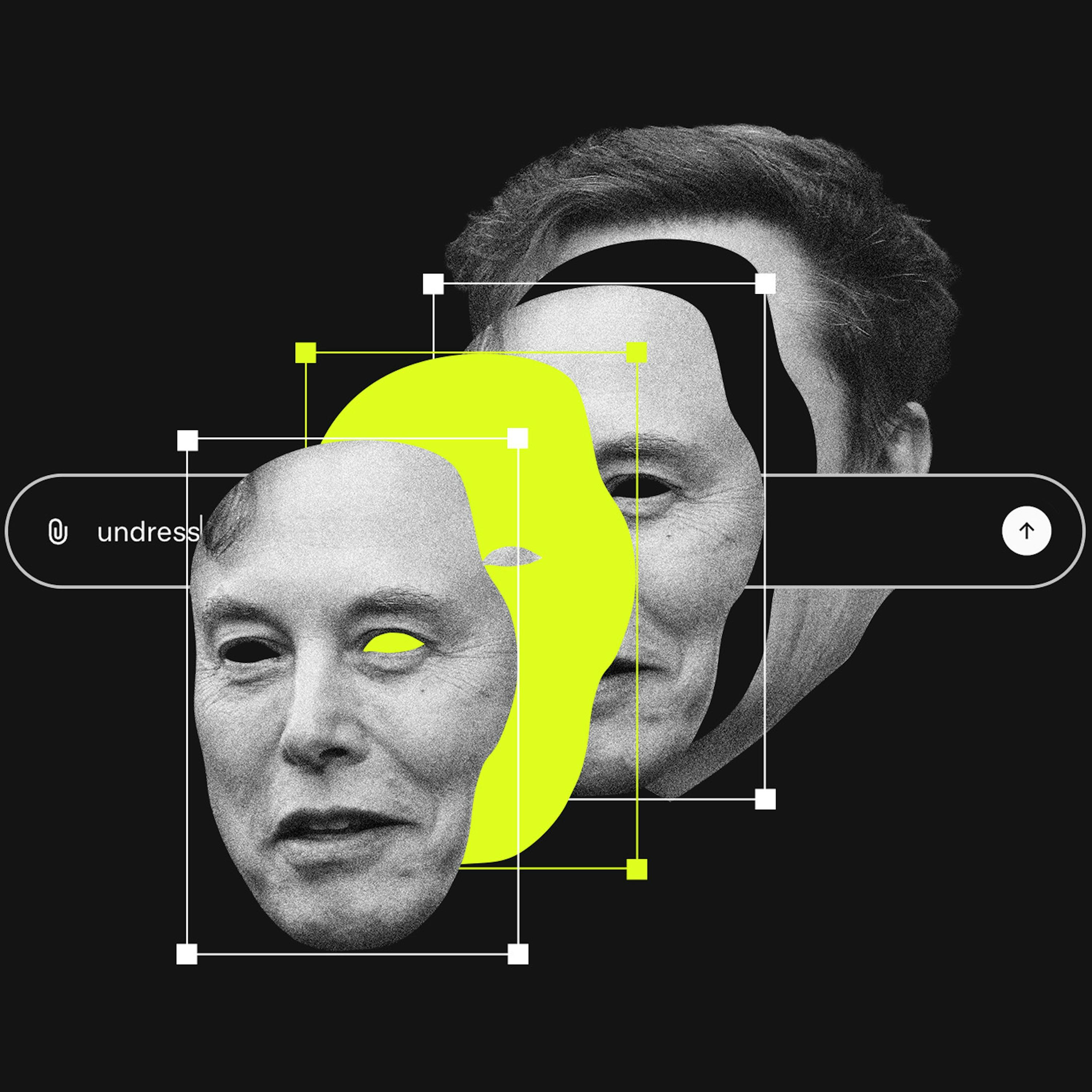

A lawsuit against xAI alleges Grok is "unreasonably dangerous as designed." This bypasses Section 230 by targeting the product's inherent flaws rather than user content. This approach is becoming a primary legal vector for holding platforms accountable for AI-driven harms.

The legal strategy against social media giants mirrors the 1990s tobacco lawsuits. The case isn't about excessive use, but about proving that features like infinite scroll were intentionally designed to addict users, creating a public health issue. This shifts responsibility from the user's behavior to the platform's design.

A landmark case against Meta and YouTube successfully argued that platform features like infinite scroll and recommendation algorithms are 'defective products' causing harm. This novel legal strategy bypasses Section 230, which only protects platforms from liability for user-generated content, opening a significant new litigation front.

Section 230 protects platforms from liability for third-party user content. Since generative AI tools create the content themselves, platforms like X could be held directly responsible. This is a critical, unsettled legal question that could dismantle a key legal shield for AI companies.

The next wave of social media regulation is moving beyond content moderation to target core platform design. Legal actions in the EU and US are scrutinizing features like infinite scroll and personalized algorithms as potentially "addictive." This focus on platform architecture could fundamentally alter the user experience for both teens and adults.

AI companies argue their models' outputs are original creations to defend against copyright claims. This stance becomes a liability when the AI generates harmful material, as it positions the platform as a co-creator, undermining the Section 230 "neutral platform" defense used by traditional social media.

A landmark verdict against Meta and YouTube reveals a new legal strategy to bypass Section 230 immunity. By suing over the intentional, addictive design of features like infinite scroll and autoplay, plaintiffs can frame the platform itself as a defective product, shifting the legal battle from content moderation to product liability.

A targeted approach to social media regulation is to remove Section 230 liability protection specifically for content that platforms' algorithms choose to amplify. If a company reverse-engineers a user's behavior to promote harmful content, it should be held liable, just as a bartender is for over-serving a customer.

A landmark lawsuit against Meta and YouTube found them liable for user harm by focusing on platform-built features like 'infinite scroll' and 'the like button,' not user content. This 'defective product' legal theory sidesteps Section 230 immunity and opens a new front for litigation against tech platforms.