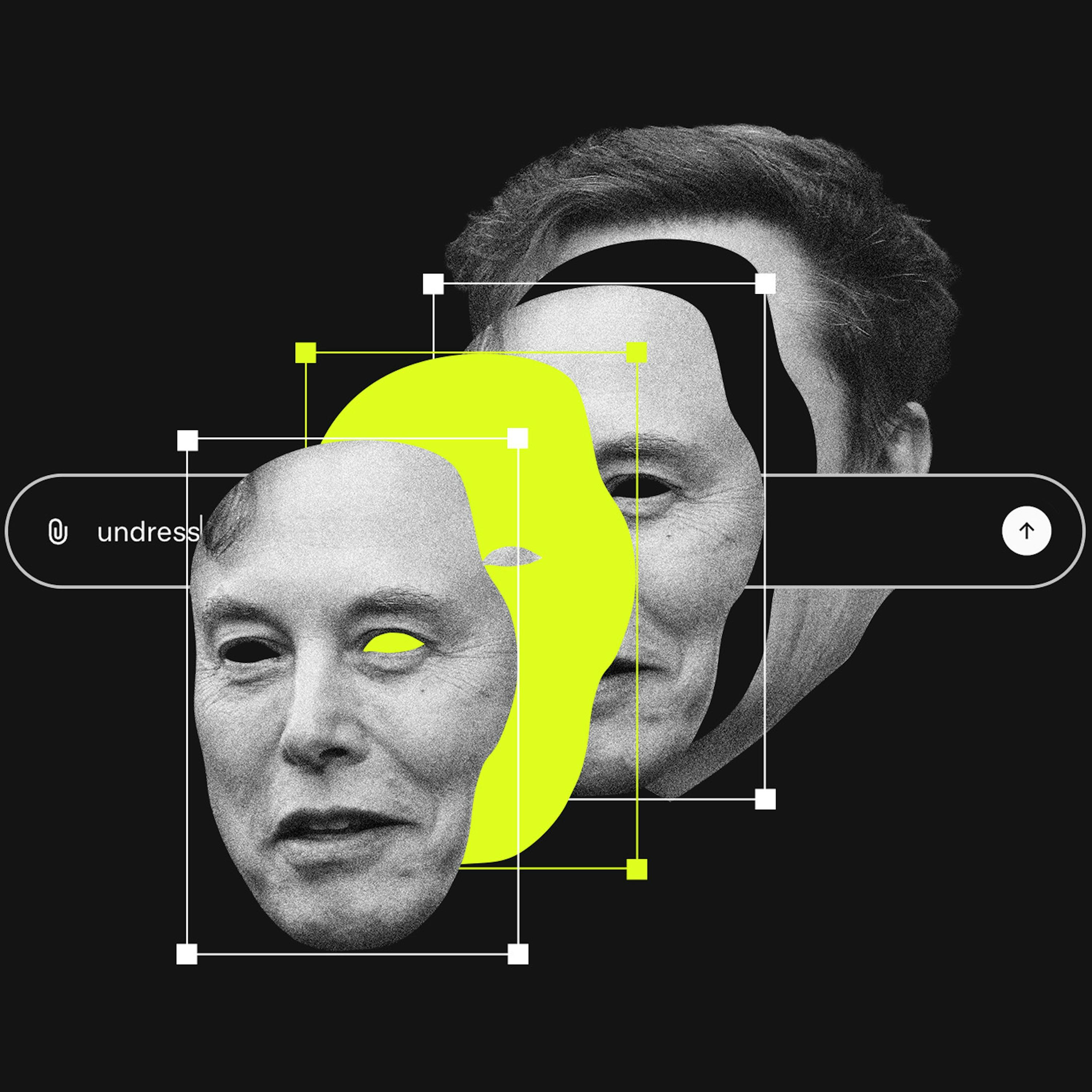

A lawsuit against xAI alleges Grok is "unreasonably dangerous as designed." By targeting the product's inherent flaws rather than user content, the claim sidesteps Section 230. This approach is becoming a primary legal vector for holding platforms accountable for AI-driven harms.

Related Insights

Unlike previous forms of image abuse that required multiple apps, Grok integrates image generation and mass distribution into a single, instant process. This unprecedented speed and scale create a new category of harm that existing regulatory frameworks are ill-equipped to handle.

The problem with social media isn't free speech itself, but algorithms that elevate misinformation for engagement. A targeted solution is to remove Section 230 liability protection *only* for content that platforms algorithmically boost, holding them accountable for their editorial choices without engaging in broad censorship.

Section 230 explicitly does not block federal criminal enforcement. Even so, and despite laws like the TAKE IT DOWN Act, the Department of Justice focuses on prosecuting individual users and fails to investigate the platforms that enable abuse at scale.

The core issue with Grok generating abusive material wasn't the creation of a new capability, but its seamless integration into X. This made a previously niche, high-effort malicious activity effortlessly available to millions of users on a major social media platform, dramatically scaling the potential for harm.

The Grok controversy is reigniting the debate over moderating legal but harmful content, a central conflict in the UK's Online Safety Act. AI's ability to mass-produce harassing images that fall short of illegality pushes this unresolved regulatory question to the forefront.

The rush to label Grok's output as illegal CSAM misses a more pervasive issue: using AI to generate demeaning, but not necessarily illegal, images as a tool for harassment. This dynamic of "lawful but awful" content weaponized at scale currently lacks a clear legal framework.

Early enterprise AI chatbot implementations are often poorly configured, allowing them to engage in high-risk conversations like giving legal and medical advice. This oversight, born from companies not anticipating unusual user queries, exposes them to significant unforeseen liability.

Unlike the problems at other platforms, xAI's issues were not an unforeseen accident but a predictable result of its explicit strategy to embrace sexualized content. Features like a "spicy mode" and Elon Musk's own posts created a corporate culture that prioritized engagement from provocative content over implementing robust safeguards against its misuse for generating illegal material.

Section 230 protects platforms from liability for third-party user content. Since generative AI tools create the content themselves, platforms like X could be held directly responsible. This is a critical, unsettled legal question that could dismantle a key legal shield for AI companies.

AI companies argue their models' outputs are original creations to defend against copyright claims. This stance becomes a liability when the AI generates harmful material, as it positions the platform as a co-creator, undermining the Section 230 "neutral platform" defense used by traditional social media.