The original vision for Section 230 was to foster a competitive marketplace of user-controlled moderation tools, a world that never materialized. Defending the 30-year-old law today means protecting an unrealized policy goal from a completely different technological era, raising questions about its continued relevance.

Related Insights

The problem with social media isn't free speech itself, but algorithms that elevate misinformation for engagement. A targeted solution is to remove Section 230 liability protection *only* for content that platforms algorithmically boost, holding them accountable for their editorial choices without engaging in broad censorship.

The current chaos of online misinformation isn't just a tech outcome; it was legally enabled. Section 230, enacted as part of the 1996 Telecommunications Act, shielded both users and platforms from liability for third-party content, effectively removing the libel exposure that governs traditional media and creating a legal free-for-all.

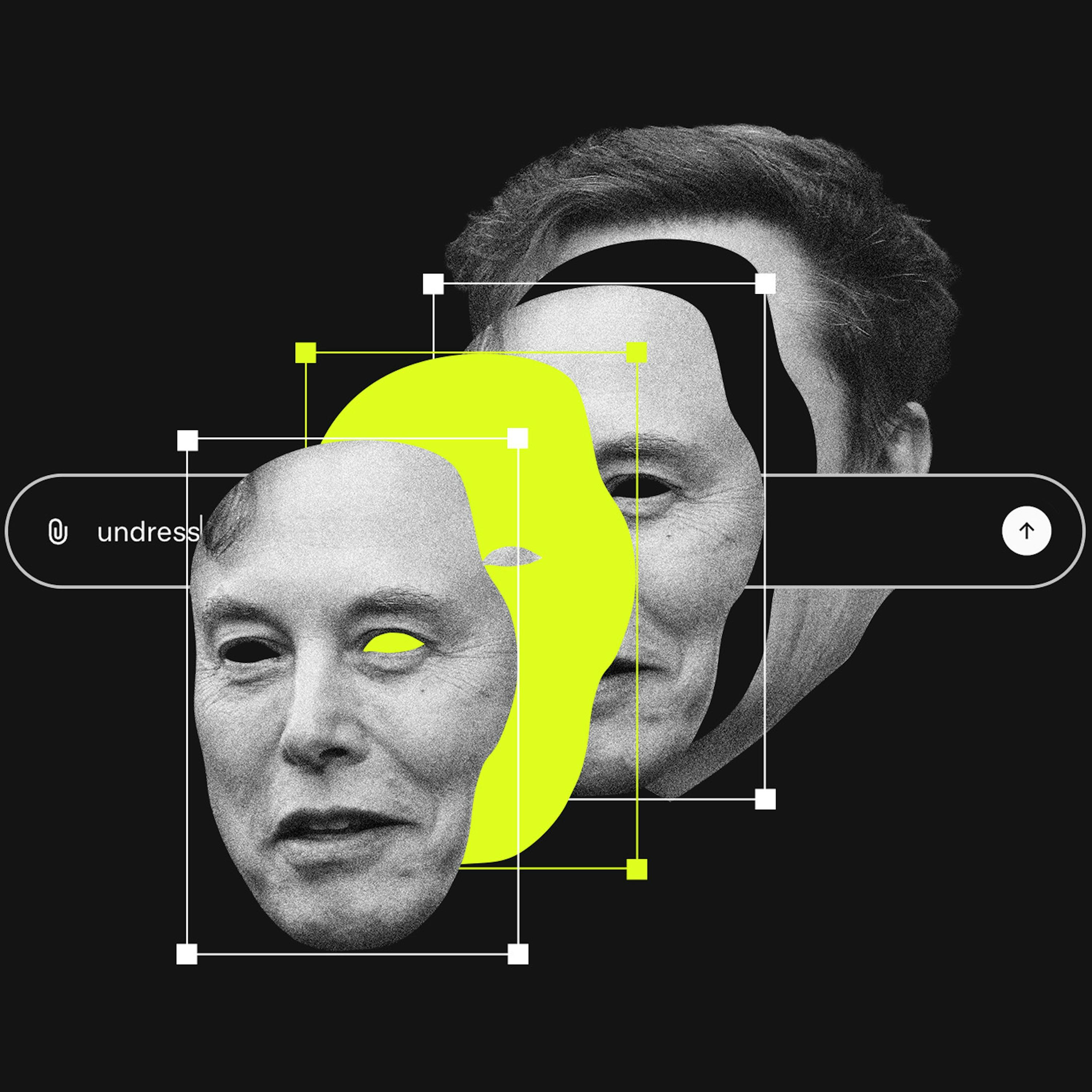

A lawsuit against xAI alleges Grok is "unreasonably dangerous as designed." This bypasses Section 230 by targeting the product's inherent flaws rather than user content. This approach is becoming a primary legal vector for holding platforms accountable for AI-driven harms.

A landmark case against Meta and YouTube successfully argued that platform features like infinite scroll and recommendation algorithms are "defective products" causing harm. This novel legal strategy bypasses Section 230, which only protects platforms from liability for user-generated content, opening a significant new litigation front.

Section 230 protects platforms from liability for third-party user content. Since generative AI tools create the content themselves, platforms like X could be held directly responsible. This is a critical, unsettled legal question that could dismantle a key legal shield for AI companies.

A landmark verdict against Meta and YouTube reveals a new legal strategy to bypass Section 230 immunity. By suing over the intentional, addictive design of features like infinite scroll and autoplay, plaintiffs can frame the platform itself as a defective product, shifting the legal battle from content moderation to product liability.

Plaintiffs won recent verdicts against Meta and Google by framing the problem as "defective product design" (like autoplay and infinite scroll) rather than harmful user content. This novel legal strategy circumvents the broad immunity that Section 230 of the Communications Decency Act typically provides to tech platforms.

A targeted approach to social media regulation is to remove Section 230 liability protection specifically for content that platforms' algorithms choose to amplify. If a company reverse-engineers a user's behavior to promote harmful content, it should be held liable, just as a bartender is for over-serving a customer.

A landmark case against Meta has validated a novel legal theory that sidesteps Section 230 protections. By suing over harmful and addictive product design rather than user-generated content, plaintiffs have created a new and potent legal threat to social media platforms, holding them liable for their core algorithms.

Politicians are using anti-tech verdicts to demand a repeal of Section 230, but the logic is flawed. Abolishing the law would force platforms to become hyper-aggressive in their content moderation to avoid liability, directly contradicting the "free speech" goals these same critics often claim to support.