AI's ability to code seems like advanced reasoning, but it's actually just navigating the most complete archive of human knowledge ever created. Programming's version control, documentation, and forums provide a perfectly mapped territory for AI to search, not a complex problem for it to solve through intelligence.

Related Insights

LLMs shine when acting as a 'knowledge extruder', shaping well-documented, 'in-distribution' concepts into specific code. They fail when the core task is novel problem-solving, where deep thinking, not code generation, is the bottleneck. In these cases, the code is the easy part.

Specialized coding models often fail because a developer's workflow isn't just writing code; it's a complex conversation involving brainstorming, compliance, and web research. The best coding assistants are the most generalist models because every complex task has AGI-like qualities.

The structured, hierarchical nature of code (functions, libraries) provides a powerful training signal for AI models. This helps them infer structural cues applicable to broader reasoning and planning tasks, far beyond just code generation.

LLMs excel at coding because internet data (e.g., GitHub) provides complete source code, dependencies, and reasoning. In contrast, mathematical texts online are often just condensed summaries or final proofs, lacking the step-by-step process. This makes it harder for models to learn mathematical reasoning from pre-training alone.

AI coding assistants rapidly conduct complex technical research that would take a human engineer hours. They can synthesize information from disparate sources like GitHub issues, two-year-old developer forum posts, and source code to find solutions to obscure problems in minutes.

Traditional programming demands extreme precision; modern AI agents, by contrast, operate from business-oriented prompts. Given a high-level goal and minimal context (like a single class name), an AI can infer intent and generate a complete, multi-file solution.

The ability to code is not just another domain for AI; it's a meta-skill. An AI that can program can build tools on demand to solve problems in nearly any digital domain, effectively simulating general competence. This makes mastery of code a form of instrumental, functional AGI for most economically valuable work.

The next major advance for AI in software development is not just completing tasks, but deeply understanding entire codebases. This capability aims to "mind meld" the human with the AI, enabling them to collaboratively tackle problems that neither could solve alone.

Contrary to human intuition, a massive, well-documented domain makes an AI's job easier, not harder. More documentation provides more 'maps' for the AI to navigate. In contrast, a simple human conflict is unsolvable for an AI because its context isn't formalized or archived, creating a void of information.

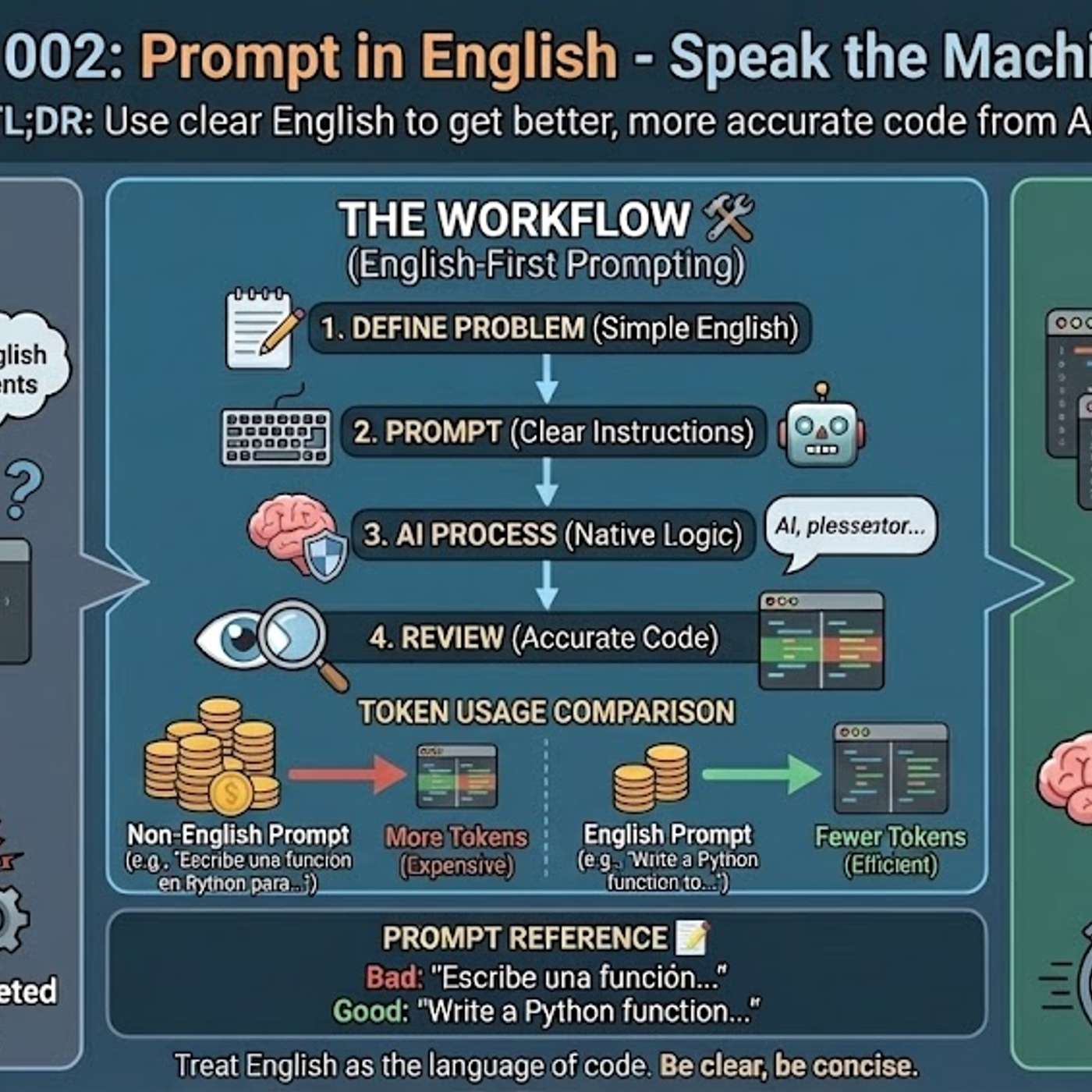

The primary reason AI models generate better code from English prompts is their training data composition. Over 90% of AI training sets, along with most technical libraries and documentation, are in English. This means the models' core reasoning pathways for code-related tasks are fundamentally optimized for English.

![[State of Code Evals] After SWE-bench, Code Clash & SOTA Coding Benchmarks recap — John Yang thumbnail](https://assets.flightcast.com/V2Uploads/nvaja2542wefzb8rjg5f519m/01K4D8FB4MNA071BM5ZDSMH34N/square.jpg)