The visceral rejection of AI-generated content as "slop" is not the root cause of anti-AI sentiment; it's a symptom. People already skeptical of AI for other reasons (job fears, ethics) are predisposed to view its output negatively. This dislike is a cultural manifestation of a pre-existing bias.

Related Insights

Users despise AI "slop" but admire the "farmer" who creates. This paradox highlights a tension: is an AI content creator still a noble artisan, or just a purveyor of low-quality feed for the masses? The value of "craft" is being re-evaluated.

The widespread belief that social media made the world worse, despite initial optimism, has eroded public trust in technology as inherent progress. This "hangover" from the last tech wave creates a default environment of skepticism for AI, making positive perception significantly more challenging.

Many people's negative opinions on AI-generated content stem from a deep-seated fear of their jobs becoming obsolete. This emotional reaction will fade as AI content becomes indistinguishable from human-created content, making the current debate a temporary, fear-based phenomenon.

Unlike the tech industry's forward-looking nostalgia, Hollywood's culture is rooted in preserving traditional filmmaking processes. This cultural attachment makes the creative community view AI not just as a job threat, but as an unwelcome disruption to the established craft and order, slowing its adoption as a creative tool.

The term "slop" is misattributed to AI. It actually describes any generic, undifferentiated output designed for mass appeal, a problem that existed in human-made media long before LLMs. AI is simply a new tool for scaling its creation.

As AI makes content creation easy, a cultural divide emerges. 'Lowbrow' culture imitates machines (e.g., using LLM-like speech). 'Highbrow' culture deliberately creates 'machine-resistant' art and communication to distinguish human effort and creativity from automated output.

The hypothesis suggests artists reject generative AI because text-prompt interfaces feel alien compared to traditional tools. If AI tools had interfaces resembling familiar software like Photoshop or NVIDIA Canvas, the critique would likely be framed as purism rather than a fundamental rejection of users as 'non-artists'.

The gap between AI believers and skeptics isn't about who "gets it." It's driven by a psychological need for AI to be a normal, non-threatening technology. People latch onto any argument that supports this view for the sake of their own peace of mind, career stability, or business model, which makes misinformation demand-driven.

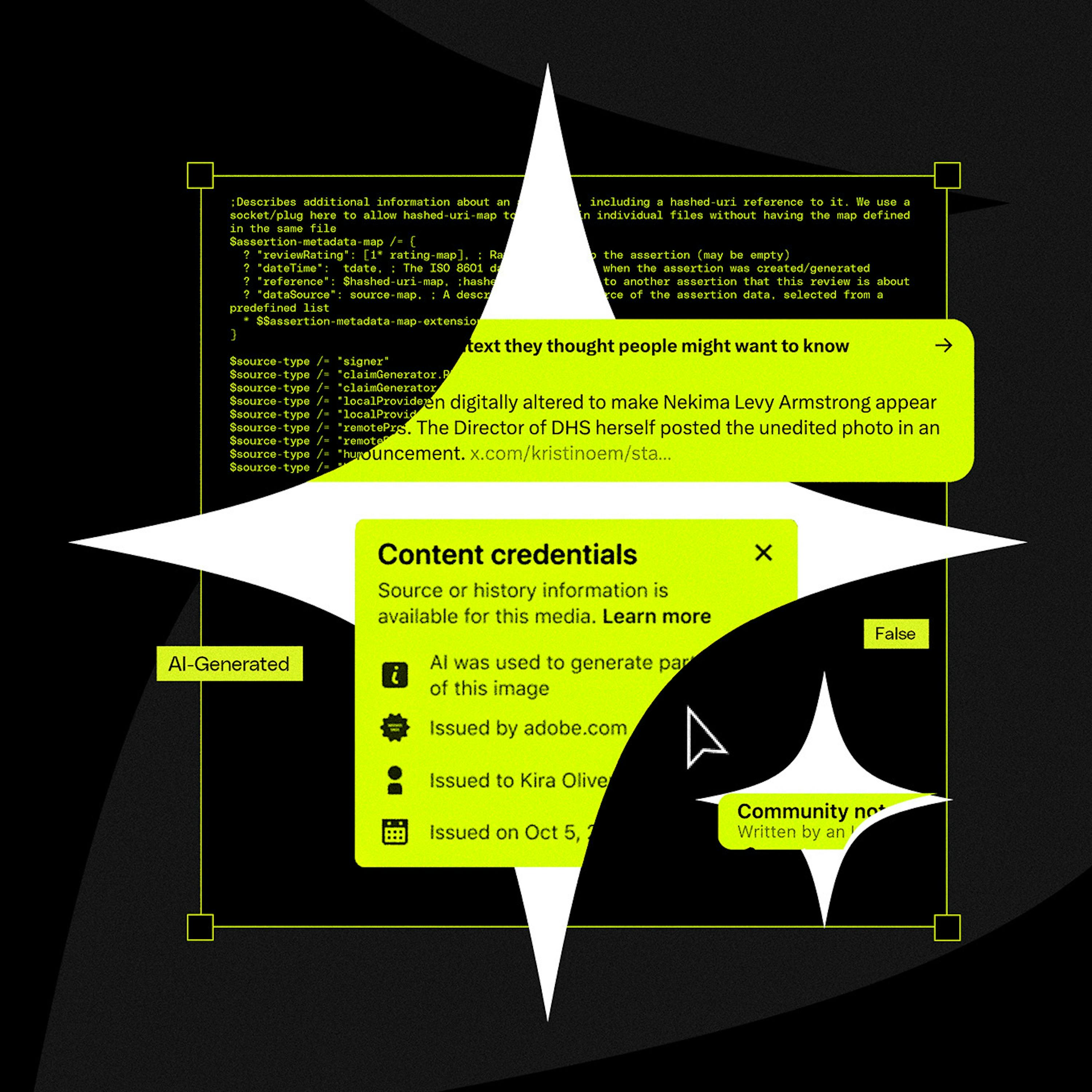

The "AI-generated" label carries a negative connotation of being cheap, efficient, and lacking human creativity. This perception devalues the final product in the eyes of consumers and creators, disincentivizing platforms from implementing labels that would anger their user base and advertisers.

While early media coverage focused on doomsday scenarios, the primary drivers of broad public skepticism are far more immediate. Concerns about white-collar job loss and the devaluation of human art are fueling the anti-AI movement much more effectively than abstract fears of superintelligence.