Get your free personalized podcast brief

We scan new podcasts and send you the top 5 insights daily.

The model's training used "response only masking," where it only learns from the response part of the training data. This method forces the model to first generate a structured "chain of thought" before producing a final answer, directly embedding a systematic problem-solving process into its behavior.

Related Insights

Reinforcement learning incentivizes AIs to find the right answer, not just mimic human text. This leads to them developing their own internal "dialect" for reasoning—a chain of thought that is effective but increasingly incomprehensible and alien to human observers.

The Qwen 3.6 model was fine-tuned using "chain of thought distillation" data from the more powerful Claude Opus. This technique allows smaller models to learn and replicate the structured problem-solving capabilities of larger systems, making advanced AI reasoning more accessible.

The structured, hierarchical nature of code (functions, libraries) provides a powerful training signal for AI models. This helps them infer structural cues applicable to broader reasoning and planning tasks, far beyond just code generation.

Anthropic suggests that LLMs, trained on text about AI, respond to field-specific terms. Using phrases like 'Think step by step' or 'Critique your own response' acts as a cheat code, activating more sophisticated, accurate, and self-correcting operational modes in the model.

Instead of immediately asking an AI to perform a complex task, first prompt it to create a functional spec or a sequential plan. Go back and forth to align on this plan before instructing it to execute, which significantly improves the final output's quality and relevance.

Many AI tools expose the model's reasoning before generating an answer. Reading this internal monologue is a powerful debugging technique. It reveals how the AI is interpreting your instructions, allowing you to quickly identify misunderstandings and improve the clarity of your prompts for better results.

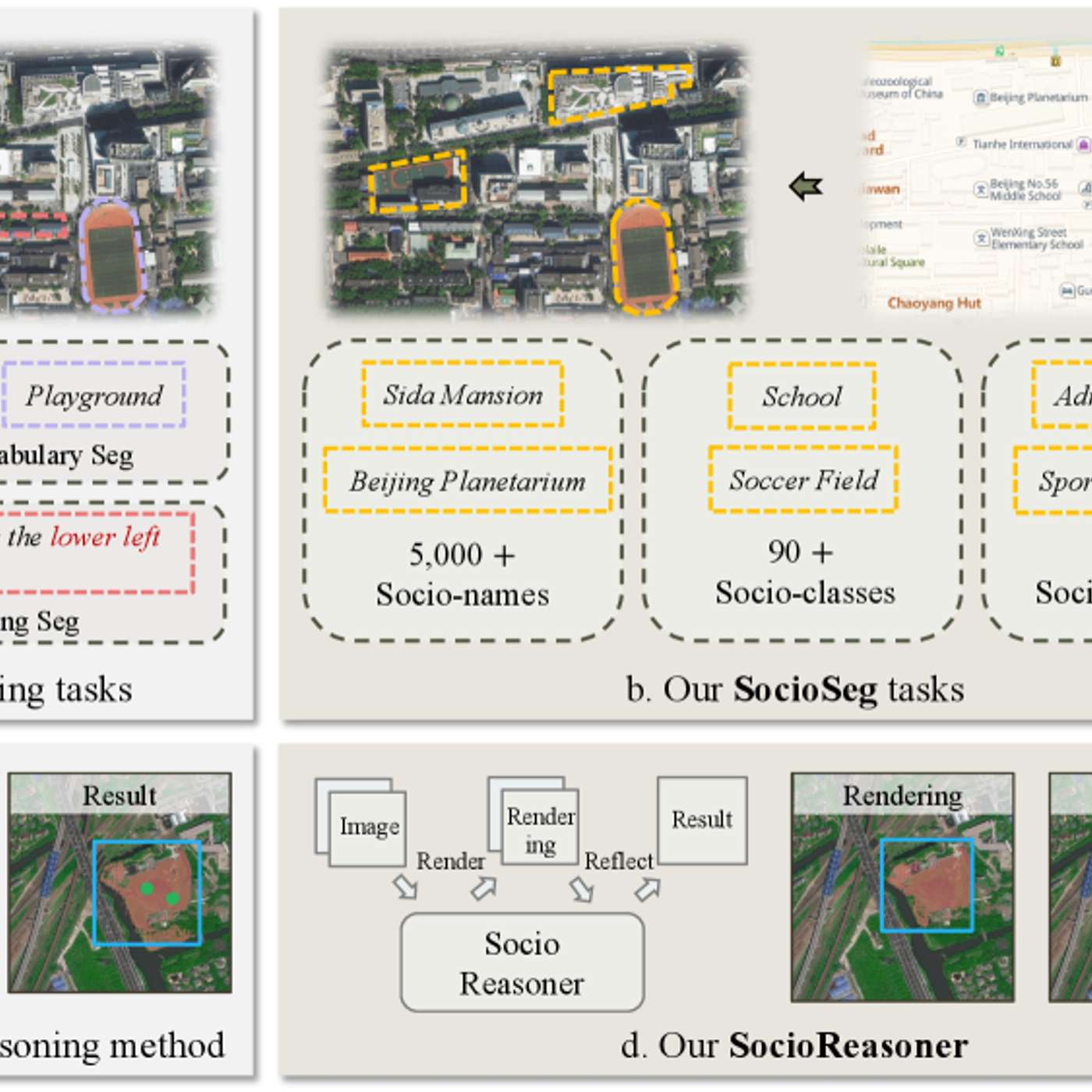

The featured AI model succeeds by reframing urban analysis as a reasoning problem. It uses a two-stage process—generating broad hypotheses then refining with detailed evidence—which mimics human cognition and outperforms traditional single-pass pattern recognition systems.

When fine-tuning a model for question-answering, tokenize questions and answers separately. Then, use a masking technique to force the training process to ignore the question tokens when calculating loss. This concentrates the model's learning on generating correct answers, improving training efficiency and focus.

Advanced reasoning models excel with ambiguous inputs because they first deduce the user's underlying needs before executing a task. This ability to intelligently fill in the blanks from a poor prompt creates a "wow effect" by producing a high-quality, praised result.

To improve LLM reasoning, researchers feed them data that inherently contains structured logic. Training on computer code was an early breakthrough, as it teaches patterns of reasoning far beyond coding itself. Textbooks are another key source for building smaller, effective models.