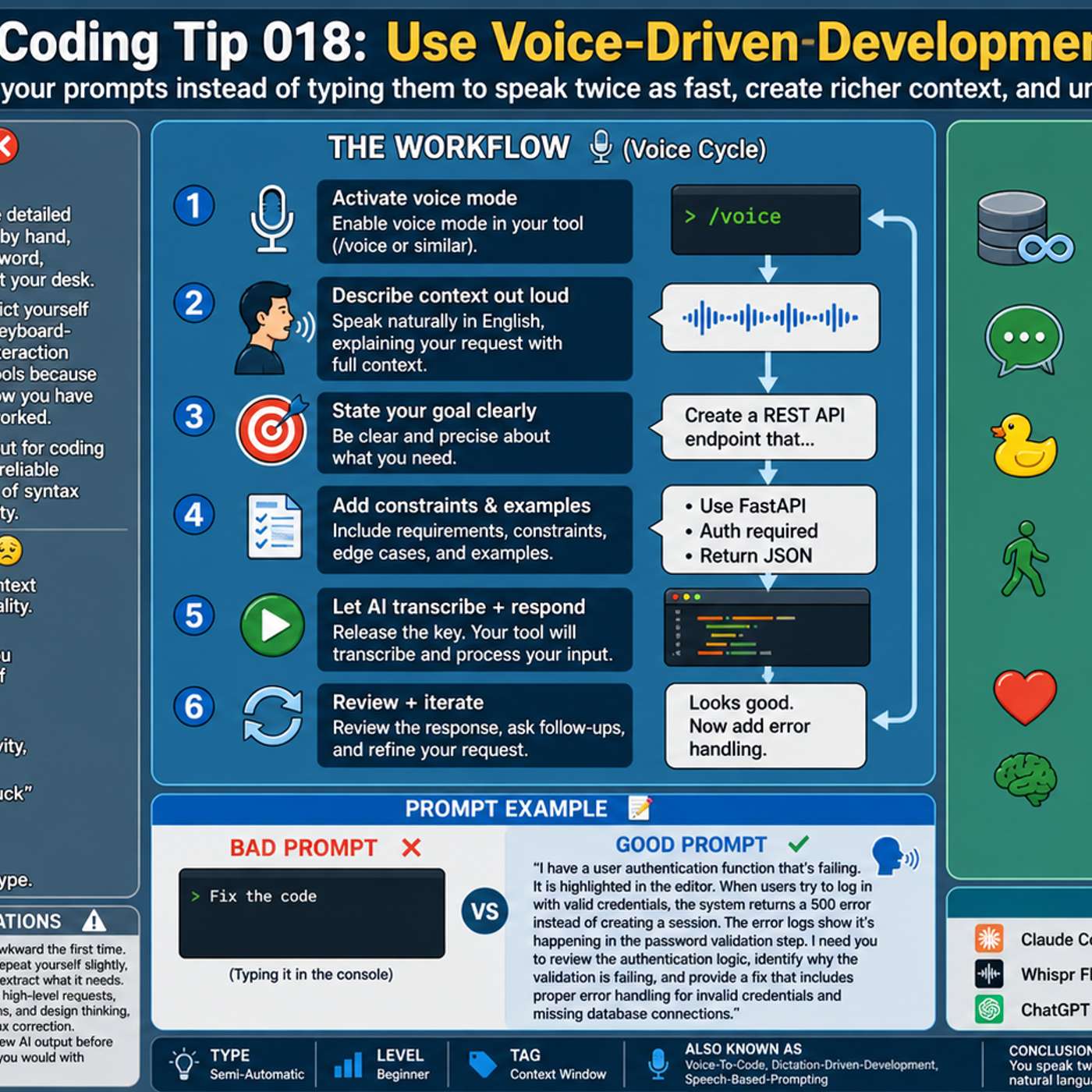

The process of verbally explaining a complex problem to an AI assistant helps developers uncover solutions on their own. This mirrors the traditional "rubber ducking" debugging technique, where vocalizing a problem clarifies one's thinking and reveals the solution.

Related Insights

For stubborn bugs, use an advanced prompting technique: instruct the AI to 'spin up specialized sub-agents,' such as a QA tester and a senior engineer. This forces the model to analyze the problem from multiple perspectives, leading to a more comprehensive diagnosis and solution.
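One way to make this prompt repeatable is to build it from a list of roles. The sketch below is only an illustration: the function name `build_subagent_prompt`, the wording of the instructions, and the sample bug report are all assumptions, not something prescribed by the source. It produces a plain string that you would paste or send to whichever AI tool you use.

```python
def build_subagent_prompt(bug_report: str, roles: list[str]) -> str:
    """Compose one prompt asking the model to analyze a bug from several roles.

    Hypothetical helper: the instruction wording is an assumption, adjust to taste.
    """
    role_lines = "\n".join(f"- {role}" for role in roles)
    return (
        "Spin up specialized sub-agents, one per role below. "
        "Have each diagnose the bug independently, then reconcile their "
        "findings into one recommended fix.\n\n"
        f"Roles:\n{role_lines}\n\n"
        f"Bug report:\n{bug_report}"
    )


# Example input (made up for illustration).
prompt = build_subagent_prompt(
    "Checkout total is wrong when a coupon is applied twice.",
    ["QA tester", "Senior engineer"],
)
print(prompt)
```

Keeping the roles in a list makes it easy to add another perspective, such as a security reviewer, without rewriting the prompt.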

The most effective way to learn and integrate AI is through verbal communication, not just typing. Having spoken conversations with LLMs on various topics builds a natural relationship and intuition, much like practicing a physical skill. This interactive dialogue is key to breaking down initial learning barriers.

Dictating lets developers stay in a "thinking mode" rather than context-switching to the mechanical task of typing. That uninterrupted focus on the problem improves ideation and problem-solving.

Dictating prompts for AI coding tools, instead of typing them, allows for faster and more detailed instructions. Speaking your thought process naturally includes more context and nuance, which leads to better results from the AI. Tools like Whisperflow are optimized with developer terminology for higher accuracy.

Many AI tools expose the model's reasoning before generating an answer. Reading this internal monologue is a powerful debugging technique. It reveals how the AI is interpreting your instructions, allowing you to quickly identify misunderstandings and improve the clarity of your prompts for better results.

Gabor dictates long, detailed prompts to his AI agents. This allows him to provide significantly more context, nuance, and specific constraints than would be practical to type. The AI can parse the verbose input, leading to a much better-specified final product.

Run two different AI coding agents (like Claude Code and OpenAI's Codex) simultaneously. When one agent gets stuck or generates a bug, paste the problem into the other. This "AI Ping Pong" leverages the different models' strengths and provides a "fresh perspective" for faster, more effective debugging.
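The hand-off between agents can be pictured as a simple alternating loop. The sketch below is a hedged illustration, not how any particular tool works: `ping_pong`, `is_solved`, and the two stub agents are hypothetical names, and the stubs stand in for real agents (in practice you would be pasting between two tools by hand or wiring in your own client functions).

```python
from typing import Callable, Optional, Sequence

# An "agent" here is any function that takes a message and returns a reply.
Agent = Callable[[str], str]


def ping_pong(
    problem: str,
    agents: Sequence[Agent],
    is_solved: Callable[[str], bool],
    max_rounds: int = 4,
) -> Optional[str]:
    """Alternate between agents, passing each failed attempt to the next one."""
    message = problem
    for round_index in range(max_rounds):
        agent = agents[round_index % len(agents)]
        answer = agent(message)
        if is_solved(answer):
            return answer
        # Hand the failed attempt to the other agent for a fresh perspective.
        message = f"{problem}\n\nA previous attempt did not work:\n{answer}"
    return None


# Stub agents for illustration only; real agents would call an AI tool.
def stub_agent_a(message: str) -> str:
    return "stuck: cannot reproduce"


def stub_agent_b(message: str) -> str:
    if "previous attempt" in message:
        return "fixed: guard against empty list"
    return "stuck"


result = ping_pong(
    "Crash on empty input",
    [stub_agent_a, stub_agent_b],
    is_solved=lambda answer: answer.startswith("fixed"),
)
print(result)
```

The `max_rounds` cap is a design choice to keep two agents from bouncing the same failure back and forth indefinitely.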

To ensure comprehension of AI-generated code, developer Terry Lynn created a "rubber duck" rule in his AI tool. This prompts the AI to explain code sections and even create pop quizzes about specific functions. This turns the development process into an active learning tool, ensuring he deeply understands the code he's shipping.

Effective AI prompting involves providing a detailed narrative of the situation, user, and goals. This prompts the AI to ask clarifying questions, a sign of deeper understanding, and leads to more relevant answers than a simple, direct command.

After solving a problem with an AI tool, don't just move on. Ask the AI agent how you could have phrased your prompt differently to avoid the issue or solve it faster. This creates a powerful feedback loop that continuously improves your ability to communicate effectively with the AI.