The new generation of image models, like OpenAI's, is moving beyond simple generation. They now employ a "thinking" process that allows for complex tasks like performing web searches for context, synthesizing the results, and embedding functional QR codes directly into the final image.

Related Insights

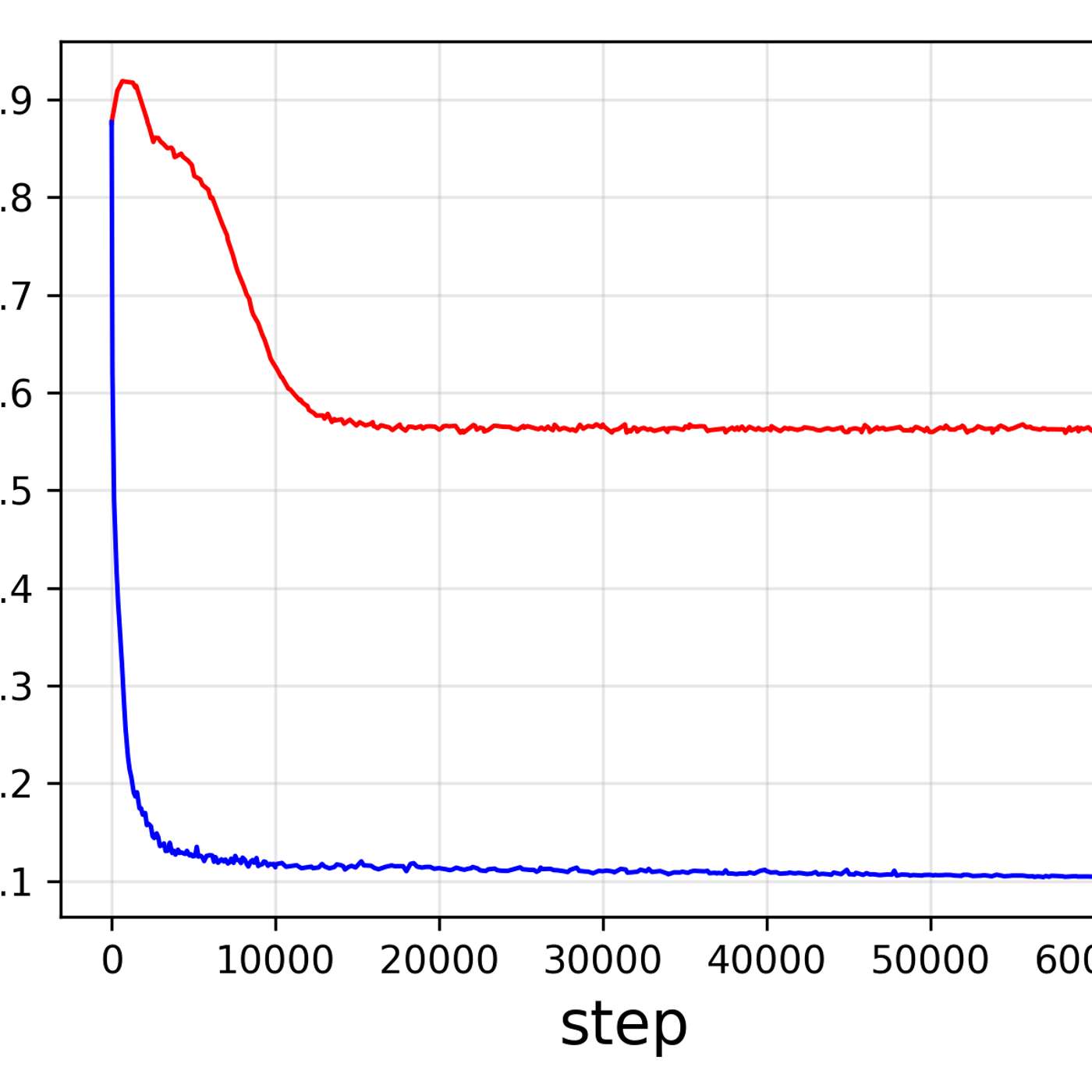

To move beyond keyword search in their media archive, Tim McLear's system generates two vector embeddings for each asset: one from the image thumbnail and another from its AI-generated text description. Fusing these enables a powerful semantic search that understands visual similarity and conceptual relationships, not just exact text matches.
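The two-embedding fusion described above can be sketched roughly as follows. This is a minimal illustration, not McLear's actual system: the toy three-dimensional vectors, the `fuse` and `search` helpers, and the `image_weight` parameter are all assumptions made for the example. In practice the vectors would come from real image and text embedding models.

```python
import math

def normalize(vec):
    """Scale a vector to unit length (zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0:
        return list(vec)
    return [x / norm for x in vec]

def fuse(image_vec, text_vec, image_weight=0.5):
    """Concatenate the normalized image and text embeddings into one vector.

    Using square-root weights keeps the fused vector at unit length, so the
    dot product of two fused vectors equals
    image_weight * cos(image) + (1 - image_weight) * cos(text).
    """
    w_img = math.sqrt(image_weight)
    w_txt = math.sqrt(1.0 - image_weight)
    fused = [w_img * x for x in normalize(image_vec)]
    fused += [w_txt * x for x in normalize(text_vec)]
    return fused

def search(query_image_vec, query_text_vec, archive, top_k=3):
    """Rank archive assets by similarity of their fused vectors to the query."""
    query = fuse(query_image_vec, query_text_vec)
    scored = []
    for asset_id, item in archive.items():
        candidate = fuse(item["image"], item["text"])
        score = sum(q * c for q, c in zip(query, candidate))
        scored.append((score, asset_id))
    scored.sort(reverse=True)
    return [asset_id for _, asset_id in scored[:top_k]]

# Hypothetical archive: tiny hand-made vectors standing in for real embeddings.
ARCHIVE = {
    "sunset_beach": {"image": [0.9, 0.1, 0.0], "text": [0.8, 0.2, 0.0]},
    "city_night": {"image": [0.1, 0.9, 0.1], "text": [0.0, 0.9, 0.2]},
    "forest_trail": {"image": [0.0, 0.2, 0.9], "text": [0.1, 0.1, 0.9]},
}

results = search([1.0, 0.0, 0.0], [0.9, 0.1, 0.0], ARCHIVE)
print(results)
```

Weighted concatenation is only one way to combine the two signals. Averaging the two similarity scores separately, or merging two ranked lists, would be equally reasonable choices under the same description.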

Unlike the now-shelved Sora video generator, which used a different "world model" architecture, OpenAI's image generation tools are built on the same core GPT-style technology as its text models. This lets OpenAI retain a popular feature without diverting resources from its primary research path.

The future of creative AI is moving beyond simple text-to-X prompts. Labs are working to merge text, image, and video models into a single "mega-model" that can accept any combination of inputs (e.g., a video plus text) to generate a complex, edited output, unlocking new paradigms for design.

While language models are becoming incrementally better at conversation, the next significant leap in AI is defined by multimodal understanding and the ability to perform tasks, such as navigating websites. This shift from conversational prowess to agentic action marks the new frontier for a true "step change" in AI capabilities.

Image models like Google's Nano Banana Pro can now connect to live search to ground their output in real-world facts. This lets them generate dense, text-heavy infographics with coherent, accurate information, a task previously out of reach for image models, which notoriously struggled to render readable text.

The key difference between modern AI and older tech like Google Search is modern AI's ability to reason about hypotheticals. It doesn't just retrieve existing information; it synthesizes knowledge to "think for itself" and generate entirely new content.

Unlike chatbots that rely solely on their training data, Google's AI acts as a live researcher. For a single user query, the model executes a "query fanout": it runs multiple targeted background searches to gather, synthesize, and cite fresh information from across the web in real time.
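The fanout pattern can be illustrated with a small sketch. Nothing here reflects Google's actual implementation: `expand_query`, `run_search`, the fixed list of facets, and the in-memory `FAKE_INDEX` with its example.com URLs are all hypothetical stand-ins for a real query planner and search backend.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical in-memory "web": maps a sub-query to (url, snippet) results.
FAKE_INDEX = {
    "best e-bike range": [
        ("https://example.com/a", "Range depends on battery capacity."),
    ],
    "best e-bike price": [
        ("https://example.com/b", "Prices vary widely by motor type."),
        ("https://example.com/a", "Range depends on battery capacity."),
    ],
    "best e-bike reviews": [
        ("https://example.com/c", "Reviewers favor mid-drive motors."),
    ],
}

def expand_query(query):
    """Turn one user query into several targeted sub-queries (assumed facets)."""
    facets = ["range", "price", "reviews"]
    return [f"{query} {facet}" for facet in facets]

def run_search(sub_query):
    """Stub search backend: look the sub-query up in the fake index."""
    return FAKE_INDEX.get(sub_query, [])

def fan_out(query):
    """Run all sub-queries concurrently, then merge and de-duplicate by URL."""
    sub_queries = expand_query(query)
    with ThreadPoolExecutor(max_workers=len(sub_queries)) as pool:
        # pool.map returns results in the same order as sub_queries.
        result_lists = list(pool.map(run_search, sub_queries))
    seen = set()
    sources = []
    for results in result_lists:
        for url, snippet in results:
            if url not in seen:
                seen.add(url)
                sources.append((url, snippet))
    return sources

def answer_with_citations(query):
    """Build a toy answer: one cited line per source, followed by references."""
    sources = fan_out(query)
    lines = [f"{snippet} [{i}]" for i, (_, snippet) in enumerate(sources, start=1)]
    refs = [f"[{i}] {url}" for i, (url, _) in enumerate(sources, start=1)]
    return "\n".join(lines + refs)

print(answer_with_citations("best e-bike"))
```

A real system would replace the string concatenation in `answer_with_citations` with a model that synthesizes the gathered snippets. The sketch only shows the fan out, merge, and cite steps.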

New image models like Google's Nano Banana Pro can transform lengthy articles and research papers into detailed whiteboard diagrams. This represents a powerful new form of information compression, moving beyond simple text summarization to a complete modality shift for easier comprehension and knowledge transfer.

The most significant recent AI advance is models' ability to use chain-of-thought reasoning, not just retrieve data. However, most business users are unaware of this 'deep research' capability and continue using AI as a simple search tool, missing its transformative potential for complex problem-solving.

The ability of a single encoder to excel at both understanding and generating images indicates these two tasks are not as distinct as they seem. It suggests they rely on a shared, fundamental structure of visual information that can be captured in one unified representation.