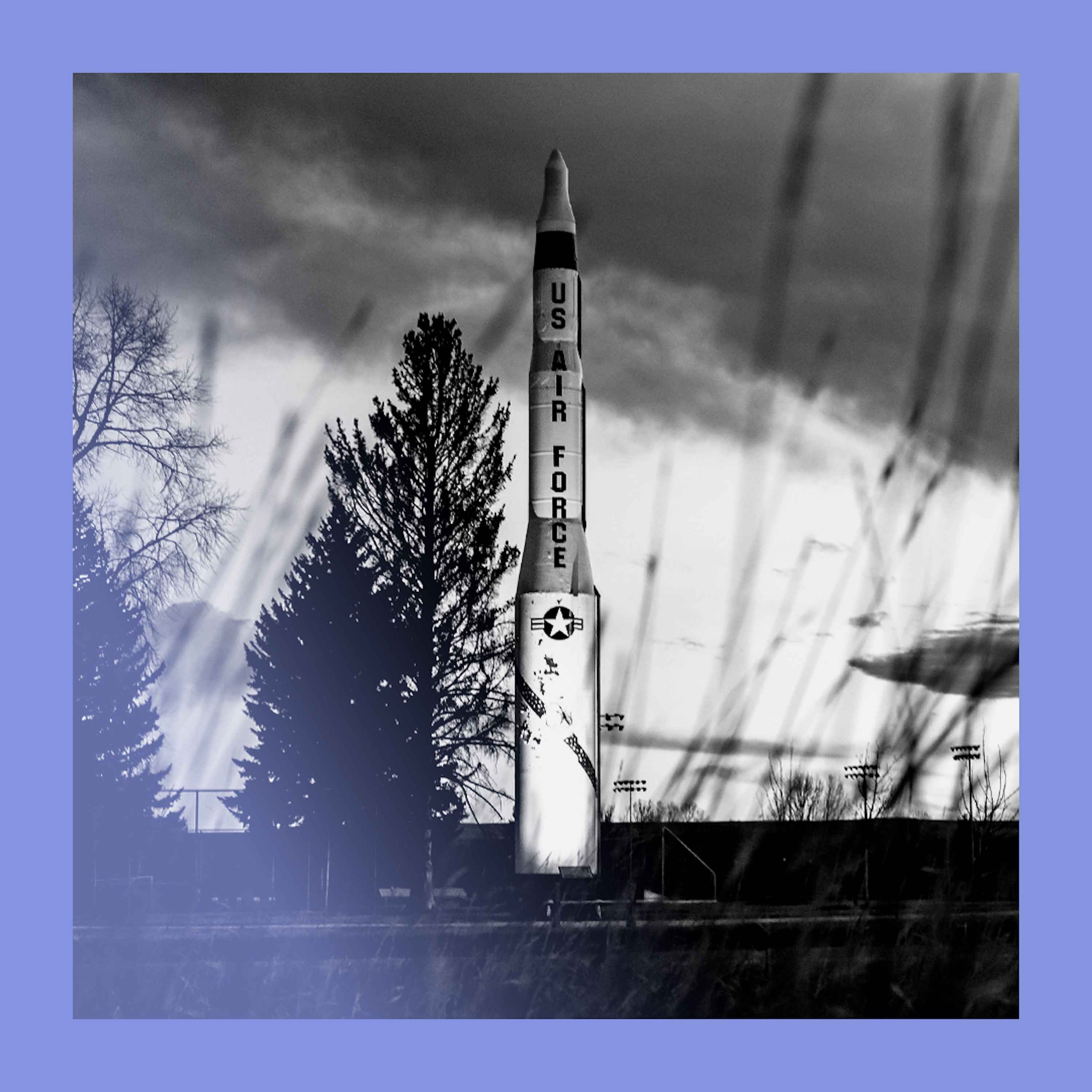

AI can optimize nuclear targeting by more efficiently identifying mobile targets and assessing battle damage. This increased efficiency could reduce the number of weapons needed for a specific objective, potentially alleviating pressure to massively expand the US arsenal and creating future arms control opportunities.

Related Insights

The survivability of nuclear-armed submarines, the cornerstone of second-strike capability, relies on their ability to hide. AI's capacity to parse vast sensor data to find faint signals could 'turn the oceans transparent,' making these massive vessels detectable and upending decades of nuclear deterrence strategy.

A global AI safety regime should learn from nuclear arms control by focusing on the physical infrastructure that enables strategic capabilities. Instead of just seeking promises, it should aim to control access to chokepoints like advanced chip manufacturing and the massive data centers required for frontier models.

The joint statement on keeping humans in control of nuclear weapons is a significant diplomatic achievement demonstrating shared intent. However, it's not a binding agreement, and the real challenge is verifying this commitment, which is difficult given the secrecy surrounding military AI integration.

While the US military opposes bans on autonomous 'killer robots' for conventional warfare, it maintains a firm 'human-in-the-loop' policy for nuclear launch decisions. This reveals a strategic calculation: the normative value of preventing autonomous nuclear use outweighs any marginal benefit of automating those decisions, a line the military has not drawn for conventional systems.

Public fear focuses on AI hypothetically creating new nuclear weapons. The more immediate danger is militaries trusting highly inaccurate AI systems for critical command and control decisions over existing nuclear arsenals, where even a small error rate could be catastrophic.

International AI treaties, particularly with nations like China, are unlikely to hold based on trust alone. A stable agreement requires a mutually-assured-destruction-style dynamic, meaning the U.S. must develop and signal credible offensive capabilities to deter cheating.

Countering the common narrative, Anduril views AI in defense as the next step in Just War Theory. The goal is to enhance accuracy, reduce collateral damage, and take soldiers out of harm's way. This continues a historical military trend away from indiscriminate lethality towards surgical precision.

Unlike China's historical "minimal deterrence" (surviving a first strike to retaliate), the US and Russia operate on "damage limitation"—using nukes to destroy the enemy's arsenal. This logic inherently drives a numbers game, fueling an arms race as each side seeks to counter the other's growing stockpile.

International AI treaties are feasible. Just as nuclear arms control monitors uranium and plutonium, AI governance can monitor the choke point for advanced AI: high-end compute chips from companies like NVIDIA. Tracking the global distribution of these chips could verify compliance with development limits.

AI targeting systems excel at generating vast target lists for rapid, shock-and-awe campaigns. However, they are currently being applied to a slower, attritional conflict. This misapplication turns operational excellence into a strategic dead end, where the machine simply produces more targets without a causal link to defeating the enemy.