Get your free personalized podcast brief

We scan new podcasts and send you the top 5 insights daily.

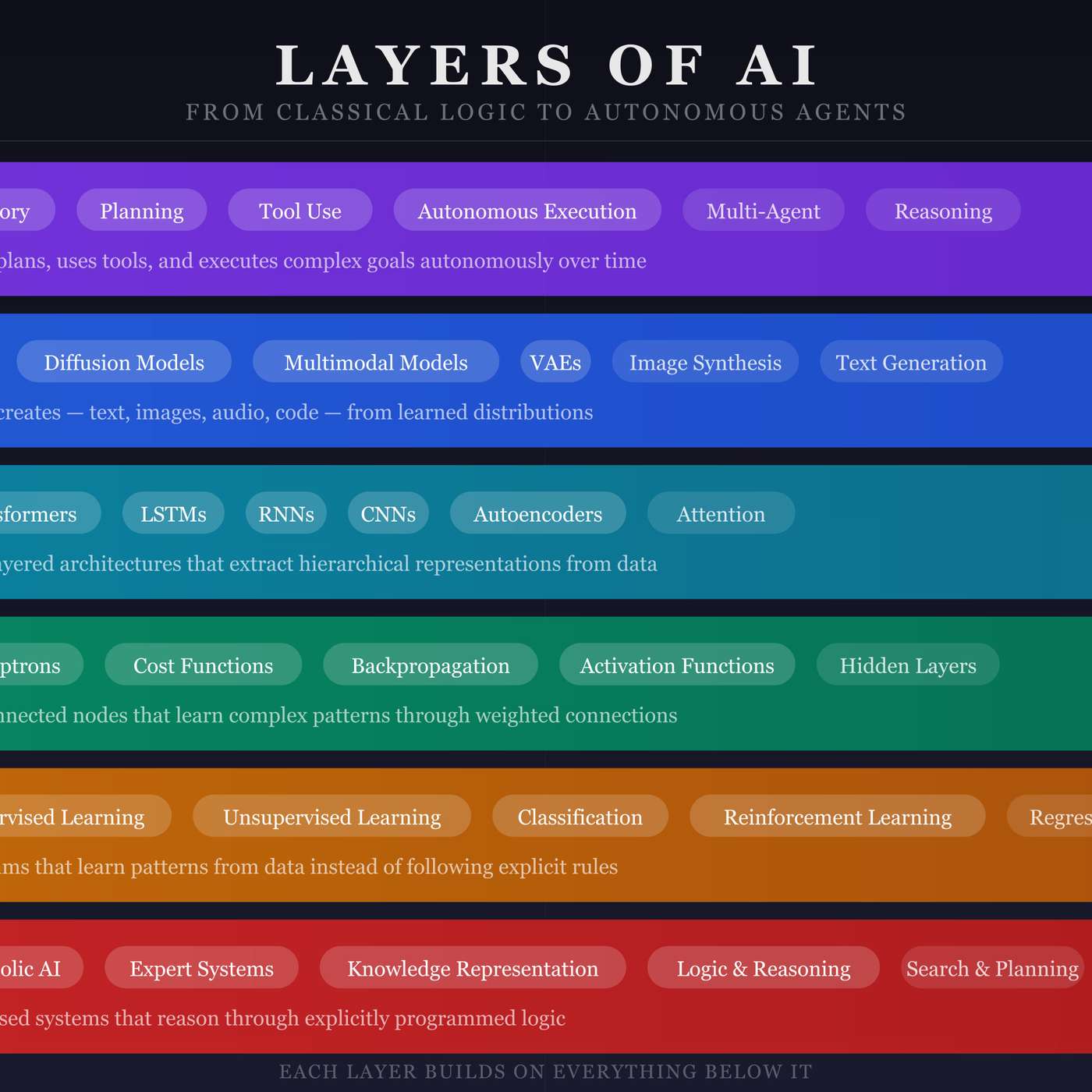

Contrary to the idea that new technologies make old ones obsolete, AI's evolution is a cumulative stack. Each new layer, like deep learning or generative AI, is built upon and extends the capabilities of the one beneath it, all the way down to the principles of classical AI.

Related Insights

History shows that major technological shifts like the internet and AI require a fundamental re-architecting of everything from silicon and networking up to software. The industry repeatedly forgets this lesson, mistakenly declaring parts of the stack, such as hardware, commoditized right before the next wave hits.

Fears of AI's 'recursive self-improvement' should be contextualized. Every major general-purpose technology, from iron to computers, has been used to improve itself. While AI's speed may differ, this self-catalyzing loop is a standard characteristic of transformative technologies and has not previously resulted in runaway existential threats.

Andreessen argues the current AI summer is durable because it's built on four distinct, fundamental breakthroughs in functionality. This stack of capabilities, from language models to self-improving systems, creates a platform for sustained innovation that previous cycles lacked.

While AI progress is marketed in revolutionary "step-changes" (e.g., GPT-3 to GPT-4), the underlying reality is more like compound interest. A continuous stream of small, incremental improvements is accumulating, and their combined effect is what creates the feeling of an exponential leap in capability over time.
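
The compounding analogy can be made concrete with a little arithmetic. The sketch below is purely illustrative: the 2% per-step gain and the step counts are assumed numbers chosen for the example, not figures from the podcast, and `compounded_gain` is a hypothetical helper name.

```python
def compounded_gain(step_gain: float, steps: int) -> float:
    """Overall multiplier after `steps` successive improvements of `step_gain` each.

    Each improvement multiplies the previous level, so gains compound
    rather than add: (1 + g) ** n instead of 1 + g * n.
    """
    return (1.0 + step_gain) ** steps


if __name__ == "__main__":
    # Assumed, illustrative numbers: a 2% gain per incremental improvement.
    step_gain = 0.02
    for steps in (10, 50, 100, 200):
        compounded = compounded_gain(step_gain, steps)
        additive = 1.0 + step_gain * steps  # what simple addition would give
        print(f"{steps:>3} steps: compounded {compounded:.2f}x vs additive {additive:.2f}x")
```

Under these assumed numbers, no single step looks dramatic, yet the compounded multiplier pulls steadily away from the additive one as steps accumulate, which is the effect the insight describes.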

Unlike any prior tool, AI can be directly applied to improve its own creation. It designs more efficient computer chips, writes better training code, and automates research, creating a recursive self-improvement loop that rapidly outpaces human oversight and control.

Debating whether today's methods (like Reinforcement Learning) are sufficient for AGI is likely a moot point. Just as RL shifted focus from pre-training limitations, new conceptual unlocks will emerge from exponential growth in research and compute, rendering current debates outdated before they are ever resolved.

The pace of AI model improvement is faster than the ability to ship specific tools. By creating lower-level, generalizable tools, developers build a system that automatically becomes more powerful and adaptable as the underlying AI gets smarter, without requiring re-engineering.

Breakthroughs will emerge from 'systems' of AI—chaining together multiple specialized models to perform complex tasks. GPT-4 is rumored to be a 'mixture of experts,' and companies like Wonder Dynamics combine different models for tasks like character rigging and lighting to achieve superior results.

While GenAI continues the "learn by example" paradigm of machine learning, its ability to create novel content like images and language is a fundamental step-change. It moves beyond simply predicting patterns to generating entirely new outputs, representing a significant evolution in computing.

Contrary to the belief that AI will flatten technology stacks, history shows that layers persist because they map to organizational boundaries, compatibility needs, and human logic. Instead of eliminating them, AI agents will learn to navigate and operate within these established structures.