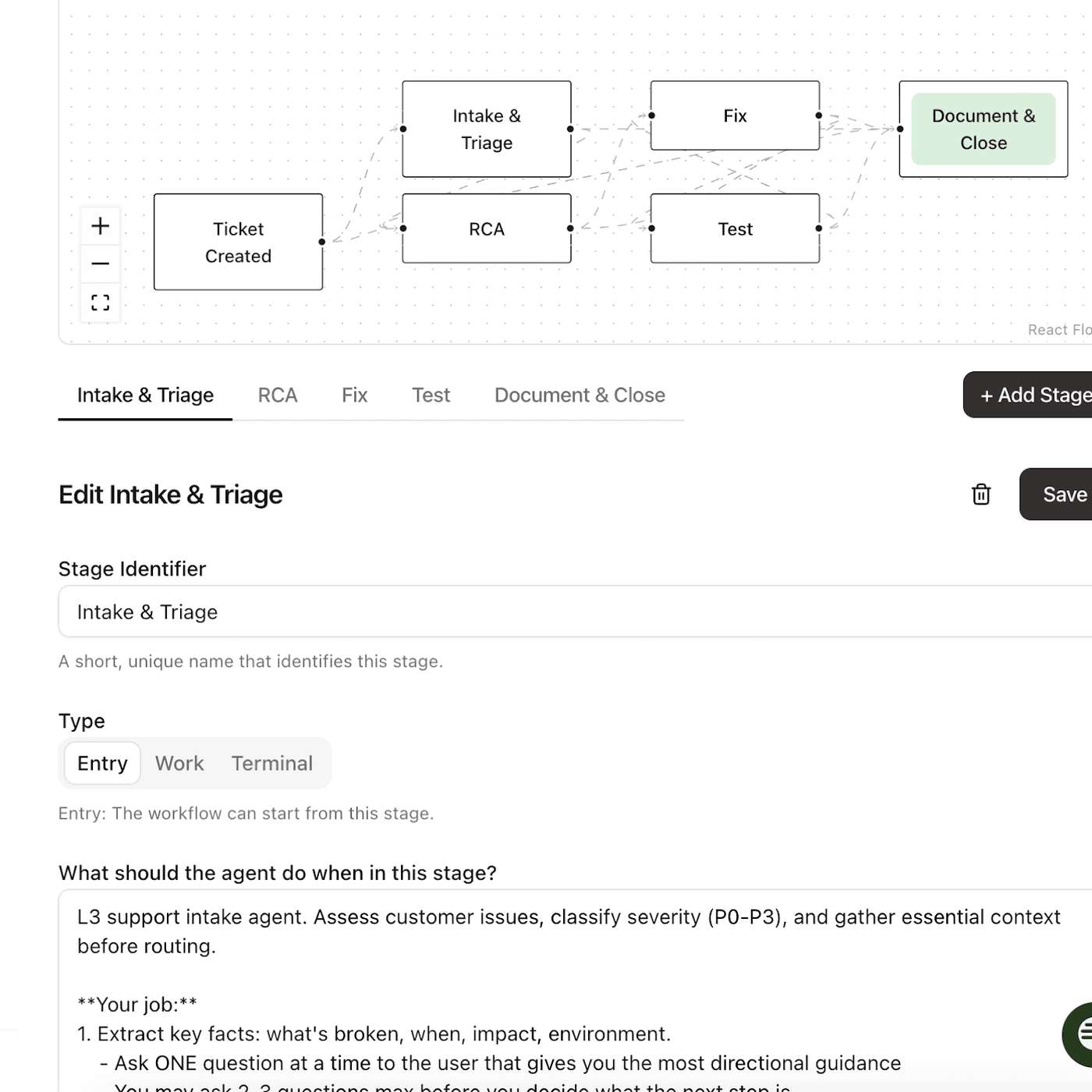

Instead of forcing full autonomy, the AI agent allows teams to start with human approvals at key stages. This "human-in-the-loop" model builds trust and enables organizations to incrementally automate complex support workflows as they grow more confident in the system's reliability.

Related Insights

To avoid failure, launch AI agents with high human control and low agency, such as suggesting actions to an operator. As the agent proves reliable and you collect performance data, you can gradually increase its autonomy. This phased approach minimizes risk and builds user trust.

Moving beyond the co-pilot model, Genesis has its AI agents work autonomously on complex tasks. They only engage a human when they get stuck or their confidence in a decision drops, inverting the traditional human-in-the-loop workflow for maximum efficiency and creating a system that learns from every interaction.
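The escalation rule described above can be sketched in a few lines. This is a minimal illustration, not Genesis's actual implementation: the `Decision` type, the `run_step` function, the `ask_human` callback, and the 0.8 threshold are all hypothetical names and values chosen for the example.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Decision:
    action: str        # what the agent proposes to do
    confidence: float  # agent's self-reported confidence, 0.0 to 1.0 (assumed available)


def run_step(
    decision: Decision,
    ask_human: Callable[[Decision], str],
    threshold: float = 0.8,  # hypothetical cutoff; tune per workflow
) -> tuple[str, str]:
    """Return (action_taken, decided_by).

    The agent acts on its own by default and hands off to a human
    only when its confidence falls below the threshold.
    """
    if decision.confidence >= threshold:
        return decision.action, "agent"
    return ask_human(decision), "human"
```

Here the human is the exception path rather than the default gate, which is the inversion the insight describes. Raising `threshold` moves the system back toward a conventional approval workflow.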

To overcome employee fear, don't deploy a fully autonomous AI agent on day one. Instead, introduce it as a hybrid assistant within existing tools like Slack. Start with it asking questions, then suggesting actions, and only transition to full automation after the team trusts it and sees its value.

To overcome user distrust of AI agents that have access to personal data, the adoption path must be gradual. The AI should first provide suggestions for the user to approve (e.g., draft emails). Only after it has consistently proven reliable and users have learned its boundaries can they trust it to act autonomously.

To mitigate risks like AI hallucinations and high operational costs, enterprises should first deploy new AI tools internally to support human agents. This "agent-assist" model allows for monitoring, testing, and refinement in a controlled environment before exposing the technology directly to customers.

Current AI workflows are not fully autonomous and require significant human oversight, meaning immediate efficiency gains are limited. By framing these systems as "interns" that need to be "babysat" and trained, organizations can set realistic expectations and gradually build the user trust necessary for future autonomy.

The most effective AI user experiences are skeuomorphic, emulating real-world human interactions. Design an AI onboarding process the way you would onboard a newly hired personal assistant: start with small tasks, verify the work to build trust, and then grant more autonomy and context over time.

For complex, high-stakes tasks like booking executive guests, avoid full automation initially. Instead, implement a "human-in-the-loop" workflow in which the AI handles research and suggestions but requires human confirmation before executing key actions. Trust then builds over time.

Onboard users (or yourself) to an AI agent as you would to a new human teammate. Start with easy, high-frequency tasks (e.g., summarizing Slack threads). Progress to harder, multi-step tasks (e.g., scheduling a meeting based on replies). Only then attempt to automate an entire workflow (e.g., running daily growth experiments).

The concept of "human-in-the-loop" is often misapplied. To effectively manage autonomous AI agents, companies must map the agent's entire workflow and insert mandatory human approval at critical decision points, not just as a final check or initial hand-off.
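One way to picture this is a workflow defined as an explicit list of steps, where each critical step carries its own approval gate. The sketch below is only an illustration under stated assumptions: `Step`, `run_workflow`, the `needs_approval` flag, and the `approve` callback are hypothetical names, not part of any specific product.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    name: str
    run: Callable[[dict], dict]   # takes the workflow state, returns the updated state
    needs_approval: bool = False  # True marks a critical decision point


def run_workflow(
    steps: list[Step],
    state: dict,
    approve: Callable[[str, dict], bool],
) -> tuple[dict, list[str]]:
    """Run steps in order, pausing for human approval at each gated step.

    A rejected step halts the workflow before that step executes.
    """
    log: list[str] = []
    for step in steps:
        if step.needs_approval and not approve(step.name, state):
            log.append(f"{step.name}: rejected")
            break
        state = step.run(state)
        log.append(f"{step.name}: done")
    return state, log
```

Because the gates are attached to individual steps, a reviewer can stop the agent in the middle of the workflow, before a costly action runs, rather than only reviewing the final output.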