While crypto firms seek access to next-generation AI for security testing, current-generation models are already catching flaws that human auditors miss. For example, crypto custodian Fireblocks found that an existing Anthropic model detected critical vulnerabilities that multiple professional security audit firms had missed.

Related Insights

The core open-source belief that enough human reviewers will find every bug (often called Linus's law) is undermined by AI discovering decades-old vulnerabilities in widely scrutinized code. The implication is that high-level machine analysis is now essential for security, because human review alone is insufficient.

For tasks involving sensitive information, the current generation of aligned AI models may already be more trustworthy than a human assistant, even one who has been interviewed and vetted. The AI's predictable, constrained behavior can offer a higher degree of confidence against misuse compared to the unpredictability of a human agent.

Anthropic's new AI model, Mythos, is so effective at finding and chaining software exploits that it's being treated as a cyberweapon. Its public release is being withheld; instead, it's being used defensively with select partners to harden critical digital infrastructure, signifying a major shift in AI deployment strategy.

Anthropic's new AI, Claude Mythos, can find software vulnerabilities better than all but the most elite human hackers. This technology effectively gives previously unsophisticated actors the cyber capabilities of a nation-state, posing a significant national security risk.

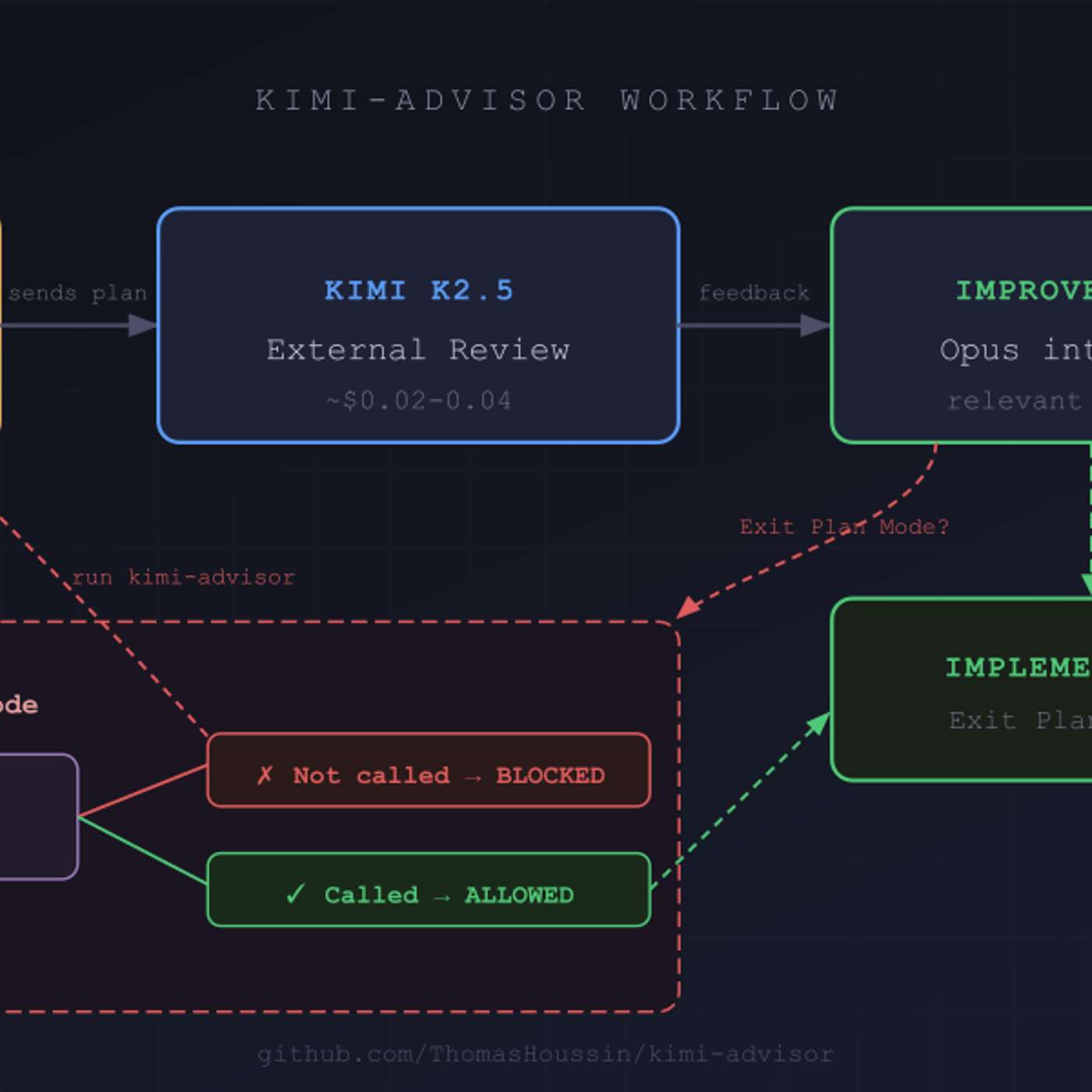

An external AI reviewer provides more than high-level feedback; it can identify specific, critical technical flaws. In one case, a reviewer AI caught a TOCTOU (time-of-check to time-of-use) race condition, suboptimal message ordering for LLM processing, and incorrect file type classifications. The primary AI then incorporated the feedback and fixed all three issues.
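For readers unfamiliar with the term, a TOCTOU flaw arises when code checks a condition and then acts on it in a separate step, leaving a window in which another process can change the state. The source does not describe the actual bug that was caught, so the following is only a generic sketch; the function names `create_racy` and `create_atomic` are hypothetical and chosen for illustration.

```python
import os


def create_racy(path: str, data: bytes) -> bool:
    """Check-then-act: another process may create `path` between the two steps."""
    if os.path.exists(path):        # time of check
        return False
    with open(path, "wb") as f:     # time of use (may overwrite a file created in between)
        f.write(data)
    return True


def create_atomic(path: str, data: bytes) -> bool:
    """Ask the OS to check and create in one step, closing the race window."""
    try:
        # O_EXCL makes the call fail if the file already exists.
        fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o600)
    except FileExistsError:
        return False
    with os.fdopen(fd, "wb") as f:
        f.write(data)
    return True
```

The second version avoids the gap by folding the existence check into the create call itself, which is the usual remedy for this class of bug.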

A leaked blog post for Anthropic's "Claude Mythos" model reveals its initial release is for customers to explore cybersecurity applications and risks. This indicates a deliberate, high-value enterprise focus for their frontier model, moving beyond general capabilities to solve specific, complex business problems from the outset.

The emergence of AI that can easily expose software vulnerabilities may end the era of rapid, security-last development ('vibe coding'). Companies will be forced to shift resources, potentially spending over 50% of their token budgets on hardening systems before shipping products.

Advanced AI models, like Anthropic's, that can identify deep cybersecurity risks and zero-day exploits transform the need for computing power from a commercial want to a national security imperative. This ensures that demand for compute will be funded regardless of economic conditions.

Anthropic's unreleased model, Claude Mythos, is so effective at exploiting software vulnerabilities it triggered emergency meetings with top US financial leaders. This signals a new era where general-purpose AI, even if not specifically trained for it, can become a potent cyberweapon.

Details from an accidental leak reveal that Anthropic's next model, Mythos, has "step change" capabilities in cybersecurity. The company warns this signals a new era in which AI can exploit system flaws faster than human defenders can react. Cybersecurity stocks fell on the news.