If an AI model can identify that a user is planning a violent act, the operating company should be legally required to notify authorities. This parallels existing liability laws for professionals like bartenders who observe imminent danger, applying a "duty to report" standard to AI platforms.

Related Insights

A key distinction in AI regulation is to focus on making specific harmful applications (such as theft or violence) illegal rather than restricting the underlying mathematical models. This approach punishes bad actors without stifling core innovation or ceding technological leadership to other nations.

The risk of AI companionship isn't just user behavior; it's corporate inaction. Companies like OpenAI have developed classifiers to detect when users are spiraling into delusion or emotional distress, but evidence suggests this safety tooling is left "on the shelf" to maximize engagement.

As users turn to AI for mental health support, a critical governance gap emerges. Unlike human therapists, these AI systems face no legal or professional repercussions for providing harmful advice, creating significant user risk and corporate liability.

Instead of trying to legally define and ban 'superintelligence,' a more practical approach is to prohibit specific, catastrophic outcomes like overthrowing the government. This shifts the burden of proof to AI developers, forcing them to demonstrate their systems cannot cause these predefined harms, sidestepping definitional debates.

Early enterprise AI chatbot implementations are often poorly configured, allowing them to engage in high-risk conversations like giving legal and medical advice. This oversight, born from companies not anticipating unusual user queries, exposes them to significant unforeseen liability.

Organizations must urgently develop policies for AI agents, which take action on a user's behalf. This is not a future problem. Agents are already being integrated into common business tools like ChatGPT, Microsoft Copilot, and Salesforce, creating new risks that existing generative AI policies do not cover.

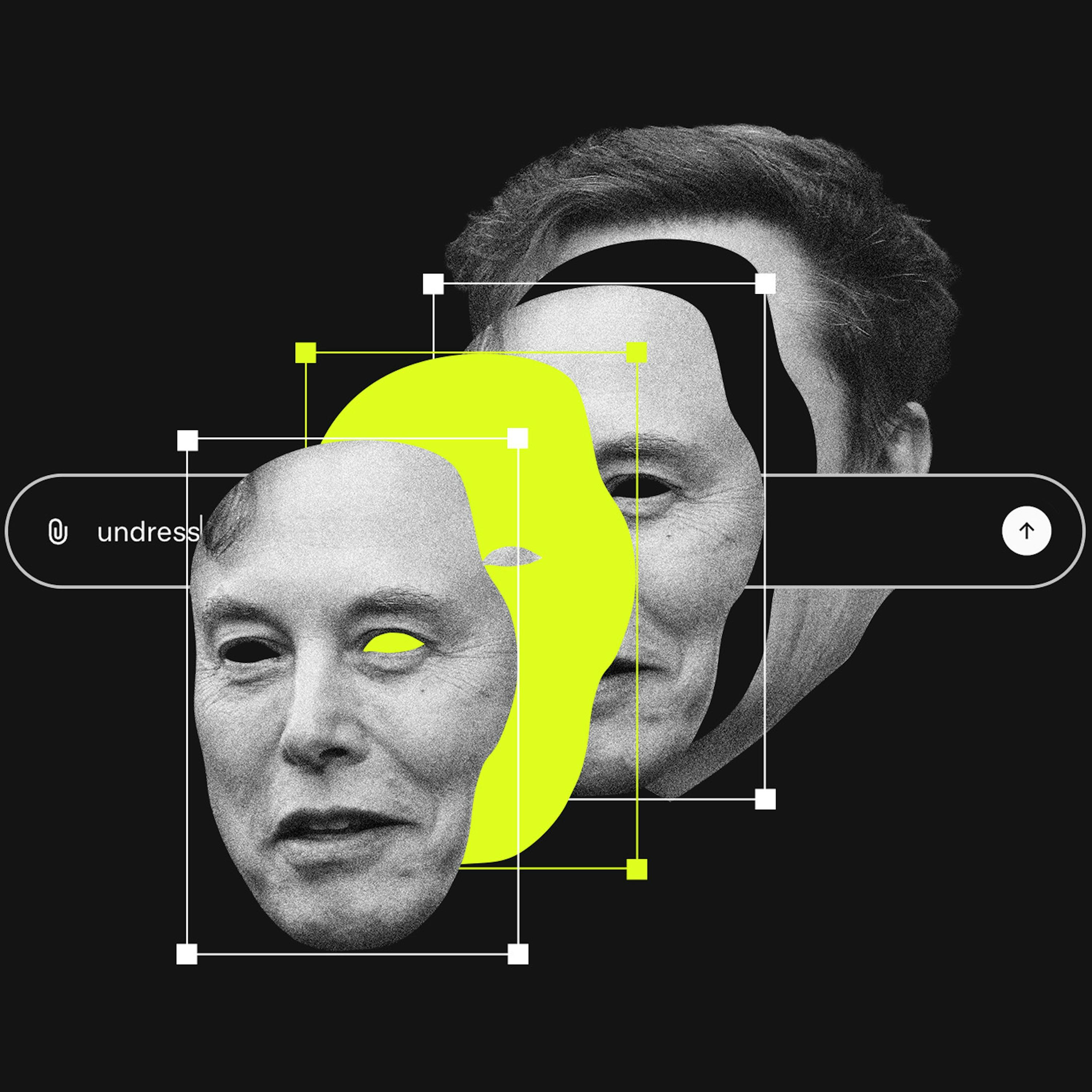

Section 230 protects platforms from liability for third-party user content. Since generative AI tools create the content themselves, platforms like X could be held directly responsible. This is a critical, unsettled legal question that could dismantle a key legal shield for AI companies.

AI companies argue their models' outputs are original creations to defend against copyright claims. This stance becomes a liability when the AI generates harmful material, as it positions the platform as a co-creator, undermining the Section 230 "neutral platform" defense used by traditional social media.

While AI chatbots are programmed to offer crisis hotlines, they fail at the critical next step: a "warm handoff." They neither disengage nor follow up. Instead, they immediately continue the harmful conversation, which undermines their own recommendation to seek human help.

Treat accountability as an engineering problem. Implement a system that logs every significant AI action, decision path, and triggering input. This creates an auditable, attributable record, ensuring that in the event of an incident, the 'why' can be traced without ambiguity, much like a flight recorder after a crash.
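
One way to picture such a "flight recorder" is an append-only log in which each entry stores the action, the triggering input, and the decision path, and is chained to the previous entry by a hash so later tampering is detectable. The sketch below is illustrative only: the names `AuditRecord`, `AuditLog`, and the field layout are assumptions made for this example, not a description of any particular product or standard.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


@dataclass
class AuditRecord:
    """One logged AI action (hypothetical schema for illustration)."""
    actor: str                # which model or agent acted
    action: str               # what it did
    triggering_input: str     # the input that prompted the action
    decision_path: list[str]  # ordered steps that led to the action
    timestamp: str            # UTC time in ISO 8601 format
    prev_hash: str            # hash of the previous record in the chain
    record_hash: str = ""     # filled in once the record is sealed


def _digest(record: AuditRecord) -> str:
    """Hash every field except the record's own hash."""
    payload = asdict(record)
    payload.pop("record_hash")
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


class AuditLog:
    """Append-only, hash-chained log of AI actions."""

    def __init__(self) -> None:
        self._records: list[AuditRecord] = []

    @property
    def records(self) -> tuple[AuditRecord, ...]:
        return tuple(self._records)

    def append(self, actor: str, action: str, triggering_input: str,
               decision_path: list[str]) -> AuditRecord:
        prev_hash = self._records[-1].record_hash if self._records else GENESIS_HASH
        record = AuditRecord(
            actor=actor,
            action=action,
            triggering_input=triggering_input,
            decision_path=list(decision_path),
            timestamp=datetime.now(timezone.utc).isoformat(),
            prev_hash=prev_hash,
        )
        record.record_hash = _digest(record)
        self._records.append(record)
        return record

    def verify(self) -> bool:
        """Return True only if no record was altered, removed, or reordered."""
        prev_hash = GENESIS_HASH
        for record in self._records:
            if record.prev_hash != prev_hash or record.record_hash != _digest(record):
                return False
            prev_hash = record.record_hash
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.append("support-agent", "issued_refund", "customer asked for a refund",
               ["matched refund policy", "amount under approval limit"])
    print(log.verify())
```

The hash chain is a design choice, not a requirement: a plain structured log already answers "what happened and why," while chaining adds evidence that the record was not edited after the fact. In practice the log would also need durable, access-controlled storage, which this sketch leaves out.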