AlphaGo's architecture mimicked human cognition by pairing a 'fast thinking' neural network for intuition with a 'slow thinking' search algorithm for explicit planning. This hybrid model, combining pattern recognition with calculation, proved more powerful for tackling complex problems than either approach alone.
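
As a rough illustration of how intuition and search can be combined, here is a minimal sketch of a one-move-deep, prior-guided search in the spirit of the PUCT selection rule. It is not AlphaGo's implementation. The `priors` list stands in for the fast network's guess, and the `evaluate` callback stands in for the slower evaluation of a move. Both are hypothetical names, and the numbers are made up for the example.

```python
import math

def puct_search(priors, evaluate, simulations=100, c_puct=1.0):
    """Allocate simulations across moves using prior-weighted exploration.

    priors   -- hypothetical 'intuition' probabilities, one per move
    evaluate -- hypothetical callback returning a value in [0, 1] for a move
    """
    visits = [0] * len(priors)
    value_sum = [0.0] * len(priors)
    for _ in range(simulations):
        total = sum(visits)

        def score(a):
            # Mean value observed so far (0 for an unvisited move).
            q = value_sum[a] / visits[a] if visits[a] else 0.0
            # Exploration bonus: large for high-prior, rarely visited moves.
            u = c_puct * priors[a] * math.sqrt(total + 1) / (1 + visits[a])
            return q + u

        a = max(range(len(priors)), key=score)
        visits[a] += 1
        value_sum[a] += evaluate(a)
    return visits

# Made-up numbers: intuition prefers move 0, but move 1 is actually best.
priors = [0.6, 0.3, 0.1]
true_values = [0.2, 0.9, 0.1]

visits = puct_search(priors, lambda a: true_values[a])
intuition_choice = max(range(len(priors)), key=lambda a: priors[a])
search_choice = max(range(len(visits)), key=lambda a: visits[a])
```

In this toy setup the prior alone would pick move 0, while the search spends most of its simulations on move 1 once evaluation shows it is stronger. The prior still matters: it decides which moves get examined first.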

Related Insights

AI excels at probabilistic thinking and pattern matching (optimization), while humans excel at possibility thinking and innovation. The most powerful approach, the "centaur model," uses AI to handle optimization, freeing human cognition for imaginative tasks that create the future.

According to Demis Hassabis, LLMs feel uncreative because they only perform pattern matching. To achieve true, extrapolative creativity like AlphaGo's famous 'Move 37,' models must be paired with a search component that actively explores new parts of the knowledge space beyond the training data.

Historically, investment tech focused on speed. Modern AI, like AlphaGo, offers something new: inhuman intelligence that reveals novel insights and strategies humans miss. For investors, this means moving beyond automation to using AI as a tool for generating genuine alpha through superior inference.

Demis Hassabis argues against an LLM-only path to AGI, citing DeepMind's successes like AlphaGo and AlphaFold as evidence. He advocates for "hybrid systems" (or neurosymbolic systems) that combine neural networks with other techniques, such as search or evolutionary methods, to discover truly new knowledge rather than just remix existing data.

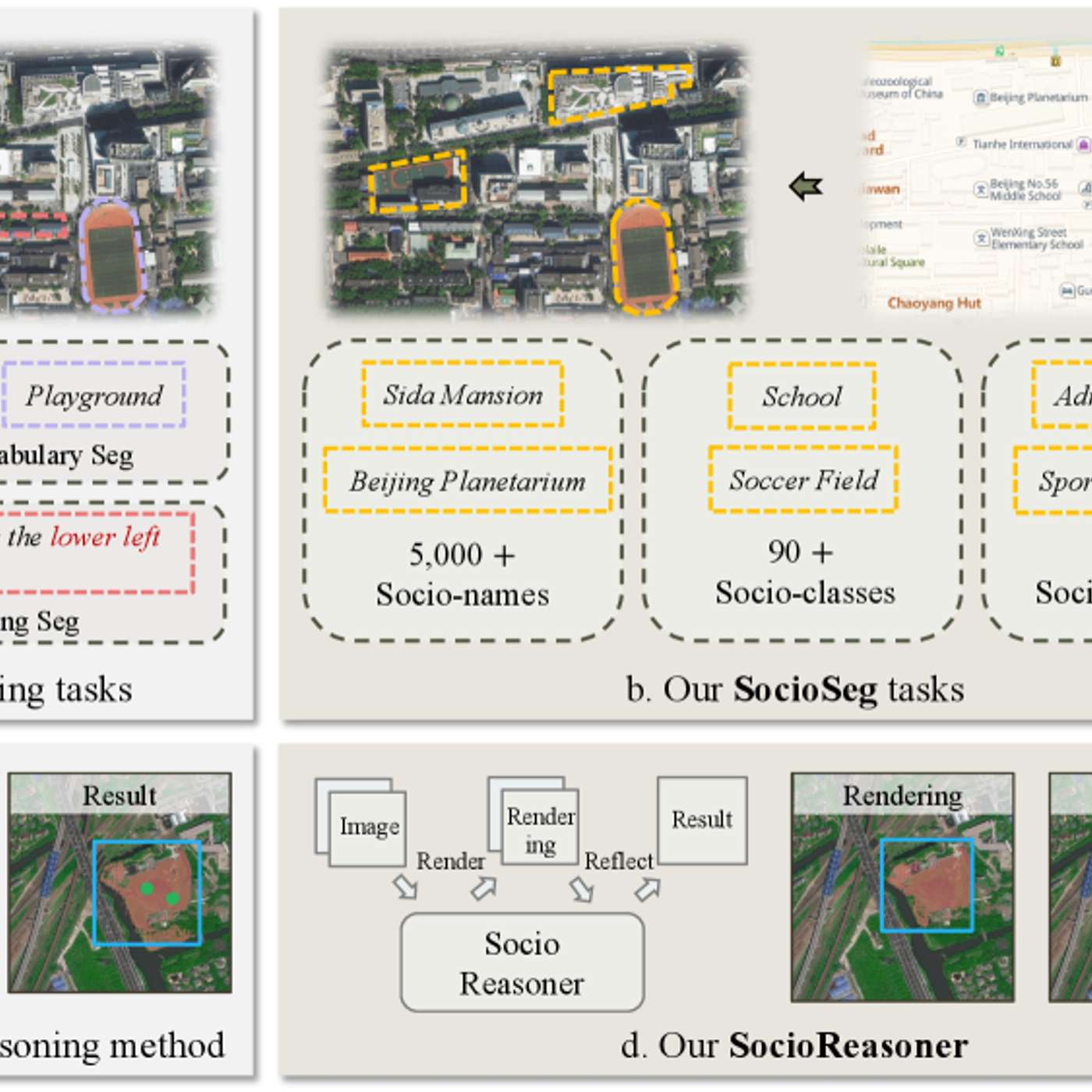

The featured AI model succeeds by reframing urban analysis as a reasoning problem. It uses a two-stage process, generating broad hypotheses and then refining them with detailed evidence, which mimics human cognition and outperforms traditional single-pass pattern recognition systems.

The most effective AI architecture for complex tasks involves a division of labor. An LLM handles high-level strategic reasoning and goal setting, providing its intent in natural language. Specialized, efficient algorithms then translate that strategic intent into concrete, tactical actions.

Google DeepMind CEO Demis Hassabis argues that today's large models are insufficient for AGI. He believes progress requires reintroducing algorithmic techniques from systems like AlphaGo, specifically planning and search, to enable more robust reasoning and problem-solving capabilities beyond simple pattern matching.

A core legacy of AlphaGo is turning complex search problems into 'games' for AI agents. AlphaTensor reframed the challenge of finding the fastest matrix multiplication algorithm as a game, allowing it to discover a more efficient method than any human had found in over 50 years, proving the approach's power for scientific discovery.
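
To make "a faster matrix multiplication algorithm" concrete, the sketch below shows Strassen's classic scheme, which multiplies two 2x2 matrices with 7 scalar multiplications instead of the usual 8. It is included only as a well-known example of the kind of saving such a search looks for. It is not the algorithm AlphaTensor discovered, and the function names are chosen for this example.

```python
def naive_2x2(a, b):
    """Standard 2x2 product: 8 scalar multiplications."""
    return [
        [a[0][0] * b[0][0] + a[0][1] * b[1][0], a[0][0] * b[0][1] + a[0][1] * b[1][1]],
        [a[1][0] * b[0][0] + a[1][1] * b[1][0], a[1][0] * b[0][1] + a[1][1] * b[1][1]],
    ]

def strassen_2x2(a, b):
    """Strassen's 2x2 product: 7 scalar multiplications, more additions."""
    m1 = (a[0][0] + a[1][1]) * (b[0][0] + b[1][1])
    m2 = (a[1][0] + a[1][1]) * b[0][0]
    m3 = a[0][0] * (b[0][1] - b[1][1])
    m4 = a[1][1] * (b[1][0] - b[0][0])
    m5 = (a[0][0] + a[0][1]) * b[1][1]
    m6 = (a[1][0] - a[0][0]) * (b[0][0] + b[0][1])
    m7 = (a[0][1] - a[1][1]) * (b[1][0] + b[1][1])
    return [
        [m1 + m4 - m5 + m7, m3 + m5],
        [m2 + m4, m1 - m2 + m3 + m6],
    ]

A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
product = strassen_2x2(A, B)
```

Applied recursively to matrix blocks, saving one multiplication per 2x2 step compounds into a lower overall cost, which is why finding schemes with fewer multiplications matters.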

In the endgame, AlphaGo made moves that seemed suboptimal, even giving up points. This was because it wasn't optimizing for a large victory margin (a human heuristic) but purely for maximizing the probability of winning, even by a half-point. This reveals how literal AI objective functions can differ from human proxies for success.
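
The difference between the two objectives can be shown with a toy example. The sketch below assumes each candidate move comes with a handful of simulated final score margins. The move names and numbers are invented for illustration and do not come from any real game.

```python
def win_probability(margins):
    """Fraction of simulated outcomes that end in a win (margin > 0)."""
    return sum(m > 0 for m in margins) / len(margins)

def expected_margin(margins):
    """Average final score margin across simulated outcomes."""
    return sum(margins) / len(margins)

# Hypothetical simulated outcomes (final margin in points) per move.
candidates = {
    "safe": [0.5, 0.5, 0.5, 0.5],        # always wins, by half a point
    "greedy": [10.5, 10.5, 10.5, -1.5],  # usually wins big, sometimes loses
}

best_by_win = max(candidates, key=lambda k: win_probability(candidates[k]))
best_by_margin = max(candidates, key=lambda k: expected_margin(candidates[k]))
```

Here `best_by_win` is `"safe"` and `best_by_margin` is `"greedy"`: an agent that maximizes win probability gives up points a margin-maximizing player would want to keep.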

The "temporal difference" algorithm, which learns by tracking how expectations change over time, isn't just a theoretical model. It is implemented biologically in the brain via dopamine signaling. This same algorithm was externalized by DeepMind to create a world-champion Go-playing AI, representing a unique instance of biology directly inspiring a major technological breakthrough.
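
For readers unfamiliar with the idea, here is a minimal sketch of the simplest temporal difference update, TD(0), on a toy three-state chain. The chain, the reward of 1 at the end, and the parameter values are assumptions made for this example. It does not describe DeepMind's training setup.

```python
def td0_update(values, state, reward, next_state, alpha=0.5, gamma=1.0):
    """Apply one TD(0) update in place and return the prediction error."""
    next_value = 0.0 if next_state is None else values[next_state]
    # Prediction error: what was observed plus what is now expected,
    # minus what was expected before.
    td_error = reward + gamma * next_value - values[state]
    values[state] += alpha * td_error
    return td_error

def run_chain(episodes=50, n_states=3):
    """Walk a deterministic chain 0 -> 1 -> ... -> end, reward 1 at the end."""
    values = [0.0] * n_states
    for _ in range(episodes):
        for s in range(n_states):
            last = s == n_states - 1
            td0_update(values, s, 1.0 if last else 0.0, None if last else s + 1)
    return values

learned_values = run_chain()
```

After enough episodes every state's value approaches 1, the reward that reliably arrives at the end of the chain. The expectation propagates backward from the rewarded state to the earlier ones, one step at a time.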