Instead of creating a bespoke memory or messaging protocol for agent-to-agent communication, Notion leverages its core primitives. Agents compose by writing to and reading from shared Notion pages and databases, creating a decoupled, human-editable, and transparent system for coordination.

Related Insights

To prevent autonomous agents from operating in silos with 'pure amnesia,' create a central markdown file that every agent must read before starting a task and append to upon completion. This 'learnings.md' file acts as a shared, persistent brain, allowing agents to form a network that accumulates and shares knowledge across the entire organization over time.
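
The read-before, append-after routine described above can be sketched in a few lines of Python. This is a minimal illustration, not any particular product's implementation: the function names, the dated bullet format, and the `run_task` stand-in for real agent work are all assumptions.

```python
from datetime import datetime, timezone
from pathlib import Path


def read_learnings(path: Path) -> str:
    """Return everything recorded so far (empty string if the file is new)."""
    return path.read_text(encoding="utf-8") if path.exists() else ""


def append_learning(path: Path, agent: str, note: str) -> None:
    """Append one dated bullet; append mode leaves earlier entries untouched."""
    stamp = datetime.now(timezone.utc).strftime("%Y-%m-%d")
    with path.open("a", encoding="utf-8") as f:
        f.write(f"- [{stamp}] {agent}: {note}\n")


def run_task(path: Path, agent: str, task: str) -> str:
    """Hypothetical agent step: read shared memory, work, then record a note."""
    context = read_learnings(path)        # 1. read before starting
    prior = context.count("\n")           # one line per earlier learning
    result = f"{task} (done with {prior} prior learnings)"
    append_learning(path, agent, f"finished '{task}'")  # 2. append on completion
    return result
```

Each call to `run_task` sees every note left by earlier calls, whichever agent wrote them. A real setup would pass `context` into the model prompt instead of just counting lines.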

When multiple agents work together, file-based memory becomes a bottleneck. A shared, dynamic memory layer (e.g., via a plugin like Google's Vertex AI Memory Bank) is crucial. It allows a correction given to one agent, such as a stylistic preference, to be learned and applied immediately by every other agent on the team.

Notion is creating a new, defensible market by positioning its platform not just for human work, but as a central hub where different third-party AI agents can interact, collaborate, and have their actions tracked. This strategy aims to make Notion the essential infrastructure for an emerging agent-driven workforce.

Notion's core vision has fundamentally changed because of AI. The co-founder explained that the goal shifted from building the best tool for humans to *directly perform* work to building the best platform for humans to *manage agents* that do the work for them, using the same core primitives such as pages and databases.

A huge unlock for the 'Claudie' project manager was applying database principles. By creating unique ID conventions for people, sessions, and deliverables, the agent could reliably connect disparate pieces of information, enabling it to maintain a coherent, high-fidelity view of the entire project.
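
To make the ID idea concrete, here is a small Python sketch. The prefixes `PER`, `SES`, and `DEL` and the three-digit format are invented for illustration; the source does not say what conventions the project actually used.

```python
import re

# Hypothetical convention: PER-### for people, SES-### for sessions,
# DEL-### for deliverables.
KINDS = {"PER", "SES", "DEL"}
ID_PATTERN = re.compile(r"\b(?:PER|SES|DEL)-\d{3}\b")


def make_id(kind: str, n: int) -> str:
    """Build an ID such as 'PER-002' from a kind prefix and a number."""
    if kind not in KINDS:
        raise ValueError(f"unknown kind: {kind}")
    return f"{kind}-{n:03d}"


def extract_ids(text: str) -> list[str]:
    """Return every ID mentioned in a piece of free text, in order."""
    return ID_PATTERN.findall(text)


def link_notes(notes: list[str]) -> dict[str, list[int]]:
    """Map each ID to the indices of the notes that mention it."""
    index: dict[str, list[int]] = {}
    for i, note in enumerate(notes):
        # dict.fromkeys drops repeated mentions within a single note
        for id_ in dict.fromkeys(extract_ids(note)):
            index.setdefault(id_, []).append(i)
    return index
```

Because every entity has a stable, machine-matchable key, notes written in different sessions can be joined the way rows are joined on a foreign key, without relying on the model to guess that "Sam" and "S. Lee" are the same person.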

Building a bespoke communication layer for multiple AI agents is a complex "scaffolding" problem. A simpler, more direct solution is to treat agents as digital coworkers, assigning them accounts on existing platforms like Slack or Google Docs, enabling them to interact using established human workflows.

Instead of siloing agents, create a central memory file that all specialized agents can read from and write to. This ensures a coding agent is aware of marketing initiatives or a sales agent understands product updates, creating a cohesive, multi-agent system.

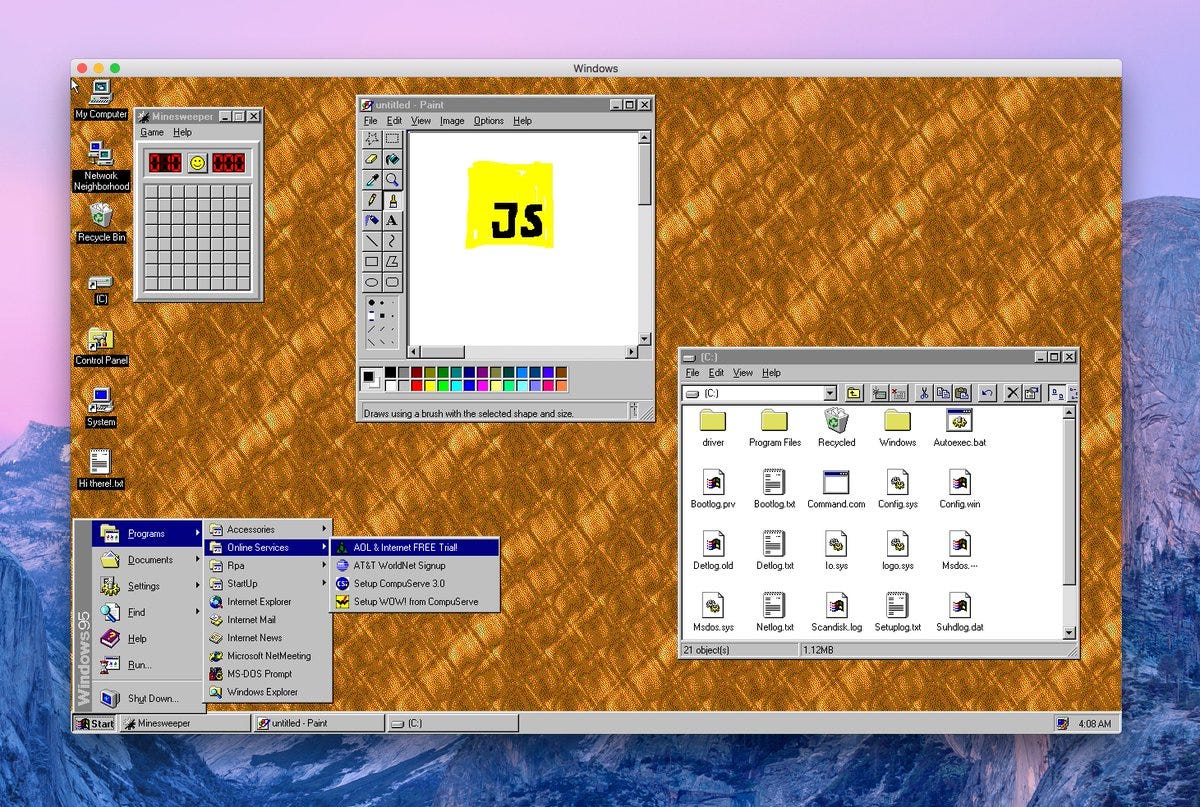

Notion's journey to a working AI agent involved multiple failed attempts. Key lessons were to stop forcing models to use Notion-specific data formats and instead provide them with familiar interfaces like Markdown and SQLite, which they are pre-trained to understand well.

Standard APIs for human developers are often too verbose for AI agents. Notion created agent-centric APIs, like a special markdown dialect and a SQLite interface, by treating the AI as a new type of user. This involved empirical testing to understand what formats agents are naturally good at using.
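
As a rough illustration of why familiar formats help, the sketch below exposes a small task list through SQLite and returns results as a Markdown table, using only Python's standard `sqlite3` module. It is not Notion's actual interface: the `tasks` table, its columns, and the helper names are assumptions made for the example.

```python
import sqlite3


def build_db(rows):
    """Load workspace rows into an in-memory SQLite table (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE tasks (id TEXT PRIMARY KEY, title TEXT, status TEXT, owner TEXT)"
    )
    conn.executemany("INSERT INTO tasks VALUES (?, ?, ?, ?)", rows)
    conn.commit()
    return conn


def run_agent_query(conn, sql):
    """Run an agent-written query; this sketch only accepts SELECT statements."""
    if not sql.lstrip().lower().startswith("select"):
        raise ValueError("only SELECT statements are allowed in this sketch")
    cur = conn.execute(sql)
    cols = [c[0] for c in cur.description]
    return [dict(zip(cols, row)) for row in cur.fetchall()]


def to_markdown(records):
    """Render query results as a Markdown table for the model to read."""
    if not records:
        return ""
    cols = list(records[0])
    lines = [
        "| " + " | ".join(cols) + " |",
        "| " + " | ".join("---" for _ in cols) + " |",
    ]
    for r in records:
        lines.append("| " + " | ".join(str(r[c]) for c in cols) + " |")
    return "\n".join(lines)
```

The agent writes ordinary SQL and reads ordinary Markdown, two formats that appear heavily in training data, rather than learning a product-specific JSON schema. The prefix check on `SELECT` is only a toy guard; a real system would need proper read-only enforcement.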

Complex orchestration middleware isn't necessary for multi-agent workflows. A simple file system can act as a reliable handoff mechanism. One agent writes its output to a file, and the next agent reads it. This approach is simple, avoids API issues, and is highly robust.
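
A minimal Python sketch of this handoff follows. The two agent functions and the JSON payload shape are placeholders, and the write-then-rename step is an added precaution (an assumption, not something the source describes) so that the reader never sees a half-written file.

```python
import json
import os
import tempfile
from pathlib import Path


def write_handoff(path: Path, payload: dict) -> None:
    """Write to a temp file in the same directory, then rename it into place."""
    fd, tmp = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as f:
        json.dump(payload, f)
    os.replace(tmp, path)  # atomic rename on the same filesystem


def read_handoff(path: Path):
    """Return the previous agent's output, or None if nothing is there yet."""
    if not path.exists():
        return None
    return json.loads(path.read_text(encoding="utf-8"))


def research_agent(outbox: Path) -> None:
    """Hypothetical first agent: writes its findings to the shared file."""
    write_handoff(outbox, {"from": "research", "findings": ["a", "b"]})


def writer_agent(inbox: Path) -> str:
    """Hypothetical second agent: reads the file and builds on it."""
    msg = read_handoff(inbox)
    if msg is None:
        return "nothing to do"
    return f"Draft based on {len(msg['findings'])} findings"
```

The two agents share nothing but a path, so either one can be replaced, rerun, or inspected by a human opening the file.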